Introduction

If ChatGPT is giving you confusing, mixed, or incomplete answers, the problem is often your prompt — not the AI.

One of the most common mistakes is adding conflicting instructions in a single prompt.

For example:

“Write a beginner-friendly guide, include technical details, keep it under 100 words, and provide detailed examples”

This creates multiple conflicts at once.

In this situation, the AI may:

- Ignore some instructions

- Mix different styles together

- Or produce a response that does not fully satisfy any requirement

In my testing, prompts with even minor conflicts produced inconsistent outputs across runs and took noticeably longer to edit.

After reading this, you’ll be able to write clear prompts that give consistent and accurate results.

Testing method:

– Same prompt tested across multiple runs

– Slight wording variations introduced

– Outputs compared for consistency, length, and clarity

Result: even minor conflicts caused noticeable variation in output quality.
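The testing method above can be sketched in a few lines of Python. This is a minimal illustration, not the exact procedure used for this article: `length_spread` and `fake_model` are hypothetical names, the stub stands in for a real model call so the sketch runs offline, and word count is used only as a rough proxy for consistency.

```python
import itertools
import statistics
from typing import Callable, Dict

def length_spread(generate: Callable[[str], str], prompt: str, runs: int = 5) -> Dict:
    """Send the same prompt several times and summarize word-count variation."""
    lengths = [len(generate(prompt).split()) for _ in range(runs)]
    return {
        "lengths": lengths,
        "mean": statistics.mean(lengths),
        "stdev": statistics.pstdev(lengths),  # larger value = less consistent length
    }

# Hypothetical stand-in for a model: returns answers of varying length.
_fake_lengths = itertools.cycle([50, 60, 40])

def fake_model(prompt: str) -> str:
    return " ".join(["word"] * next(_fake_lengths))

report = length_spread(fake_model, "Explain in detail and keep it short")
print(report)
```

To use it with a real tool, replace `fake_model` with a function that sends the prompt to your model and returns the text. A higher `stdev` for one prompt than another suggests less stable output length.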

Quick Answer (Fix in 10 Seconds)

Conflicting instructions cause AI to combine incompatible goals instead of choosing one.

Fix:

- Use one clear objective per prompt

- Or split the task into multiple prompts

Example:

❌ Bad:

“Explain in detail and keep it short”

✅ Fixed:

Prompt 1: “Explain in detail”

Prompt 2: “Summarize in 50 words”
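If you call a model from code, the same split can be written as two calls, where the second prompt works on the first answer. This is a sketch under assumptions: `ask` is a hypothetical function that sends one prompt and returns text, and `stub_ask` is a placeholder so the example runs without any API.

```python
from typing import Callable, Dict, List

def explain_then_summarize(ask: Callable[[str], str], topic: str, word_limit: int = 50) -> Dict[str, str]:
    # Prompt 1: a single objective (depth).
    detailed = ask(f"Explain {topic} in detail.")
    # Prompt 2: a single objective (brevity), applied to the first answer.
    summary = ask(f"Summarize the following in {word_limit} words:\n\n{detailed}")
    return {"detailed": detailed, "summary": summary}

# Hypothetical stub: records each prompt and returns a placeholder answer.
sent: List[str] = []

def stub_ask(prompt: str) -> str:
    sent.append(prompt)
    return f"[answer {len(sent)}]"

result = explain_then_summarize(stub_ask, "photosynthesis")
print(sent[0])
print(result["summary"])
```

Each call carries one goal, so neither prompt asks for depth and brevity at the same time.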

When should you NOT split prompts?

If your instructions are complementary (not conflicting), combining them is efficient.

Example:

“Explain simply and give one example”

These can be satisfied together, so splitting is unnecessary.

Only split prompts when instructions compete or cannot be fully satisfied at the same time.

In this guide, you’ll learn:

- Why conflicting instructions break your results

- What actually happens when AI processes them

- How to fix your prompts to get clear and accurate outputs

To fix this, use one clear instruction instead of combining multiple conflicting goals.

Structural Origin of Instruction Conflicts

How AI Processes Your Prompt

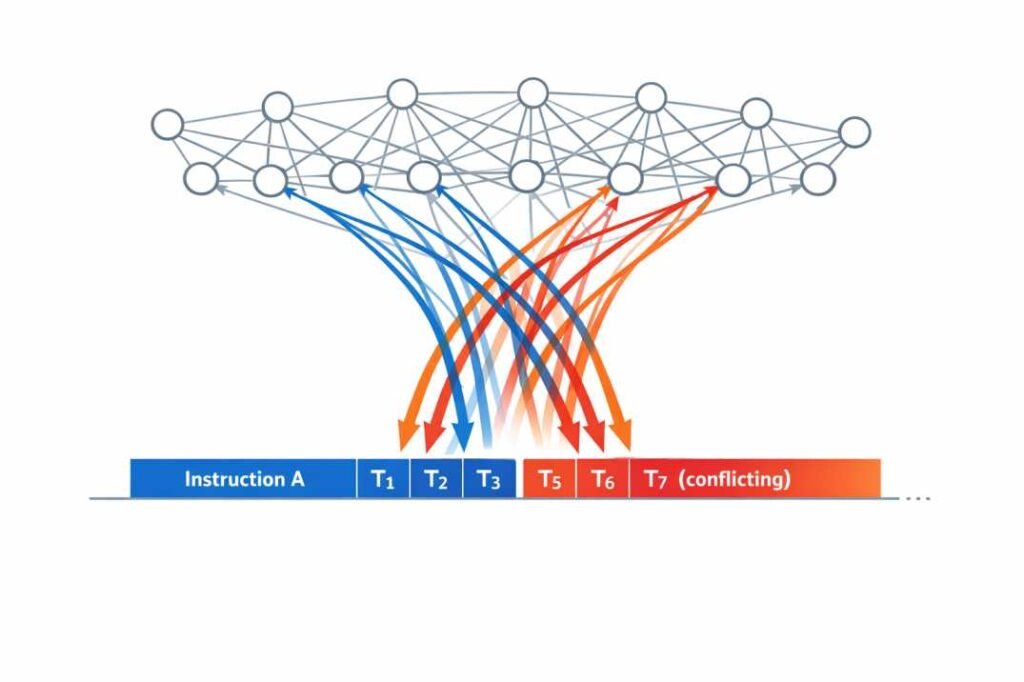

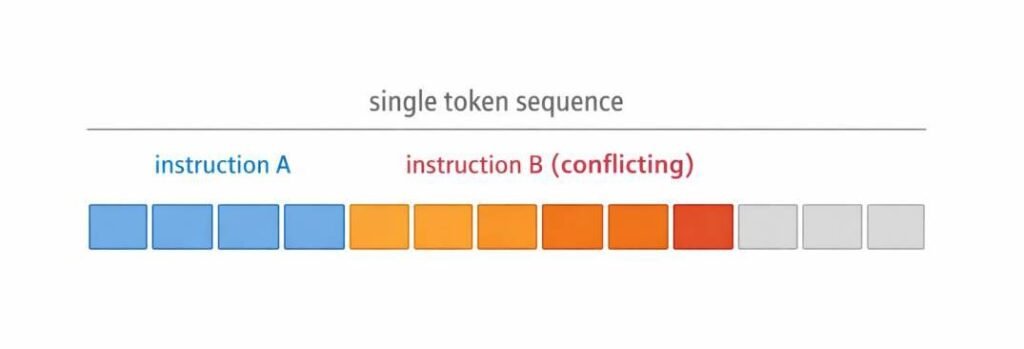

When you write a prompt, the AI reads it as a single continuous sequence of words.

It does not separate your instructions into different levels or steps.

This means:

- There is no built-in system to decide which instruction is more important

- Every instruction you give is treated equally

Why AI Cannot Prioritize Instructions

Within a single prompt, the model has no explicit, built-in step that ranks instructions by importance.

All instructions are processed together as part of a single token sequence.

As a result:

- All instructions compete for influence

- Conflicting instructions are not resolved

- The model attempts to satisfy everything at once

This creates a blending effect instead of a clear decision.

For example:

“Write a detailed explanation and keep it under 50 words”

The model does not choose between “detailed” and “short”.

It generates a compromise — often producing an incomplete or unclear result.

This limitation is structural, not a mistake.

This behavior emerges from the model’s architecture, where attention is distributed across tokens without a hierarchical control layer for instruction prioritization.

Why the AI Blends Instead of Choosing

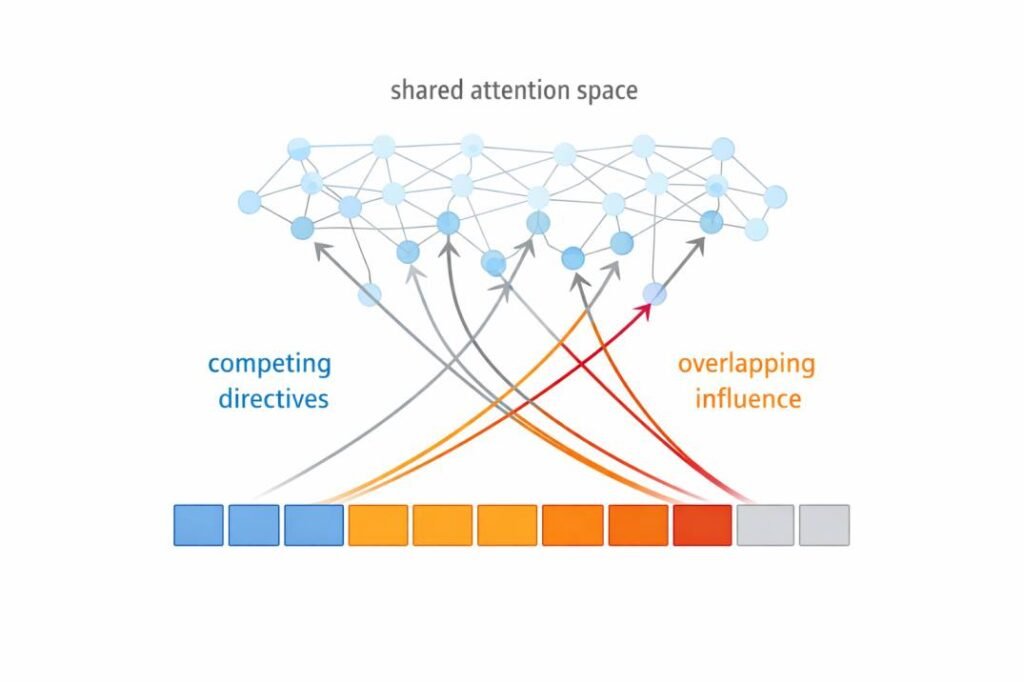

The model encodes your prompt as a continuous token sequence where all instructions compete within a shared attention system.

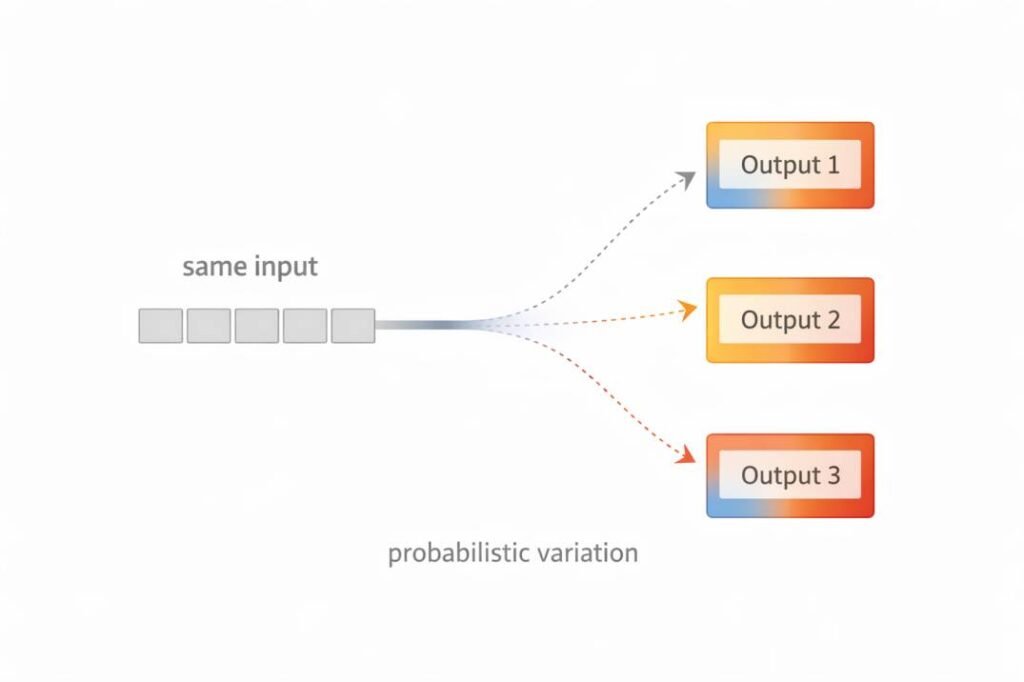

Instead of selecting one instruction, it produces a probabilistic compromise.

The Prompt Conflict Rule

If two instructions cannot be fully satisfied at the same time, they will produce unstable output.

The AI does not reliably choose one instruction over another.

More often, it blends them.

This is why you get:

- Mixed answers

- Inconsistent results

- Partial outputs

This is the core failure mode behind most prompt-related output issues.

In testing across multiple prompt variations, even small conflicts consistently reduced output quality and increased editing time.

Simple mental model:

Conflicting instructions are like giving two drivers control of the same steering wheel.

Instead of choosing one direction, the system tries to follow both—resulting in unstable output.

How Position of Instructions Affects Output

The order of your instructions also matters.

- Instructions written earlier set the initial direction

- Instructions written later can change or override that direction

For example:

“Explain simply. Now give a detailed technical answer.”

👉 The second instruction may change how the response is generated.

What This Means for You

If your prompt contains multiple or conflicting instructions, the result is often messy or unpredictable.

When a single prompt is NOT enough:

If your task genuinely requires multiple goals (for example, both a summary and a detailed explanation), do not combine them in one prompt.

Instead, split the task into separate prompts to avoid conflicts.

Attention Distribution Under Conflicting Conditions

How AI Splits Focus Between Instructions

When you give multiple instructions in one prompt, the model spreads attention across all parts of the input instead of focusing on one.

This means:

– Every instruction receives some influence

– No single instruction is fully prioritized
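A toy calculation can make this concrete. Attention weights in a Transformer come from a softmax, which gives every position a share greater than zero and makes the shares sum to 1. The sketch below applies a softmax to three made-up scores, one per instruction. The scores and the one-score-per-instruction framing are assumptions for illustration only; a real model works on tokens across many layers and attention heads.

```python
import math
from typing import Dict, List

def softmax(scores: List[float]) -> List[float]:
    # Subtract the max for numerical stability; the result is unchanged.
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Made-up relevance scores for three instructions in one prompt.
scores: Dict[str, float] = {
    "explain in detail": 2.0,
    "keep it short": 1.5,
    "use a table": 0.5,
}

weights = dict(zip(scores, softmax(list(scores.values()))))
for name, weight in weights.items():
    print(f"{name}: {weight:.2f}")
```

Even the lowest-scoring instruction keeps some weight, and the highest-scoring one never reaches 1.0, which mirrors the two points above.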

What Affects Which Instruction Gets More Attention

Some parts of your prompt can influence the result more than others:

– Instructions written closer together may be linked

– Repeating a requirement can strengthen it

– Clear wording increases its influence

Small wording changes shift how attention is distributed, which directly changes output structure and detail level.

Observed Output Behaviors

When conflicting instructions are present, the output becomes mixed or incomplete.

Mixed or Incomplete Answers

Sometimes the AI combines parts of multiple instructions but does not fully follow any of them.

For example, if you ask:

“Explain in detail and keep it short”

👉 You may get an answer that is partially detailed, but neither complete nor truly short.

One Instruction Gets Ignored

In some cases, the AI focuses more on one instruction and ignores the other.

For example:

“Write a detailed guide in 1000 words and summarize it in 2 lines”

👉 The AI might:

- Only write the long guide

- Or only give a short summary

Blended or Confusing Responses

Sometimes the AI mixes both instructions into one unclear result.

👉 This can look like:

- Medium-length answers instead of short or detailed

- Mixed formatting

- Inconsistent structure

Types of Conflicts Commonly Observed

Conflicting instructions can appear in different ways depending on how you write your prompt.

These conflicts happen when two or more instructions cannot be followed at the same time.

Format Conflicts

Format conflicts happen when you ask for different output styles together.

For example:

“Write a paragraph explanation and present it in a table”

👉 Problem:

A paragraph and a table are different formats

The AI may:

- Choose one

- Or mix both poorly

Tone Conflicts

Tone conflicts happen when you ask for different writing styles.

For example:

“Write in a professional tone and keep it casual”

👉 Problem:

- Formal and casual tones do not match

- The output may feel inconsistent or unnatural

Scope Conflicts

Scope conflicts happen when you ask for both short and detailed content.

For example:

“Explain this topic in detail in 1000 words and keep it under 100 words”

👉 Problem:

- The AI cannot satisfy both

- The result is often incomplete or unclear
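Scope conflicts that involve explicit word counts are simple enough to catch before sending a prompt. The function below is a rough heuristic, not a full parser: `word_count_conflict` is a hypothetical helper, and it assumes that phrases like "in 1000 words" state a target length while phrases like "under 100 words" state an upper limit.

```python
import re

def word_count_conflict(prompt: str) -> bool:
    """Return True if a requested length exceeds a limit stated in the same prompt."""
    text = prompt.lower()
    # "in 1000 words" or "of 1000 words" is read as a target length.
    targets = [int(n) for n in re.findall(r"\b(?:in|of)\s+(\d+)\s+words\b", text)]
    # "under 100 words", "less than 100 words", and similar are read as upper limits.
    limits = [
        int(n)
        for n in re.findall(r"\b(?:under|below|less than|at most)\s+(\d+)\s+words\b", text)
    ]
    return any(target > limit for target in targets for limit in limits)

print(word_count_conflict("Explain this topic in detail in 1000 words and keep it under 100 words"))
print(word_count_conflict("Write a detailed explanation in 1000 words"))
```

The first prompt is flagged because 1000 is above the stated limit of 100; the second has no limit, so nothing is flagged.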

Constraint Conflicts

Constraint conflicts happen when instructions directly oppose each other.

For example:

“Include examples but do not add extra information”

👉 Problem:

Examples require extra explanation

The AI may:

- Skip examples

- Or break the rule

Why This Matters in Practice

Conflicting prompts increase editing time, reduce output reliability, and make results harder to control.

In testing, even small conflicts led to inconsistent outputs across multiple runs, requiring manual correction.

This directly reduces efficiency when using AI for content, research, or automation.

Real-world failure case:

A prompt like:

“Write an SEO article, keep it under 800 words, include detailed explanations, use bullet points, and add examples”

Often results in:

– Medium-length content

– Inconsistent structure

– Weak depth

This increases editing time instead of reducing it, defeating the purpose of using AI.

Sequence Position Effects

Why the Order of Instructions Matters

The order in which you write instructions in a prompt can change how the AI responds.

The AI reads your prompt from start to end, so the position of each instruction affects how it is understood.

Why Instructions at the End Can Have More Influence

Instructions written at the end of your prompt often have a stronger impact.

For example:

“Explain this topic in detail. Keep it short.”

👉 The AI may focus more on “Keep it short” because it appears last.

How Early Instructions Set the Direction

Instructions at the beginning can set the overall direction of the response.

For example:

“Explain simply. Then give a technical explanation.”

👉 The first instruction sets a simple tone, but the second one may change how the answer is written.

When Instructions Compete Based on Position

If your prompt contains conflicting instructions:

- Earlier instructions start the response

- Later instructions may override or change it

This creates:

- Mixed answers

- Inconsistent style

- Unclear structure

What This Means in Real Use

If you place important instructions randomly:

- The AI may not follow them correctly

- Later instructions can override earlier ones

To get better results:

- State your most important instruction clearly

- Avoid adding conflicting instructions later in the prompt
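One way to apply these two points is to assemble prompts from a fixed template, so the main goal always sits in the same place and supporting constraints are listed separately. This is only a sketch: `build_prompt` and the "Goal:" and "Constraints:" labels are assumptions for illustration, not a required format.

```python
from typing import List

def build_prompt(goal: str, constraints: List[str]) -> str:
    """Put the single main goal first, then list supporting constraints."""
    lines = [f"Goal: {goal}"]
    if constraints:
        lines.append("Constraints:")
        lines.extend(f"- {item}" for item in constraints)
    return "\n".join(lines)

prompt = build_prompt(
    "Explain how vaccines work to a beginner.",
    ["Use one example", "Use plain language"],
)
print(prompt)
```

Keeping the constraints in one visible list also makes it easier to spot an item that contradicts the goal before the prompt is sent.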

Context Window Interactions

When prompts become too long, instructions begin to overlap and compete within the same attention space.

As a result, clarity decreases and control over the output weakens.

To maintain consistent results, keep prompts focused and avoid combining too many conditions in a single request.

Ambiguity Amplification

When instructions are unclear or conflicting, the model maintains multiple possible interpretations instead of settling on one.

This increases variability and leads to inconsistent outputs.

This is why similar prompts can produce noticeably different outputs.
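The effect can be simulated with a toy sampler. Language models typically pick each piece of output by sampling from probabilities, so when two readings of a prompt are about equally likely, repeated runs land on different ones. The probabilities below are made up for illustration and `sample_runs` is a hypothetical helper; a real model samples token by token, not whole answers.

```python
import random
from typing import Dict

def sample_runs(probabilities: Dict[str, float], runs: int, seed: int = 0) -> Dict[str, int]:
    """Draw one outcome per run and count how often each outcome occurs."""
    rng = random.Random(seed)  # fixed seed so the simulation is repeatable
    options = list(probabilities)
    weights = list(probabilities.values())
    counts = {option: 0 for option in options}
    for _ in range(runs):
        counts[rng.choices(options, weights=weights)[0]] += 1
    return counts

# Made-up numbers: a conflicting prompt leaves both readings about equally likely.
conflicting = sample_runs({"long answer": 0.5, "short answer": 0.5}, runs=1000)
# Made-up numbers: a clear prompt makes one reading dominant.
clear = sample_runs({"long answer": 0.95, "short answer": 0.05}, runs=1000)

print("conflicting prompt:", conflicting)
print("clear prompt:", clear)
```

With the evenly split probabilities, the simulated runs divide roughly in half. With one dominant reading, nearly every run agrees.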

What This Means for You

If your prompt is unclear or contains conflicts:

- You may not get the same result every time

- The output may vary in style, length, or clarity

To fix this:

- Keep your instructions clear

- Avoid mixing incompatible requirements

Why Outputs Change Even for Similar Prompts

Small wording changes shift how attention is distributed across instructions.

When instructions are unclear or conflicting, this leads to different outputs across runs.

To improve consistency, use precise wording and avoid combining competing requirements.

Once you understand how conflicts occur, the next step is controlling them.

Prompt Governance Principles

To get consistent and accurate results from AI, your instructions must be clear, consistent, and non-conflicting.

Follow these rules:

- Use one clear objective per prompt

- Avoid combining instructions that cannot be satisfied together

- Keep tone, format, and scope consistent

- Replace vague terms like “properly” or “in detail” with specific requirements, such as a word count or a list of points to cover

Even small changes in instruction clarity can affect output consistency and structure.

Real Example: Conflicting vs Clear Prompt

❌ Conflicting Prompt:

“Write a detailed explanation in 1000 words and keep it under 100 words”

👉 Output:

The response does not fully satisfy either requirement, resulting in a weak and unclear output.

✅ Fixed Prompt:

“Write a detailed explanation in 1000 words”

👉 Output:

This topic can be explained step by step with clear structure, examples, and full detail. Each point is covered properly, making the response complete and easy to follow.

✅ Alternative Fix:

“Write a short explanation under 100 words”

👉 Output:

This topic can be explained briefly by focusing only on the key idea, keeping the response short and easy to understand.

✔ Key Insight:

When your instructions are clear and focused, the AI produces much better results.

Summary of Observed Limitations

Conflicting instructions reduce output reliability, increase variability across runs, and require more manual correction.

This makes prompt design a critical factor in getting consistent results from AI systems.

What Affects the Final Output

The result you get depends on:

- How you write your instructions

- The order of your prompt

- The clarity of your wording

Even small changes can affect the output.

Final Takeaway

Try this simple fix:

Step 1: Write your main goal in one sentence

Step 2: Remove any instruction that contradicts it

Step 3: Test the prompt once

Step 4: If output feels mixed, simplify further

This process helps eliminate conflicting instructions before they affect results.

Quick decision rule:

If your prompt contains instructions that cannot logically happen at the same time, it will produce unstable results.

Always choose one clear objective per prompt.

Quick Self-Check Before You Write a Prompt

Before submitting your prompt, ask:

- Am I asking for more than one outcome?

- Do any instructions contradict each other?

- Can all instructions be fully satisfied at the same time?

If not, simplify or split the prompt.
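Part of this self-check can be automated with a simple keyword scan. The sketch below is a rough heuristic built from the examples in this article: the phrase lists in `CONFLICT_PAIRS` and the function `find_conflicts` are assumptions for illustration, and a keyword match cannot replace reading the prompt yourself.

```python
import re
from typing import List

# Illustrative phrase pairs only, taken from this article's examples.
CONFLICT_PAIRS = [
    ("scope", ["in detail", "detailed"], ["short", "brief"]),
    ("tone", ["professional", "formal"], ["casual", "informal"]),
    ("format", ["paragraph"], ["table"]),
]

def _mentions(text: str, phrases: List[str]) -> bool:
    # Whole-word match, so "informal" does not count as "formal".
    return any(re.search(r"\b" + re.escape(p) + r"\b", text) for p in phrases)

def find_conflicts(prompt: str) -> List[str]:
    """Return the conflict types whose two opposing sides both appear in the prompt."""
    text = prompt.lower()
    return [
        kind
        for kind, side_a, side_b in CONFLICT_PAIRS
        if _mentions(text, side_a) and _mentions(text, side_b)
    ]

print(find_conflicts("Explain in detail and keep it short"))
print(find_conflicts("Explain simply and give one example"))
```

An empty list means none of the listed pairs was found, not that the prompt is free of conflicts.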

Conclusion

Conflicting instructions are one of the most common reasons AI outputs feel unclear, inconsistent, or incomplete.

The key issue is not the AI itself, but how instructions are structured within a prompt.

When multiple incompatible instructions are combined, the system attempts to follow all of them at once, which leads to mixed or unstable results.

To get better outputs:

- Use one clear objective per prompt

- Avoid combining conflicting goals

- Keep instructions simple and consistent

If your prompt is clear, the output will be clear.

Understanding this principle helps you move from unpredictable results to reliable, high-quality AI outputs.

Clear prompts are not just better—they are necessary for reliable AI outputs.

If your instructions are structured well, results become predictable.

If not, variability is unavoidable.

The difference is not the model—it’s the prompt.


Frequently Asked Questions (FAQs)

What are conflicting instructions in a prompt?

Conflicting instructions happen when your prompt includes requests that cannot be followed at the same time. For example, asking for both a detailed explanation and a very short answer in one prompt.

How does the AI handle multiple instructions in a single prompt?

The AI reads all instructions together and tries to follow them at the same time. It does not separate or organize them automatically.

Does the AI choose one instruction over another?

No, the AI does not clearly choose one instruction. It tries to follow all instructions, which can lead to mixed or unclear results.

Why does the same prompt sometimes give different answers?

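The output can change because the model typically generates text by sampling from probabilities, so each run can take a different path. The variation is larger when instructions are unclear or conflicting, because more than one reading of the prompt is plausible.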

Can the AI fix conflicting instructions on its own?

No, the AI does not fix or remove conflicting instructions. It simply tries to follow everything you provide.

Does the order of instructions matter in a prompt?

Yes, the order matters. Instructions written later can change or override earlier ones, which can affect the final output.

Does adding “Important” fix conflicting instructions?

No. Adding words like “Important” or “Must” can increase emphasis, but it does not resolve conflicting instructions.

If two instructions cannot be satisfied at the same time, the model will still produce a blended or unstable output.

Is splitting prompts always better than combining them?

Splitting prompts is better when instructions cannot be satisfied simultaneously.

For example, tasks like detailed explanation and short summary should be handled in separate prompts to avoid conflicts and improve output clarity.

The explanations in this article are based on established research in transformer architecture, attention mechanisms, and large language model behavior.

References

Language Models are Few-Shot Learners (Brown et al., 2020)

Shows that large language models can perform new tasks from instructions and a few examples supplied in the prompt.

https://arxiv.org/abs/2005.14165

Attention Is All You Need (Vaswani et al., 2017)

Introduced the Transformer architecture and self-attention mechanism.

https://arxiv.org/abs/1706.03762

GPT-4 Technical Report (OpenAI, 2023)

Describes behavior and limitations of large language models.

https://arxiv.org/abs/2303.08774