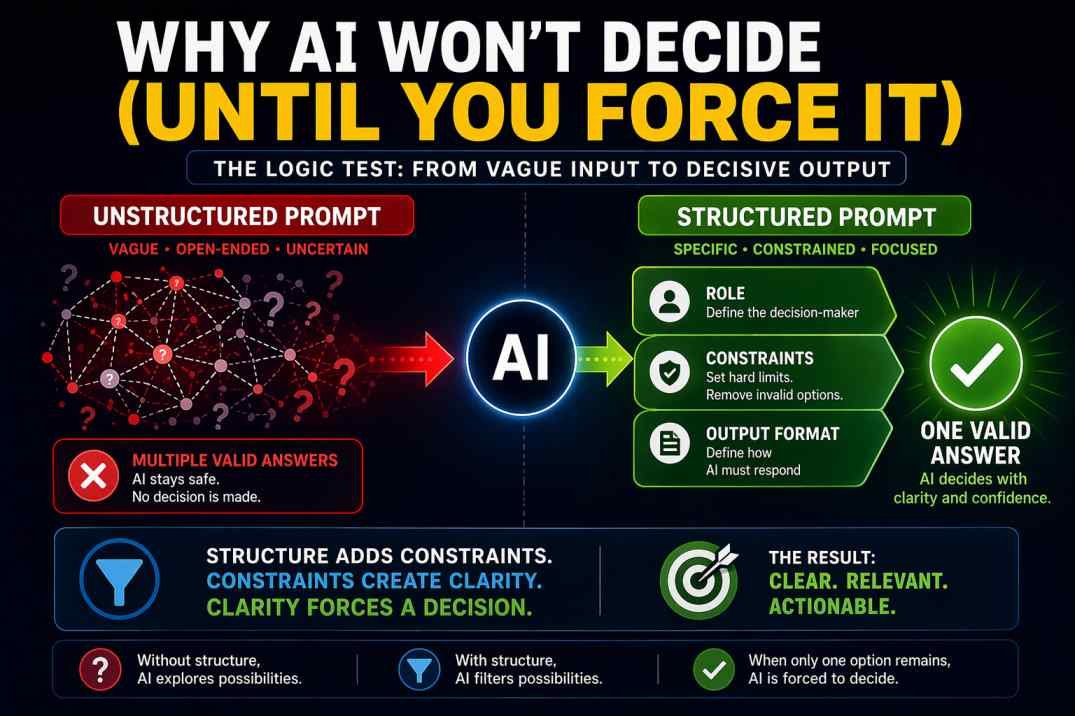

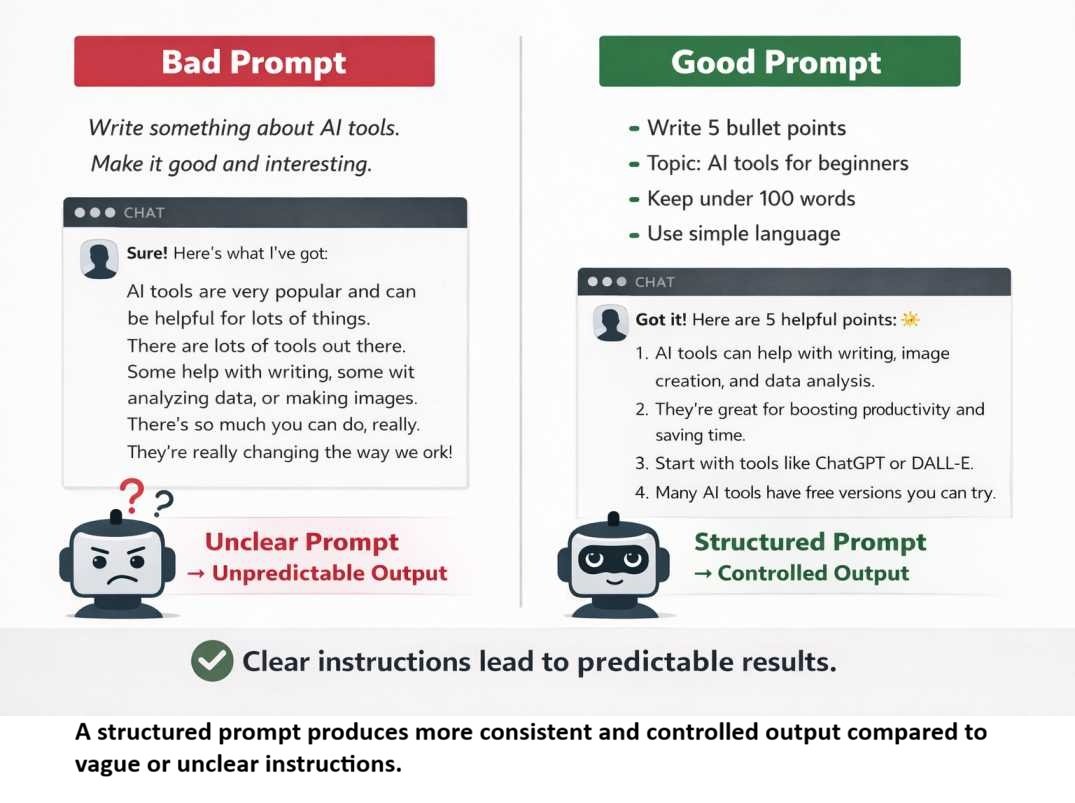

How Prompt Structure Controls AI Output (The Logic Test)

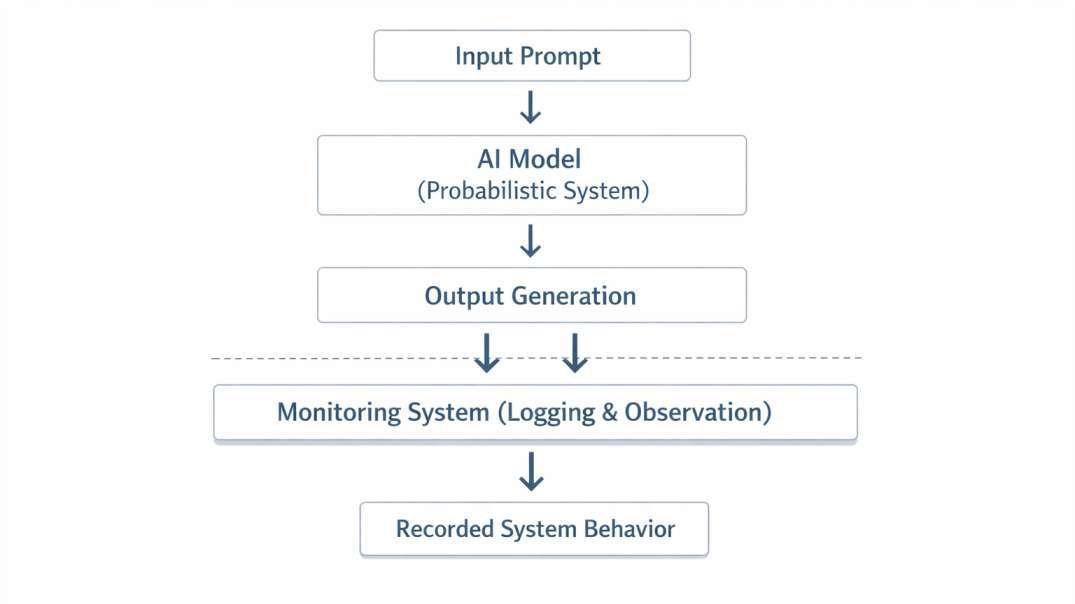

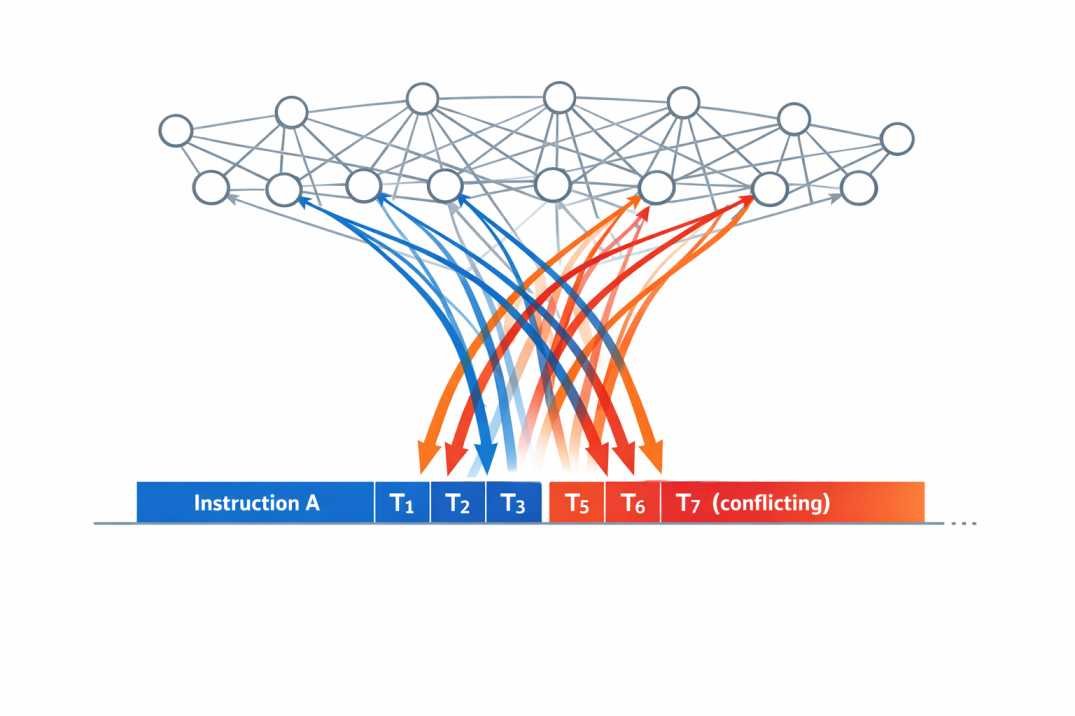

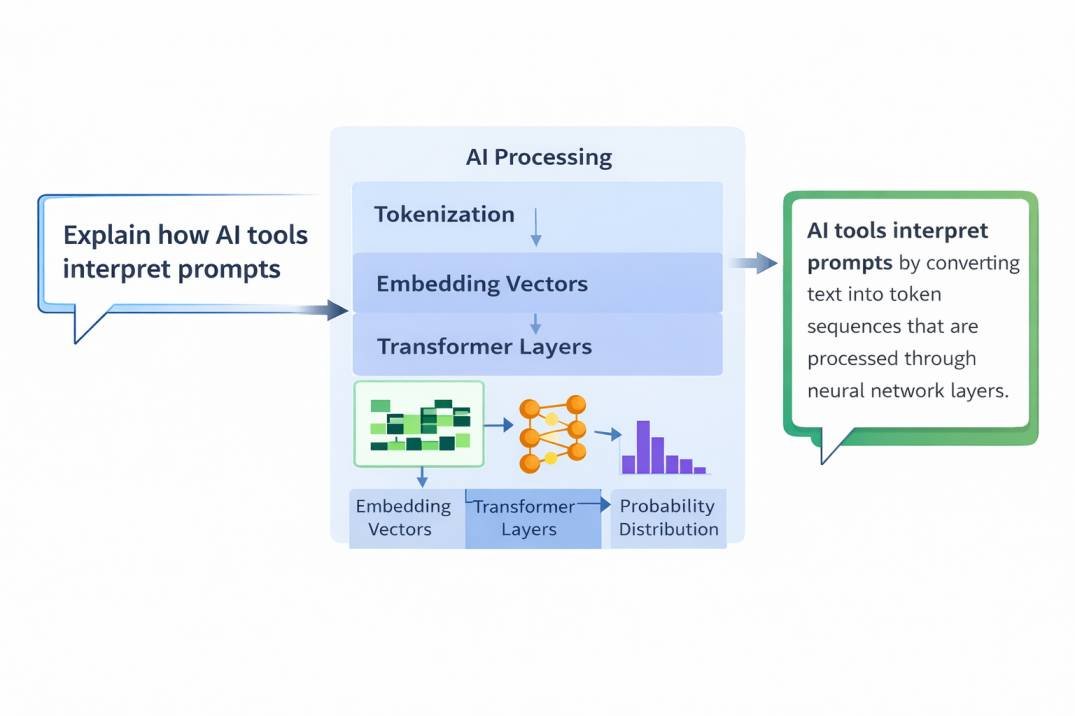

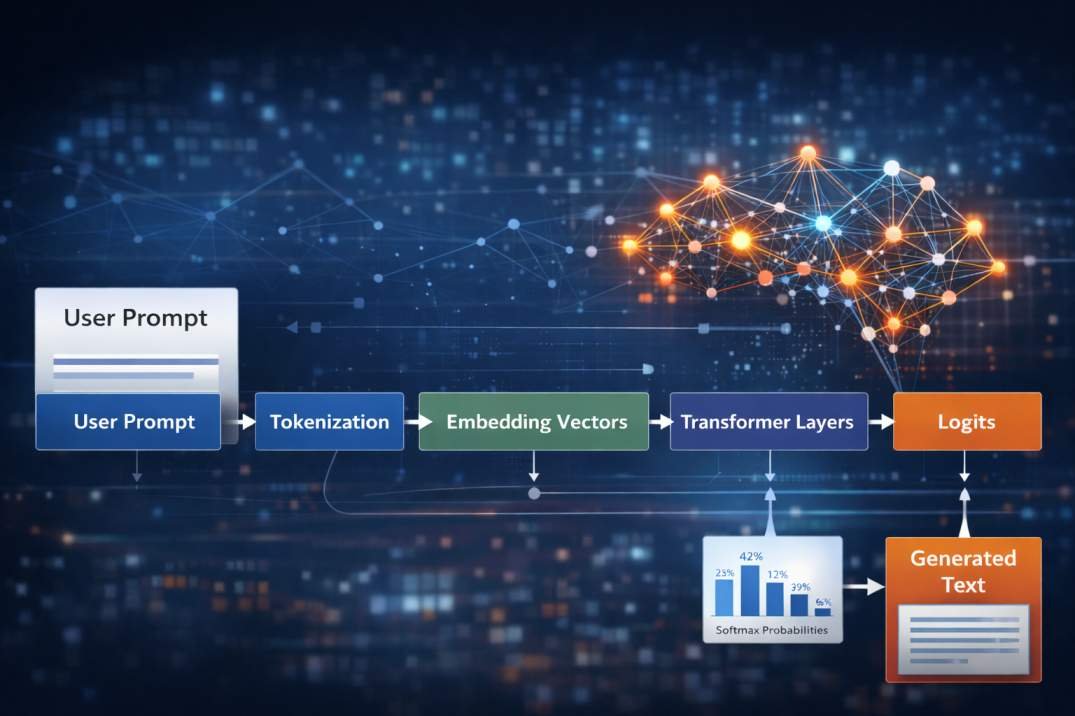

Introduction

Prompt structure determines whether an AI model avoids decisions or produces a single, clear outcome. Most users treat AI like a search engine: ask a question, get an answer. That assumption is wrong. An AI model generates responses by selecting the most statistically acceptable option. When your prompt is vague, …