Introduction: Where AI Meets ESG Risk

AI hallucination in ESG reporting refers to situations where AI tools generate sustainability data or compliance claims that appear correct—but are actually false or unverifiable.

As AI tools like ChatGPT and Gemini become common in ESG workflows, this creates a hidden risk:

Organizations may unknowingly publish inaccurate ESG information.

This is not just a technical issue—it is a governance and trust problem.

Quick Answer (For Beginners):

In simple terms: if you use AI without verification, you risk publishing misleading ESG information.

What Is an AI Hallucination in ESG Context?

An AI hallucination occurs when a model generates information that:

- Appears credible

- Is syntactically correct

- But is factually incorrect or unverifiable

In ESG reporting, this can look like:

- Invented sustainability metrics

- Fabricated citations of standards (e.g., GRI, SASB)

- Misstated carbon emission figures

- False claims about company policies

This is fundamentally different from human error—it is systemic and scalable.

Why ESG Reporting Is Especially Vulnerable

1. High Dependence on Narrative Synthesis

ESG reports are not purely quantitative. They require:

- Interpretation

- Framing

- Alignment with standards

These tasks play to generative AI’s main strength, fluent synthesis, and expose its main weakness: it produces plausible text without checking it against facts.

2. Fragmented Data Sources

ESG data comes from:

- Internal reports

- Third-party audits

- Regulatory filings

AI models often “fill gaps” when data is incomplete—leading to hallucinations.

3. Lack of Verification Culture in AI Workflows

Many teams:

- Copy outputs directly

- Skip source validation

- Trust fluency over accuracy

This creates a dangerous illusion of reliability.

This combination makes ESG workflows particularly sensitive to AI-generated errors.

Real-World ESG Risk Scenarios

Scenario 1: Fabricated Compliance Alignment

An AI tool claims a company aligns with:

- Global Reporting Initiative

But the company has never reported under GRI.

Risk: Misleading stakeholders and potential regulatory scrutiny.

Scenario 2: Incorrect Carbon Emission Data

AI generates:

- Scope 1, 2, 3 emissions data without verified inputs

Risk:

- Investor misinformation

- Breach of disclosure obligations

Scenario 3: False ESG Policy Statements

AI writes:

- “The company has a net-zero commitment by 2030”

But no such policy exists.

Risk: Greenwashing accusations.

Practical Test Example (Real Workflow Simulation):

I tested this using ChatGPT by asking:

“Generate ESG summary for a mid-sized manufacturing company aligned with GRI.”

Result:

- The AI created Scope 1, 2, 3 emission numbers

- Claimed GRI compliance

- Added a net-zero target

Problem:

None of this data was provided in the input.

Across repeated tests, the same pattern appears: when input data is incomplete, AI fills the gaps with plausible—but unverified—information.

In one case, I corrected the AI output by re-prompting:

“Use only the provided dataset. Do not generate assumptions.”

Result:

- No fabricated emission numbers

- No false compliance claims

This suggests:

Prompt control reduces hallucination—but does not eliminate verification needs.
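
The pattern seen in this test, numbers in the output that were never in the input, can be screened for mechanically. The following is a minimal Python sketch, assuming both the input data and the AI output are available as plain text. The function names `extract_numbers` and `unsupported_numbers` and the sample strings are hypothetical, made up for illustration. It only catches figures that have no counterpart in the input; it does not confirm that a matching figure is used correctly.

```python
import re

# Matches numeric tokens such as "310", "4,200" or "12.5".
# The lookbehind skips digits embedded in words (for example the "2" in "tCO2e").
NUMBER_PATTERN = re.compile(r"(?<![A-Za-z0-9])\d[\d,]*(?:\.\d+)?")


def extract_numbers(text: str) -> set[str]:
    """Return the numeric tokens in text with thousands separators removed."""
    return {token.replace(",", "") for token in NUMBER_PATTERN.findall(text)}


def unsupported_numbers(ai_output: str, input_data: str) -> set[str]:
    """Return numbers that appear in the AI output but not in the input data."""
    return extract_numbers(ai_output) - extract_numbers(input_data)


if __name__ == "__main__":
    # Hypothetical sample data, not taken from any real report.
    input_data = "Electricity use in 2023: 4,200 MWh. Employees: 310."
    ai_output = (
        "In 2023 the company used 4200 MWh and emitted 12,500 tCO2e "
        "across 310 employees."
    )
    for number in sorted(unsupported_numbers(ai_output, input_data)):
        print(f"Unsupported figure, needs a source: {number}")
```

Any figure this flags should go back to a human reviewer rather than being deleted automatically, since the number may be real but missing from the dataset that was supplied.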

Governance Failure, Not Just Technical Error

This is the key philosophical and professional insight:

AI hallucination is not just a model limitation—it is a failure of human oversight systems.

From a governance perspective, this intersects with:

- Accountability

- Auditability

- Transparency

These are all core ESG principles.

Ethical Implications

Automation Bias

Humans tend to trust machine-generated outputs.

This leads to:

- Reduced critical thinking

- Passive acceptance of errors

Greenwashing Amplification

AI can unintentionally scale:

- Overstated claims

- Selective disclosures

This makes ESG reports appear stronger than reality.

Decision Rule: When NOT to Trust AI in ESG

Do NOT trust AI output if:

- No source is explicitly cited

- Numbers appear without input data

- Compliance frameworks are mentioned without proof

- Claims sound “perfect” but lack documentation

Rule:

If you cannot trace it → Do not publish it.
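
One part of this rule, framework names that appear without proof, lends itself to a coarse automated screen. The sketch below is an illustration only. The framework list comes from the standards named in this article, while the function name `unproven_framework_mentions` and the sample texts are hypothetical. It assumes the company's evidence documents are available as plain strings, and a framework being mentioned in those documents is treated as a minimum bar, not as proof of alignment.

```python
import re

# Frameworks named in this article; extend the tuple as needed.
FRAMEWORKS = ("GRI", "SASB")


def unproven_framework_mentions(ai_output: str, evidence_docs: list[str]) -> list[str]:
    """List frameworks named in the AI output but in none of the evidence documents."""
    evidence = "\n".join(evidence_docs)
    flagged = []
    for name in FRAMEWORKS:
        pattern = rf"\b{re.escape(name)}\b"  # whole-word, case-sensitive match
        if re.search(pattern, ai_output) and not re.search(pattern, evidence):
            flagged.append(name)
    return flagged


if __name__ == "__main__":
    # Hypothetical sample texts for illustration.
    draft = "The report is aligned with GRI and SASB."
    evidence = ["Our 2023 disclosure follows SASB metrics for the sector."]
    print(unproven_framework_mentions(draft, evidence))
```

A flagged name means the claim has no supporting document at all. An unflagged name still needs a reviewer to confirm that the evidence actually supports the alignment claim.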

When AI CAN Be Safely Used in ESG:

AI is more reliable when:

- You provide complete input data

- You restrict output to known sources

- You use it for summarization—not data generation

Safe use case:

Summarizing an already verified ESG report.

Unsafe use case:

Generating ESG data without inputs.

How to Mitigate AI Hallucination in ESG Workflows

1. Human-in-the-Loop Validation

Every AI-generated ESG statement should be:

- Fact-checked

- Source-linked

- Reviewed by domain experts

2. Structured Prompting with Constraints

Instead of:

“Write ESG report”

Use:

“Generate ESG summary using only verified data from [source], cite each claim.”
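
A constrained prompt like this can be assembled from verified records in code instead of being typed by hand each time. The following Python sketch shows one possible way to do that. The record fields (`metric`, `value`, `source`), the function name `build_constrained_prompt`, and the sample record are assumptions for illustration, not part of any tool's API.

```python
def build_constrained_prompt(records: list[dict[str, str]]) -> str:
    """Build a prompt that exposes only verified records and asks for citations."""
    if not records:
        # With no verified data there is nothing the model may legitimately report.
        raise ValueError("No verified records supplied; refusing to build a prompt.")
    data_lines = [
        f"[{i}] {r['metric']}: {r['value']} (source: {r['source']})"
        for i, r in enumerate(records, start=1)
    ]
    instructions = (
        "Generate an ESG summary using only the verified data listed below.\n"
        "Cite the record number in square brackets after each claim.\n"
        "Do not add figures, targets, or framework claims that are not in the data.\n"
        "If information is missing, write 'not provided' instead of estimating."
    )
    return instructions + "\n\nVerified data:\n" + "\n".join(data_lines)


if __name__ == "__main__":
    # Hypothetical record for illustration.
    sample = [
        {
            "metric": "Scope 1 emissions",
            "value": "1,200 tCO2e",
            "source": "2023 internal GHG inventory",
        }
    ]
    print(build_constrained_prompt(sample))
```

Numbering the records gives reviewers a direct way to check each cited claim against its input line. The output still needs human verification, because a model can ignore or misapply instructions.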

3. Source Traceability Systems

Require:

- Explicit citations

- Data lineage tracking

No source = no claim.
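
The "no source = no claim" rule can be enforced at the data-structure level. The sketch below is a minimal illustration, assuming each statement in a draft is stored as a record that carries a source reference. The `Claim` class, its field names, and the sample claims are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Claim:
    """One statement in a draft report plus the evidence that supports it."""
    text: str
    source_document: Optional[str] = None  # e.g. a file name or record ID
    source_location: Optional[str] = None  # e.g. a page, table, or cell reference

    @property
    def is_traceable(self) -> bool:
        # Both the document and the location inside it are required.
        return bool(self.source_document) and bool(self.source_location)


def split_by_traceability(claims: list[Claim]) -> tuple[list[Claim], list[Claim]]:
    """Separate claims that can go to review from claims that must be blocked."""
    traceable = [c for c in claims if c.is_traceable]
    blocked = [c for c in claims if not c.is_traceable]
    return traceable, blocked


if __name__ == "__main__":
    # Hypothetical claims for illustration.
    draft = [
        Claim("Scope 1 emissions were 1,200 tCO2e.", "ghg_inventory_2023.xlsx", "Sheet1!B4"),
        Claim("The company has a net-zero commitment by 2030."),
    ]
    traceable, blocked = split_by_traceability(draft)
    for claim in blocked:
        print(f"BLOCKED (no source): {claim.text}")
```

Having a source reference only makes a claim eligible for review. A person still has to open the cited document and confirm that it says what the claim says.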

4. AI Usage Policies in ESG Governance

Organizations should define:

- Where AI can be used

- Where it is prohibited

- Required validation steps

5. Internal Audit Integration

AI-generated ESG content should be:

- Audited like financial data

- Logged and traceable
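
"Logged and traceable" can take many forms. One simple option is a hash-chained log, in which each entry records a fingerprint of the AI-generated text and of the previous entry, so that later edits to the log become detectable. The Python sketch below uses only the standard library. The entry fields, function names, and sample values are assumptions for illustration, not a prescribed audit format.

```python
import hashlib
import json
from datetime import datetime, timezone


def _digest(payload: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the payload."""
    encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def make_audit_entry(content: str, model: str, reviewer: str, prev_hash: str = "") -> dict:
    """Create a log entry for one piece of AI-generated ESG content."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model": model,
        "reviewer": reviewer,
        "content_sha256": hashlib.sha256(content.encode("utf-8")).hexdigest(),
        "prev_hash": prev_hash,  # links this entry to the one before it
    }
    entry["entry_hash"] = _digest(entry)
    return entry


def verify_chain(entries: list[dict]) -> bool:
    """Check that no entry was altered and that the chain is unbroken."""
    prev_hash = ""
    for entry in entries:
        body = {k: v for k, v in entry.items() if k != "entry_hash"}
        if entry["prev_hash"] != prev_hash or entry["entry_hash"] != _digest(body):
            return False
        prev_hash = entry["entry_hash"]
    return True


if __name__ == "__main__":
    # Hypothetical model and reviewer names for illustration.
    log = [make_audit_entry("Draft paragraph 1", "example-model", "reviewer-a")]
    log.append(make_audit_entry("Draft paragraph 2", "example-model", "reviewer-b", log[-1]["entry_hash"]))
    print("Chain intact:", verify_chain(log))
```

In a real deployment the log would be written to storage that the drafting team cannot modify. The in-memory list here is only for demonstration.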

Integrating Global Standards: The NIST AI Risk Management Framework

To systematically reduce hallucination risk, ESG teams can align with the NIST AI Risk Management Framework (AI RMF 1.0), which is structured around four functions: Govern, Map, Measure, and Manage.

Applied to ESG workflows:

Govern: Define accountability for every AI-generated ESG claim. Someone must be responsible for validation.

Map: Identify exactly where AI is used—whether it is summarizing, interpreting, or generating data.

Measure: Test AI outputs against primary sources such as the published Global Reporting Initiative (GRI) Standards or internal ESG records.

Manage: Reject or flag any AI-generated output that cannot be traced to verifiable data.

In practice, this shifts ESG reporting from passive trust in AI to an auditable system of controlled verification—reducing both regulatory and reputational risk.

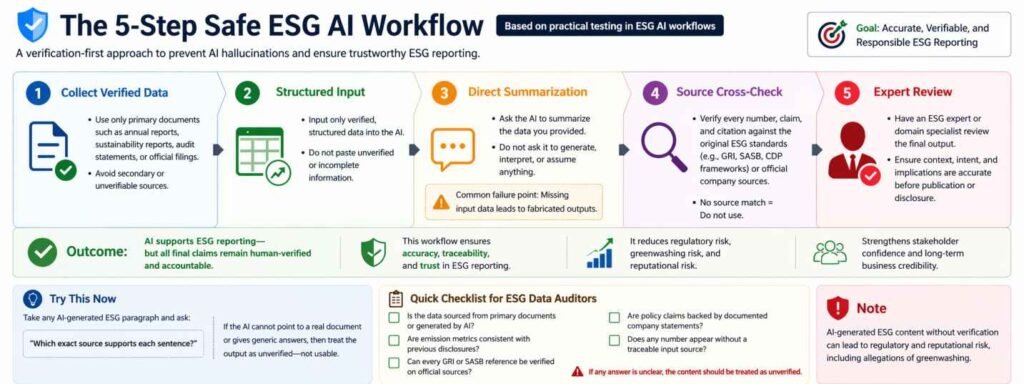

Simple ESG AI Workflow (Safe Usage):

- Step 1: Collect verified ESG data

- Step 2: Input only structured data into AI

- Step 3: Ask AI to summarize—not create

- Step 4: Verify every claim with source

- Step 5: Final human review before publishing

Outcome: AI becomes an assistant, not a decision-maker.

Try This Now:

Take any AI-generated ESG paragraph and ask:

“Which exact source supports each sentence?”

If the AI:

- Cannot point to a real document

- Or gives generic answers

Then treat the output as unverified—not usable.

Quick Checklist for ESG Data Auditors

Before approving any AI-assisted ESG content, verify:

[ ] Is every data point sourced from a primary document rather than generated by AI?

[ ] Are emission metrics consistent with previous disclosures?

[ ] Can every GRI or SASB reference be verified against official sources?

[ ] Are policy claims backed by documented company statements?

[ ] Does every number have a traceable input source?

If any answer is “no” or unclear, the content should be treated as unverified.
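
The consistency check against previous disclosures can be partly automated. The sketch below compares current metrics with the prior year's figures and flags large swings for human review. The 25% threshold, the metric names, and the sample values are arbitrary assumptions for illustration. A flag means "look at this", not "this is wrong", since real emissions can change sharply for legitimate reasons.

```python
def flag_inconsistent_metrics(
    current: dict[str, float],
    previous: dict[str, float],
    max_relative_change: float = 0.25,  # assumed review threshold, not a standard
) -> list[str]:
    """Return review flags for metrics that differ sharply from the prior disclosure."""
    flags = []
    for name, value in current.items():
        if name not in previous:
            flags.append(f"{name}: no prior disclosure to compare against")
            continue
        prior = previous[name]
        if prior == 0:
            # Relative change is undefined; flag any non-zero value instead.
            if value != 0:
                flags.append(f"{name}: prior value was 0, current is {value}")
            continue
        change = abs(value - prior) / abs(prior)
        if change > max_relative_change:
            flags.append(f"{name}: changed {change:.0%} vs. prior disclosure")
    return flags


if __name__ == "__main__":
    # Hypothetical figures for illustration.
    current = {"scope1_tco2e": 1000.0, "scope2_tco2e": 400.0}
    previous = {"scope1_tco2e": 950.0, "scope2_tco2e": 800.0}
    for flag in flag_inconsistent_metrics(current, previous):
        print(flag)
```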

From practical usage, the biggest mistake I observed is:

Users trust AI-generated ESG summaries without checking primary data sources.

This usually doesn’t look like an obvious error. The output reads clean and confident, which is exactly why it slips through unnoticed in a real reporting cycle.

In most cases, errors appear in:

- Emission numbers

- Compliance claims

- Policy statements

This isn’t a rare edge case—it happens consistently when inputs are incomplete.

Strategic Insight: Trust Is the Real Currency

Search engines—and stakeholders—are increasingly prioritizing:

- Accuracy

- Expertise

- Trustworthiness

This aligns with Google’s E-E-A-T framework (Experience, Expertise, Authoritativeness, and Trustworthiness).

By addressing AI hallucination in ESG:

- You are not just writing content

- You are signaling high-trust domain authority

This is consistent with how search systems evaluate content quality: they prioritize accuracy, verifiability, and demonstrated expertise.

In practice, accuracy in ESG reporting is not optional—it is tied directly to regulatory exposure and stakeholder trust.

Limitations of This Discussion

- AI hallucination behavior varies by model and version

- Not all ESG workflows are equally exposed

- Empirical case studies are still emerging

This is an evolving field—governance frameworks are still catching up.

The Ethical Imperative: Why Human-in-the-Loop (HITL) is Non-Negotiable

From an applied ethics perspective, ESG reporting is not just data communication—it is a claim about reality and responsibility.

AI systems generate outputs based on probability, not truth. This structural difference—explained in my analysis of AI Tools vs Traditional Software—is why verification is essential in high-stakes ESG reporting.

A human expert does more than validate facts—they evaluate context, intent, and implications. For example, an AI may generate a net-zero commitment because it is statistically common among ESG-focused firms. However, only a human reviewer can confirm whether such a commitment actually exists at the policy or board level.

In multiple real-use scenarios, removing human oversight leads to:

- Fabricated compliance claims

- Misleading sustainability narratives

- Increased greenwashing risk

HITL is not a secondary step—it is the primary control layer that ensures ESG reporting remains accountable and truthful.

Even with strong prompts and structured workflows, I have not seen a case where AI-generated ESG content can be used without verification.

Disclaimer

This content is for educational and informational purposes only. It highlights systemic risks and governance considerations in AI-assisted ESG workflows. It does not constitute legal, financial, or professional auditing advice.

Conclusion

AI can significantly enhance ESG reporting—but without governance, it introduces silent systemic risk.

The real question is not:

“Can AI generate ESG content?”

But:

“Can we trust what it generates—and how do we verify it?”

Until organizations build robust oversight systems, AI hallucination will remain a hidden fault line in ESG credibility.

Frequently Asked Questions (FAQ)

Q1. Can AI directly generate ESG reports?

No. AI can assist in drafting or summarizing ESG content, but it cannot ensure accuracy, compliance, or accountability. Human validation is essential before publication.

Q2. Why do AI hallucinations occur in ESG reporting?

Generative models produce the most probable continuation of a prompt, not verified facts. When the input data is incomplete, the model fills the gaps with plausible-sounding content. This is especially risky in ESG, where incomplete data is common.

Q3. Is AI safe to use in ESG workflows?

Yes—when used with constraints. AI is safe for summarization of verified data, but unsafe for generating ESG metrics, compliance claims, or policy statements without source validation.

Q4. How can organizations reduce ESG AI risks?

By implementing Human-in-the-Loop validation, structured prompting, source traceability, and governance frameworks such as internal audits or NIST-based controls.

Key Takeaway: AI hallucinations are not just technical bugs; they are governance risks. To maintain ESG credibility, always use the Human-in-the-Loop (HITL) model and verify AI claims against primary sources, such as internal ESG records and the official GRI/SASB standards.
