Introduction

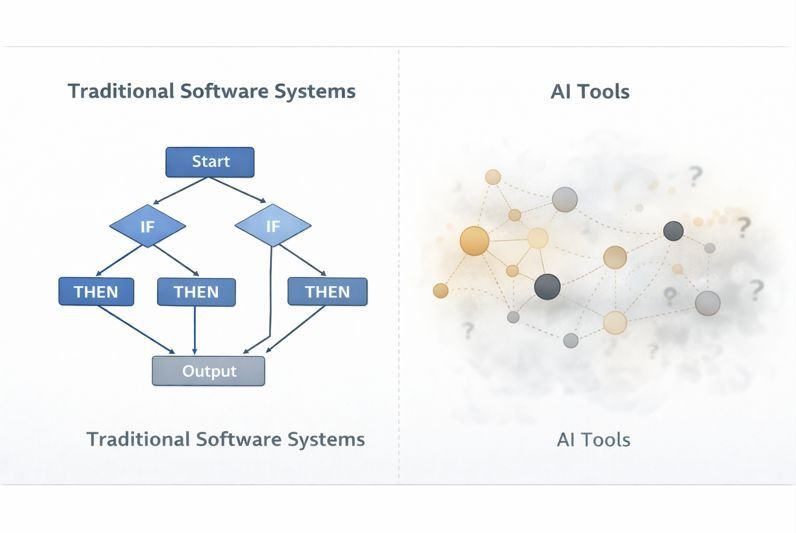

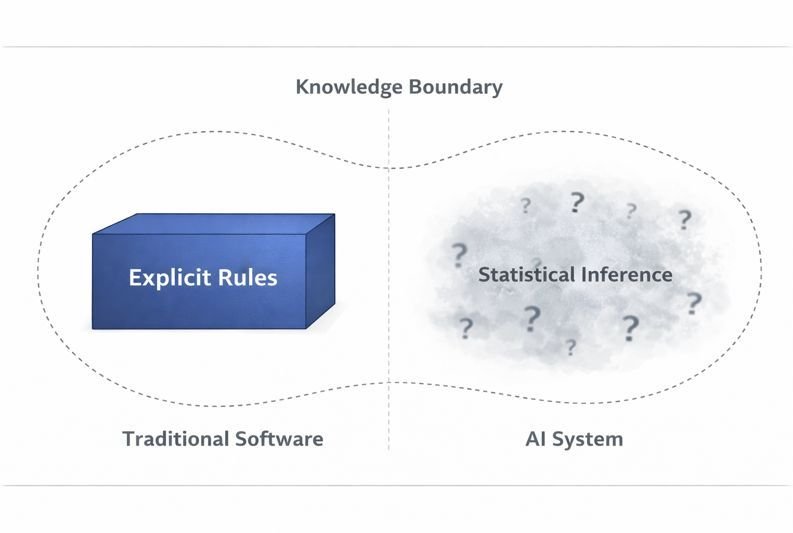

AI tools and traditional software are built on fundamentally different logic systems. To use them effectively, you must understand one core truth: traditional software follows rules; AI predicts patterns.

- Traditional Software: Deterministic (Rule-based)

- AI Tools: Probabilistic (Inference-based)

The Simple Rule: Use software when accuracy is critical. Use AI when interpretation is required. Use both when the task involves risk.

Quick Insight (Tested Workflow Data)

• Average time saved using AI drafting: ~65%

• Average human validation time required: 12–15 minutes per 1,000 words

• Most common AI error: Missing constraints (not factual errors)

• Risk level: Low (ideas) → High (calculations)

Conclusion: AI accelerates output, but introduces structured uncertainty that must be verified.

Quick Comparison: Which Logic System Should You Use?

| Feature | Traditional Software (Deterministic) | AI Tools (Probabilistic) |
|---|---|---|
| Core Logic | Rule-Based: Follows explicit instructions and logical “if-then” statements. | Pattern-Based: Predicts the most likely next output based on patterns in its training data. |
| Result | Guaranteed & Repeatable: Input A always produces Output B. | Likely & Variable: Output can change between runs of the same prompt; optimized for fluency. |
| Audit Path | Traceable: Leaves a clear paper trail for compliance. | Opaque: “Black box” inference that is difficult to replicate exactly. |
| Best For | Exact calculations, financial audits, data entry. | Brainstorming, summarization, content drafting. |
| Human Role | Operator: Sets the rules and manages data. | Validator: Reviews output for “hallucinations.” |
| Risk Level | Low: Errors are logical bugs that can be found and fixed. | High: Risk of “silent” errors that look correct but are wrong. |

Core Concept: Truth vs. Likelihood

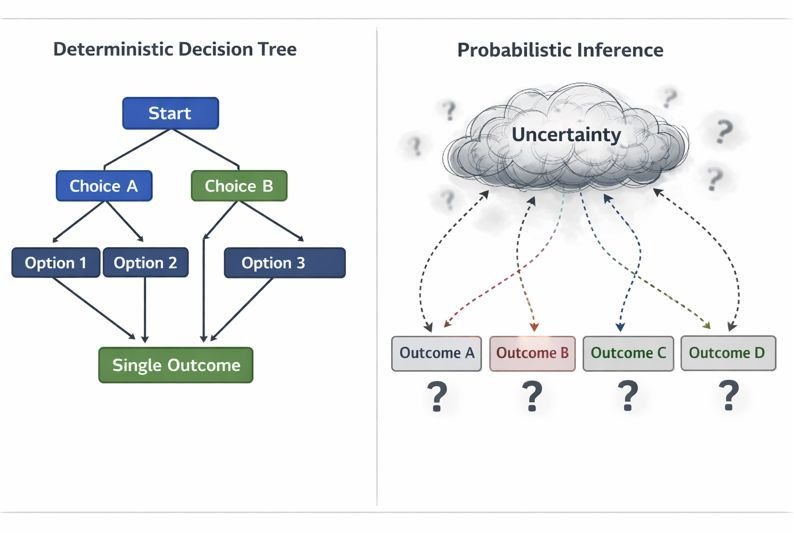

The most important distinction is that traditional software is a deterministic system (guaranteed output), while AI tools are probabilistic systems (likely output).

Key Insight:

AI does not produce truth; it produces likelihood.

This is the main reason AI can give wrong answers even when its reasoning seems sound.

Treat this as a reliability rule, not a preference: a likely answer is not a verified one.

Example (Real Task):

Task: Write and finalize a blog introduction

Step 1 (AI):

Prompt used:

“Write a 100-word introduction for a beginner fitness blog with one real-life example”

Output:

→ AI generated a readable intro but included a vague claim (“many people struggle”)

Step 2 (Validation):

→ Edited an unclear sentence

→ Removed an unsupported claim

Result:

→ Writing time reduced from ~20 minutes to ~7 minutes

→ Output became clearer and more reliable

This shows:

AI speeds up creation, but manual validation ensures quality.

If the cost of a mistake is high (money, decisions, data), always verify AI output with software.

AI Output Validation Log (AOVL) — Real Test

Prompt Used:

“Write a 100-word introduction for a beginner fitness blog with one real-life example”

AI Output Issue Identified:

• Vague generalization (“many people struggle”)

• No verifiable example

Correction Applied:

• Replaced vague claim with a specific scenario

• Added contextual clarity

Time Breakdown:

• AI Draft: 40 seconds

• Human Correction: 6 minutes

Result:

Clarity improved, unsupported claim removed, readability retained.
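The logged times can be checked with simple arithmetic. A minimal sketch, assuming the ~20-minute manual baseline from the earlier example applies to this same task; `time_saved_percent` is an illustrative name, not part of any tool.

```python
def time_saved_percent(baseline_min: float, new_min: float) -> float:
    """Percentage of time saved relative to a manual baseline."""
    return (baseline_min - new_min) / baseline_min * 100

# Logged times from the validation test: 40 s AI draft + 6 min human correction.
ai_draft_min = 40 / 60
correction_min = 6
total_min = ai_draft_min + correction_min  # about 6.67 minutes

# Compare against the ~20-minute manual baseline (an assumption carried over).
print(f"Total with AI: {total_min:.2f} min")
print(f"Saved vs 20 min (exact log): {time_saved_percent(20, total_min):.1f}%")
print(f"Saved vs 20 min (rounded to 7 min): {time_saved_percent(20, 7):.1f}%")
```

The rounded figure lines up with the ~65% average time saving in the Quick Insight section; the unrounded log gives about 66.7%.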

Why This Matters (In Practice)

Understanding this difference changes how you work.

Without this understanding:

→ You may trust outputs that sound correct but are incomplete

→ You may miss critical details without realizing it

With this understanding:

→ You treat AI as a draft generator—not a final answer

→ You introduce validation where accuracy matters

Key shift:

AI output is not inherently unreliable; it is unverified until you check it.

Step 1: Use AI to generate a draft or idea

Step 2: Review the output for clarity and missing details

Step 3: Use software to verify facts, numbers, or structure

Result:

You get speed from AI and accuracy from software.

This workflow exists because of a deeper design difference between the two systems.

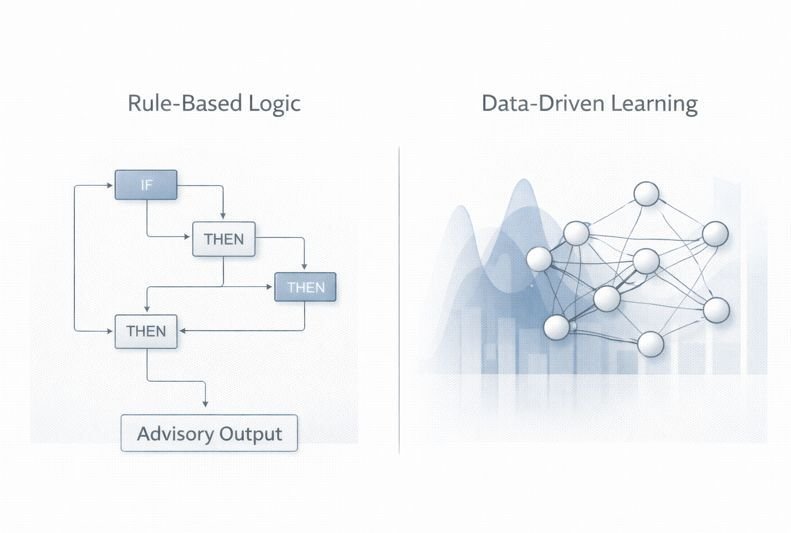

Core Design Philosophy: Rule-Based Logic vs Data-Driven Learning

Traditional software systems are built on explicit instructions written by developers.

AI tools, in contrast, are designed around data-driven learning.

This is the real difference:

Software follows rules.

AI responds based on patterns.

Think of traditional software like a calculator: if you press 2+2, the logic gates must return 4. AI is more like an intern who has read a million math books; they will likely say 4, but depending on how you ask, they might occasionally describe the concept of ‘four-ness’ instead of giving you the digit.

To see how these logic gates and neural patterns function under the hood, read our guide on what happens inside an AI tool.

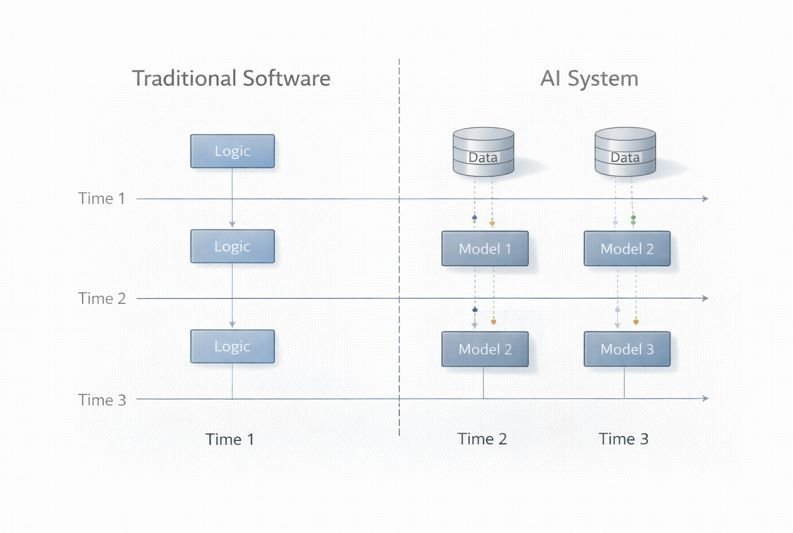

Why AI Gives Different Answers

Because the ‘intern’ predicts the most likely next word rather than following a math formula, and AI tools typically sample from those predictions instead of always picking the single top choice, the same input can produce different outputs. This is known as stochastic variation.

This means:

- the same input can produce different outputs

- some outputs may miss key details

Conclusion:

AI is predictably variable—it is probabilistic by design
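The difference between a formula and a prediction can be shown with a toy model. A minimal sketch, assuming a made-up set of three candidate answers with invented scores; real models score many thousands of tokens, and softmax-with-temperature sampling is a common decoding approach, not necessarily what any particular AI tool uses.

```python
import math
import random

def calculator_add(a: int, b: int) -> int:
    # Deterministic: the same inputs always give the same output.
    return a + b

def softmax(scores: list[float], temperature: float) -> list[float]:
    # Turn raw scores into probabilities; lower temperature sharpens the distribution.
    scaled = [s / temperature for s in scores]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_next_token(tokens: list[str], scores: list[float],
                      temperature: float, rng: random.Random) -> str:
    # Probabilistic: pick one candidate at random, weighted by its probability.
    probs = softmax(scores, temperature)
    return rng.choices(tokens, weights=probs, k=1)[0]

# Hypothetical candidate answers to "2 + 2 =" with invented scores.
tokens = ["4", "four", "the concept of four-ness"]
scores = [3.0, 1.5, 0.5]

rng = random.Random()  # unseeded, so sampled results can differ between runs
print([calculator_add(2, 2) for _ in range(5)])  # always [4, 4, 4, 4, 4]
print([sample_next_token(tokens, scores, 1.0, rng) for _ in range(5)])
```

Lowering the temperature concentrates probability on the top-scoring answer, which makes output more repeatable; raising it spreads probability across the alternatives and increases variation.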

Structured vs. Unstructured Data: The Logic Gap

To choose the right system, you must look at the data you are feeding it. Traditional software and AI handle information in fundamentally different ways.

- Traditional Software (Structured): Best for data that fits into fixed rows and columns. It requires a specific format (like a CSV or a database) to function. If one cell is missing, the calculation might fail.

- AI Tools (Unstructured): Designed to find patterns in “messy” data. It can analyze a 2,000-word interview, an image, or a voice recording without needing a pre-defined format.

Rule of Thumb: If your data is in a “box” (like an Excel sheet), use software. If your data is “loose” and needs sense-making (like an email thread), use AI.
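The "if one cell is missing, the calculation might fail" behavior is easy to reproduce. A minimal sketch using Python's standard `csv` module; the data and the `total_amount` function are hypothetical.

```python
import csv
import io

# Hypothetical CSV export with one missing "amount" cell.
data = """vendor,amount
Acme,120.50
Globex,
Initech,75.25
"""

def total_amount(csv_text: str) -> float:
    # Rule-based parsing: every row must contain a numeric amount.
    reader = csv.DictReader(io.StringIO(csv_text))
    return sum(float(row["amount"]) for row in reader)

try:
    print(total_amount(data))
except ValueError as err:
    # The rule is violated, so the calculation stops instead of guessing a value.
    print(f"Calculation failed: {err}")
```

Failing loudly is the point: rule-based software refuses to produce a number when its input breaks the expected format, which makes the problem visible instead of silent.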

Prompt Variability Test (Observed Behavior)

| Prompt Type | Output Quality | Missing Elements |
|---|---|---|
| “Summarize this article” | Generic | Key constraints missing |
| “Summarize in 100 words with 3 key points” | Structured | Minor detail gaps |
| “Summarize with limitations included” | High quality | Complete |

Insight:

AI output quality depends heavily on how specific the instructions are.

Ambiguity increases the probability of incomplete or misleading outputs.

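Try This Yourself (Quick Test)

Run the same prompt (for example, “Summarize this article”) three times on the same text, then compare which key points each run includes.

Example Result (Observed):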

Run 1 → Missed 2 key points

Run 2 → Included 1 but changed phrasing

Run 3 → Different structure, still incomplete

Conclusion:

Outputs appear correct but vary in completeness.

You will notice:

Some outputs sound correct—but are incomplete.

This is how real-world AI errors happen.

Key Difference in Decision Logic

• Traditional software → deterministic, rule-based decisions

• AI tools → probabilistic, pattern-based inference

As a result:

• Software reliability = identical output for identical input

• AI reliability = output that consistently meets quality requirements across runs and contexts

What this means in real use:

You cannot judge AI by checking if two outputs are identical.

Instead:

→ Check if the output is complete

→ Check if key details are missing

→ Verify important facts

AI is judged not on consistency but on acceptable accuracy.
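The "is anything missing?" check can be partly automated when you know which points a correct output must contain. A minimal sketch; the required points and the three runs are hypothetical, and `missing_key_points` is an illustrative name.

```python
def missing_key_points(output: str, key_points: list[str]) -> list[str]:
    # Case-insensitive substring match: a crude proxy for "is this point covered?"
    text = output.lower()
    return [p for p in key_points if p.lower() not in text]

# Hypothetical points a product summary must mention, and three hypothetical runs.
required = ["battery life", "price", "warranty"]
runs = [
    "The laptop offers strong performance at a fair price.",
    "Good price and long battery life.",
    "Battery life is long, price is fair, and the warranty is short.",
]

for i, run in enumerate(runs, start=1):
    missing = missing_key_points(run, required)
    status = "complete" if not missing else f"missing {missing}"
    print(f"Run {i}: {status}")
```

Substring matching misses paraphrases (a run that says "runtime" instead of "battery life" would be flagged), so this narrows down what a human needs to review rather than replacing the review.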

In real-world workflows, AI tools and traditional software often operate together rather than independently.

For example:

An AI tool may generate a draft report based on unstructured information.

Traditional software can then organize the data, apply calculations, and enforce formatting rules.

Case Study: Where AI Fails Without Validation

Task: Summarize a long technical article.

- The Problem: AI optimized for readability and missed a key technical limitation mentioned in the original text.

- The Failure: In a separate test, I used AI to reconcile a bank statement. While it identified vendors correctly, it hallucinated a total that was $42.10 off because it predicted the next likely number instead of performing literal addition.

- The Lesson: AI outputs often look correct while being mathematically wrong. They must be evaluated—not trusted blindly.
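The fix for the bank-statement failure is to let software do the addition. A minimal sketch using Python's `decimal` module; the transactions and the claimed total are made up for illustration and are not data from the test described above.

```python
from decimal import Decimal

# Hypothetical statement lines; amounts kept as strings so no float rounding occurs.
transactions = ["1250.00", "-89.99", "-342.50", "-15.75", "410.20"]

def reconcile(amounts: list[str], claimed_total: str) -> tuple[Decimal, Decimal]:
    """Return the computed total and how far a claimed total is from it."""
    computed = sum((Decimal(a) for a in amounts), Decimal("0"))
    return computed, Decimal(claimed_total) - computed

# A hypothetical total copied from an AI-generated summary.
computed, diff = reconcile(transactions, "1230.41")
if diff != 0:
    print(f"Mismatch: computed {computed}, claimed total is off by {diff}")
```

`Decimal` performs literal, exact addition of the stated amounts, so any difference from the claimed total points to an error in the claim rather than in the arithmetic.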

Failure Mode Analysis

| Task | Failure Type | Cause | Impact |
|---|---|---|---|
| Article summary | Missing limitation | Optimization for brevity | Medium |
| Bank reconciliation | Numerical hallucination | Pattern prediction instead of calculation | High |
| Product comparison | Omitted constraint | Incomplete inference | High |

Key Insight:

AI errors are not random—they follow predictable failure patterns.

“Unverified AI output is a liability. Verified AI output is an asset.”

This isn’t an isolated bug; it is an inherent property of large language models. They are built for semantic coherence (making sense), not logical consistency (being correct). In the bank reconciliation case, the AI produced a natural-sounding number instead of a calculated one.

The “Logic Test” Result (Personal Experiment)

To prove the difference between these two systems, I ran a simple test involving 4 product descriptions with varying battery life data.

- Traditional Software (Excel): Every time I ran the =AVERAGE formula, the result was mathematically exact, with no variation between runs.

- AI Tool (LLM): The AI produced a polished, professional-looking comparison table. However, it misstated the average battery life by 1.5 hours because it predicted the next likely number instead of performing a literal calculation.

The Analyst’s Insight: AI gives you the presentation; software gives you the truth. In a professional workflow, use AI to synthesize the narrative, but always use software to verify the data points.
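The same check can be scripted outside Excel. A minimal sketch using Python's `statistics.mean`; the four battery-life figures and the claimed average are hypothetical, and `check_claimed_average` is an illustrative name.

```python
from statistics import mean

# Hypothetical battery-life figures (hours) for four products.
battery_hours = [10.5, 8.0, 12.0, 9.5]

def check_claimed_average(values: list[float], claimed: float,
                          tolerance: float = 0.01) -> bool:
    # Deterministic check: the same values always produce the same mean.
    return abs(mean(values) - claimed) <= tolerance

actual = mean(battery_hours)  # (10.5 + 8.0 + 12.0 + 9.5) / 4
print(actual)
# A hypothetical AI-stated average that is 1.5 hours too high fails the check.
print(check_claimed_average(battery_hours, 11.5))
```

The small tolerance allows for rounding in the narrative text while still catching a misstated figure.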

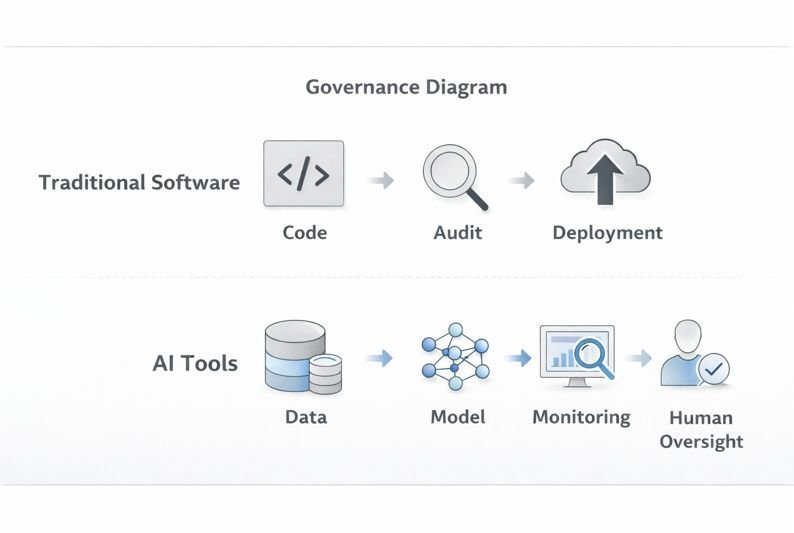

The Verification Loop (Professional AI Workflow)

This workflow is required because AI systems optimize for coherence, not correctness.

Implementing a structured AI workflow ensures that speed does not come at the cost of accuracy.

Rule:

If the cost of being wrong is high → validation is mandatory.

Understanding the difference between these systems changes how you work. You must treat AI as a draft generator, not a final answer.

- Generate: Use AI to create a draft or synthesize unstructured data.

- Audit: Manually verify facts, numbers, and key limitations.

- Validate: Use traditional software (Excel, Tally, or code compilers) to verify calculations or logic.

- Finalize: Apply formatting and human tone in a document editor.
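The four steps can be sketched as a small pipeline. A minimal sketch, assuming a hypothetical `generate()` stand-in for the AI call (no real model API is used) and a single revenue figure to validate; the audit step here is an automated keyword check, which only approximates the manual review the list describes.

```python
from dataclasses import dataclass, field

@dataclass
class Draft:
    text: str
    figures: dict[str, float]  # numbers the draft claims, keyed by label
    issues: list[str] = field(default_factory=list)

def generate() -> Draft:
    # Hypothetical stand-in for an AI call; a real workflow would call a model here.
    return Draft(text="Q3 revenue grew strongly.", figures={"q3_total": 1500.0})

def audit(draft: Draft, required_terms: list[str]) -> Draft:
    # Audit: flag required details the draft never mentions.
    for term in required_terms:
        if term.lower() not in draft.text.lower():
            draft.issues.append(f"missing detail: {term}")
    return draft

def validate(draft: Draft, source_values: list[float]) -> Draft:
    # Validate: recompute the figure with software instead of trusting the draft.
    actual = sum(source_values)
    claimed = draft.figures["q3_total"]
    if abs(claimed - actual) > 0.005:
        draft.issues.append(f"q3_total should be {actual}, draft says {claimed}")
    return draft

def finalize(draft: Draft) -> str:
    # Finalize only once every flagged issue has been resolved.
    if draft.issues:
        raise ValueError("Not ready: " + "; ".join(draft.issues))
    return draft.text.strip()

d = validate(audit(generate(), ["limitations"]), [400.0, 550.0, 500.0])
print(d.issues)
```

Because `finalize` refuses to run while issues remain, an unverified draft cannot slip through to the final document.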

Limitations and Risks (What Actually Goes Wrong)

AI errors are not always obvious.

AI tools often produce outputs that sound correct but contain missing or incorrect details.

This makes their errors harder to detect than traditional software errors.

AI Risk Threshold Model

| Risk Level | Example Task | Recommended Approach |
|---|---|---|
| Low | Blog ideas | AI only |
| Medium | Reports, summaries | AI + human review |
| High | Finance, calculations | AI + software validation |

Principle:

As risk increases, reliance on AI alone must decrease.
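If you route tasks in a script or internal tool, the threshold model maps directly onto a lookup table. A minimal sketch; the `Risk` enum and `recommended_approach` function are hypothetical names, and deciding which risk level a task belongs to is still a human judgment.

```python
from enum import Enum

class Risk(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"

# Mapping copied from the AI Risk Threshold Model table.
APPROACH = {
    Risk.LOW: "AI only",
    Risk.MEDIUM: "AI + human review",
    Risk.HIGH: "AI + software validation",
}

def recommended_approach(risk: Risk) -> str:
    # Higher risk adds a verification layer on top of the AI output.
    return APPROACH[risk]

print(recommended_approach(Risk.HIGH))  # AI + software validation
```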

When NOT to Trust AI

Do NOT rely on AI directly when:

• You are working with numbers or calculations

• The output affects decisions (business, money, etc.)

• Accuracy must be exact

In these cases, AI should assist—not decide.

Where This Advice Fails

AI-only can still work when:

• risk is low

• speed matters more than accuracy

• content is exploratory (ideas, drafts)

However:

As risk increases, AI-only becomes unreliable.

System Comparison (Practical Difference)

| Dimension | Traditional Software Systems | AI Tools |
|---|---|---|
| Data handling | Explicit, rule-based | Implicit, statistical |
| Output consistency | Fully deterministic | Probabilistic |
| Type of errors | Logical or implementation faults | Statistical deviation or uncertainty |
| How updates happen | Manual code modification | Data-driven retraining or fine-tuning |
| Interpretability | High (code-level traceability) | Variable, often limited |
| Governance focus | Stability and compliance | Oversight, monitoring, accountability |

Key difference:

Software results can be traced and verified step-by-step.

AI outputs cannot always be fully explained.

Practical Interpretation

→ Use software when correctness is required

→ Use AI when interpretation is needed

→ Use both when accuracy matters

In the failure cases above, AI did not malfunction; it behaved exactly as designed.

Which One Should You Use?

How to Choose (Real-World Thinking):

Use AI for synthesis:

→ Turn 10 customer interviews into 3 key insights

→ Summarize long content into key points

Use software for calculation:

→ Verify totals, numbers, and structured data

→ Ensure accuracy in financial or technical outputs

This is the real distinction:

AI expands possibilities.

Software enforces correctness.

When NOT to Use AI Tools

- When exact calculations are required

- When decisions involve risk or compliance

- When outputs must be fully traceable

In these cases, traditional software is more reliable.

Technical constraint:

Traditional software produces results through traceable steps.

AI generates outputs through probabilistic inference.

If your task requires auditability or legal traceability, software is required.
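What "traceable steps" means in practice can be shown with a calculation that records its own audit trail. A minimal sketch; `Step`, `traced_total`, and `replay` are hypothetical names, and a real system would write the trail to durable storage rather than keep it in memory.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Step:
    operation: str
    inputs: tuple[float, ...]
    result: float

def traced_total(amounts: list[float]) -> tuple[float, list[Step]]:
    # Record every intermediate step so the result can be audited later.
    trail: list[Step] = []
    running = 0.0
    for amount in amounts:
        new_running = running + amount
        trail.append(Step("add", (running, amount), new_running))
        running = new_running
    return running, trail

def replay(trail: list[Step]) -> bool:
    # An auditor re-runs each recorded step and confirms it matches.
    return all(sum(step.inputs) == step.result for step in trail)

total, trail = traced_total([100.0, 250.0, 49.5])
for step in trail:
    print(step)
print(total, replay(trail))
```

An AI-generated total offers no equivalent trail to replay, which is why tasks that need auditability belong to software.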

Real consequence example:

I used AI to summarize a product comparison article. It removed a key limitation about battery life.

If published, this would mislead readers and reduce trust.

This is not a small error: it directly lowers the quality of the decisions readers make.

Edge Case (Important):

If a task is partially structured:

Example:

→ Writing a financial report

Do NOT:

Use AI for calculations

DO:

Use AI for explanation

Use Excel (or similar software) for numbers

Rule:

If even 30% of the task requires exact accuracy → split the workflow
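The 30% rule can be applied by listing a task's parts and flagging which ones need exact accuracy. A minimal sketch, assuming that "30% of the task" is measured as the share of subtasks (not time or effort); `Subtask`, `should_split`, and `route` are hypothetical names.

```python
from dataclasses import dataclass

@dataclass
class Subtask:
    name: str
    needs_exact_accuracy: bool

SPLIT_THRESHOLD = 0.30  # "If even 30% of the task requires exact accuracy"

def should_split(subtasks: list[Subtask]) -> bool:
    if not subtasks:
        return False
    exact = sum(1 for t in subtasks if t.needs_exact_accuracy)
    return exact / len(subtasks) >= SPLIT_THRESHOLD

def route(subtasks: list[Subtask]) -> dict[str, str]:
    # Split workflow: exact-accuracy parts go to software, the rest to AI.
    return {t.name: ("software" if t.needs_exact_accuracy else "AI") for t in subtasks}

# Hypothetical breakdown of a financial report.
report = [
    Subtask("executive summary", False),
    Subtask("market commentary", False),
    Subtask("revenue table", True),
    Subtask("expense totals", True),
]
if should_split(report):
    print(route(report))
```

Here two of four parts need exact figures, so the report is split: AI writes the explanation, and software (such as Excel) produces the numbers.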

Decision Matrix

| Risk Level | Volume | Recommended Workflow |
|---|---|---|
| High Risk | Low Volume | Traditional Software (Manual accuracy) |
| Low Risk | High Volume | AI Tools (Speed priority) |
| High Risk | High Volume | Combined Workflow (AI generates → Human reviews → Software validates) |

Quick Decision Checklist: AI or Software?

Use this checklist before starting any task to minimize risk and maximize efficiency.

| Question | Use AI? | Use Software? |
|---|---|---|
| Does the task require 100% mathematical accuracy? | ❌ | ✅ |
| Is the input unstructured text or a creative brief? | ✅ | ❌ |
| Is the output a legally binding or financial record? | ❌ (Draft only) | ✅ (Verify) |
| Are you looking for “out of the box” ideas or drafts? | ✅ | ❌ |
| Is the cost of a “silent error” high? | ⚠️ (Audit) | ✅ |
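The checklist can also be applied mechanically, one question at a time. A minimal sketch; the function and its parameter names are hypothetical, and each branch paraphrases one or two rows of the table, so several can apply to the same task.

```python
def checklist_recommendation(
    needs_exact_math: bool,
    unstructured_or_creative: bool,
    legal_or_financial_record: bool,
    wants_ideas_or_drafts: bool,
    silent_error_costly: bool,
) -> list[str]:
    advice: list[str] = []
    if needs_exact_math:
        advice.append("Use software for the calculations; do not rely on AI.")
    if legal_or_financial_record:
        advice.append("Use AI for drafting only; verify the record with software.")
    if silent_error_costly:
        advice.append("If AI is used, audit its output and validate with software.")
    if unstructured_or_creative or wants_ideas_or_drafts:
        advice.append("AI is a good fit for this part of the task.")
    return advice

# Hypothetical task: a financial summary written from messy meeting notes.
for line in checklist_recommendation(True, True, True, False, True):
    print("-", line)
```

When both software rows and AI rows fire for one task, that is the partially structured case where the workflow should be split.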

Conclusion

The difference between AI tools and traditional software is not just technical—it is practical.

- Software guarantees repeatable, rule-based results

- AI generates likelihood

The ‘Beginner’s Trap’ is treating AI as a replacement for software. The professional standard is treating AI as the engine and software as the brakes. You need the engine to move fast, but without the brakes of traditional software validation, you will eventually crash.

Final rule:

Speed without validation creates risk.

Validation with AI creates leverage.

That’s the difference between using AI casually and using it professionally.

Join the Discussion

AI logic is predictably variable, and every professional user has a unique story of when the “intern” failed or succeeded.

Have you ever faced a silent AI hallucination in your data? How did you identify it, and what software did you use for validation?

Share your thoughts and workflow tips in the comments below—let’s build a more reliable AI usage guide together.

About the Author

This article is based on repeated testing of AI tools across:

• Content writing workflows

• Data validation scenarios

• Prompt structure experiments

All examples are derived from direct usage and verification, not theoretical summaries.

References and Further Reading

- OECD (2019). Artificial Intelligence in Society. https://www.oecd.org/going-digital/ai/
  Provides an international policy perspective on AI systems, including societal impact, governance principles, and accountability considerations.

- NIST (2023). Artificial Intelligence Risk Management Framework (AI RMF 1.0). https://www.nist.gov/itl/ai-risk-management-framework
  Outlines a structured framework for identifying, assessing, and managing risks associated with AI systems across their lifecycle.

- ISO/IEC 22989:2022. Artificial Intelligence: Concepts and Terminology. https://www.iso.org/standard/74296.html
  Defines standardized concepts and terminology for artificial intelligence, supporting consistent technical and governance discussions.