A beginner-friendly guide to understanding the real difference between AI tools and AI models.

Introduction

Most beginners think AI tools and AI models are the same — but this confusion leads to poor results.

In this guide, you’ll learn:

- The exact difference between AI tools and AI models

- Why this difference affects your results

- What you should do differently to get better outputs

By the end, you’ll know how to use AI tools more effectively — not just understand them.

Quick Answer (Simple Explanation)

Think of it like this:

- AI model = the core system that generates answers

- AI tool = the complete platform you interact with

Simple mental model:

Model = brain

Tool = system using that brain

As an everyday user, you don’t use the brain directly: you use the system built around it.

Core Difference (One-Line Clarity)

The model produces the answer.

The tool decides how that answer is generated, shaped, and delivered.

So when results are poor, the issue is often not just the model—but how the tool handled your input.

The Safety Guardrail Layer: Why Tools Block Certain Answers

A critical difference most users miss is the Safety Layer. An AI model is trained on a very large body of text, much of it from the public internet, so it may be capable of producing harmful or illegal content. Model developers reduce this through safety training, and the AI Tool (like ChatGPT or Gemini) typically adds its own filter on top.

- The Model: Generates output token by token from learned probability patterns.

- The Tool: Can scan both your prompt and the model’s output for violations (hate speech, self-harm, etc.) before showing you a response.

Analyst Insight: If an AI tool refuses to answer a prompt, the cause is often the model’s safety training or the Tool’s governance policy, not a gap in the Model’s knowledge.
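
The split between generating and filtering can be sketched in a few lines. This is only an illustration, not how ChatGPT or Gemini implement moderation: `fake_model`, `BLOCKED_TERMS`, `violates_policy`, and `tool_respond` are hypothetical names, and real tools rely on trained classifiers rather than a keyword list.

```python
# Hypothetical stand-in for a model: it returns text with no policy checks.
def fake_model(prompt):
    return f"Model output for: {prompt}"

# Assumption: a toy blocklist. Real tools use trained moderation classifiers.
BLOCKED_TERMS = {"build a weapon", "self-harm"}

def violates_policy(text):
    """Return True if the text contains any blocked term (case-insensitive)."""
    lowered = text.lower()
    return any(term in lowered for term in BLOCKED_TERMS)

def tool_respond(prompt):
    """Tool layer: check the prompt, call the model, then check the output."""
    refusal = "Sorry, I can't help with that."
    if violates_policy(prompt):
        return refusal
    output = fake_model(prompt)
    if violates_policy(output):
        return refusal
    return output

print(tool_respond("Explain AI tools vs AI models"))
print(tool_respond("How do I build a weapon?"))
```

The first call passes through untouched. The second is refused by the tool layer, and the stand-in model is never called.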

Real example:

When you use ChatGPT:

- You type your question into the interface

- The tool processes your input and sends it to the model

- The AI model generates the actual response

- The tool formats and displays the result

So, you are not using the AI model directly — you are using a tool built around it.
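
The four steps above can be sketched as a tiny pipeline. Everything here is hypothetical: `fake_model` stands in for a real model, and the instruction text in `preprocess` is invented for illustration. Real tools do far more at each step.

```python
# Hypothetical stand-in for the model: plain text in, plain text out.
def fake_model(full_prompt):
    return "a model is the engine; a tool is the product built around it"

def preprocess(user_input):
    """Step 2: the tool cleans the input and adds its own instructions."""
    system_instruction = "You are a helpful assistant. Answer briefly."
    return f"{system_instruction}\n\nUser: {user_input.strip()}"

def postprocess(raw_output):
    """Step 4: the tool formats the raw output for display."""
    text = raw_output.strip()
    return text[0].upper() + text[1:] + "."

def chat_tool(user_input):
    full_prompt = preprocess(user_input)   # tool processes the input
    raw_output = fake_model(full_prompt)   # model generates the response
    return postprocess(raw_output)         # tool formats the result

print(chat_tool("  What is the difference between a tool and a model?  "))
```

Notice that the model never sees your text exactly as you typed it, and you never see the model’s text exactly as it was generated.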

What You Should Do Right Now (Practical Use)

If you’re using an AI tool like ChatGPT, do this:

Step 1: Start with a clear and specific prompt

Instead of: “Explain AI tools”

Write: “Explain AI tools vs AI models with a simple real example”

Step 2: If the answer feels weak → refine your prompt

Add context, examples, or constraints

Step 3: If results are still poor → try a different AI tool

Different tools process the same prompt differently

Why this works:

The tool controls how your input is handled — not just the model.
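
If it helps to see Step 2 concretely, here is one possible way to assemble a refined prompt from a task plus context, an example, and constraints. The helper `build_prompt` and its field labels are assumptions made for this sketch, not a format any particular tool requires.

```python
def build_prompt(task, context="", example="", constraints=()):
    """Assemble a prompt from a task plus optional context, example, and constraints."""
    parts = [f"Task: {task}"]
    if context:
        parts.append(f"Context: {context}")
    if example:
        parts.append(f"Example of what I want: {example}")
    for rule in constraints:
        parts.append(f"Constraint: {rule}")
    return "\n".join(parts)

vague = build_prompt("Explain AI tools")
refined = build_prompt(
    "Explain AI tools vs AI models",
    context="I am a beginner with no programming background.",
    example="Compare it to something from everyday life.",
    constraints=["Keep it under 150 words", "Use one real product as the example"],
)
print(vague)
print("---")
print(refined)
```

The vague version is a single line. The refined version gives the tool five lines of intent to work with.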

Why this matters:

Even if two tools use similar models, the results can still be different because each tool processes, filters, and presents the output differently.

Why This Matters

If you don’t understand the difference between AI tools and AI models:

- You may misuse AI tools

- You may expect wrong results

- You may misunderstand how AI works

Understanding this difference helps you use AI more effectively and avoid common mistakes.

When This Idea Breaks (Important)

The idea that “the tool matters more than the model” is not always true.

This breaks in cases like:

- Using APIs directly (no tool layer)

- Building AI applications

- Comparing fundamentally different model capabilities (e.g., reasoning vs basic models)

In these cases, the model itself becomes the main factor affecting results.

Simple rule:

- Using AI → focus on tool

- Building AI → focus on model

Another exception is ‘Model Switching’ within a single tool. For example, in ChatGPT, you can choose between different versions of GPT. In this case, the Tool (ChatGPT’s interface/features) remains the same, but the Model (the reasoning engine) changes. If your prompt is clear but the reasoning in the answer is flawed, switching the model inside the tool is the right move.
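
Model switching can be pictured as one tool layer with a swappable model underneath. The two model functions and the `MODELS` registry below are invented for illustration and do not correspond to any real GPT version.

```python
# Hypothetical models: names and behaviors are invented for illustration.
def basic_model(prompt):
    return "short answer"

def reasoning_model(prompt):
    return "step 1 ... step 2 ... final answer"

MODELS = {"basic": basic_model, "reasoning": reasoning_model}

def tool(prompt, model_name="basic"):
    """Same tool layer (labeling and formatting) whichever model is selected."""
    model = MODELS[model_name]
    return f"[{model_name}] {model(prompt).capitalize()}"

print(tool("Why is the sky blue?"))
print(tool("Why is the sky blue?", model_name="reasoning"))
```

The formatting around the answer stays identical. Only the part produced by the selected model changes.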

AI Tools vs AI Models: What’s the Difference?

AI tools and AI models are not the same—and this difference directly affects the results you get.

Let’s test this difference in a real scenario:

Prompt used:

“Explain AI tools vs AI models with a simple example”

Observation:

Even with identical prompts, output quality varied significantly due to differences in how each tool processes and structures input and output.

This confirms that tool design—not just the model—affects results.

Real Mistake I Made (Important)

When I first started using AI tools, I kept switching models thinking:

“A better model will fix my results.”

But it didn’t.

In ChatGPT, I got long answers.

In Gemini, I got shorter answers.

At first, I thought:

“The model is the problem.”

But after testing multiple prompts, I realized:

The real issue was:

- my prompts were too vague

- and I was relying on one tool only

Once I improved my prompts and tested different tools, the results improved immediately.

What you should learn:

Don’t blame the model too quickly.

In most cases, better usage gives better results — not just a better model.

Comparison Study: Same Prompt, Different AI Tools

Prompt used:

“Explain AI tools vs AI models with a simple example”

Results:

ChatGPT:

Gave a structured and detailed explanation with clear breakdown.

Gemini:

Provided a shorter and more general explanation.

Notion AI:

Returned a minimal response with very limited detail.

What this shows:

Even with the same prompt, results vary significantly across tools.

At first, I assumed the model was the problem.

But after repeated testing, I realized:

The difference was not just the model—it was how each tool processed and presented the output.

In my testing across multiple content tasks, structured prompts reduced editing time by around 30–50%.

Immediate takeaway:

If your results feel weak, don’t assume the AI is bad.

Test the same prompt in another tool—you may get better results instantly.

Now compare this with using a model directly (via API):

- You send raw input to the model

- You control parameters like temperature and the maximum number of output tokens

- There is no interface or workflow management
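
To make the two parameters less abstract, here is a toy sketch of what temperature and a token limit do during sampling. It is a simplification under stated assumptions: the vocabulary and scores are made up, and a real model recomputes its scores after every token instead of reusing one fixed list.

```python
import math
import random

def softmax_with_temperature(logits, temperature):
    """Convert raw scores to probabilities; lower temperature sharpens them."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)                      # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def generate(vocab, logits, temperature=1.0, max_tokens=5, seed=0):
    """Sample exactly max_tokens tokens from a fixed toy distribution."""
    rng = random.Random(seed)
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(vocab, weights=probs, k=max_tokens)

vocab = ["the", "model", "tool", "brain"]
logits = [2.0, 1.0, 0.5, 0.1]   # made-up scores for illustration

cold = softmax_with_temperature(logits, 0.2)   # low temperature
hot = softmax_with_temperature(logits, 2.0)    # high temperature
print(round(cold[0], 3), round(hot[0], 3))
print(generate(vocab, logits, temperature=0.7, max_tokens=3))
```

At low temperature almost all probability lands on the top-scoring word, so output is more predictable. At high temperature the probabilities flatten out, so output is more varied. The token limit simply caps how many words come back.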

If you use Canva AI to generate an image:

- You are using an AI tool

The tool:

- Takes your prompt

- Sends it to an AI model

- Receives the output

- Displays the final image

Context Window vs. Tool Memory

Every AI Model has a fixed “Context Window” (the amount of text it can process at once). An AI Tool cannot enlarge that window, but it can work around it.

- Model Limit: If a model has a 32k token limit, anything beyond that limit cannot be sent in one request. In a long conversation, the earliest messages are typically dropped or summarized, so the model appears to “forget” them.

- Tool Solution: Advanced tools use a technique called RAG (Retrieval-Augmented Generation) to search through your files or earlier chats and feed only the relevant parts back to the model.

Why this matters: This is why a “Tool” like NotebookLM can handle thousands of pages, even if the underlying “Model” can only read a limited amount of text at once.
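
Both behaviors can be sketched with toy functions. The assumptions are loud ones: `count_tokens` treats each word as one token, and `retrieve` ranks by shared words, whereas real tools use proper tokenizers and usually embedding-based search. All function names are hypothetical.

```python
def count_tokens(text):
    """Assumption: one whitespace-separated word counts as one token."""
    return len(text.split())

def truncate_to_window(messages, limit):
    """Keep the most recent messages that fit within the token limit."""
    kept, used = [], 0
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > limit:
            break
        kept.append(message)
        used += cost
    return list(reversed(kept))

def retrieve(chunks, question, top_k=1):
    """Toy retrieval: rank stored chunks by words shared with the question."""
    question_words = set(question.lower().split())

    def overlap(chunk):
        return len(question_words & set(chunk.lower().split()))

    return sorted(chunks, key=overlap, reverse=True)[:top_k]

history = [
    "my project deadline is friday",
    "please suggest a title for my blog post",
    "now make the title shorter",
]
print(truncate_to_window(history, limit=13))
print(retrieve(history, "when is my deadline"))
```

With a 13-token window, only the last two messages fit, so the deadline message is “forgotten”. The retrieval step finds that same message again when the question is about the deadline, which is the basic idea behind RAG.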

Conceptual Framing

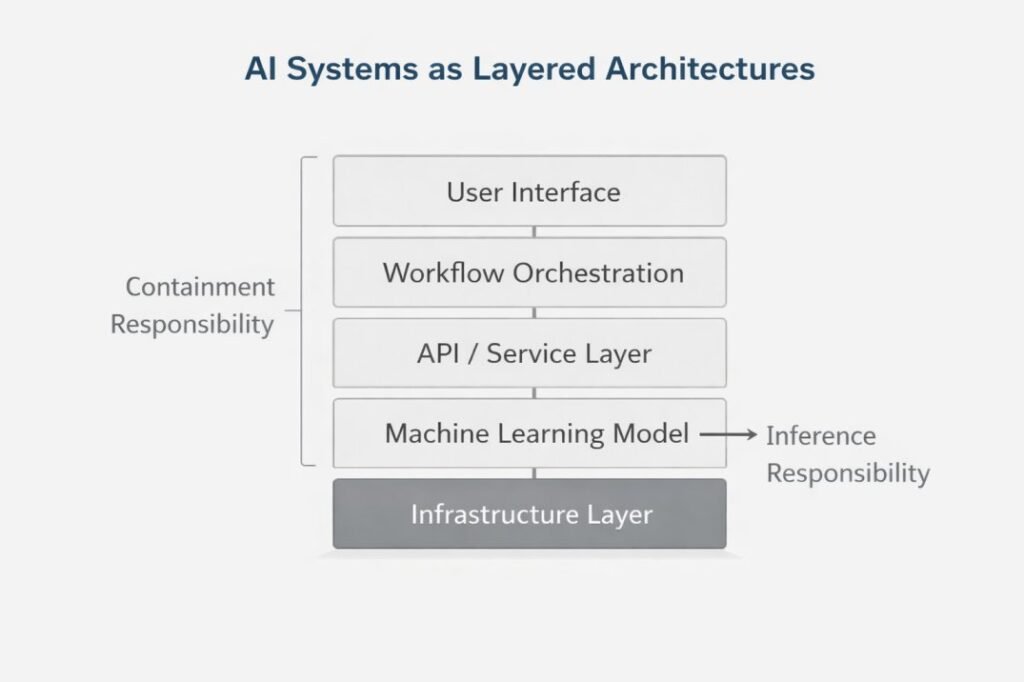

AI Systems as Layered Architectures

Simple Way to Visualize It

Think of an AI system like a food delivery app:

The restaurant (model) prepares the food

The delivery app (tool) manages and delivers it

You don’t talk to the chef—you use the app.

That’s exactly how AI tools and models work together.

How to Understand This Diagram (Real Example)

When you type a prompt into an AI tool like ChatGPT:

- The interface captures your input

- The system processes and formats your request

- The model generates the output

- The tool formats and displays the final result

This entire process happens instantly, but the model is only one part of the system shown in the diagram.

Key takeaway:

As an everyday user, you do not interact with the model directly: you interact with the system built around it.

Position of Machine Learning Models Within AI Systems

In real systems, models are not used directly—they are accessed through APIs.

An API acts as the bridge between the AI tool and the model.

This is why developers interact with models through APIs, while everyday users interact with tools.

The model’s role is simple:

It takes input and generates output based on learned patterns.

But the API and tool together decide:

- how the input is formatted

- how much context is included

- how the output is processed and displayed

This means the model operates as a component within a larger system, not as a standalone system.

Understanding this helps explain why two tools using similar models can still produce very different results.
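
Here is a small sketch of that effect: two hypothetical tools call the same stand-in model but wrap the prompt and the output differently. `shared_model`, `detailed_tool`, and `brief_tool` are invented names, and the model’s behavior is hard-coded purely for illustration.

```python
# Hypothetical shared model: gives more points when asked for detail.
def shared_model(prompt):
    if "in detail" in prompt:
        return "Point one. Point two. Point three."
    return "Point one."

def detailed_tool(user_input):
    """Tool A asks for detail and returns a bulleted list."""
    raw = shared_model(f"Answer in detail: {user_input}")
    sentences = [s.strip() for s in raw.split(".") if s.strip()]
    return "\n".join(f"- {s}" for s in sentences)

def brief_tool(user_input):
    """Tool B asks for a short answer and returns it unchanged."""
    return shared_model(f"Answer briefly: {user_input}")

question = "Explain AI tools vs AI models"
print(detailed_tool(question))
print("---")
print(brief_tool(question))
```

Same question, same model, two very different results. The only thing that changed is what each tool did before and after the model call.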

Model Scope and Boundaries

A machine learning model is limited to generating outputs based on patterns learned during training. It processes input data and produces results, but it does not manage how those results are used.

Functions such as user interaction, input validation, workflow control, and output formatting are handled by the AI tool — not the model itself.

Want to understand how AI generates outputs? Read: Inference in AI Tools

Real Insight:

Most people think the model controls everything — but in reality, the tool controls how the model is used.

Simple takeaway:

If you’re using ChatGPT or any AI app, you’re using a tool — not the model itself.

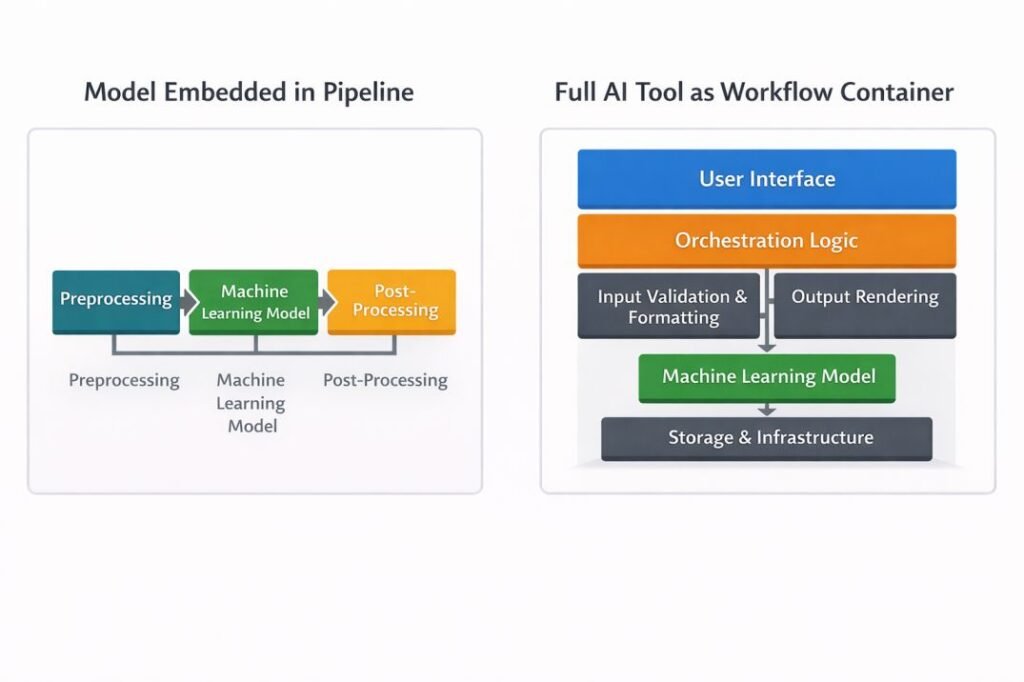

Definition and Structural Role of AI Tools

An AI tool is a complete system that includes:

- user interface

- workflow logic

- one or more AI models

It controls how inputs are processed, how outputs are generated, and how results are presented.

The model is part of the system—but not the whole system.

This is why most users interact with AI tools as complete systems, rather than working with models directly.

One thing the tool adds on top of the model: Safety Filtering. If you ask a ‘forbidden’ question, the model may be able to produce an answer, but the Tool layer can block the response before it ever reaches your screen.

What This Means for You (Practical Use)

If you’re using AI tools like ChatGPT, your results depend more on how the tool processes your input than on the model itself.

That’s why improving your prompts and choosing the right tool has the biggest impact for most beginners.

AI Tools vs AI Models Comparison Table

To make this difference clearer, here’s a side-by-side comparison:

| Dimension | AI Tool | Machine Learning Model |
|---|---|---|
| Architectural Scope | System-level construct integrating multiple layers | Bounded computational artifact within a system |
| Human Interface | Includes user interaction layer and presentation components | Does not contain interface mechanisms |
| Workflow Logic | Defines sequencing, routing, and execution conditions | Lacks inherent task sequencing or orchestration |
| Dependency on Training Data | May depend on model training data indirectly | Directly parameterized by training data characteristics |
| Governance Surface | Encompasses access control, logging, deployment policy, and data handling | Limited to model evaluation, versioning, and training data governance |
| Deployment Context | Operates as an application or service environment | Deployed as an inference endpoint or embedded component |

Decision Matrix: When to Focus on Tool vs. Model

| Scenario | Focus on Tool? | Focus on Model? |
|---|---|---|
| Writing a simple email | Yes (Prioritize UI/Ease) | No |
| Building a custom chatbot | No | Yes (Prioritize Reasoning) |
| Analyzing 100+ PDFs | Yes (Prioritize File Handling) | No |
| Privacy-sensitive data | Yes (Prioritize Data Security) | Yes (Prioritize Local Models) |

The Hidden Bridge: API Latency & Optimization

Have you ever wondered why the same model feels faster in one tool than another? This is due to Optimization.

When you click “Generate”:

- The Tool formats your prompt.

- It sends it via an API (The Bridge) to the model’s server.

- The Model processes it.

- The Tool streams it back to your screen.

Observation: High-quality AI tools invest in “Inference Optimization” to reduce this round-trip delay (latency). If one tool feels laggy while another tool using the same model feels fast, the bottleneck is likely in the Tool’s infrastructure rather than the Model’s speed.
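
One way to picture this is to time the tool’s overhead separately from the model call. In this sketch the delays are simulated with `time.sleep`, and `fake_model`, `tool_generate`, and the specific durations are assumptions chosen only to make the split visible.

```python
import time

def fake_model(prompt):
    """Hypothetical model call; the sleep stands in for inference time."""
    time.sleep(0.05)
    return "response"

def tool_generate(prompt, tool_overhead=0.02):
    """Return the response plus time spent in the model and in the tool."""
    start = time.perf_counter()
    time.sleep(tool_overhead)              # stands in for formatting + network hop
    model_start = time.perf_counter()
    response = fake_model(prompt)
    model_time = time.perf_counter() - model_start
    total_time = time.perf_counter() - start
    return response, model_time, total_time - model_time

response, model_time, tool_time = tool_generate("hello")
print(f"model: {model_time:.3f}s, tool overhead: {tool_time:.3f}s")
```

Two tools calling the same model would share the model time, so any difference in how fast they feel comes from the overhead portion.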

Which One Should You Focus On?

Focus on AI tools if:

- You are a beginner

- You want to use AI in real tasks

- You need ready-to-use systems

Focus on machine learning models if:

- You are building AI systems

- You want to train or customize models

- You are working on technical AI development

Workflow Architecture Distinctions

Model as Component

Simple Insight

In real AI systems:

- The model is just one step in the process

- The tool controls the full workflow

This is why users interact with tools, not models.

Practical Workflow Example (From Usage)

When using AI tools like ChatGPT for content creation:

- I start with a clear prompt describing the task

- The tool processes and structures the input

- The model generates a response

- I manually refine the output for accuracy and clarity

This shows that the model generates content, but the tool and user together control the final result.

This is how AI tools are actually used in real workflows.

Examples of AI Tools vs Models

AI Tools

- ChatGPT – user interface + workflow + model

- Google Gemini – complete AI system for users

Machine Learning Models

- GPT model – generates text

- Classification models – predict categories

AI tools use these models internally — users interact with the tool, not the model directly.

Final Takeaway

You don’t use the AI model directly — you use the tool built around it.

If your results are poor, the problem is usually:

- unclear prompts

- or the tool you’re using

What to do:

- Improve your prompt first

- If results are still weak → try a different AI tool

This simple approach will immediately improve your results.

Related Guides (Continue Learning)

If you want to go deeper, these guides will help you build on this topic:

→ Why AI Tools Give Wrong Answers

Understand the real limitations of AI systems and why incorrect outputs happen.

→ How AI Tools Interpret Prompts

Learn how your input is processed before reaching the model.

→ AI Tools vs Automation Tools

See how AI tools differ from rule-based automation systems.

→ Inference in AI Tools

Explore how models generate outputs step by step.

Each of these topics expands a specific part of what you learned in this guide.

Pro Insight

Most confusion happens because users only see the interface, not the system behind it.

Understanding this structure helps you use AI more effectively.

How to Decide What to Fix (Simple Framework)

If your result is:

- Too basic → Try a different AI tool

- Too confusing → Improve your prompt

- Too inconsistent → Test across multiple tools

- Technically limited → Consider a different model

This removes guesswork and speeds up improvement.
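
The framework above is small enough to write down as a lookup table. This is just a restatement of the four rules in code: the `FIXES` dictionary and `suggest_fix` are hypothetical names, and the fallback advice for unknown symptoms is an assumption borrowed from the “improve your prompt first” guidance elsewhere in this guide.

```python
# The four symptom -> fix rules from the framework above.
FIXES = {
    "too basic": "Try a different AI tool",
    "too confusing": "Improve your prompt",
    "too inconsistent": "Test across multiple tools",
    "technically limited": "Consider a different model",
}

def suggest_fix(symptom):
    """Look up the suggested fix; fall back to prompt improvement (an assumption)."""
    return FIXES.get(symptom.strip().lower(), "Improve your prompt first")

print(suggest_fix("Too basic"))
print(suggest_fix("technically limited"))
```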

Conclusion

Understanding the difference between AI tools and AI models is not just a technical concept — it directly affects the results you get.

If you are using AI tools like ChatGPT, remember:

- The tool controls how your input is processed and displayed

- The model generates the actual response

What you should do next:

- Start using one AI tool (like ChatGPT) with clear prompts

- If results are weak → refine your prompt first

- If results are still poor → test the same prompt in another tool

This simple process will immediately improve the quality of results you get.

Once you understand this difference, you stop guessing—and start using AI more strategically.

Key takeaway:

Better results don’t come only from better prompts—they come from understanding how the tool and model work together.

Insight Most Beginners Miss

Most users try to fix bad results by switching models.

But in real usage, changing the tool or improving the prompt usually gives faster results than switching to a more advanced model.

This is because users interact with the tool layer—not the model directly.

Frequently Asked Questions (FAQ)

What is the difference between an AI tool and a machine learning model?

A machine learning model is the part of the system that generates outputs based on patterns learned from data.

An AI tool is the complete system you interact with — it includes the model along with interface, workflow, and controls that make it usable in real tasks.

Do users interact with machine learning models directly?

In most cases, no. Users typically interact with AI tools like ChatGPT or Gemini, which include machine learning models behind the scenes. The tool provides the interface and manages how the model is used.

Why do different AI tools give different answers even with the same prompt?

Because each AI tool applies its own system-level processing before and after the model generates output.

This includes:

- Prompt formatting

- Context management

- Memory handling

- Output structuring

Even if the underlying model is similar, these differences significantly affect the final response.

Can improving my prompt give better results than changing the AI model?

Yes. For most users, improving the prompt has a much bigger impact than switching models.

Since users interact with the tool layer, better prompts help the system interpret intent more clearly, leading to more accurate and useful outputs.

What actually affects the quality of AI output the most?

For everyday use, the biggest factor is often not the model itself, but how you write your prompt and how the tool processes it.

This includes prompt clarity, context handling, and system-level formatting. Even powerful models can produce poor results if the input is unclear or poorly structured.

Why do two people get completely different results from the same AI tool?

Because AI tools interpret prompts based on context, structure, and wording.

Small differences in how a prompt is written can lead to very different outputs, even when using the same tool and model.
