Introduction

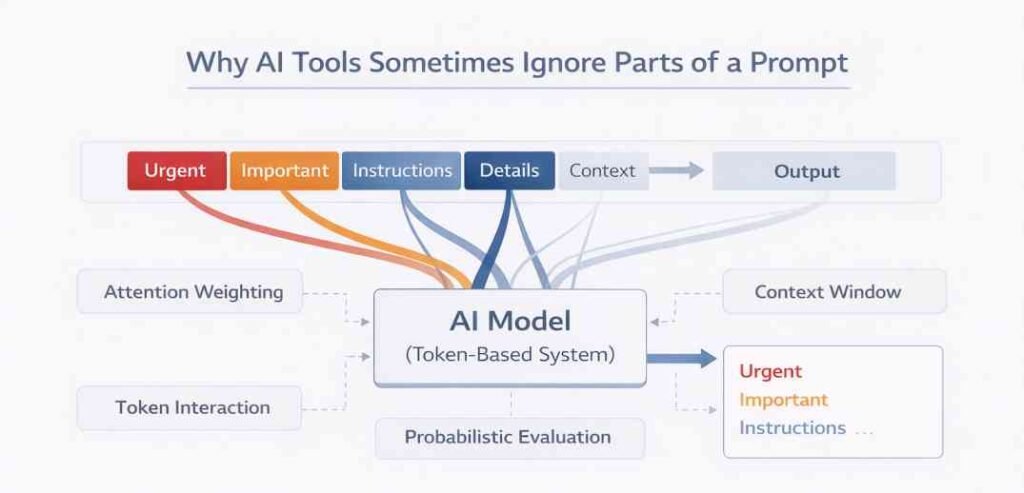

Why AI tools sometimes appear to ignore parts of a prompt can be explained by the structural and operational characteristics of AI systems. Prompts are processed as sequences of tokens within a bounded computational environment, where multiple mechanisms determine how different segments of the input are represented during inference.

These mechanisms include tokenization, attention-based weighting, context construction, and probabilistic evaluation.

Within this framework, prompts are not treated as collections of independently executed instructions. Instead, they are represented as unified token sequences in which all components are evaluated together. The system does not inherently preserve explicit boundaries between different parts of a prompt, and interpretation emerges from the relationships established between tokens during processing.

As a result, not all parts of a prompt contribute equally to the internal representation. This variation does not come from the system deliberately choosing which instructions to follow; it arises from how tokens are weighted, positioned, and evaluated within system constraints. Attention distribution, context limitations, and token relationships together determine how strongly each prompt component is represented during output generation.

These characteristics define how AI systems process input and the conditions under which certain segments receive reduced representation. The process is closely related to the one described in How AI Tools Interpret Prompts: A Structural Explanation.

In this context, representation refers to the internal encoding of token relationships within the computational structure that is used during inference.

Definition — Representation in AI Systems

Representation refers to the structured encoding of token relationships within a computational model during inference. It is formed through token interactions, attention weighting, and context construction within system-defined constraints.

Definition — Context Window

Context window refers to the bounded subset of tokens available for relational evaluation within a computational system at a given stage of processing.

Definition — Attention Weighting

Attention weighting refers to the assignment of relative relational importance to tokens based on their interactions within the token sequence.

Definition — Probabilistic Evaluation

Probabilistic evaluation refers to the representation of token relationships based on likelihood distributions rather than deterministic selection.

Definition — Token Interaction

Token interaction refers to the relational evaluation between tokens within a shared representational structure.

These foundational concepts are structurally related to how prompts are defined and processed within AI systems: What is a Prompt in AI Tools

Token Sequence Representation and Structural Constraints

Continuous Token Structure

Prompts are processed as continuous sequences of tokens rather than as separate instruction units. During tokenization, all elements of the input—such as instructions, conditions, and descriptive phrases—are converted into tokens and arranged in a single ordered sequence.

- All prompt components are embedded within a single continuous token sequence

- No explicit segmentation defines independent instruction units

- Relational evaluation occurs across the entire sequence

All prompt components are interpreted within a single structural framework without independent segmentation.
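As a rough illustration, the sketch below tokenizes three separate instructions and concatenates them. The regex tokenizer and the example instructions are assumptions made for illustration; production systems use learned subword tokenizers. The point is only that the result is one flat list with no record of where each instruction began or ended.

```python
import re


def tokenize(text: str) -> list[str]:
    # Toy tokenizer (assumption): words and punctuation become separate tokens.
    # Real systems use learned subword vocabularies, not this regex.
    return re.findall(r"\w+|[^\w\s]", text)


def build_sequence(segments: list[str]) -> list[str]:
    # Every segment is tokenized and appended to ONE flat list.
    # Nothing in the result marks where one instruction ends and the next begins.
    sequence: list[str] = []
    for segment in segments:
        sequence.extend(tokenize(segment))
    return sequence


prompt_parts = [
    "Summarize the report.",
    "Use exactly three bullet points.",
    "Do not mention pricing.",
]
tokens = build_sequence(prompt_parts)
print(tokens)
```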

Representation Constraint

Because prompts are represented as unified token sequences, all tokens are evaluated collectively within a shared computational space without distinct processing pathways for individual instructions.

- Instruction boundaries are not explicitly preserved

- All tokens participate in the same relational evaluation process

- Representation depends on interactions within the sequence

This structural arrangement can produce non-uniform representation of token groups within the unified sequence. Because tokens are processed together, the relative positioning of tokens and the interactions between them shape how individual segments are represented during inference.

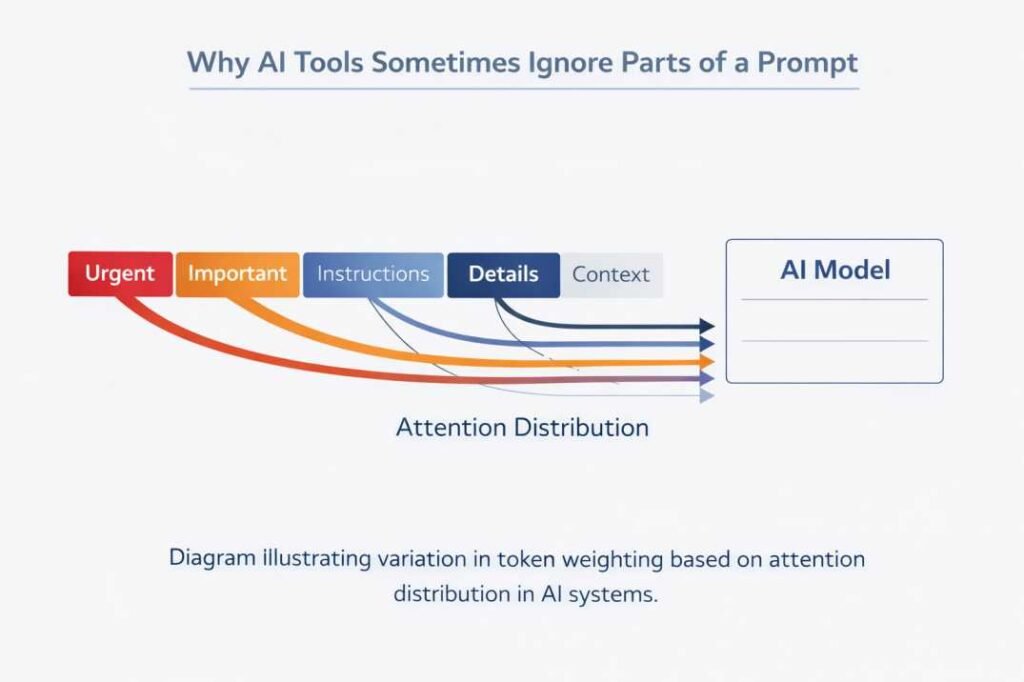

Attention-Based Weight Distribution

Relative Weighting Mechanism

Attention mechanisms are used to evaluate relationships between tokens within the context. Each token is assigned a relative weighting determined by its relational evaluation with other tokens within the context.

- Relative contribution is determined through relational evaluation

- Weighting is dynamic and varies across the sequence

- No uniform distribution of attention is applied

These weights correspond to differences in how tokens contribute to subsequent processing stages, including output generation.
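A minimal sketch of relative weighting, assuming scaled dot-product attention with a single query and tiny hand-picked vectors (real models use learned, high-dimensional projections and many attention heads). The weights are relative: they always sum to 1, so one token can only gain weight at the expense of others.

```python
import math


def softmax(scores: list[float]) -> list[float]:
    # Subtract the max before exponentiating for numerical stability.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(query: list[float], keys: list[list[float]]) -> list[float]:
    # Scaled dot-product score between the query and each key, then softmax.
    d_k = len(query)
    scores = [
        sum(q * k for q, k in zip(query, key)) / math.sqrt(d_k)
        for key in keys
    ]
    return softmax(scores)


# Hypothetical 2-dimensional vectors for one query token and three key tokens.
query = [1.0, 0.0]
keys = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
weights = attention_weights(query, keys)
print([round(w, 3) for w in weights])
```

The key most aligned with the query receives the largest share, but every key keeps a nonzero weight.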

Structural Limitation

Attention does not assign equal influence to all tokens. Instead, weighting varies depending on interaction patterns, token positioning, and contextual relationships.

- Some tokens receive higher relative weighting

- Others may receive lower weighting within the same sequence

- Weight distribution is influenced by the overall structure and ordering of the sequence

Variation in attention weighting refers to differences in relational contribution among tokens within the sequence.

Structural Observation

Attention operates as a distribution mechanism that evaluates relationships across all tokens simultaneously. All tokens remain within the computational process, but their relative contribution differs based on assigned weighting.

This mechanism is central to how AI tools interpret prompts, because it assigns relative importance to tokens within the sequence. This weighting behavior is also examined in Why AI Tools Misinterpret Prompts.

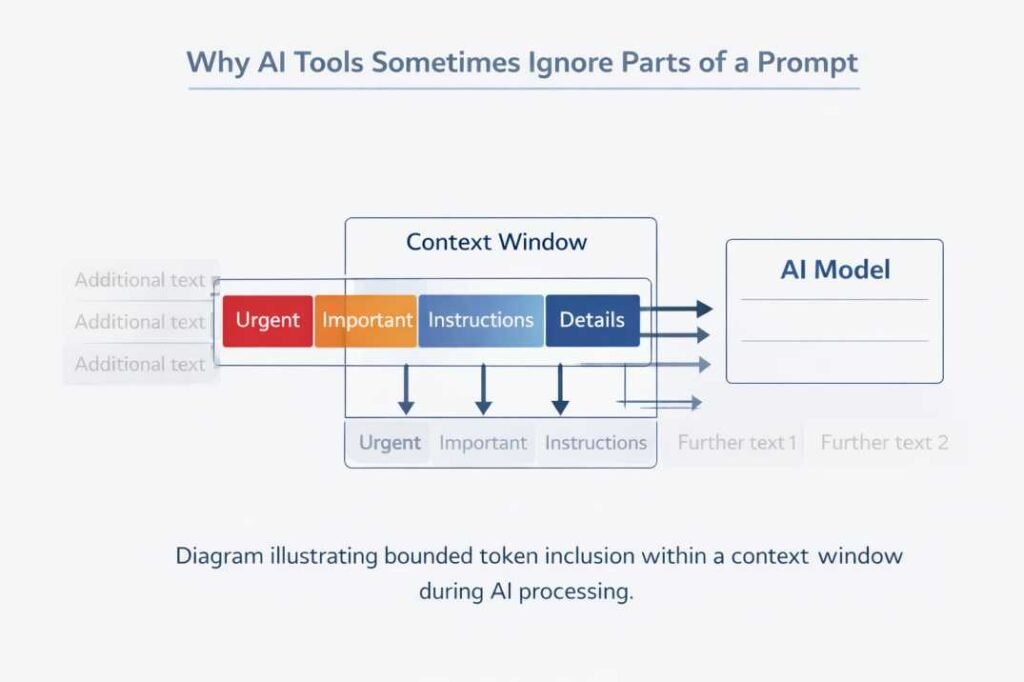

Context Window Constraints

Finite Processing Capacity

AI systems process prompts within a bounded context window that defines the subset of tokens available for relational evaluation at a given stage. Tokens outside this subset are not represented within the active computational context.

- Only tokens within this bounded subset are available for relational evaluation

- Tokens outside this limit are not available for evaluation

- The total number of tokens is constrained by system design

This limitation applies to both input prompts and generated tokens that form part of the evolving context.
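Because input and generated tokens draw on the same budget, a longer prompt leaves less room for output. The arithmetic is sketched below; the function name and the 8,192-token window are hypothetical values chosen for illustration, not the limit of any particular system.

```python
def remaining_budget(context_window: int, prompt_tokens: int, generated_tokens: int = 0) -> int:
    # Prompt and output share one window, so both are subtracted from the same total.
    used = prompt_tokens + generated_tokens
    return max(context_window - used, 0)


WINDOW = 8192  # hypothetical context window size, in tokens
print(remaining_budget(WINDOW, prompt_tokens=7000))                         # before generation starts
print(remaining_budget(WINDOW, prompt_tokens=7000, generated_tokens=1000))  # after 1,000 output tokens
```

Here a 7,000-token prompt leaves 1,192 tokens of room, and only 192 remain once 1,000 tokens have been generated.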

Context Capacity Constraint

When the length of a prompt exceeds the available context capacity, not all tokens can be included within the processing window.

- Portions of the prompt may fall outside the context boundary

- Inclusion of tokens depends on their position within the sequence

- Exclusion is determined by system limits rather than semantic priority

In this condition, tokens that fall outside the defined context boundary are excluded from evaluation entirely.
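The sketch below shows what position-based exclusion looks like. Which end of the sequence a real system drops, or whether it rejects over-length input instead, depends on the system; the `keep` option is a hypothetical parameter covering both truncation directions. The function never inspects what the tokens say, which matches the point that exclusion follows system limits rather than semantic priority.

```python
def fit_to_window(tokens: list[str], window: int, keep: str = "end") -> tuple[list[str], list[str]]:
    """Split tokens into (kept, dropped) under a hypothetical truncation policy."""
    if keep not in ("start", "end"):
        raise ValueError("keep must be 'start' or 'end'")
    if window <= 0:
        return [], list(tokens)
    if len(tokens) <= window:
        return list(tokens), []
    if keep == "end":
        # Keep the most recent tokens; the earliest ones fall outside the window.
        return tokens[-window:], tokens[:-window]
    # Keep the earliest tokens; the tail of the prompt is cut off.
    return tokens[:window], tokens[window:]


prompt = "Summarize the report . Use three bullets . Avoid pricing .".split()
kept, dropped = fit_to_window(prompt, window=8)
print(dropped)  # the opening instruction is the part that no longer fits
```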

Structural Observation

Context construction determines which tokens are available for relational evaluation at each stage of processing. Because only a subset of tokens is considered, the context window bounds which tokens can enter the representational structure at all.

This limitation is structurally related to the inference process in AI systems, where token inclusion and evaluation occur within defined computational boundaries: Inference in AI Tools

Token Interaction and Instruction Overlap

Shared Context Processing

All tokens within the context window are evaluated within a shared representational structure where relational evaluation occurs across the entire sequence.

- Tokens from different parts of the prompt coexist within one structure

- Relationships are evaluated across all tokens simultaneously

- No inherent separation exists between instruction groups

All prompt components participate in shared relational evaluation within the sequence.

Interaction Constraint

Because tokens interact within a shared context, different segments of a prompt may overlap in their relational influence.

- Tokens associated with different instructions may interact or compete

- Overlapping relationships can shift how weight is distributed across token groups

- Instruction boundaries are not explicitly enforced

Token interaction overlap refers to shared relational evaluation across token groups within a unified structure.

Structural Observation

Instruction overlap represents a structural condition in which token relationships are resolved within a shared representational space, where interaction patterns are not constrained by instruction-level separation.

This mechanism interacts with other structural conditions within the system, contributing to variation in how token relationships are evaluated.

Positional Constraint

Structural Dependence

The arrangement of tokens within a prompt influences how relationships are evaluated during processing. Since prompts are represented as ordered sequences, variations in structure affect how tokens interact within the system.

Structural elements affecting relational evaluation include:

- Order of instructions within the sequence

- Use of punctuation and separators

- Grouping of related phrases

- Repetition of specific terms

These elements contribute to how tokens are positioned relative to one another.

Structural Limitation

Positional constraint refers to the dependence of token relationships on sequence order, grouping, and structural arrangement within the prompt.

- Reordering instructions changes relational context

- Grouping affects how tokens are associated

- Separation or continuity influences interaction strength

Because token relationships are sensitive to arrangement, different structural configurations of the same content may lead to variation in representation.
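One concrete reason arrangement matters is that models add position information to each token's vector. The sketch below uses the sinusoidal encoding from the original Transformer design as an example; many current models use other schemes (such as learned or rotary position embeddings), and the 4-dimensional embedding is a made-up value. The same word placed at two different positions enters the model as two different vectors.

```python
import math


def positional_encoding(pos: int, d_model: int) -> list[float]:
    # Sinusoidal encoding: even dimensions use sin, odd dimensions use cos,
    # with wavelengths that grow geometrically across dimension pairs.
    pe = []
    for i in range(d_model):
        angle = pos / (10000 ** ((2 * (i // 2)) / d_model))
        pe.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
    return pe


def add_position(embedding: list[float], pos: int) -> list[float]:
    # The model's input is the token embedding plus its positional encoding.
    return [e + p for e, p in zip(embedding, positional_encoding(pos, len(embedding)))]


word = [0.1, 0.2, 0.3, 0.4]  # hypothetical embedding for one word, e.g. "concise"
early = add_position(word, 2)
late = add_position(word, 40)
print(early != late)  # same word, different position, different input vector
```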

Structural Observation

Prompt structure influences how tokens are evaluated within the computational process. The system relies on positional and relational patterns rather than explicit instruction boundaries, which contributes to differences in how various parts of a prompt are represented during processing.

This behavior is associated with broader system constraints that influence how different parts of a prompt are represented during processing.

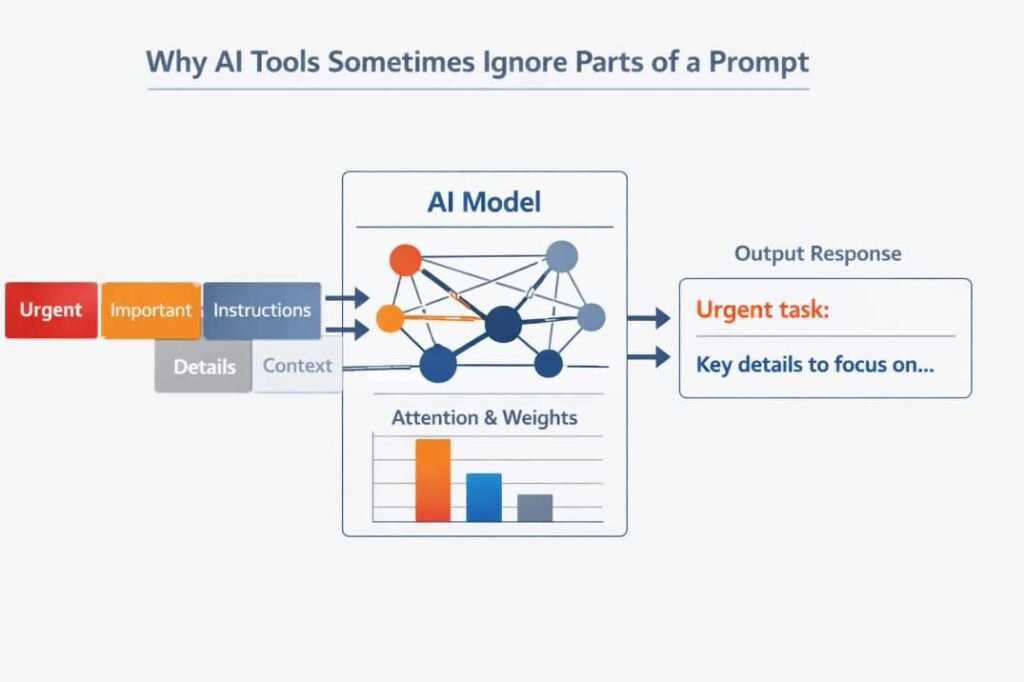

Probabilistic Constraint

Non-Deterministic Evaluation

AI systems evaluate token relationships using probabilistic methods. During processing, multiple potential interpretations of a prompt may exist within the system’s internal representation.

- Token sequences are evaluated based on probability distributions

- Multiple relational pathways may be considered simultaneously

- Output tokens are selected probabilistically from possible continuations, based on the computed representation

Non-deterministic evaluation refers to interpretation derived from probability distributions rather than fixed rule-based selection.
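A minimal sketch of likelihood-based selection: raw scores (logits) for candidate continuations are turned into a probability distribution with softmax, optionally reshaped by a temperature, and one token is sampled. The candidate tokens and their scores are invented for illustration. Under this sampling setup a lower-probability option can still be chosen on some draws; decoding settings such as greedy selection would remove that randomness.

```python
import math
import random


def next_token_distribution(logits: dict[str, float], temperature: float = 1.0) -> dict[str, float]:
    # Softmax over temperature-scaled scores: lower temperature sharpens the
    # distribution, higher temperature flattens it.
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = {tok: score / temperature for tok, score in logits.items()}
    m = max(scaled.values())
    exps = {tok: math.exp(s - m) for tok, s in scaled.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


def sample(dist: dict[str, float], rng: random.Random) -> str:
    # Draw one token in proportion to its probability.
    tokens = list(dist)
    return rng.choices(tokens, weights=[dist[t] for t in tokens], k=1)[0]


# Hypothetical scores for how an answer might continue.
logits = {"bullets": 2.0, "paragraph": 1.0, "table": 0.0}
dist = next_token_distribution(logits)
rng = random.Random(42)
draws = [sample(dist, rng) for _ in range(1000)]
print({tok: round(p, 3) for tok, p in dist.items()})
print({tok: draws.count(tok) for tok in logits})
```

The most likely option is chosen most often, but the others still appear on some draws.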

Structural Limitation

Because interpretation relies on probabilistic evaluation, consistent representation is not guaranteed for every part of a prompt.

- Different tokens may contribute with varying probabilities

- Some relational patterns may have lower likelihood within the distribution

- Representation of prompt segments may vary across processing instances

Probabilistic constraint refers to variation in representational outcomes based on likelihood distributions.

Structural Observation

Probabilistic evaluation operates on token relationships within the context. The system does not enforce a single interpretation; it selects among possible continuations based on computed likelihoods, which may influence how different parts of a prompt are reflected in the output.

This mechanism interacts with other structural conditions within the system, contributing to variation in how token relationships are evaluated.

Temporal Context Constraint

Dynamic Context Evolution

During output generation, newly generated tokens are appended to the existing context and become part of subsequent processing stages.

- Generated tokens extend the token sequence

- The context evolves as new tokens are added

- Both input and generated tokens coexist within the same context window

Dynamic context evolution refers to the inclusion of newly generated tokens within the existing token sequence during inference.
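The loop below sketches this feedback: each generated token is appended to the context and becomes input for the next step. `next_token` is a hypothetical stand-in for the model (here it simply echoes the first token it can see, so the effect is easy to trace), and the window of 4 tokens is chosen only to make the sliding visible.

```python
from typing import Callable


def generate(prompt: list[str], next_token: Callable[[list[str]], str],
             max_new: int, window: int) -> list[str]:
    if window <= 0:
        raise ValueError("window must be positive")
    context = list(prompt)
    for _ in range(max_new):
        visible = context[-window:]   # only the most recent `window` tokens are evaluated
        token = next_token(visible)   # stand-in for the model's prediction
        context.append(token)         # output becomes part of the next step's input
    return context


def echo_first(visible: list[str]) -> str:
    # Hypothetical "model": return the earliest token it can still see.
    return visible[0]


print(generate(["a", "b", "c"], echo_first, max_new=4, window=4))
```

By the third step, the first prompt token is no longer visible to the model, even though it remains part of the full transcript.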

Structural Limitation

As the context evolves, the relative influence of earlier prompt tokens may change.

- Newly generated tokens introduce additional relationships

- Relational weighting shifts as the token sequence expands

- Context composition shifts during processing

Temporal context constraint refers to variation in relational weighting as the token sequence expands.
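A deliberately simplified way to see the shift: if attention were spread evenly over the context (real attention is not uniform), the share of weight landing on the original prompt would equal its share of the tokens. The prompt length of 200 tokens is an arbitrary example.

```python
def prompt_share(prompt_len: int, generated_len: int) -> float:
    # Fraction of the current context made up of original prompt tokens.
    # Under the uniform-attention simplification, this equals their combined weight.
    total = prompt_len + generated_len
    if total == 0:
        raise ValueError("context is empty")
    return prompt_len / total


for generated in (0, 200, 600):
    print(generated, prompt_share(200, generated))
```

Under this simplification, the prompt's share falls from 1.0 to 0.5 to 0.25 as generation proceeds.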

Structural Observation

Prompt interpretation is not confined to the initial input stage. It continues throughout generation as the context expands, and the interaction between original and generated tokens is associated with variation in the evolving representation within the system.

System Constraint

Operational Boundaries

AI systems operate within defined computational and architectural constraints that influence how prompts are processed.

- Token processing limits restrict input size

- Memory and resource allocation define processing capacity

- Latency requirements influence evaluation scope

These constraints establish the operational environment in which prompt processing occurs.

Structural Limitation

System-level constraints affect how input tokens are handled during processing.

- Large inputs may be truncated or partially represented

- Resource limitations influence how relationships are evaluated

- Processing capacity defines the scale of token interaction

System constraint refers to operational boundaries defined by computational capacity, memory allocation, and processing limits.
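Processing capacity matters partly because attention compares tokens pairwise, so the work grows roughly with the square of the sequence length. The count below is per attention head and per layer and ignores everything else a model computes; it illustrates scaling, not the cost of any specific system.

```python
def pairwise_interactions(n_tokens: int, causal: bool = True) -> int:
    # Causal attention: token i scores itself and every earlier token -> n(n+1)/2 pairs.
    # Full (bidirectional) attention: every token scores every token -> n^2 pairs.
    if causal:
        return n_tokens * (n_tokens + 1) // 2
    return n_tokens * n_tokens


for n in (1_000, 2_000, 4_000):
    print(n, pairwise_interactions(n))
```

Doubling the sequence length roughly quadruples the number of token pairs that must be scored.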

Structural Observation

System constraints represent the operational boundary conditions within which token-based representation is formed. These boundaries influence how different parts of a prompt are included and represented during processing, contributing to variation in how prompt components are reflected in the output.

Relational Priority Constraint

Token Retention Within Context

Within the context window, tokens are not only limited in number but also evaluated based on their position and interaction with other tokens in the sequence.

- Tokens positioned later in the sequence may interact differently than earlier tokens

- Relative position influences relational access between tokens

- Context composition evolves as tokens are processed

This indicates that even when tokens are included within the context window, their relative contribution is shaped by positional and relational factors within the sequence.
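One concrete source of positional asymmetry, assuming a decoder-only (causal) model of the kind commonly used for text generation: each token may attend only to itself and earlier tokens. Encoder-style models such as BERT attend in both directions, so this sketch does not apply to them.

```python
def causal_mask(n: int) -> list[list[bool]]:
    # mask[i][j] is True when token i is allowed to attend to token j (only j <= i).
    return [[j <= i for j in range(n)] for i in range(n)]


def visible_counts(n: int) -> list[int]:
    # How many tokens each position can draw information from.
    return [sum(row) for row in causal_mask(n)]


print(visible_counts(5))
```

Under this mask, earlier tokens cannot see instructions placed after them, while later tokens can draw on everything before them.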

Structural Limitation

Not all tokens within the context window contribute equally to the representational structure.

- Some tokens may have stronger relational connectivity

- Others may have weaker interaction patterns

- Relative influence depends on token relationships rather than inclusion alone

Relational priority constraint refers to differential influence of tokens based on positional and relational connectivity.

Structural Observation

Context prioritization emerges from positional and relational dynamics within the token sequence. The system evaluates tokens based on their interactions rather than assigning uniform importance, which is associated with variation in how different segments of a prompt are reflected in the computational process.

This behavior is associated with broader system constraints that influence how different parts of a prompt are represented during processing.

Interaction Competition Constraint

Shared Representational Space

All tokens exist within a shared representational space where relational evaluation occurs across the full sequence without explicit separation between segments.

- Multiple instruction segments coexist within one sequence

- Token groups associated with different instructions interact

- No explicit boundaries separate competing segments

In this unified structure, all tokens can influence one another during evaluation.

Structural Limitation

Because tokens share the same representational space, different groups of tokens may compete for relational influence.

- Overlapping token groups can shift each other’s weighting

- Some token groups may have stronger relational connectivity

- Others may have reduced influence within the same context

Interaction competition refers to overlapping relational influence among token groups within a shared representational space.
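A toy illustration of competition, assuming attention scores are normalized with softmax over all tokens at once: the groups share a fixed total weight of 1, so adding tokens from other instructions lowers the share a given instruction receives, even when its own scores are unchanged. Group names and scores are hypothetical, and equal scores are used to keep the arithmetic transparent.

```python
import math


def group_shares(scores_by_group: dict[str, list[float]]) -> dict[str, float]:
    # Softmax is taken over ALL tokens together, so groups split one total of 1.0.
    flat = [(group, s) for group, scores in scores_by_group.items() for s in scores]
    m = max(s for _, s in flat)
    exps = [(group, math.exp(s - m)) for group, s in flat]
    total = sum(e for _, e in exps)
    shares = {group: 0.0 for group in scores_by_group}
    for group, e in exps:
        shares[group] += e / total
    return shares


alone = group_shares({"format_rule": [1.0, 1.0]})
crowded = group_shares({"format_rule": [1.0, 1.0], "other_instructions": [1.0] * 6})
print(alone)
print(crowded)
```

With identical scores, the formatting rule's share drops from 1.0 to 0.25 once six other tokens share the context.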

Structural Observation

Competition between token groups reflects the collective nature of token evaluation. The system processes all relationships within a single framework, which may influence how different segments of a prompt are represented depending on how these relationships are resolved.

Positional Boundary Constraint in Prompt Processing

Edge Position Influence

Tokens located at the boundaries of a prompt—such as the beginning or end of the sequence—may interact differently within the overall structure.

- Boundary tokens may have different relational access compared to centrally located tokens

- Interaction patterns can vary depending on sequence position

- Structural limits are defined by the start and end of the token sequence

Positional characteristics determine how tokens participate in relational evaluation.

Structural Limitation

The position of tokens within the sequence introduces constraints on how relationships are formed.

- Tokens at sequence edges may have fewer adjacent relational connections

- Central tokens may interact with a wider range of surrounding tokens

- Positional placement affects relational connectivity

Positional boundary constraint refers to differences in relational connectivity based on token placement within sequence edges or central positions.
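The edge effect can be counted directly under a simplifying assumption: that a token's relational connections are limited to positions within a fixed distance (`radius`, a hypothetical parameter, as in local or sliding-window attention). Full self-attention is not limited this way, so treat this as an illustration of adjacency only.

```python
def neighbours_within(n_tokens: int, radius: int) -> list[int]:
    # For each position, count the other positions within `radius` that exist in the sequence.
    counts = []
    for i in range(n_tokens):
        lo = max(0, i - radius)
        hi = min(n_tokens - 1, i + radius)
        counts.append(hi - lo)  # size of [lo, hi] minus the token itself
    return counts


print(neighbours_within(7, radius=2))
```

In a 7-token sequence with a radius of 2, the first and last tokens have two neighbours each, while central tokens have four.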

Structural Observation

Positional boundary constraints arise from the ordered nature of token sequences. The system evaluates relationships based on positional structure, which is associated with differences in how tokens at various positions within the prompt are represented in the computational process.

Absence of Structural Segmentation Constraint

Lack of Explicit Instruction Boundaries

AI systems do not inherently segment prompts into independently executable instructions. Instead, all input components are processed as part of a unified token sequence.

The system does not construct hierarchical instruction representations; all tokens remain within a single-level relational structure.

- Instructions are not assigned separate execution channels

- No explicit boundaries define independent processing units

- All tokens are evaluated within a shared structure

Absence of structural segmentation refers to the lack of independent instruction-level representation within the token sequence.

Structural Limitation

Because there is no built-in mechanism for instruction-level separation, the system does not guarantee independent representation of each instruction.

- Instruction identity depends on token relationships

- No fixed execution order is enforced

- All components are subject to shared evaluation dynamics

This limitation influences how different parts of a prompt are represented within the computational process.

Structural Observation

Interpretation emerges from token-level relationships rather than instruction-level control. The system evaluates the prompt as a continuous structure, which contributes to variation in how different segments of the input are represented during processing.

Relationship to Prompt Interpretation

The structural factors described in this article are directly related to the broader process of prompt interpretation in AI systems. Tokenization, context construction, attention-based weighting, and probabilistic evaluation define how input is transformed and represented during processing.

These mechanisms determine how tokens are positioned, weighted, and evaluated within system constraints, which in turn influences how different parts of a prompt are represented. The same structural processes that define prompt interpretation also contribute to variation in how prompt components are reflected during output generation.

Token-based representation is structurally related to How AI Tools Interpret Prompts: A Structural Explanation, where the transformation of input into computational representations is outlined at a system level.

Integrated Structural Model of Prompt Representation

Prompt representation in AI systems is defined through the interaction of multiple structural mechanisms operating within a unified computational framework.

These mechanisms include:

- Tokenization, which converts input into discrete token units

- Attention-based weighting, which assigns relative relational importance

- Context window construction, which defines the subset of tokens available for evaluation

- Probabilistic evaluation, which determines representation through likelihood-based selection

These components operate simultaneously within a shared representational structure, and representation emerges from their combined interaction within system-defined constraints.

Conclusion

AI tools sometimes appear to ignore parts of a prompt because of structural and operational constraints within AI systems, including token-based representation, attention-based weighting, context window limitations, probabilistic evaluation, and system-level boundaries. These mechanisms determine how different segments of a prompt are represented during processing.

This behavior reflects token-based representation and relational evaluation within system-defined constraints, where prompt components are processed as elements of a unified computational structure rather than independent instructions.

These processes operate within broader system workflows described in How AI Tools Are Positioned Within Workflows: A Process Description.

Frequently Asked Questions (FAQs)

Why do AI tools sometimes ignore parts of a prompt?

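Parts of a prompt can receive reduced representation because of attention weighting, exclusion at the context boundary, and probabilistic evaluation. The system does not deliberately skip instructions; some segments simply contribute less to the output.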

Do AI tools process all parts of a prompt equally?

No. All tokens within the context window are processed within one unified structure, but their influence varies with relational weighting.

Can prompt length affect how it is processed?

Yes. Prompt length is the total number of tokens, and the context window limits how many tokens can be represented; tokens beyond that limit are not evaluated.

Is this behavior consistent across all prompts?

No. Representation varies because evaluation is probabilistic and depends on the surrounding context.

Are instructions processed separately within a prompt?

Instructions are not inherently processed as separate units. They are represented as part of a continuous token sequence, and interpretation emerges from relationships between tokens rather than independent execution.

