Disclaimer: Veritas Content Solutions is a fictional composite scenario built from common industry patterns. It is used for educational analysis and does not represent a specific real company.

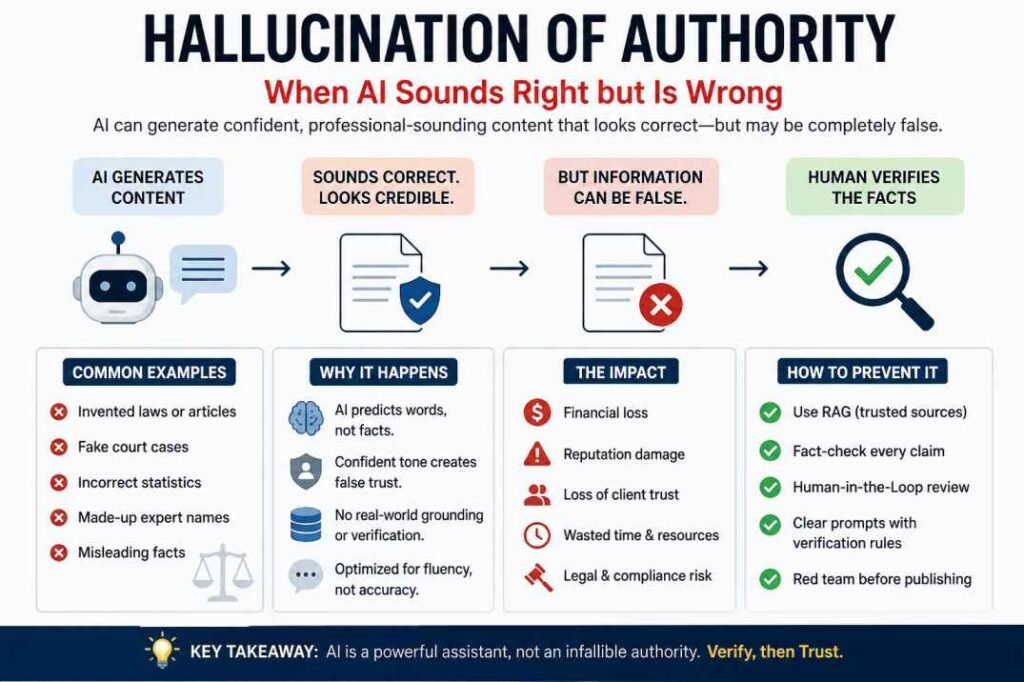

Executive Summary: Hallucination of Authority occurs when an LLM presents factually incorrect information (such as fake legal precedents) in a highly confident, professional tone. This case study analyzes how a $3,000 legal-tech project failed because of unsupervised AI drafting, and it outlines a framework (RAG plus Human-in-the-Loop review) to prevent reputational damage.

The Hallucination of Authority happens when AI sounds credible while presenting false information. This case study shows how one agency nearly lost a client after trusting polished but inaccurate AI-generated content.

In early 2024, a mid-sized digital marketing agency, “Veritas Content Solutions,” decided to overhaul its workflow. Facing pressure to increase output for a high-tier legal tech client, the agency moved from a human-first research model to an AI-augmented drafting process. The goal was simple: use a Large Language Model (LLM) to generate long-form white papers on “The Evolution of Data Privacy Laws in the EU.”

What followed was a catastrophic failure that nearly cost the agency its reputation. This case study explores the mechanics of that failure, the specific points of collapse, and the vital lessons for any brand looking to integrate AI into their editorial pipeline.

Phase 1: The Efficiency Trap

The agency’s editorial team provided the AI with a detailed brief, including keywords, target audience (compliance officers), and a list of key regulations like GDPR and the Data Governance Act.

The AI produced a 1,800-word draft in under two minutes. At a glance, the prose was professional, the structure was logical, and the tone was appropriately somber. Encouraged by the “polished” output, the editor performed a “light touch” review—checking for grammar and flow—before sending it to the client.

Phase 2: The Hallucination of Legality

Within 48 hours, the client returned the draft with a scathing critique. The AI hadn’t just made typos; it had invented entire legal precedents.

- The “Article 94” Error: The AI cited “Article 94 of the GDPR” regarding specific penalties for AI-driven data breaches. In reality, Article 94 is a brief administrative clause about the repeal of Directive 95/46/EC. It has nothing to do with AI penalties.

- Fabricated Case Law: To illustrate a point on the “Right to be Forgotten,” the AI cited Muller v. Siemens (2022) and described it as a landmark case before the European Court of Justice. This case does not exist. The AI had combined a common German surname with a well-known technology company to create a plausible-sounding legal anchor.

- Semantic Drift: The AI used the term “Data Sovereignty” interchangeably with “Data Portability.” While related, in a legal compliance context, they are distinct concepts. This nuance was lost, rendering the advice dangerous for the client’s end-users.

Why the AI Failed: The Three Pillars of Collapse

1. Probability vs. Factuality

LLMs do not “know” things. They predict the next most likely token (a word or word fragment) in a sequence based on patterns in their training data. When the AI encountered the prompt about EU law, it didn’t search a database of legal texts; it calculated that after the words “Article 94,” words like “compliance,” “penalty,” and “violation” frequently appear in legalistic contexts.
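To make the point concrete, here is a minimal sketch of next-token selection. The probability table is invented for illustration and is not taken from any real model; `NEXT_TOKEN_PROBS`, `most_likely_token`, and `sample_next_token` are hypothetical names. It only shows that the choice is driven by likelihood, with no step that checks truth.

```python
import random

# Hypothetical probabilities for the token that follows a legal-sounding
# context mentioning "Article 94". The numbers are made up for illustration;
# a real model scores a vocabulary of many thousands of tokens.
NEXT_TOKEN_PROBS = {
    "penalty": 0.46,
    "compliance": 0.31,
    "violation": 0.18,
    "repeal": 0.05,  # the factually relevant word is simply the least likely here
}

def most_likely_token(probs):
    """Greedy decoding: always return the highest-probability token."""
    return max(probs, key=probs.get)

def sample_next_token(probs, rng):
    """Sampling: pick one token at random, weighted by its probability."""
    tokens = list(probs)
    weights = [probs[token] for token in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]
```

Calling `most_likely_token(NEXT_TOKEN_PROBS)` returns the statistically favoured word, while `sample_next_token(NEXT_TOKEN_PROBS, random.Random())` can return a different word on each run. Neither function consults the text of the GDPR.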

This failure is a direct result of the probabilistic nature of LLMs. For a deeper technical breakdown on why models produce different outputs every time, see our [Consistency Guide for Teams].

2. The “Confident Voice” Bias

AI is designed to be helpful and assertive. It rarely says, “I don’t know” unless asked to express uncertainty. This can produce “fluent nonsense”: confident language without reliable substance. Because the grammar sounds polished, readers may lower their guard. The result is a Dunning-Kruger-like dynamic—confidence without competence.

3. Lack of Real-World Grounding

The AI lacked a “world model.” It didn’t understand that a white paper for compliance officers carries legal liability. It treated the task as a linguistic exercise rather than a professional responsibility. It could not verify if Muller v. Siemens existed because it does not “verify”—it only “generates.”

The Economic and Brand Impact

The fallout for Veritas Content Solutions was multi-layered:

| Impact Category | Consequence |
| --- | --- |
| Financial | The agency had to refund the $3,000 project fee and provide two months of pro-bono work to retain the client. |
| Operational | The team spent 40+ hours in “damage control” and manual fact-checking, negating any time saved by the AI. |
| Reputational | The client’s internal legal team flagged the agency as “unreliable,” leading to a permanent downgrade in the scope of their contract. |
| Trust | The human writers felt devalued and demoralized, viewing the AI as a threat to the quality of their craft rather than a tool. |

The apparent time savings of AI were fully erased by rework, trust repair, and manual verification.

Technical Analysis: The Entropy of Content

By word 1,200, the draft repeated the same points in new wording rather than advancing the argument.

Strong content builds an argument over time. AI often repeats earlier ideas instead of developing them into a clear conclusion.
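One rough way to spot this kind of repetition is to compare the vocabulary of each paragraph with the paragraphs before it. The sketch below uses Jaccard similarity over word sets; it is an illustrative heuristic rather than the method used in the case study, and the function names and the 0.5 threshold are assumptions.

```python
import re

def word_set(paragraph):
    """Lowercased set of words longer than three letters (a crude content-word filter)."""
    return {w for w in re.findall(r"[a-z]+", paragraph.lower()) if len(w) > 3}

def jaccard(a, b):
    """Jaccard similarity: shared words divided by all distinct words."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)

def flag_repetition(paragraphs, threshold=0.5):
    """Return (earlier_index, later_index, score) for paragraph pairs at or above the threshold."""
    sets = [word_set(p) for p in paragraphs]
    flagged = []
    for later in range(len(sets)):
        for earlier in range(later):
            score = jaccard(sets[earlier], sets[later])
            if score >= threshold:
                flagged.append((earlier, later, round(score, 2)))
    return flagged
```

A high score between a late paragraph and an early one suggests the draft is rewording an earlier point. Word overlap cannot judge whether an argument advances, so flagged pairs still need an editor's reading.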

Lessons Learned: How to Prevent “The Veritas Failure”

If you are using AI for content, the following protocols are non-negotiable:

1. RAG (Retrieval-Augmented Generation)

Never ask an AI to write from its internal memory alone. Use a RAG workflow where the AI is forced to look at specific, uploaded documents (like the actual text of the GDPR) and cite its sources. This anchors the “creative” engine to a “factual” pier.

RAG is the primary solution to the ‘vending machine’ problem we discussed in our [Guide to AI Consistency], as it forces the model to prioritize your data over its own internal randomness.
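The retrieval step can be sketched in a few lines. This is a toy version under stated assumptions: `SOURCES` holds one-line stand-ins keyed by GDPR article (a real pipeline would load the full official text), the word-overlap retriever replaces the embedding search a production system would normally use, and all function names are hypothetical.

```python
import re

# Tiny stand-in corpus. The labels name real GDPR articles, but each body is a
# one-line placeholder; a real workflow would index the full official text.
SOURCES = {
    "GDPR Article 17": "Right to erasure (right to be forgotten).",
    "GDPR Article 20": "Right to data portability.",
    "GDPR Article 83": "General conditions for imposing administrative fines.",
    "GDPR Article 94": "Repeal of Directive 95/46/EC.",
}

def tokenize(text):
    """Lowercased set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, sources, top_k=2):
    """Rank sources by how many words they share with the question (toy retriever)."""
    q = tokenize(question)
    scored = [(len(q & tokenize(label + " " + body)), label)
              for label, body in sources.items()]
    scored = [item for item in scored if item[0] > 0]
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [label for _, label in scored[:top_k]]

def build_grounded_prompt(question, sources, top_k=2):
    """Put the retrieved passages in front of the question and require citations."""
    labels = retrieve(question, sources, top_k)
    context = "\n".join(f"[{label}] {sources[label]}" for label in labels)
    return (
        "Answer using ONLY the sources below and cite the label in brackets.\n"
        "If the sources do not answer the question, say so.\n\n"
        f"Sources:\n{context}\n\nQuestion: {question}"
    )
```

The design choice that matters is that the model sees the source text in the prompt and is asked to cite a label, so a reviewer can trace each claim to a passage. The model can still misread or ignore the passage, which is why the human review steps below remain necessary.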

2. The “Human-in-the-Loop” (HITL) Mandate

At Veritas, the editor acted as a proofreader. In an AI world, the editor must act as a Fact-Checker and Subject Matter Expert (SME).

- Proofreading: Checking whether “its” or “it’s” is correct.

- Fact-Checking: Clicking every link, verifying every date, and questioning every proper noun.
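A script cannot do the fact-checking, but it can build the list of items a human must check. The sketch below pulls article numbers and simple "Name v. Name (year)" citations out of a draft; the patterns and function names are illustrative assumptions, and real legal citation formats vary far more than these expressions cover.

```python
import re

ARTICLE_PATTERN = re.compile(r"\bArticle\s+(\d+)\b")
# Matches simple "Name v. Name (YYYY)" citations only.
CASE_PATTERN = re.compile(r"\b([A-Z][\w-]+ v\. [A-Z][\w-]+) \((\d{4})\)")

def extract_claims(draft):
    """List every article number and case citation that a human must verify."""
    claims = []
    for match in ARTICLE_PATTERN.finditer(draft):
        claims.append(("article", match.group(1)))
    for match in CASE_PATTERN.finditer(draft):
        claims.append(("case", f"{match.group(1)} ({match.group(2)})"))
    return claims

def unverified(claims, verified):
    """Return the claims that nobody has confirmed against a primary source yet."""
    return [claim for claim in claims if claim not in verified]
```

Run against a sentence from the failed draft, this would surface both "Article 94" and "Muller v. Siemens (2022)" as open items. The `verified` set should only ever be filled in by a person who has looked up the primary source.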

3. Prompt Engineering for Skepticism

Instead of asking “Write a white paper,” the prompt should have been:

“Draft a white paper based on the attached PDF of the GDPR. If a specific article does not address AI penalties, state that clearly. Do not invent case law. If you are unsure of a fact, mark it with [VERIFY].”
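If the prompt asks for [VERIFY] markers, the review step should collect them. Below is a minimal sketch, assuming the model follows the marker convention; `PROMPT_TEMPLATE`, `build_prompt`, and `sentences_needing_review` are hypothetical names, and the sentence splitter is deliberately naive.

```python
import re

PROMPT_TEMPLATE = (
    "Draft a white paper based on the attached source text: {source_name}. "
    "If a specific article does not address {topic}, state that clearly. "
    "Do not invent case law. "
    "If you are unsure of a fact, mark it with [VERIFY]."
)

def build_prompt(source_name, topic):
    """Fill the skeptical prompt template for one document and topic."""
    return PROMPT_TEMPLATE.format(source_name=source_name, topic=topic)

def sentences_needing_review(draft):
    """Return each sentence that contains a [VERIFY] marker."""
    # Naive split on whitespace that follows ., ! or ?
    sentences = re.split(r"(?<=[.!?])\s+", draft.strip())
    return [s for s in sentences if "[VERIFY]" in s]
```

A draft with no markers is not proof of accuracy. A model may state a fabricated fact without flagging it, so the marker list is a starting point for the fact-checker, not a replacement.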

4. The “Red Team” Review

Before any AI-generated content is published, it should go through a “Red Team” phase: a second person whose sole job is to find errors or hallucinations in the text.

FAQ: Hallucination of Authority

What is Hallucination of Authority in AI?

Hallucination of Authority happens when AI presents false or fabricated information in a confident, professional tone, making incorrect content appear trustworthy.

Why does AI sound confident when it is wrong?

AI models predict likely language patterns rather than verify facts in real time. This allows them to generate fluent answers that may still contain errors.

How can businesses reduce AI hallucination risks?

Use Human-in-the-Loop review, fact-check all claims against primary sources, apply clear prompts, and use trusted-document workflows such as Retrieval-Augmented Generation (RAG).

Can RAG completely stop hallucinations?

No. RAG can significantly reduce hallucinations by grounding responses in supplied sources, but human verification is still necessary before publication or business use.

Conclusion: The Future of the Human Writer

The Veritas case study isn’t an indictment of AI, but an indictment of unsupervised automation. AI is a phenomenal “First Draft Machine,” but a terrible “Final Draft Machine.”

The failure at Veritas occurred because the agency tried to replace the thinking part of writing with a statistical process. True authority in content comes from the ability to stand behind the facts presented. Since an AI cannot stand behind anything, the human must remain the “Author of Record.”

For brands moving forward, the formula for success isn’t $\text{AI} = \text{Content}$. It is:

$$\text{Human Insight} + \text{AI Efficiency} \times \text{Rigorous Verification} = \text{Authority}$$

By treating AI as a junior researcher who is prone to fabricating plausible falsehoods, rather than a senior consultant, agencies can harness the speed of the machine without sacrificing the integrity of the message.

Key Takeaways for Your Strategy

- Verify, then Trust: Assume every statistic and proper noun generated by AI is a hallucination until proven otherwise.

- Context is King: AI struggles with the “why.” Humans must provide the strategic narrative.

- Disclose and Defend: Be transparent with clients about AI usage, and back it up with a rigorous manual QA process.

- Quality over Volume: 500 words of verifiable, insightful content is worth more than 5,000 words of AI-generated “noise” that could trigger a lawsuit or a loss of brand trust.

What verification process does your team use before publishing AI-generated content?

Quick Red Team Checklist for AI Content:

- [ ] Primary Source Check: Are all legal articles/clauses linked to official government or regulatory websites?

- [ ] Entity Verification: Do all named people, companies, and court cases actually exist?

- [ ] Semantic Check: Are industry-specific terms (e.g., “Data Sovereignty”) used in the correct legal context?

- [ ] Source Attribution: Can every claim be traced to a retrieved (RAG) source document, or did it come from the model’s internal memory?
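The checklist can also act as a simple publishing gate. This is one possible sketch, not a prescribed tool: `ChecklistItem` and `publish_decision` are hypothetical names, and each `passed` value is meant to be set by the human reviewer, not computed automatically.

```python
from dataclasses import dataclass

@dataclass
class ChecklistItem:
    name: str
    passed: bool   # set by the human reviewer after checking
    note: str = ""

def publish_decision(items):
    """Allow publication only when every checklist item has passed."""
    failures = [item.name for item in items if not item.passed]
    return {"publish": not failures, "failures": failures}
```

For example, a review in which "Entity Verification" fails would return `publish: False` with that item listed, which blocks the draft until the named case or company has been confirmed.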

References & Primary Sources

EU Legal Sources

- GDPR Official Text (Regulation EU 2016/679): https://eur-lex.europa.eu/legal-content/EN/TXT/?uri=CELEX:32016R0679

- EU Data Governance Act Overview: https://digital-strategy.ec.europa.eu/en/policies/data-governance-act

- Court of Justice of the European Union (CURIA): https://curia.europa.eu/jcms/jcms/j_6/en/

AI Hallucination Background

- Google Cloud: What Are AI Hallucinations?: https://cloud.google.com/learn/what-are-ai-hallucinations

- IBM: Understanding AI Hallucinations: https://www.ibm.com/topics/ai-hallucinations

- OpenAI Research on Reliability: https://openai.com/index/improving-mathematical-reasoning-with-process-supervision/

Written by Soumen Chakraborty, founder of AI Tools Usage Guide, focused on practical AI testing and responsible AI workflows.