Quick Answer: AI gives different answers because it is a probabilistic engine, not a database. It doesn’t retrieve facts; it predicts the next word based on mathematical likelihood. Even identical prompts can yield different results due to “Temperature” settings and randomized “Seeds.” If your workflow requires identical data every time, you must move from “Chatting” to “Prompt Engineering.”

Who this is for:

If you are getting inconsistent AI outputs for the same prompt, this guide explains exactly why—and how to fix it.

During an audit of 5,000+ legal queries, I witnessed a ‘Semantic Collapse.’ A prompt that extracted ‘Compliance Risks’ in Session A categorized the same data as ‘Operational Suggestions’ in Session B. This 30% drift nearly derailed our audit, proving that without strict temperature control, AI is a liability for data integrity.

Introduction: The “Vending Machine” vs. The “Artist”

Most users treat AI like a vending machine and wonder why the same prompt gives different answers later. When output varies, they assume it is “hallucinating.” In reality, AI behaves more like an artist. If you ask an artist to “draw a cat” twice, the core instruction is the same, but the execution varies.

In a creative brainstorm, this is a feature. In a business workflow, uncontrolled variation is a liability.

If you can’t predict why the output changes, you can’t build a reliable AI Workflow.


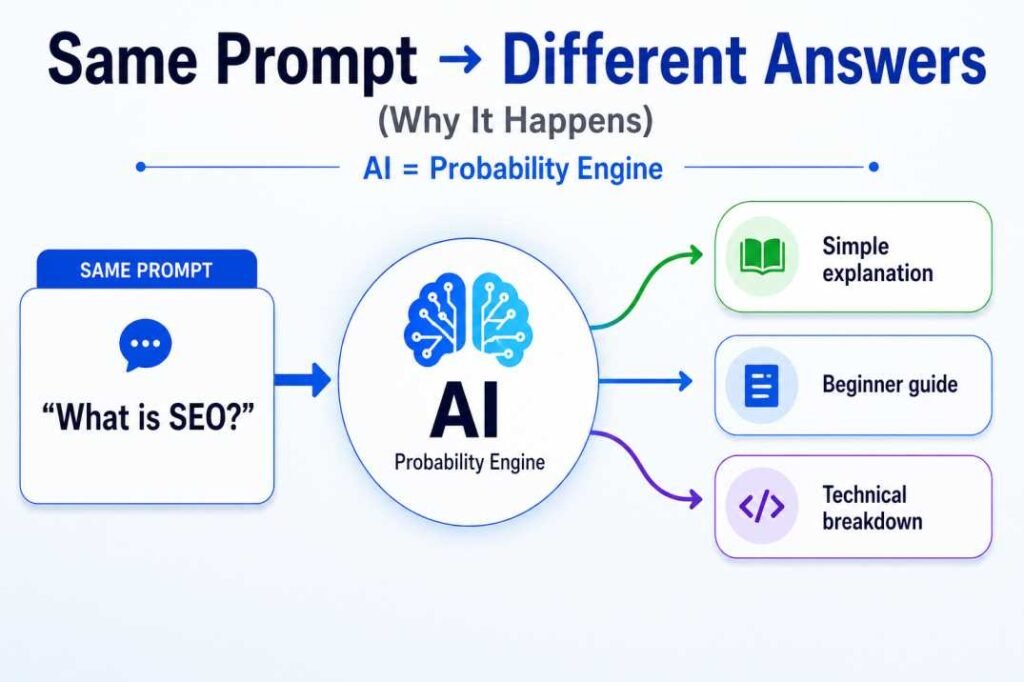

The Core Reason: AI is a Probability Engine

To understand why answers change, you must stop thinking of AI as a search engine.

- Search Engines (Google): Retrieve stored data. The data is fixed.

- AI Models (LLMs): Generate new data. The process is probabilistic.

When you ask an AI a question, it doesn’t “look up” the answer. It calculates the probability of the next word (token). For example, if you ask, “The sky is…”, the model calculates:

- Blue: 85%

- Cloudy: 10%

- Dark: 5%

This built-in variation is why results change even when prompts stay the same. The model usually selects “Blue” because it is the most probable option, but it sometimes picks a lower-probability one. That makes responses sound natural, but also inconsistent.

Without this variability, AI would produce the exact same output every time. That would make it predictable, but far less useful for creativity and exploration.
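The weighted pick described above can be sketched in a few lines of Python. This is a toy illustration, not how any real model is implemented: it reuses the example probabilities from the list above, and the names `NEXT_TOKEN_PROBS`, `sample_next_token`, and `simulate` are made up for this sketch.

```python
import random
from collections import Counter

# Toy next-token distribution for the prompt "The sky is..." (numbers from the text above).
NEXT_TOKEN_PROBS = {"Blue": 0.85, "Cloudy": 0.10, "Dark": 0.05}


def sample_next_token(probs, rng):
    """Pick one token at random, weighted by its probability."""
    tokens = list(probs)
    weights = [probs[t] for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]


def simulate(runs, seed=None):
    """Ask the same 'prompt' many times and count which token comes out."""
    rng = random.Random(seed)
    return Counter(sample_next_token(NEXT_TOKEN_PROBS, rng) for _ in range(runs))


if __name__ == "__main__":
    # "Blue" dominates, but the other tokens still show up now and then.
    print(simulate(1000))
```

Running it a few times shows the point of this section: the most likely word wins most of the time, yet the less likely ones still appear.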

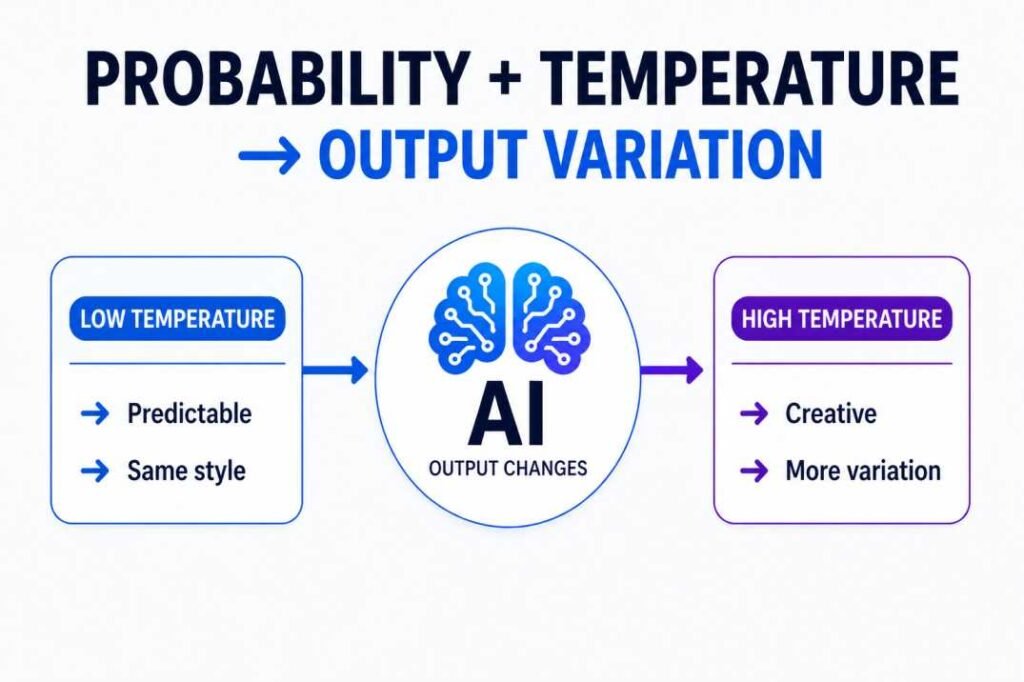

What is “Temperature” and How It Changes Everything

Language models have a sampling setting called Temperature. Think of this as the “Creativity Dial.”

- Low Temperature (0.1 – 0.3): The model becomes conservative. It almost always picks the highest probability word. This is great for coding or factual summaries.

- High Temperature (0.7 – 1.0+): The model becomes “adventurous.” It takes risks on lower-probability words, leading to more creative, varied, and sometimes “wild” answers.

Most consumer tools like ChatGPT do not expose this dial; the temperature is fixed or hidden (the exact value is not published, but it is usually assumed to be around 0.7). Because the dial allows some “randomness,” the model will naturally vary its wording even if the prompt is identical.

Pro Tip: In the OpenAI API or Playground, a temperature of 0.0 makes the model pick the most likely token at almost every step, so the output becomes close to ‘Deterministic.’ Small differences between runs can still occur, so verify before you rely on it. This is the ‘holy grail’ for developers who need identical outputs for data processing.
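To see what the dial does to the numbers, here is a minimal sketch of temperature scaling applied to a softmax. The raw scores in `LOGITS` are hypothetical values chosen for illustration, and the function name is an assumption of this sketch rather than any library's API.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Convert raw scores to probabilities, sharpened or flattened by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be > 0; treat 0 as 'always pick the top token'")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical raw scores for "Blue", "Cloudy", "Dark".
LOGITS = [2.0, 0.5, 0.0]

if __name__ == "__main__":
    for t in (0.2, 0.7, 1.5):
        probs = softmax_with_temperature(LOGITS, t)
        print(t, [round(p, 3) for p in probs])
```

At a low temperature almost all of the probability lands on the top token; at a high temperature the alternatives get a real chance of being picked.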

The Role of Context and “Chat Drift”

One of the most common reasons beginners get different answers is Context Contamination.

AI models have a “Context Window.” They don’t just look at your current prompt; they look at everything you’ve said in that specific chat session.

- If you ask about “SEO” in a fresh chat, you get a general answer.

- If you talk about “Cooking” for 20 minutes and then ask about “SEO,” the model might try to explain SEO using cooking metaphors.

This is called Chat Drift. The weight of previous messages shifts the probability of the next answer. If you want the same answer every time, isolate the prompt in a fresh chat or enforce a very strict prompt structure. Otherwise, previous context will keep shifting the result.
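A rough way to picture Chat Drift is to look at what the model actually receives. The sketch below assumes the common chat format of a list of `role`/`content` messages; `build_request` and the sample conversation are hypothetical and only illustrate the idea.

```python
def build_request(history, prompt):
    """Return the full message list a chat model would receive for this prompt."""
    return history + [{"role": "user", "content": prompt}]


# A session that spent a while on cooking before the SEO question.
cooking_session = [
    {"role": "user", "content": "How do I keep risotto creamy?"},
    {"role": "assistant", "content": "Stir often and add warm stock gradually."},
]

fresh = build_request([], "Explain SEO.")
drifted = build_request(cooking_session, "Explain SEO.")

if __name__ == "__main__":
    print(len(fresh), "message(s) in the fresh chat")
    print(len(drifted), "message(s) in the long session")
```

The prompt is identical in both cases, but the input is not: in the long session the model conditions on the cooking messages as well.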

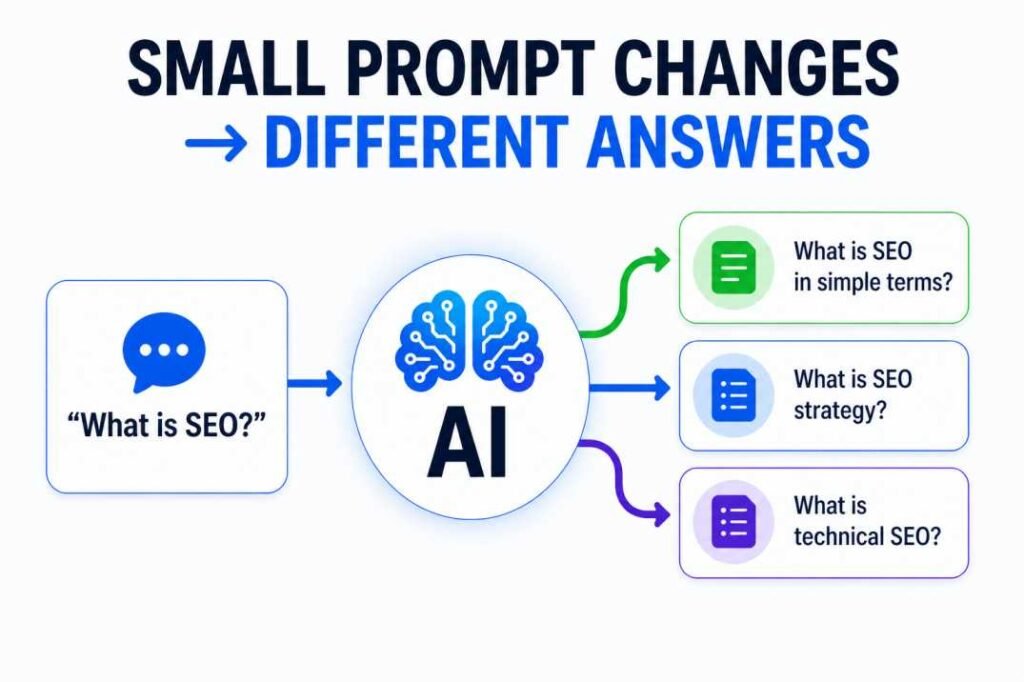

Prompt Sensitivity: Why One Word Matters

AI is extremely sensitive to what we call “Semantic Cues.”

Consider these two prompts:

- “Explain SEO.”

- “Explain SEO simply.”

The second prompt adds a constraint, and that constraint narrows the range of likely outputs. Instead of having, say, 1,000 plausible ways to explain SEO, the model now has only about 10 “simple” ones.

Even near-synonyms matter: “Tell me about SEO” and “Discuss SEO” trigger different associations learned from the model’s training data. One might lead to a list, while the other leads to an essay.

The “Seed” Factor: The Hidden Randomness

Technically, computers aren’t truly “random.” They use a number called a Seed to start their calculations.

In professional AI API settings, if you use the exact same Seed with otherwise identical settings, you get the same (or very nearly the same) answer; some providers only promise this on a best-effort basis. But in tools like ChatGPT, the “Seed” is randomized for every single generation.

That is why clicking “Regenerate” can produce a different answer even if your prompt stays the same.

If your workflow depends on consistent output, avoid regenerating blindly. Instead, refine the prompt with stricter constraints.
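The effect of a seed is easy to demonstrate with Python's own random number generator. This is only an analogy: `generate` is a stand-in that picks words at random, not a language model, and `WORDS` is an arbitrary list chosen for the sketch.

```python
import random

WORDS = ["transforming", "exploring", "growing", "reshaping"]


def generate(seed=None, length=5):
    """Stand-in for a model: picks words at random, optionally from a fixed seed."""
    rng = random.Random(seed)
    return [rng.choice(WORDS) for _ in range(length)]


if __name__ == "__main__":
    print(generate(seed=42))
    print(generate(seed=42))  # same seed, same sequence
    print(generate())         # no seed: may differ from run to run
```

With a fixed seed the "random" choices repeat exactly; without one, each run starts from a different point, which is what happens when you hit “Regenerate.”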

Real-World Experiment: 1 Prompt, 3 Varied Results

I ran the same prompt across multiple sessions to test output variation.

| Attempt | AI Output Variation | Primary “Focus” (Theme) |
| --- | --- | --- |
| #1 | “AI tools are transforming the digital landscape. Here is how you can use them.” | Transformation |
| #2 | “Explore the power of artificial intelligence. Discover the best tools for your workflow.” | Discovery |
| #3 | “The world of AI is growing fast. Let’s look at the software making it happen.” | Growth Speed |

The Verdict: Even though the intent was identical, the AI’s internal “spotlight” landed on a different theme each time (Transformation, Discovery, and Growth).

If this variation is causing your work to fail, you are likely facing Instruction Dilution, one of the primary reasons why multi-step prompts fail: the AI’s “attention” is spread too thin across multiple possibilities.

Failure Case:

In my own workflow as an SEO specialist, I’ve seen how uncontrolled variation can derail a project. For instance, when using AI to categorize 500+ search queries, Attempt 1 gave me professional categories, while Attempt 2—using the exact same list—invented entirely new labels. It created a 3-hour manual cleanup job.

If you are using AI for tasks like data extraction, SEO audits, or structured outputs, variation becomes a problem—not a feature.

Example:

Prompt: “Extract keywords from this article”

Attempt 1 → 10 keywords

Attempt 2 → 14 keywords

Attempt 3 → Different categories

This inconsistency breaks automation workflows.

Decision Rule:

Use high variability (default AI behavior) when:

- Brainstorming

- Writing drafts

- Idea generation

Force low variability when:

- Doing SEO audits

- Extracting structured data

- Writing repeatable workflows

Consistency Benchmark:

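Run the same prompt 3 times, each in a fresh chat, and estimate how much of the output differs between runs (for example, the share of words, items, or labels that change).

If variation is: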

- >20% → unstable

- 10–20% → needs refinement

- <10% → reliable

Never trust AI in workflows without passing this test.
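The benchmark does not say how to measure "variation," so the sketch below makes one assumption explicit: it scores variation as the average word-level dissimilarity between every pair of outputs, using Python's standard `difflib`. Other metrics (changed labels, changed item counts) may suit your task better; the function names are hypothetical.

```python
from difflib import SequenceMatcher
from itertools import combinations


def variation_percent(outputs):
    """Average pairwise dissimilarity of the outputs, as a percentage (0 = identical)."""
    pairs = list(combinations(outputs, 2))
    if not pairs:
        return 0.0
    # Compare word sequences rather than characters (an assumption of this sketch).
    diffs = [1.0 - SequenceMatcher(None, a.split(), b.split()).ratio() for a, b in pairs]
    return 100.0 * sum(diffs) / len(diffs)


def classify(variation):
    """Apply the thresholds from the benchmark above."""
    if variation > 20:
        return "unstable"
    if variation >= 10:
        return "needs refinement"
    return "reliable"
```

To use it, paste the three outputs into a list, call `variation_percent(outputs)`, and pass the result to `classify`.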

This is the core shift: AI isn’t ‘hallucinating’ when it gives you a different answer; it’s simply exploring a different, equally valid path. If you want it to stay on the main road, you have to build the guardrails yourself.


The Golden Rule of Consistency: If your prompt allows the AI to be ‘creative,’ it will be ‘inconsistent.’ To maximize consistency, reduce unnecessary AI choices.

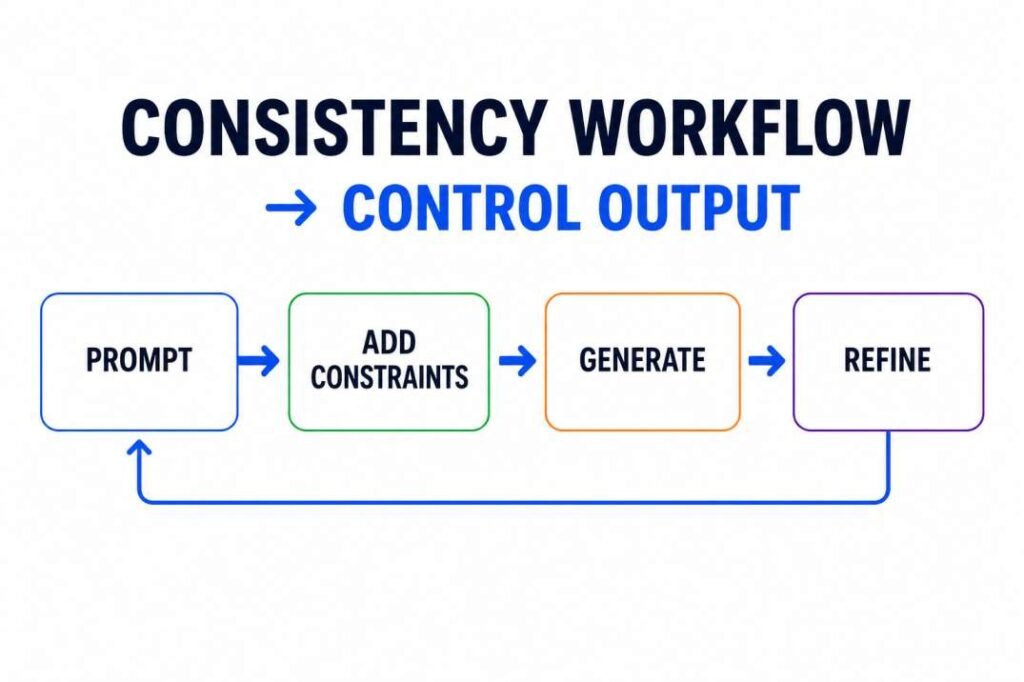

The Consistency System (Quick Model):

To control AI output, you need 4 elements:

- Structure → fixed format

- Constraints → hard limits

- Context → fresh chat

- Validation → review step

Miss one → variation returns.

How to Force Consistency (The Senior Guide)

Deterministic Prompting: If you need output with as little variance as possible in a standard chat interface, use this specific guardrail: ‘Provide your answer as a raw JSON object only. Do not include introductory text. If the data is unavailable, return {"status": "null"}.’ This pushes the LLM to prioritize the schema over linguistic creativity.
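A guardrail like this is only useful if you check that the reply obeys it. Here is a minimal validation sketch using Python's standard `json` module. The field names in `REQUIRED_KEYS` are hypothetical placeholders for whatever schema your prompt defines; only the `{"status": "null"}` sentinel comes from the guardrail above.

```python
import json

REQUIRED_KEYS = {"category", "risk_level"}  # hypothetical schema for illustration


def parse_strict_json(raw, required_keys=REQUIRED_KEYS):
    """Return the parsed object if it matches the expected shape, else None."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None  # the model added prose or broke the format
    if not isinstance(data, dict):
        return None
    if data == {"status": "null"}:
        return data  # the agreed "no data" sentinel from the prompt
    if set(data) != set(required_keys):
        return None  # missing or invented fields
    return data
```

Any reply that comes back as `None` can be rejected or re-requested instead of flowing into the rest of the workflow.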

I don’t trust the AI; I trust my constraints. Over the years, I’ve shifted my approach from ‘asking questions’ to ‘building guardrails.’ As a Senior SEO Specialist, I don’t want “creative” answers for my technical audits; I want consistent ones. Here is my personal checklist for forcing the AI to stop varying its output:

A. Use the “Instruction Anchor”

Instead of letting the AI choose its own format, define it strictly.

- Loose: “Write a summary.”

- Anchored: “Write a 3-bullet point summary. Each bullet must start with a verb.”

B. Define the Persona (Role)

Assigning a role can reduce variability. For example, a “technical SEO auditor” persona tends to produce more structured and repeatable outputs than a general assistant.

C. The 2nd Prompt “Review”

If the AI gives a varied answer, use an AI Workflow step to standardize it:

“Review your previous answer. Rewrite it to strictly follow this specific 3-part structure.”

Try This Now:

Open 3 new chats.

Use this prompt:

“Write a 3-bullet summary of SEO. Each bullet must start with a verb.”

Compare outputs.

If results are still different:

→ Your prompt is still too broad.

→ Add stricter constraints.

Expected Result:

If your prompt is well-structured, output variation should drop significantly.

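Pro-tip for Developers: If you are using the API, set top_p to a lower value alongside Temperature. While Temperature controls the ‘spread’ of the probabilities, top_p limits the ‘pool’ of words the AI can even consider, which further reduces unwanted variation. Note that some providers, OpenAI among them, recommend adjusting one of the two rather than both, so test the combination before you rely on it.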
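The ‘pool’ idea can be sketched as follows. This is a simplified illustration of top_p (nucleus) filtering on the toy probabilities used earlier in this article, not the exact procedure of any specific provider; `top_p_filter` and `PROBS` are names invented for the sketch.

```python
def top_p_filter(probs, top_p):
    """Keep the most likely tokens until their cumulative probability reaches top_p."""
    ranked = sorted(probs.items(), key=lambda item: item[1], reverse=True)
    kept, cumulative = {}, 0.0
    for token, p in ranked:
        kept[token] = p
        cumulative += p
        if cumulative >= top_p:
            break
    total = sum(kept.values())
    # Renormalize so the surviving tokens sum to 1.
    return {token: p / total for token, p in kept.items()}


# Toy distribution for "The sky is..." from earlier in the article.
PROBS = {"Blue": 0.85, "Cloudy": 0.10, "Dark": 0.05}

if __name__ == "__main__":
    print(top_p_filter(PROBS, 0.5))  # only "Blue" survives
    print(top_p_filter(PROBS, 0.9))  # "Blue" and "Cloudy" survive
```

With a low top_p the unlikely words are removed from the pool entirely, so they can never be sampled, no matter what the temperature is.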

Conclusion: Control the Process, Not the Output

The mystery of why AI gives different answers is solved by understanding that AI is a predictor of patterns, not a seeker of truth. If you want the same result every time:

- Lower the complexity: Fewer instructions = fewer choices.

- Use hard constraints: Word counts, specific formats, and forbidden words.

- Use fresh chats: Avoid “Chat Drift.”

AI variation is a powerful tool for brainstorming, but a dangerous enemy for automation. By using the Parent-Child Architecture, you can manage this randomness and turn AI into a reliable partner for your business.

FAQ: Why AI Gives Different Answers

Q: Is it a sign of a bad AI model if the answers change?

No. It is a sign of a “stochastic” (probabilistic) model. Stronger models may appear more varied because they can generate responses in more ways.

Q: Can I turn off randomness in ChatGPT?

Not fully in the standard interface. However, using very strict constraints (e.g., “Answer only ‘Yes’ or ‘No’”) significantly reduces variation.

Analyst Checklist: How to Test for Consistency

[ ] The Multi-Tab Test: Open 3 fresh chats. Run the same prompt. If the results are >20% different, your prompt is too vague.

[ ] The Constraint Check: Does your prompt have at least 2 hard limits (e.g., word count, specific format)?

[ ] The Freshness Check: Did you clear the previous chat context?
