Introduction

AI systems are rarely used as isolated technologies. In most operational environments, they function as components embedded in structured socio-technical processes where software, data pipelines, and human review mechanisms interact. AI workflow integration describes how these components are positioned within broader operational sequences. This perspective shifts attention from individual tools toward system relationships and interaction layers (NIST, 2023; ISO, 2022).

From an educational standpoint, understanding AI workflow integration helps explain how data, models, decision layers, and oversight mechanisms are connected. Although AI is often discussed as a standalone capability, in operational contexts it typically functions inside defined workflow structures that include non-AI systems and human review stages (NIST, 2023).

This article explains how AI tools are positioned within workflows from a structural and process-oriented perspective. It focuses on conceptual understanding, system structure, and contextual usage rather than implementation or tool comparison. The discussion reflects how academic institutions, standards bodies, and policy organizations describe AI systems as part of broader socio-technical processes rather than independent decision-making entities (ISO, 2023; OECD, 2019).

Conceptual Foundations of AI Workflow Integration

Within institutional AI system documentation, AI workflow integration is described as the structural positioning and coordination of AI processing components within broader socio-technical system processes. Lifecycle-oriented institutional frameworks frequently describe workflows as coordination structures that support sequencing, dependency management, and traceability across system components (ISO, 2022; ISO, 2023).

In institutional and research documentation, AI workflow integration is observed across multiple structural workflow categories. These may include model lifecycle workflows associated with model development and monitoring processes, governance-oriented workflows focused on oversight and accountability structures, and operational decision-support workflows where AI outputs are incorporated into broader system environments. These distinctions are analytical classifications that may overlap within complex socio-technical systems (ISO, 2023; NIST, 2023).

Workflow Documentation as Structural Mapping

Workflow documentation is commonly described in institutional standards and governance frameworks as a structural mapping mechanism that represents relationships between data flows, processing components, and oversight stages within socio-technical systems. In this sense, workflow structures support clear documentation, traceability, and accountability mapping across system environments; they do not prescribe operational behavior or system outcomes (ISO, 2023; NIST, 2023).

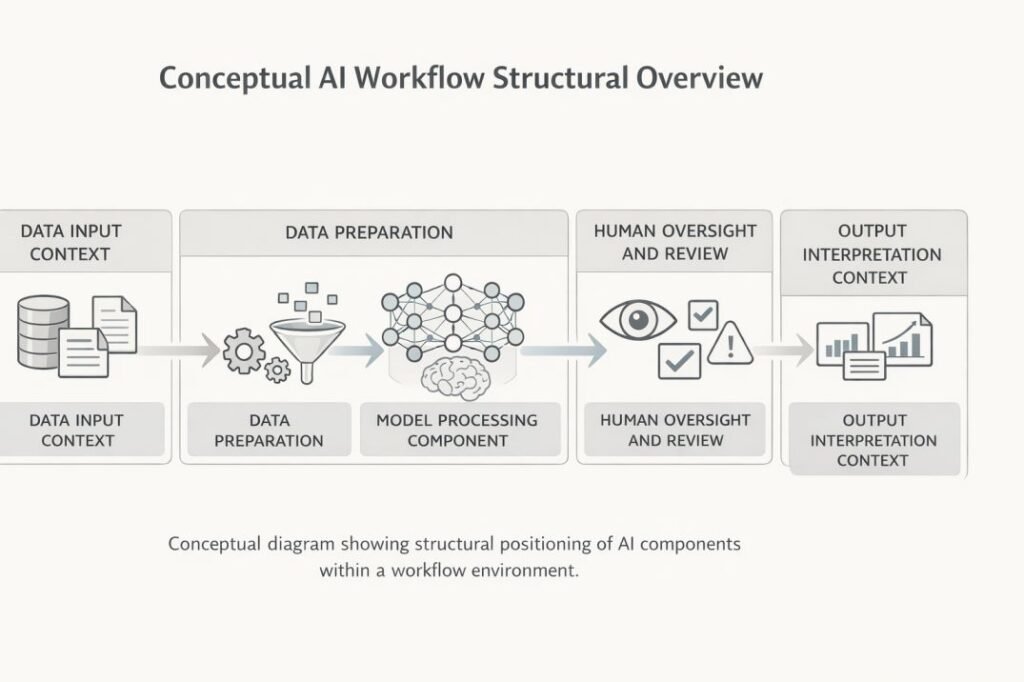

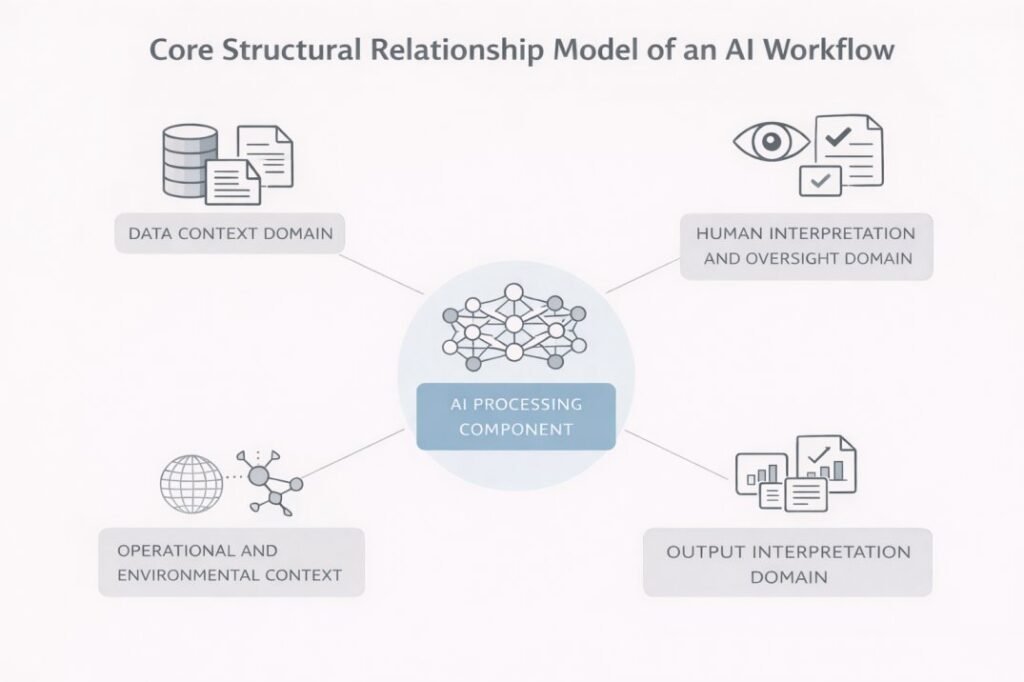

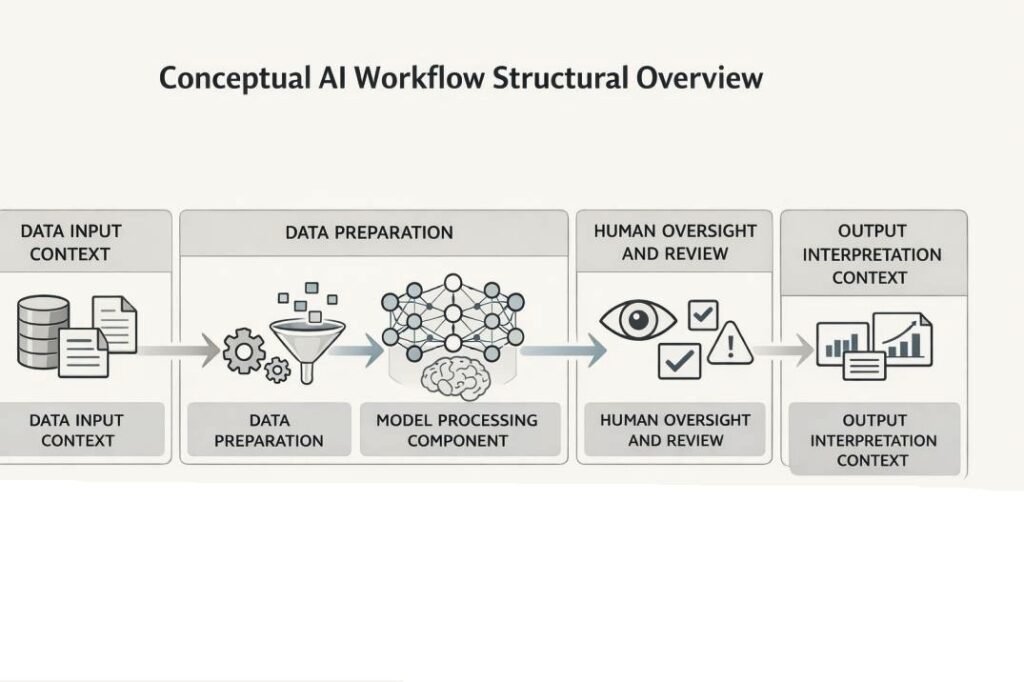

The diagram illustrates how AI processing remains structurally embedded within broader data, oversight, and interpretation domains rather than operating as an isolated decision system.
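As a rough illustration of structural mapping, a workflow can be recorded as a small directed graph whose nodes carry a stage kind. The sketch below is a minimal Python example under stated assumptions: the stage names, the four kinds, and the helper functions are hypothetical and are not taken from any of the cited frameworks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    kind: str  # assumed kinds: "data", "ai", "validation", "human"


# Hypothetical workflow map: stages keyed by name, plus directed edges.
STAGES = {
    s.name: s
    for s in [
        Stage("ingest", "data"),
        Stage("normalize", "data"),
        Stage("classify", "ai"),
        Stage("threshold_check", "validation"),
        Stage("analyst_review", "human"),
    ]
}
EDGES = {
    "ingest": ["normalize"],
    "normalize": ["classify"],
    "classify": ["threshold_check"],
    "threshold_check": ["analyst_review"],
    "analyst_review": [],
}


def downstream(start: str) -> list[str]:
    """Return every stage reachable from `start`, in breadth-first order."""
    seen: list[str] = []
    queue = list(EDGES[start])
    while queue:
        name = queue.pop(0)
        if name not in seen:
            seen.append(name)
            queue.extend(EDGES[name])
    return seen


def oversight_after(stage_name: str) -> list[str]:
    """List the human stages that sit downstream of the given stage."""
    return [n for n in downstream(stage_name) if STAGES[n].kind == "human"]
```

Calling `oversight_after("classify")` on this map returns the single human stage that follows the AI stage. The map describes relationships only; it does not execute or control any of the stages it names.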

AI Tools as Process Components

AI tools are commonly described as functional components inside workflows rather than complete independent systems. A workflow may include data preparation stages, model processing stages, validation stages, and interpretation stages. The positioning of AI tools depends on workflow purpose and information processing requirements (ISO, 2022).

Institutional research frequently describes AI systems as combinations of data inputs, computational models, and human oversight processes rather than standalone technologies. This component-based framing reflects the socio-technical structure of AI deployment environments (NIST, 2023).

AI systems often generate probabilistic outputs based on statistical or pattern-recognition processes. Because of this probabilistic nature, outputs typically require interpretation within a broader decision context rather than being treated as deterministic system conclusions (NIST, 2023).
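To make the probabilistic framing concrete, the following minimal Python sketch converts raw model scores into a probability distribution with a softmax function. The labels and score values are hypothetical and stand in for whatever a real classifier would produce.

```python
import math


def softmax(scores: list[float]) -> list[float]:
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical labels and raw scores from an illustrative document classifier.
labels = ["invoice", "receipt", "other"]
probabilities = softmax([2.0, 1.0, 0.1])

# Rank labels from most to least probable.
ranked = sorted(zip(labels, probabilities), key=lambda pair: pair[1], reverse=True)
```

In this example the top label receives roughly 0.66 of the probability mass, so about a third remains on the alternatives. That residual uncertainty is what a downstream validation or review stage would need to weigh.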

Why Workflow Integration Exists

Workflows exist because many organizational processes require traceability and structured sequencing across system components (ISO, 2023a; NIST, 2023). Because AI outputs are probabilistic, they must be interpreted within a broader decision context rather than treated as final determinations (NIST, 2023). Workflow integration therefore positions these outputs within decision-support environments that include validation, oversight, and contextual review stages (ISO, 2023a; OECD, 2019). This structural placement helps explain how automated processing is coordinated with human responsibility without implying full automation (UNESCO, 2021).

Common Misunderstandings

A common misconception is that AI workflow integration implies full process automation. Governance and ethics frameworks, including international policy guidance on human oversight in AI systems, describe many AI-enabled workflows as hybrid environments that include multiple human interaction and review points. AI processing may support pattern detection, classification, or data transformation tasks, but these functions typically operate within broader system structures that include non-AI software components, monitoring controls, and human evaluation layers (UNESCO, 2021; OECD, 2019; NIST, 2023).

This conceptual framing explains why workflow diagrams frequently emphasize system relationships, data flows, and responsibility boundaries rather than focusing on individual tool capabilities. In institutional documentation, workflows are commonly used to illustrate system coordination and accountability structure rather than computational performance characteristics (ISO, 2023a; NIST, 2023).

Structural Positioning of AI Tools Inside Workflow Architectures

Within documented workflow environments, AI tools are positioned as processing nodes operating within defined input and output constraints. These components transform or interpret data but are typically not positioned as independent decision entities. Workflow documentation often separates processing logic from interpretation responsibility so that each stage remains traceable and accountability stays visible across the workflow (ISO, 2023; OECD, 2019).

Workflow terminology may refer to multiple structural patterns. These may include model lifecycle workflows, decision-support workflows used in organizational review environments, and governance-focused workflows designed to support accountability and audit traceability (ISO, 2023).

Data Interaction Layer

Workflow architectures commonly begin with data acquisition, validation, and transformation stages. Institutional lifecycle documentation describes data preparation as part of system input assurance processes designed to support consistency before data enters AI processing components. In many documented workflow environments, AI processing components are positioned after data normalization or preprocessing stages so that inputs are consistently formatted and their preparation is traceable.
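A preprocessing stage of this kind might look like the following minimal Python sketch. The required field names (`id`, `text`), the normalization rules, and the `prep_steps` trace field are all assumptions made for illustration.

```python
def normalize_record(raw: dict) -> dict:
    """Validate required fields and return a consistently formatted record.

    The required fields and the "prep_steps" trace are hypothetical.
    """
    missing = [key for key in ("id", "text") if key not in raw]
    if missing:
        raise ValueError(f"missing fields: {missing}")
    return {
        "id": str(raw["id"]),
        # Collapse runs of whitespace and lowercase the text.
        "text": " ".join(str(raw["text"]).split()).lower(),
        # Record which preparation steps were applied, for traceability.
        "prep_steps": ["validated", "normalized"],
    }
```

Records that fail validation raise an error before they reach any model, and records that pass carry a short trace of how they were prepared.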

AI Processing Layer

Within workflow architectures, the AI processing layer is typically defined as the stage where model inference, classification, or pattern recognition occurs. Institutional terminology standards, including ISO/IEC 22989:2022, describe AI processing as transformation of input data into outputs based on model structure and learned patterns. In workflow environments, this stage is generally documented as part of a sequential processing structure rather than as a final decision endpoint.

Output Handling and Routing

Outputs generated by AI processing components are typically routed to additional system layers for storage, validation, reporting, or contextual integration. Institutional lifecycle and monitoring documentation describes output routing as part of system observability and post-processing traceability structures. Workflow architectures may include validation checkpoints where outputs are evaluated against system rules, thresholds, or monitoring conditions before progressing to downstream workflow stages (ISO, 2023b; NIST, 2023).
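A validation checkpoint with threshold-based routing can be sketched as follows. This is a minimal Python illustration under stated assumptions: the `ModelOutput` fields, the route names, and the threshold values 0.9 and 0.5 are hypothetical and would be set by each organization's own rules.

```python
from dataclasses import dataclass


@dataclass
class ModelOutput:
    record_id: str
    label: str
    confidence: float  # model-reported score, expected in [0, 1]


def route(
    output: ModelOutput,
    forward_threshold: float = 0.9,  # assumed value, for illustration only
    review_threshold: float = 0.5,   # assumed value, for illustration only
) -> str:
    """Return the name of the next workflow stage for an AI output."""
    if not 0.0 <= output.confidence <= 1.0:
        return "hold"  # malformed score: keep it out of downstream stages
    if output.confidence >= forward_threshold:
        return "forward"
    if output.confidence >= review_threshold:
        return "human_review"
    return "hold"
```

The checkpoint only decides where an output goes next. Even the `forward` route hands the output to a later stage, such as storage or reporting, rather than treating it as a final determination.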

Human Interaction Points

Human interaction stages are commonly embedded within workflow architectures as review, interpretation, or contextual evaluation stages. Governance and oversight documentation frequently describes these stages as part of system accountability and decision context validation structures. Their presence reflects recognition that AI outputs may require domain-specific interpretation or situational context before further system or organizational action occurs (UNESCO, 2021; ISO, 2023a).

Review and Interpretation Sub-layer

This sub-layer is used to clarify where human or system-level contextual judgment intersects with AI-generated outputs. Institutional governance and risk documentation frequently highlights interpretation stages as part of responsible system design, particularly in environments where AI outputs inform downstream processes or decisions.
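One way to make a review point visible in system records is to store the reviewer's judgment next to the model output it concerns. The Python sketch below assumes a hypothetical `ReviewRecord` structure; none of its field names come from the cited frameworks.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ReviewRecord:
    """Hypothetical record pairing a model output with a human judgment."""

    record_id: str
    model_label: str
    reviewer: str
    final_label: str
    note: str = ""
    reviewed_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    @property
    def overridden(self) -> bool:
        """True when the reviewer's label differs from the model's label."""
        return self.final_label != self.model_label


review = ReviewRecord(
    record_id="42",
    model_label="invoice",
    reviewer="analyst_a",
    final_label="receipt",
    note="Layout matches a till receipt, not an invoice.",
)
```

Keeping both labels and the reviewer's note in one record preserves who made the final call and why, without altering the model output itself.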

Structural positioning is used to clarify system boundaries, component relationships, and responsibility mapping across workflow environments without implying full automation or removal of human involvement in system processes.

AI Workflow Integration Across Environments

Within institutional deployment and lifecycle documentation, AI workflow integration is described as occurring in environments where automated data processing must operate alongside existing operational systems. Risk management and governance-oriented frameworks, including deployment context descriptions in the NIST Artificial Intelligence Risk Management Framework (2023), describe workflows as structures used to document how AI processing interacts with legacy software systems, human review procedures, and organizational data governance controls. In this context, workflows do not replace existing systems but instead document relationships between system components and responsibility structures.

In many institutional environments, workflow documentation functions as a coordination layer that connects automated processing stages with review, monitoring, and accountability mechanisms across system environments.

Organizational Contexts

Within organizational system environments, workflows are commonly used to document how AI processing components interact with databases, software platforms, and operational infrastructure layers. Standards documentation describes AI components as analytical or classification stages positioned within broader digital system environments rather than as standalone operational systems.

Research and Documentation Contexts

Within research and technical documentation environments, workflows are used to describe analytical pipelines, experimental data processing sequences, and model interaction structures. These workflow descriptions are used to document data transformations, model inference processes, and evaluation structures in a structured and traceable format. Institutional research guidance frequently uses workflow representations to support reproducibility and documentation clarity across experimental and analytical environments.

Governance and Policy Contexts

Policy and standards organizations frequently use workflow models to describe system accountability structures and oversight boundaries across AI-enabled system environments. International governance frameworks, including accountability and human oversight guidance described in OECD and UNESCO AI policy materials, describe workflow diagrams as tools for illustrating responsibility distribution across technical processing layers and human oversight structures (OECD, 2019; UNESCO, 2021).

Cross-Domain Variability

Institutional documentation recognizes that workflow structures vary across operational sectors and system environments. Some workflow environments emphasize data monitoring and system observability, while others emphasize document processing, classification, or pattern recognition structures. The workflow concept is used to describe structural relationships across system components rather than domain-specific operational outcomes.

This contextual variation reflects how AI processing components are positioned differently depending on system requirements, organizational objectives, and regulatory governance environments.

Limitations, Uncertainty, and Structural Constraints in AI Workflow Integration

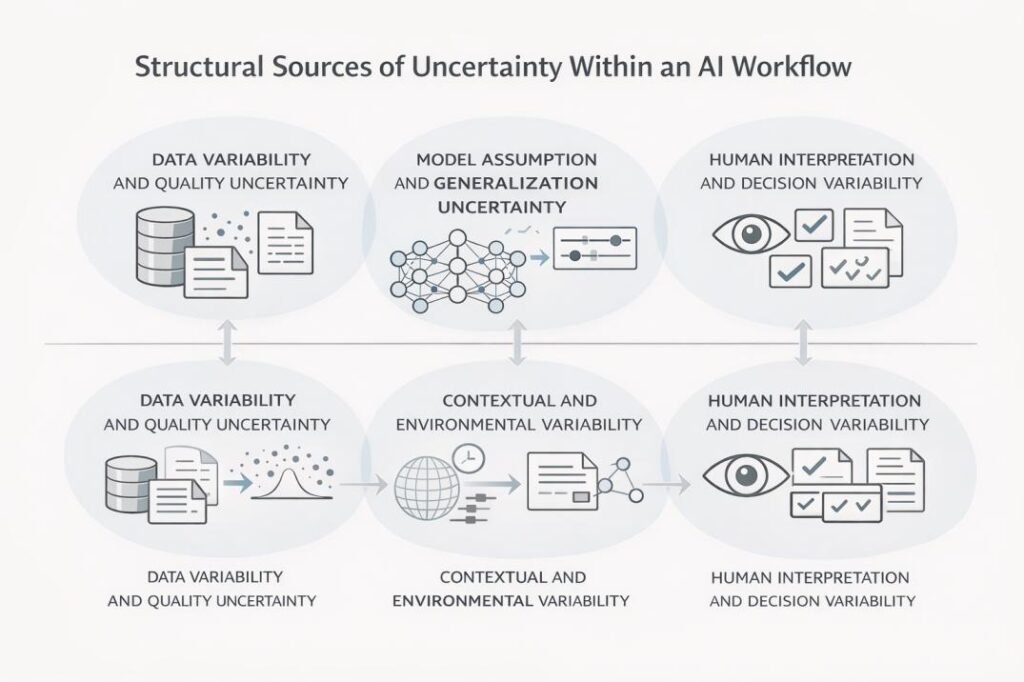

AI workflows are designed to document system structure rather than guarantee output consistency. Variability may originate from data quality differences, model generalization limits, or environmental context changes. Institutional risk frameworks often treat workflow documentation as a transparency mechanism rather than a direct control mechanism for uncertainty (NIST, 2023).

AI models operate based on statistical or pattern-based assumptions. Workflow integration helps contextualize outputs but does not remove uncertainty associated with probabilistic systems (ISO, 2022; NIST, 2023).

Institutional guidance frequently notes that workflow documentation improves transparency and traceability but does not create deterministic system control. These limitations are considered inherent characteristics of AI-assisted systems rather than design failures (ISO, 2023; NIST, 2023).

Similar observations are reflected in European Commission Joint Research Centre analyses, which describe AI system performance as dependent on contextual, data, and environmental variability across deployment environments (EC JRC, 2020).

Data Dependency Constraints

Institutional documentation consistently describes AI system behavior as dependent on input data structure, quality, and representativeness. Variability across data sources may influence downstream workflow processing stages. Workflow documentation is used to identify where data dependencies exist within system architectures and to support monitoring and traceability of data-related variability across workflow stages (ISO, 2022; NIST, 2023).
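A simple monitoring aid for data dependencies is to measure how often each input field is missing in a batch. The Python sketch below is illustrative only: the field names and sample records are hypothetical, and missing-value rate is just one of many possible data quality indicators.

```python
def missing_rates(records: list[dict], fields: list[str]) -> dict[str, float]:
    """Return, per field, the fraction of records where it is absent or empty."""
    total = len(records)
    if total == 0:
        return {name: 0.0 for name in fields}
    return {
        name: sum(1 for r in records if r.get(name) in (None, "")) / total
        for name in fields
    }


# Hypothetical batch of input records.
batch = [
    {"amount": 10, "vendor": None},
    {"amount": None, "vendor": None},
    {"amount": 30, "vendor": "Acme"},
    {"amount": 40, "vendor": ""},
]
rates = missing_rates(batch, ["amount", "vendor"])
```

Logging such rates per batch and per source shows where a downstream stage depends on a field that is frequently incomplete. It makes the variability visible but does not remove it.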

Model Assumption Limitations

AI models operate using statistical inference, probabilistic pattern recognition, or learned data relationships. Institutional terminology standards and risk documentation describe model outputs as influenced by training data characteristics and model design assumptions. Workflow integration may support contextual interpretation of model outputs within broader system environments but is not described as removing uncertainty associated with probabilistic system behavior.

Structural Constraint Factors

Workflow architectures are often influenced by organizational governance structures, regulatory compliance requirements, and infrastructure capabilities. Institutional governance and lifecycle documentation describes these constraints as shaping how AI processing components are positioned and monitored across system environments. These structural factors may influence workflow design and documentation practices across different deployment contexts.

Documentation vs. Control

Institutional governance and risk documentation frequently distinguishes between documentation transparency and system control. Documenting workflow structure is described as supporting transparency, traceability, and accountability mapping rather than enforcing deterministic system behavior. The resulting limits on predictability are described as inherent properties of AI-assisted socio-technical systems rather than as design failures.

As a result, workflow structures are commonly described as explanatory documentation mechanisms rather than as enforcement mechanisms for deterministic or fully predictable system behavior.

Institutional research, including technical analyses from the European Commission Joint Research Centre, describes AI system behavior as influenced by data variability, environmental conditions, and deployment context differences across operational environments (EC JRC, 2020).

Interpretation, Oversight, and Boundary Conditions in Integrated AI Workflows

Human oversight stages are commonly positioned at interpretation or validation boundaries rather than inside automated processing stages. These stages are designed to introduce contextual judgment, regulatory review, or exception handling when required. Oversight does not replace automated processing but exists as a structural accountability layer. In governance frameworks, this layer is treated as part of system accountability documentation structures (UNESCO, 2021; OECD, 2019).

Oversight boundaries describe where automated system processing responsibility transitions to human or organizational review. Institutional frameworks typically describe oversight as a structural governance safeguard rather than as a corrective mechanism applied directly to model outputs (ISO, 2023).

Workflow documentation commonly identifies where interpretation occurs but does not standardize interpretation methods, reflecting the domain-specific nature of contextual evaluation (UNESCO, 2021).

Interpretation Layer

AI outputs are often evaluated within domain-specific or operational contexts. Institutional documentation typically identifies where interpretation stages sit within system workflows but does not standardize how interpretation must be performed. Interpretation is generally described as a contextual evaluation stage that follows automated inference and precedes any downstream system or organizational action.

Conceptual Oversight Boundary

Institutional governance frameworks describe system oversight boundaries as points where automated processing responsibility transitions to human, organizational, or system-level accountability structures. In this article, the term oversight boundary is used as a conceptual description of responsibility transition points rather than as a formal standardized technical term. Governance documentation describes oversight as a structural safeguard supporting accountability and system transparency rather than as a corrective extension of model processing.
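If each executed stage is logged with the party responsible for it, responsibility transition points can be located mechanically. The following Python sketch assumes a hypothetical trace format of `(stage, owner)` pairs with owners labelled `"automated"` or `"human"`; it illustrates the concept and is not a standardized method.

```python
def oversight_boundaries(trace: list[tuple[str, str]]) -> list[tuple[str, str]]:
    """Return (from_stage, to_stage) pairs where ownership passes
    from an automated stage to a human stage."""
    return [
        (prev_stage, stage)
        for (prev_stage, prev_owner), (stage, owner) in zip(trace, trace[1:])
        if prev_owner == "automated" and owner == "human"
    ]


# Hypothetical execution trace: (stage name, responsible party).
trace = [
    ("normalize", "automated"),
    ("classify", "automated"),
    ("analyst_review", "human"),
    ("archive", "automated"),
]
boundaries = oversight_boundaries(trace)
```

For this trace the only boundary lies between `classify` and `analyst_review`. The function reports where responsibility changes hands and says nothing about how the review itself is carried out.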

Contextual Judgment Role

Institutional governance and ethics documentation recognizes that human reviewers may evaluate contextual factors that are not fully represented in training data or automated inference processes. Workflow documentation is used to make these review points visible within system structure and responsibility mapping across workflow environments.

Boundary Definition Importance

Institutional governance frameworks emphasize clear definition of system responsibility boundaries across automated and human system components. Workflow diagrams are often used to document these boundaries in order to clarify system roles, accountability structures, and review responsibilities across workflow stages.

Interpretation and oversight structures are described in institutional documentation as mechanisms for maintaining accountability and transparency across socio-technical system environments. These structures are not described as indicating replacement of human decision-making authority by automated system processing.

Conclusion

AI workflow integration provides a structural framework for understanding how AI tools are positioned inside broader process environments. This perspective explains how AI components interact with data systems, software layers, and human oversight mechanisms inside socio-technical system structures (NIST, 2023).

Institutional and academic literature frequently presents AI workflows as documentation structures that support transparency and traceability across system stages while acknowledging uncertainty and contextual dependency (ISO, 2023; OECD, 2019).

From an educational perspective, AI workflow integration is best understood as a structural and descriptive framework explaining how AI tools operate within organized technical, organizational, and governance environments rather than as mechanisms for guaranteeing deterministic outcomes (NIST, 2023; UNESCO, 2021).

References

- European Commission Joint Research Centre (2020) Trustworthy Artificial Intelligence: From Principles to Practice. Luxembourg: Publications Office of the European Union. Available at: https://publications.jrc.ec.europa.eu/repository/handle/JRC120399 (Accessed: 8 February 2026).

- International Organization for Standardization (ISO) and International Electrotechnical Commission (IEC) (2022) ISO/IEC 22989:2022 Artificial Intelligence — Concepts and Terminology. Geneva: ISO. Available at: https://www.iso.org/standard/74296.html (Accessed: 8 February 2026).

- International Organization for Standardization (ISO) and International Electrotechnical Commission (IEC) (2023a) ISO/IEC 42001:2023 Artificial Intelligence — Management System. Geneva: ISO. Available at: https://www.iso.org/standard/81230.html (Accessed: 8 February 2026).

- International Organization for Standardization (ISO) and International Electrotechnical Commission (IEC) (2023b) ISO/IEC 5338:2023 Artificial Intelligence — AI System Life Cycle Processes. Geneva: ISO. Available at: https://www.iso.org/standard/81183.html (Accessed: 8 February 2026).

- National Institute of Standards and Technology (NIST) (2023) Artificial Intelligence Risk Management Framework (AI RMF 1.0). Gaithersburg, MD: U.S. Department of Commerce. Available at: https://nvlpubs.nist.gov/nistpubs/ai/NIST.AI.100-1.pdf (Accessed: 8 February 2026).

- National Institute of Standards and Technology (NIST) (2022) Towards a Standard for Identifying and Managing Bias in Artificial Intelligence (NIST Special Publication 1270). Gaithersburg, MD: U.S. Department of Commerce. Available at: https://nvlpubs.nist.gov/nistpubs/SpecialPublications/NIST.SP.1270.pdf (Accessed: 8 February 2026).

- Organisation for Economic Co-operation and Development (OECD) (2019) OECD Principles on Artificial Intelligence. Paris: OECD. Available at: https://oecd.ai/en/ai-principles (Accessed: 8 February 2026).

- United Nations Educational, Scientific and Cultural Organization (UNESCO) (2021) Recommendation on the Ethics of Artificial Intelligence. Paris: UNESCO. Available at: https://unesdoc.unesco.org/ark:/48223/pf0000380455 (Accessed: 8 February 2026).

- Amershi, S. et al. (2019) ‘Software Engineering for Machine Learning: A Case Study’, Proceedings of ICSE. Available at: https://ieeexplore.ieee.org/abstract/document/8804457