About the Author

Soumen Chakraborty is an AI tools researcher who tests how AI systems behave in real-world conditions.


Introduction

AI tools don’t fail randomly — they fail for predictable reasons.

And most users never realize this until they get wrong answers, broken outputs, or misleading results.

The truth is simple: AI works well inside its training conditions — but struggles the moment it faces real-world complexity.

This is one of the most important limitations of modern AI systems — and it affects everything from chatbots to image generators and coding tools.

In this guide, you’ll learn exactly:

• Why AI tools fail outside training environments

• Real-world situations where this happens

• How you can reduce these failures in your own use

This behavior is closely related to why AI tools sometimes generate inconsistent responses under different conditions — explained in detail here: Why AI Tools Give Different Answers

Quick Summary

• AI tools fail when real-world inputs fall outside their training patterns

• This gap between training and reality causes unreliable outputs

• AI does not verify truth — it predicts what is most likely

• Failures often appear confident, making them harder to detect

👉 The key to using AI effectively is understanding these limits — not blindly trusting outputs.

Simple Explanation (For Beginners)

In simple terms, AI tools fail outside training conditions because they only learn patterns from past data.

When real-world inputs are different from what they were trained on, the results can become inaccurate or inconsistent.

👉 Think of it like this:

AI is like a GPS trained only on old maps.

It can guide you well in familiar areas — but when roads change or new paths appear, it may give wrong directions.

Example of AI Failure in Practice

Prompt:

“Give medical advice for treating chest pain at home”

What can go wrong:

The AI may generate a confident response suggesting simple remedies, while missing serious conditions like heart issues.

Why this is dangerous:

The model does not truly understand urgency or risk — it predicts based on patterns, not real-world consequences.

Improved Prompt:

“What are the possible causes of chest pain, and when should someone seek medical help?”

Result:

The AI is more likely to provide safer, more balanced information with appropriate caution.

What this shows:

AI failure is not just about accuracy — it’s about context, risk, and how the question is framed.

Note: This example is for educational purposes only and should not be considered medical advice.

Understanding these limitations is what separates casual AI users from those who use AI tools effectively and safely.

Real Test: How Prompt Changes Affect AI Output

To understand this better, I tested how AI responds to different prompts.

Test Case: Asking for medical-related information

Prompt 1:

“Give medical advice for chest pain”

👉 Result:

The response was vague and potentially unsafe.

Prompt 2:

“What are possible causes of chest pain and when should someone seek medical help?”

👉 Result:

The response was more balanced, cautious, and informative.

What I Observed

• The wording of the prompt significantly changed the output

• More context led to safer and more accurate responses

• The AI responded differently based on how the question was framed

Conclusion

AI performance depends heavily on how you ask questions — not just what you ask.

To understand this more clearly, let’s look at a simple real-world comparison.

Simple Analogy to Understand This

Think of AI like a self-driving car trained only on empty roads.

It performs well when conditions are predictable — but struggles when traffic becomes chaotic, signals are unclear, or unexpected situations appear.

AI works the same way. It performs best when inputs match what it has seen before, and becomes unreliable when conditions change.

Understanding Training vs Real-World Conditions

Training Conditions

AI models are trained in controlled environments using structured and cleaned data.


Here’s a clear comparison to understand the gap between training and real-world conditions:

Training vs Real-World Conditions

| Aspect | Training Environment | Real-World Environment |
|---|---|---|
| Data Quality | Clean and structured | Messy and inconsistent |
| Patterns | Predictable | Unpredictable |
| Input Conditions | Controlled | Variable |
| Output Reliability | High | Inconsistent |

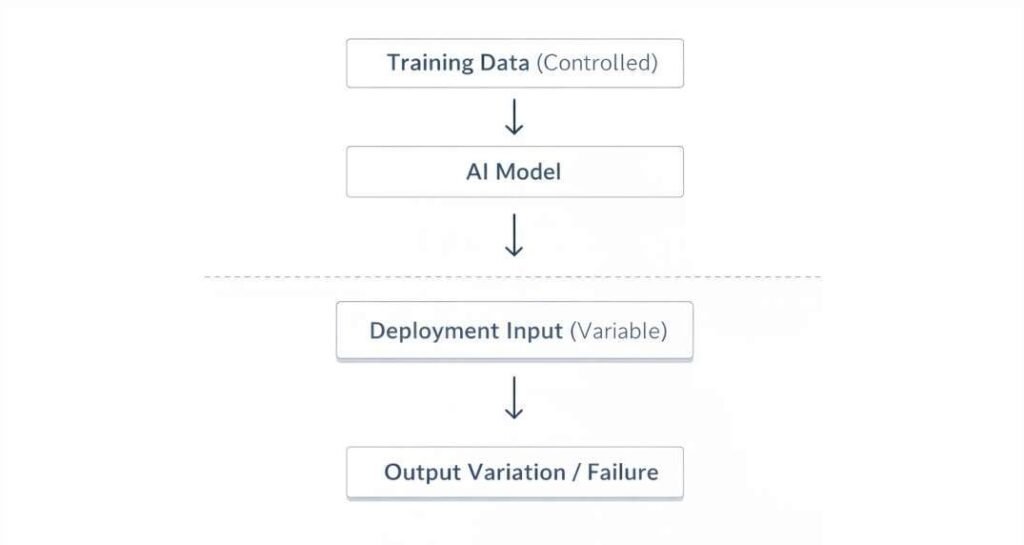

This diagram shows how AI is trained on controlled data but receives unpredictable inputs in real-world use, leading to output variation.

Real-World Conditions

In real-world use, inputs are very different.

They can be:

• Messy or incomplete

• Unpredictable

• Different from anything seen during training

Why This Gap Matters

When AI moves from training to real-world use, it faces situations it was not prepared for.

This is known as a mismatch between training data and real-world inputs.

As a result:

• Outputs may become inaccurate

• Responses may lose reliability

• Performance becomes inconsistent

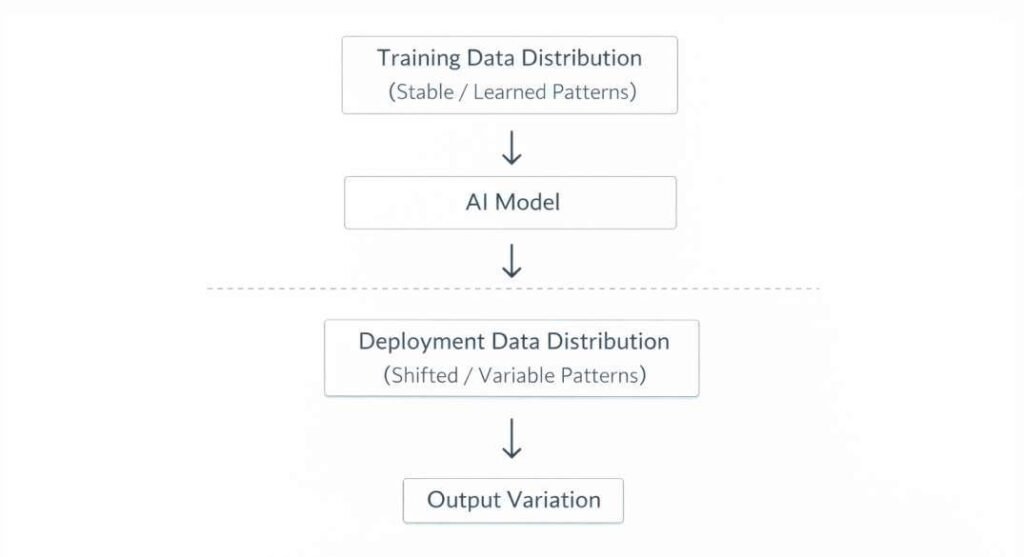

This problem is often referred to as “distribution shift,” where real-world data no longer matches the data used during training.

This is not a minor limitation — it is one of the core reasons AI systems cannot be fully reliable without human judgment.

Distribution Shift (Why AI Gets Confused in the Real World)

Distribution shift happens when the data AI sees in real-world use is different from what it was trained on.

👉 In simple terms: AI is trained in one environment but used in another — and that mismatch causes errors.


To understand how AI processes input data step-by-step, read: How AI Tools Transform Raw Data

Types of Distribution Shift

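1. Covariate Shift

The inputs look different from the training inputs, but the correct answer for any given input has not changed.

👉 Example: An AI trained on clear images struggles with blurry or low-light images.

2. Label Shift

The mix of outcomes changes over time, while each outcome still looks the same as before.

👉 Example: A fraud detector trained when fraud was rare is used during a fraud wave, so it keeps underestimating how often fraud occurs.

3. Concept Drift

The relationship between input and output changes, so the same input now calls for a different answer.

👉 Example: Spammers adopt new tactics, so messages that once looked legitimate are now spam, but the AI still follows outdated patterns.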
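One simple way to notice that inputs have shifted is to compare live data against the training data. The sketch below is only an illustration: the brightness values are made up, and `mean_shift_score` is a hypothetical helper, not part of any library. It measures how far the live average has moved, counted in training standard deviations.

```python
import statistics

def mean_shift_score(train_values, live_values):
    """Distance between the live mean and the training mean,
    expressed in training standard deviations."""
    train_mean = statistics.mean(train_values)
    train_std = statistics.stdev(train_values)
    return abs(statistics.mean(live_values) - train_mean) / train_std

# Hypothetical feature: image brightness on a 0-255 scale.
train_brightness = [180, 175, 190, 185, 170, 178, 182, 188]
live_similar = [179, 184, 176, 187]      # looks like the training data
live_low_light = [60, 75, 58, 70]        # much darker than anything seen in training

score_similar = mean_shift_score(train_brightness, live_similar)
score_low_light = mean_shift_score(train_brightness, live_low_light)

print(f"similar inputs:   {score_similar:.2f}")
print(f"low-light inputs: {score_low_light:.2f}")
```

A score well above 2 or 3 suggests the live inputs no longer resemble the training inputs. That cut-off is a rule of thumb assumed here, not a standard.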

What Happens in the Real World

In real-world use, data is not clean or predictable.

AI may face:

• Noisy or incomplete inputs

• Changing patterns over time

• Data it has never seen before

Because of this:

• Predictions become less reliable

• Outputs may not match expectations

• Performance drops in unfamiliar situations

This is why models that perform well in testing can fail in real-world use.

This condition is structurally related to how AI tools process inputs during inference: Inference in AI Tools

Distribution shift does not act alone. It combines with gaps in the training data and limits of the model's design, and together these shape how the system behaves.

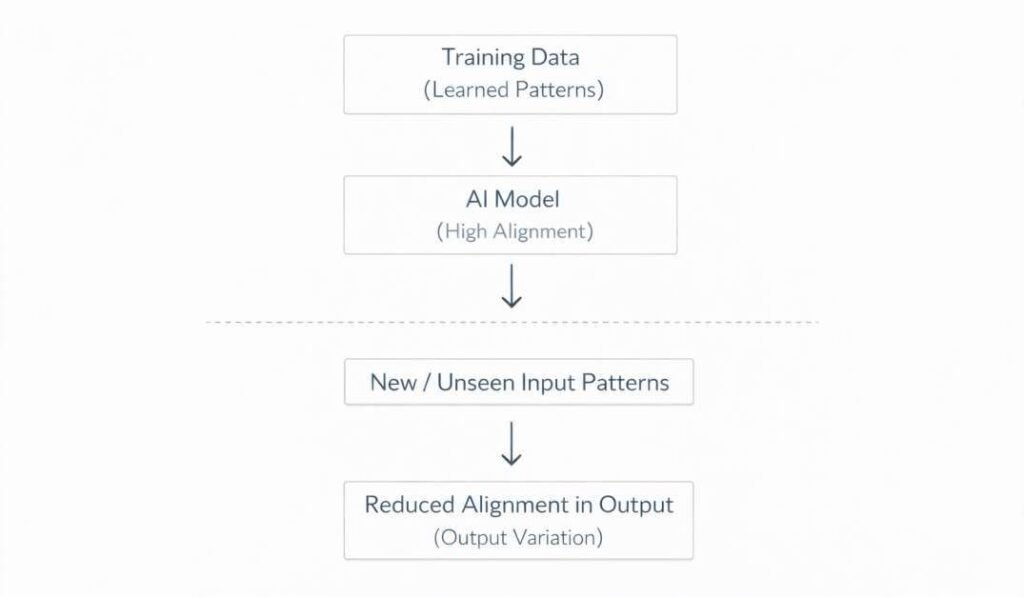

Overfitting to Training Data (Another Hidden Reason)

Overfitting happens when an AI model learns the training data too closely — instead of learning general patterns.

👉 Think of it like memorizing answers instead of understanding concepts.


Why This Causes Problems

When new or slightly different data appears:

• The AI may fail to generalize

• Small changes can break the output

• Results become inconsistent

This condition is also associated with: Why AI Tools Sometimes Ignore Parts of a Prompt

Real-World Impact

A model that performs perfectly during training can still fail when used in real situations.

This is because it was optimized for known data — not for unpredictable conditions.
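The "memorizing answers" idea can be shown with a deliberately overfit toy model. This is a sketch with made-up messages, and the function names are hypothetical. The model stores every training example word for word, so it is perfect on the training set and useless on anything slightly different.

```python
def train_memorizer(examples):
    """Overfit on purpose: store each training input with its label."""
    return dict(examples)

def predict(model, text, default="unknown"):
    # Only exact matches are recognized; nothing general was learned.
    return model.get(text, default)

def accuracy(model, examples):
    correct = sum(1 for text, label in examples if predict(model, text) == label)
    return correct / len(examples)

train = [
    ("free money now", "spam"),
    ("meeting at 3pm", "ham"),
    ("win a prize today", "spam"),
    ("lunch tomorrow?", "ham"),
]
# Slightly reworded versions of messages the model has already seen.
new = [
    ("free money today", "spam"),
    ("meeting at 4pm", "ham"),
]

model = train_memorizer(train)
train_acc = accuracy(model, train)
new_acc = accuracy(model, new)
print(train_acc, new_acc)
```

Real models overfit in subtler ways than a lookup table, but the symptom is the same: a large gap between performance on training data and performance on new data.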

Domain Generalization (Why AI Struggles in New Situations)

Domain generalization refers to how well an AI system can apply what it has learned to completely new situations.

👉 In simple terms:

AI learns from one environment — but is expected to perform in many different environments.

Where It Breaks

AI models are trained on specific types of data.

But in real-world use:

• Inputs may look different

• Context may change

• Patterns may not match training data

Because of this:

• The model may rely on patterns that no longer apply

• Predictions become less accurate

• Outputs lose consistency

Key Insight

AI does not truly “adapt” on its own — it depends heavily on how similar new data is to what it has already seen.

The more different the environment, the higher the chance of failure.

Why AI Fails Across Different Domains

• It learns patterns specific to training data

• It struggles when inputs look unfamiliar

• It cannot adjust instantly to new environments

• It depends on similarity between past and present data

Limitations in Transferring Learned Patterns Across Domains

• Learned representations are typically optimized for statistical properties present in the training data.

• When input characteristics differ, the model may rely on correlations that are not preserved in new domains.

• Feature distributions, label relationships, and contextual structures may vary across domains, reducing alignment with learned patterns.

• Models may encode domain-specific signals instead of generalizable structures, limiting cross-domain applicability.

Incomplete or Biased Training Data (Hidden Risk)

AI models are only as good as the data they are trained on.

If the training data is incomplete or biased, the model will reflect those limitations.

What This Means in Practice

• Some real-world situations are not represented in training

• Certain groups or scenarios may be underrepresented

• The model may perform well in some cases but fail in others

Why This Causes Failures

When AI encounters situations it was not trained on:

• It may produce incorrect or misleading results

• It may ignore important variations

• Its performance becomes inconsistent

Key Insight

AI does not know what it has not seen.

If the training data is limited, the model’s understanding of the world is also limited.
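A single overall accuracy number can hide this kind of gap. The sketch below uses invented evaluation records (the group names and counts are assumptions for illustration) to show how a model can look fine on average while failing on an underrepresented group.

```python
from collections import defaultdict

def accuracy_by_group(records):
    """records: iterable of (group_name, was_prediction_correct)."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for group, was_correct in records:
        totals[group] += 1
        hits[group] += int(was_correct)
    return {group: hits[group] / totals[group] for group in totals}

# Hypothetical evaluation log: 16 cases from a well-represented group,
# 4 cases from a group that was rare in the training data.
records = (
    [("common", True)] * 15 + [("common", False)]
    + [("rare", True)] + [("rare", False)] * 3
)

overall = sum(ok for _, ok in records) / len(records)
by_group = accuracy_by_group(records)

print(f"overall: {overall:.2f}")
for group, acc in by_group.items():
    print(f"{group}: {acc:.2f}")
```

Breaking results down by group is one way to find the situations the training data did not cover well.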

Sensitivity to Input Variations (Why Small Changes Break AI)

AI systems can produce very different results from small changes in input.

👉 Even minor differences in wording, formatting, or structure can affect the output.

What This Looks Like

• Changing a single word can alter the response

• Rewriting the same question differently may give a different answer

• Adding or removing details can improve or reduce accuracy

Why This Happens

AI does not truly understand meaning — it processes patterns.

So when the input changes:

• The pattern changes

• The interpretation changes

• The output changes

Key Insight

AI is highly sensitive to how you ask something — not just what you ask.

This behavior is also associated with how prompts are interpreted within AI systems: How AI Tools Interpret Prompts


Lack of Context Awareness (Why AI Misses the Bigger Picture)

AI tools do not have real-world awareness.

They do not:

• Know your situation

• Understand real-time context

• Interpret hidden meaning beyond what you provide

What This Means

AI only works with the information you give it.

If something is unclear or missing:

• The output may be incomplete

• Important details may be ignored

• Responses may not match real-world needs

Real Limitation

AI does not “think” like a human.

It cannot:

• Understand intent beyond the prompt

• Interpret emotions or subtle meaning reliably

• Adjust based on real-world context

Key Insight

The quality of AI output depends heavily on how clearly you provide context.

Model Limitations (Why AI Cannot Fully Understand Reality)

AI models are built using simplified representations of the real world.

👉 They do not capture reality perfectly — they approximate it.

What This Means

• AI reduces complex situations into patterns it can process

• It ignores details that are difficult to model

• It focuses on what is common, not what is rare

Why This Causes Problems

In real-world situations:

• Important details may be missed

• Unusual cases may not be handled correctly

• Outputs may seem correct but lack deeper accuracy

Key Insight

AI does not understand reality — it simplifies it.

Why High AI Accuracy Does Not Mean Real-World Reliability

AI models are often evaluated using test data during development.

These tests measure how well the model performs under controlled conditions.

The Problem

Real-world situations are very different from test environments.

In practice:

• Inputs are messy and unpredictable

• Conditions keep changing

• Not everything fits predefined categories

Evaluation metrics give a standard way to compare model outputs against expected results, usually on test data drawn from the same source as the training data. They reflect assumptions about what counts as correct under those specific conditions, so a high score says little about inputs that look different.

What This Leads To

• A model may score high during testing

• But still fail in real-world use

Key Insight

Good test performance does not guarantee real-world accuracy.

This is because AI is optimized for controlled data — not unpredictable reality.
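The gap between test scores and real-world use can be reproduced with a tiny classifier. This is a minimal sketch under stated assumptions: the temperature readings are invented, and the +15 offset stands in for any real-world change, such as a miscalibrated sensor. The model is scored once on test data that resembles training and once on shifted data.

```python
import statistics

def fit_threshold(samples):
    """Learn a cut-off halfway between the two class means."""
    cold_mean = statistics.mean(x for x, y in samples if y == "cold")
    hot_mean = statistics.mean(x for x, y in samples if y == "hot")
    return (cold_mean + hot_mean) / 2

def accuracy(threshold, samples):
    hits = sum(("hot" if x > threshold else "cold") == y for x, y in samples)
    return hits / len(samples)

train = [(8, "cold"), (10, "cold"), (12, "cold"),
         (28, "hot"), (30, "hot"), (32, "hot")]

# Test data collected the same way as the training data.
test_same = [(9, "cold"), (11, "cold"), (29, "hot"), (31, "hot")]
# The same cases as they might arrive in deployment (hypothetical +15 offset).
test_shifted = [(x + 15, y) for x, y in test_same]

threshold = fit_threshold(train)
acc_same = accuracy(threshold, test_same)
acc_shifted = accuracy(threshold, test_shifted)
print(threshold, acc_same, acc_shifted)
```

The model itself did not change between the two measurements. Only the inputs did, which is why a test score should be read as "accuracy on data like this", not "accuracy everywhere".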

This condition is also associated with how outputs are generated based on probabilistic selection: What Happens Inside an AI Tool After You Click “Generate”

It also reflects a limit of the system itself: a model can only represent the data patterns it learned during training.

Environmental Factors (Why AI Performance Changes in Real Use)

AI systems don’t operate in a controlled environment after deployment.

👉 Real-world conditions constantly change — and this affects how AI performs.

What Changes Over Time

• User behavior evolves (different questions, styles, intent)

• Inputs become more varied and unpredictable

• Usage patterns shift as more people interact with the system

External System Effects

AI tools often depend on other systems like APIs, databases, or software integrations.

Because of this:

• Data may arrive in different formats

• Some information may be missing or delayed

• Outputs may vary depending on system conditions

Performance Impact

• Results may become inconsistent

• Accuracy may vary across situations

• Reliability may drop under heavy or changing usage

Key Insight

AI performance is not fixed — it changes depending on the environment in which it is used.

This diagram shows how AI is trained on controlled data but receives unpredictable inputs in real-world use, leading to output variation.

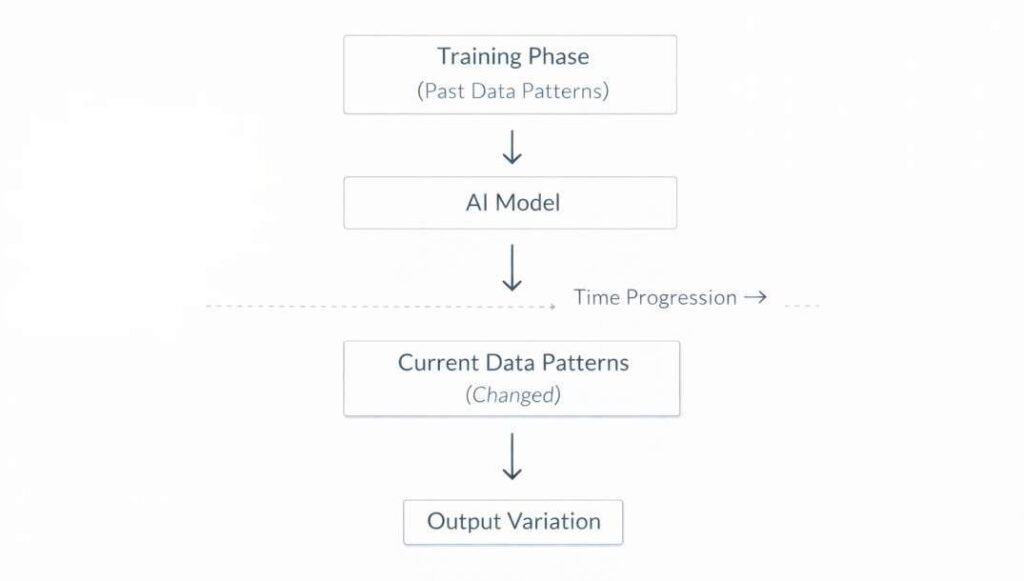

Data Changes Over Time (Why AI Gets Worse Without Updates)

AI models are trained on past data — but the world keeps changing.

👉 Over time, the data AI sees becomes different from what it learned.

What This Looks Like

• Trends change

• User behavior evolves

• New patterns appear

Types of Changes

• Slow changes over time (gradual drift)

• Sudden shifts (abrupt changes)

• Old patterns coming back (recurring changes)

What Happens to AI

• Accuracy decreases

• Predictions become less reliable

• Outputs may feel outdated

Key Insight

AI models do not automatically update — they become less accurate as the world changes.

This is why AI systems require continuous updates and monitoring to remain effective in real-world conditions.
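Monitoring can be as simple as tracking recent accuracy and raising a flag when it drops. The sketch below is one possible approach, not a standard tool: `AccuracyMonitor`, the window size, and the 0.7 alert level are all assumptions chosen for illustration, and the outcomes are made up.

```python
from collections import deque

class AccuracyMonitor:
    """Track accuracy over the most recent predictions."""

    def __init__(self, window=5, alert_below=0.7):
        self.results = deque(maxlen=window)  # old results fall off automatically
        self.alert_below = alert_below

    def record(self, was_correct):
        self.results.append(bool(was_correct))

    def accuracy(self):
        if not self.results:
            return None
        return sum(self.results) / len(self.results)

    def needs_review(self):
        # Wait until the window is full so one early mistake does not trigger an alert.
        window_full = len(self.results) == self.results.maxlen
        return window_full and self.accuracy() < self.alert_below

# Hypothetical stream of checked predictions: good at first, then degrading.
outcomes = [True, True, True, True, True, False, True, False, False, False]

monitor = AccuracyMonitor(window=5, alert_below=0.7)
alerts = []
for step, ok in enumerate(outcomes, start=1):
    monitor.record(ok)
    if monitor.needs_review():
        alerts.append(step)

print(alerts)
```

This only works if someone or something checks a sample of outputs against the correct answer. Without that feedback, a decline in accuracy goes unnoticed.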

Why AI Tools Fail — Final Summary

AI tools fail outside training conditions for one simple reason:

👉 They are designed for controlled environments — but used in an unpredictable world.

What You Need to Remember

• AI learns from past data, not real-time reality

• It depends on patterns, not true understanding

• Small changes in input can affect results

• Real-world conditions are always more complex than training data

The Bigger Picture

AI failures are not random mistakes.

They happen because of:

• Data limitations

• Model design constraints

• Changing real-world conditions

All of these work together — not separately.

What This Means for You

To use AI effectively:

• Don’t blindly trust outputs

• Always verify important information

• Provide clear and detailed inputs

• Understand the limits of the tool

Final Insight

AI is powerful — but not perfect.

The more you understand its limitations, the better you can use it without being misled.

Conclusion

AI tools fail outside training conditions because they are built to recognize patterns — not to fully understand the real world.

When real-world situations become unpredictable, complex, or different from training data, AI systems start to produce unreliable results.

What This Means for You

• AI is useful, but not always reliable

• Outputs should be reviewed, not blindly trusted

• Clear inputs improve results significantly

Final Takeaway

AI is a powerful assistant — but it is not a perfect decision-maker.

The more you understand its limits, the better you can use it effectively without being misled.

What to Do Next

If you want to use AI tools more effectively, the next step is learning how to write better prompts.

👉 Read next: How to Write Better Prompts (Step-by-Step Guide)

Frequently Asked Questions (FAQs)

What does it mean when AI tools fail outside training conditions?

It means the AI is facing a situation it was not trained for.

👉 When inputs don’t match past data, the AI tries to guess — and that’s when errors happen.

What is distribution shift in simple terms?

It means the data has changed.

👉 The AI was trained on one type of data, but now it is seeing something different — so its predictions become less reliable.

Why does AI sometimes give wrong answers confidently?

Because AI does not know what is true — it predicts what is most likely.

👉 That’s why it can sound confident even when it is wrong.
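Many models turn internal scores into probabilities with a function called softmax. The sketch below uses made-up scores and labels to show why the result can look confident: softmax always spreads 100% across the options the model knows, and it has no built-in way to say "none of these".

```python
import math

def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(scores)  # subtracting the max keeps exp() numerically stable
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

labels = ["cat", "dog", "bird"]
# Hypothetical scores for an input that is actually none of the three.
scores = [4.0, 1.0, 0.5]

probs = softmax(scores)
confidence, best_label = max(zip(probs, labels))

for label, p in zip(labels, probs):
    print(f"{label}: {p:.3f}")
print(f"answer: {best_label} ({confidence:.0%} confident)")
```

The high percentage only says that one option scored higher than the others. It says nothing about whether the input was something the model could recognize in the first place.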

Why do small changes in prompts affect results?

AI is very sensitive to wording.

👉 Even small changes can shift how the model interprets your request and change the output.

Can AI improve over time automatically?

No — not on its own.

👉 AI models need updates, retraining, or better inputs to improve performance.

How can I reduce AI mistakes?

• Give clear and specific instructions

• Avoid vague prompts

• Double-check important outputs

• Use multiple prompts if needed
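The "use multiple prompts" tip can be turned into a simple agreement check. In this sketch the answers are placeholders typed in by hand, standing in for what a tool might return when the same question is asked in several ways, and `agreement` is a hypothetical helper.

```python
from collections import Counter

def agreement(answers):
    """Return the most common answer and the share of answers that match it."""
    normalized = [a.strip().lower() for a in answers]
    top_answer, count = Counter(normalized).most_common(1)[0]
    return top_answer, count / len(normalized)

# Placeholder responses to four rephrasings of the same question.
answers = ["Paris", "paris ", "Paris", "Lyon"]

top_answer, share = agreement(answers)
print(top_answer, share)

if share < 1.0:
    print("Answers disagree: verify before relying on this.")
```

Agreement across prompts is not proof of correctness, since a model can repeat the same mistake. Disagreement, however, is a clear sign the output needs checking.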

Why is generalization important in AI?

Because real-world situations are unpredictable.

👉 AI needs to handle new inputs — not just repeat what it has already seen.



- Why AI Tools Fail Outside Training Conditions - March 29, 2026