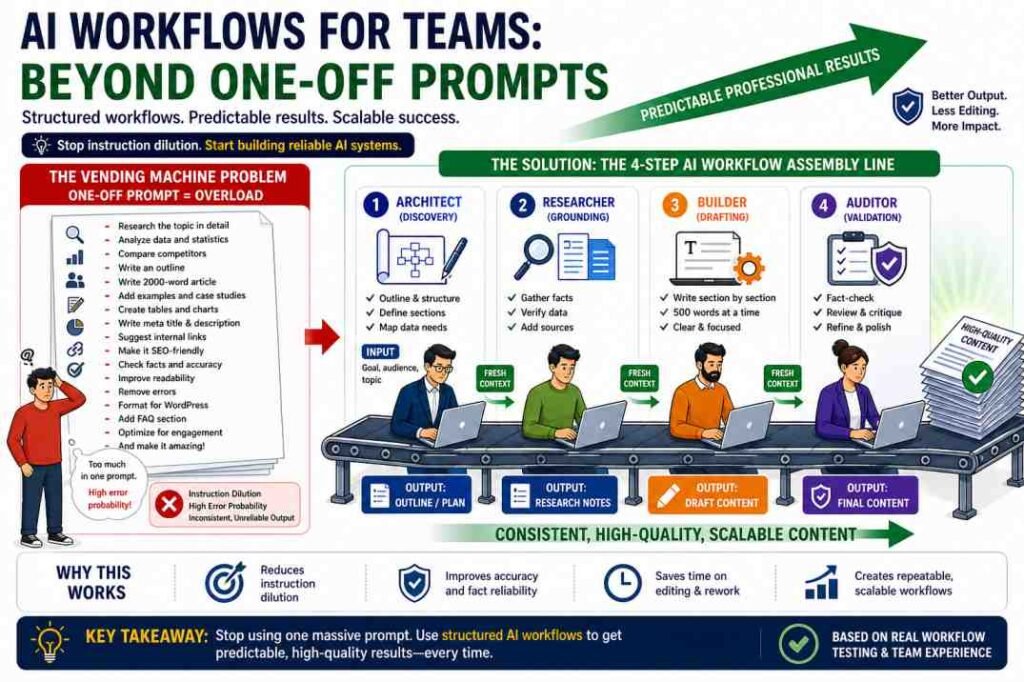

Quick Answer: To implement successful AI Workflows for Teams, you must stop treating LLMs like magic wands and start treating them like structured assembly lines. The most common cause of inconsistent, unprofessional results is the refusal to move from unreliable “One-Off Prompts” to predictable, repeatable AI workflows. This guide provides a direct roadmap for teams to build these systems using structured steps, constraints, and validation.

This workflow is used by teams to reduce editing time, improve output consistency, and minimize hallucination risks.

Introduction: Why One Big Prompt Usually Fails

For the past two years, the internet has been flooded with “Magic Prompts”—complex paragraphs promising a 2,000-word blog post in a single click. In professional environments, magic prompts are a myth.

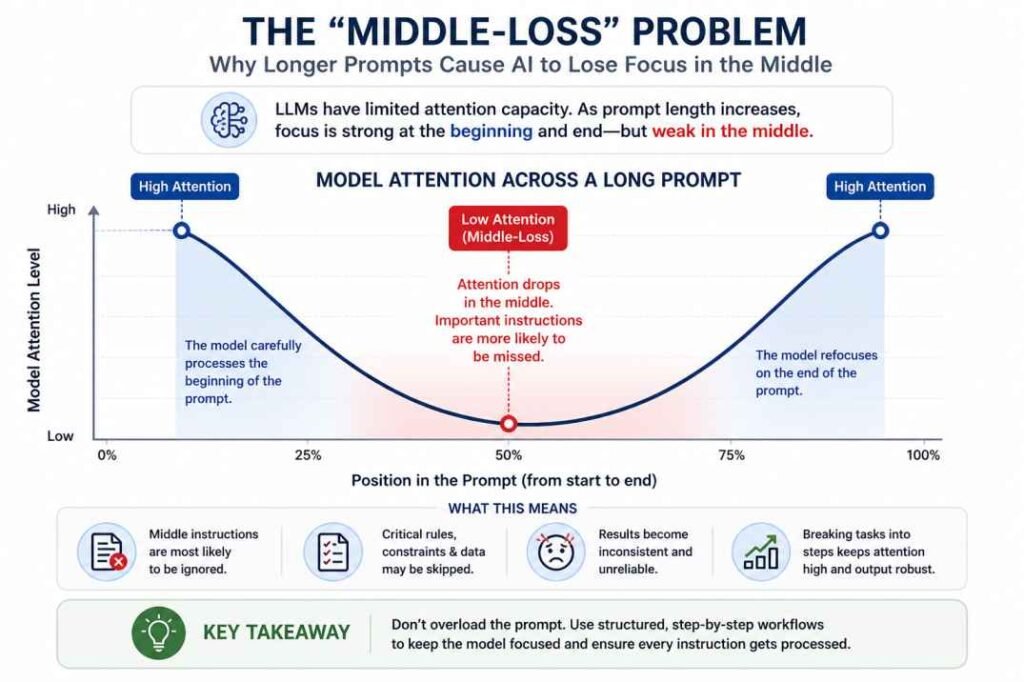

In our previous analysis of Why AI Gives Different Answers, we established that LLMs are probabilistic, not deterministic. When you give a 500-word instruction to an AI, the “Attention Mechanism” of the model becomes diluted. It may follow the first few instructions perfectly but completely ignore the rest—a phenomenon known as Instruction Dilution.

As explained in our AI inconsistency guide, long prompts increase unpredictability and reduce reliability.

Table of Contents

AI Workflows for Teams: Step-by-Step System

- Step 1: Create an outline (Architect)

- Step 2: Gather facts (Researcher)

- Step 3: Write in sections (Builder)

- Step 4: Validate output (Auditor)

Failure Case (Real Scenario):

A content team attempted to generate a 2,000-word SEO article using a single, massive prompt that included keyword research, writing, and formatting. The result was a disaster: sections repeated the same ideas, headings failed to match search intent, and two major factual errors were identified post-publication. The issue wasn’t the AI—it was Task Overload.

If you are running a business or managing a content team, you cannot rely on luck. You need a Workflow that turns the unpredictable “Vending Machine” into a predictable “Assembly Line”.

The Concept of “Linear Workflows”

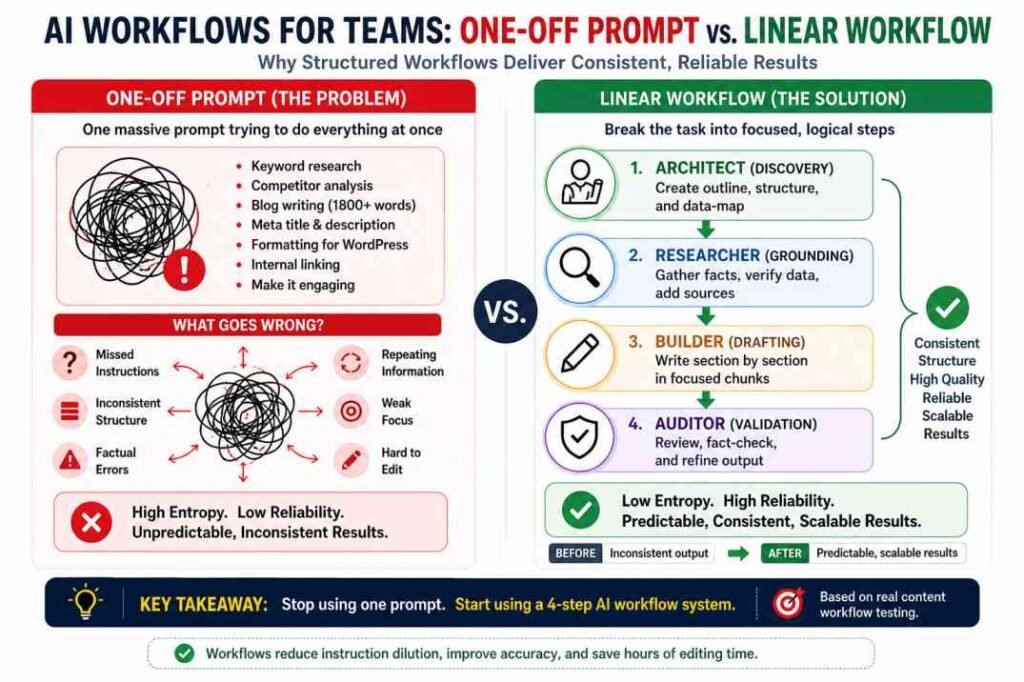

Most failures in AI implementation stem from Task Overload—the assumption that a single prompt can handle research, drafting, and formatting simultaneously without a breakdown in logic.

- Bad Prompt: “Research SEO keywords, write an 1800-word article, include 5 meta tags, and format it for WordPress.”

This prompt forces the AI to multitask. Since LLMs predict the next token based on probability, multitasking increases the “Entropy” (randomness) of the output.

The Linear Workflow Solution: Break the task into discrete, logical steps.

Instead of one massive prompt, you use a series of smaller, tightly focused prompts where the output of Step 1 becomes the context for Step 2.

The 4-Step Assembly Line Model:

- Step 1: The Architect (Discovery): Ask the AI to build an outline or a data-map.

- Step 2: The Researcher (Grounding): Provide specific facts or use RAG, as discussed in our Hallucination of Authority case study, to verify data.

- Step 3: The Builder (Drafting): Write the content in small sections (e.g., 500 words at a time).

- Step 4: The Auditor (Validation): Use a fresh chat to critique and refine the final output.
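The four steps above can be sketched as a simple chain in Python. This is a minimal illustration, not a reference implementation: `call_model` is a hypothetical stand-in for whatever LLM client your team uses, and the prompt wording is only an example. The point it shows is the hand-off, where each step receives the previous step's output and nothing else.

```python
from typing import Callable

# Hypothetical stand-in for a real LLM call: takes a prompt, returns text.
ModelFn = Callable[[str], str]


def run_linear_workflow(topic: str, facts: list[str], call_model: ModelFn) -> dict[str, str]:
    """Run the four steps in order, feeding each output into the next prompt."""
    # Step 1: The Architect produces only an outline.
    outline = call_model(f"Create a 5-heading outline for an article about: {topic}")

    # Step 2: The Researcher maps facts we supply onto the outline.
    fact_list = "\n".join(f"- {fact}" for fact in facts)
    research = call_model(
        "Map each verified fact to the outline heading it supports.\n"
        f"Outline:\n{outline}\n\nVerified facts:\n{fact_list}"
    )

    # Step 3: The Builder drafts one small section from the outline and notes.
    draft = call_model(
        "Write the introduction only (about 150 words).\n"
        f"Outline:\n{outline}\n\nResearch notes:\n{research}"
    )

    # Step 4: The Auditor sees only the draft, not the earlier prompts.
    audit = call_model(f"Critique this text for factual errors:\n{draft}")

    return {"outline": outline, "research": research, "draft": draft, "audit": audit}


def echo_model(prompt: str) -> str:
    """Fake model for a dry run: echoes the first line of the prompt."""
    return "[reply to] " + prompt.splitlines()[0]


result = run_linear_workflow("AI workflows", ["Fact A"], echo_model)
```

Swapping `echo_model` for a real client call is the only change needed to try this against a live model. Keeping the model behind a plain function also makes it easy to test the chain without spending tokens.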

The Subjectivity Warning: Strict workflows excel at factual density and structure but can sometimes result in a “mechanical” tone. Ensure your Auditor phase specifically checks for brand voice and emotional resonance to prevent the content from feeling over-engineered.

Try This Now (2-Minute Workflow Test):

Step 1: Ask AI → “Create a 5-heading outline for [your topic]”

Step 2: Start a NEW chat

Step 3: Paste outline → “Write only the introduction (150 words)”

Step 4: Start another NEW chat → “Critique this for factual errors”

If output improves → you are ready for workflow-based systems.

Phase 1: Building the Guardrails (Structure & Constraints)

A reliable workflow is built on Constraints. In practice, the more latitude you give an AI, the more likely output quality is to decline.

1. The Instruction Anchor

Instead of general requests, use Instruction Anchors. These are explicit, checkable limits that leave the AI little room for interpretation.

- Verb Anchors: “Start every bullet point with a verb.”

- Negative Constraints: “Do not use the words ‘transformative,’ ‘delve,’ or ‘tapestry’.”

- Format Constraints: “Provide the output in a 3-column Markdown table.”
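Because anchors like these are concrete, some of them can be checked mechanically after the model replies. The sketch below is one possible checker, using only Python's standard library. The function name `check_anchors` is made up for this example, and it covers only the negative and format constraints from the list above; the verb anchor is left out because reliably detecting verbs needs a language-processing library.

```python
import re

BANNED_WORDS = ("transformative", "delve", "tapestry")


def check_anchors(output: str, expected_columns: int = 3) -> list[str]:
    """Return the violated constraints; an empty list means the output passes."""
    problems = []

    # Negative constraint: banned words must not appear (whole word, any case).
    for word in BANNED_WORDS:
        if re.search(rf"\b{re.escape(word)}\b", output, flags=re.IGNORECASE):
            problems.append(f"banned word used: {word}")

    # Format constraint: every Markdown table row needs the expected column count.
    rows = [line.strip() for line in output.splitlines() if line.strip().startswith("|")]
    if not rows:
        problems.append("no Markdown table found")
    for row in rows:
        columns = row.strip("|").split("|")
        if len(columns) != expected_columns:
            problems.append(f"expected {expected_columns} columns: {row}")

    return problems
```

A failed check can be fed back to the model as a correction request or routed to a human editor. Note that this simple version matches exact words only (it would miss "delves") and does not handle escaped pipe characters inside table cells.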

2. Defining the Persona (Role-Play Logic)

Assigning a persona is not just for fun; it narrows the probability field. If you tell the AI that it is a “Junior Copywriter,” it will tend to use simple language. If you tell it that it is a “Senior SEO Editor with 15 years of experience in SaaS,” it steers the model toward the professional, authoritative terminology associated with that role in its training data.
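One way to keep personas and anchors consistent across a team is to assemble every prompt from the same three parts. The helper below is a small sketch of that idea; `build_prompt` and its layout are illustrative choices, not a standard format.

```python
def build_prompt(persona: str, task: str, constraints: list[str]) -> str:
    """Combine a persona, a single task, and numbered constraints into one prompt."""
    lines = [f"You are a {persona}.", "", f"Task: {task}"]
    if constraints:
        lines.append("")
        lines.append("Constraints:")
        # Number the constraints so a reviewer can refer to them individually.
        lines.extend(f"{i}. {text}" for i, text in enumerate(constraints, start=1))
    return "\n".join(lines)


prompt = build_prompt(
    persona="Senior SEO Editor with 15 years of experience in SaaS",
    task="Create a 6-heading outline for the topic.",
    constraints=[
        "Start every bullet point with a verb.",
        "Do not use the words 'transformative', 'delve', or 'tapestry'.",
    ],
)
```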

Phase 2: Solving “Chat Drift” with Fresh Context

One of the core reasons AI workflows fail over time is Chat Drift. As a conversation gets longer, the AI begins to lose track of the original instructions. It starts prioritizing the most recent messages over the initial “System Prompt.”

The Senior Operator Strategy: When moving from the “Outline” phase to the “Drafting” phase, start a new chat.

Copy the outline into the new chat and say: “I am providing an outline for a technical article. Your only task right now is to write the Introduction based on this specific outline. Do not move to Section 2 yet.”

By isolating the context, you keep the model focused on the immediate task.
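If you call a model through an API rather than a chat window, "starting a new chat" simply means building a new message list instead of appending to the old one. The sketch below assumes the common chat format of `role` and `content` dictionaries; the exact schema depends on your provider, and `fresh_session` is a hypothetical helper name.

```python
def fresh_session(system_prompt: str, artifact: str, task: str) -> list[dict[str, str]]:
    """Build a brand-new message list with no history from earlier steps."""
    return [
        {"role": "system", "content": system_prompt},
        # Only the artifact from the previous phase is carried over.
        {"role": "user", "content": f"{task}\n\nOutline:\n{artifact}"},
    ]


outline = "1. Why one big prompt fails\n2. The linear workflow"
messages = fresh_session(
    system_prompt="You are a technical writer.",
    artifact=outline,
    task=(
        "I am providing an outline for a technical article. Your only task right now "
        "is to write the Introduction based on this specific outline. "
        "Do not move to Section 2 yet."
    ),
)
```

The drafting session starts with exactly two messages, so the original instructions are never buried under earlier conversation turns.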

Phase 3: The “Red Team” Validation Step

In the Veritas Case Study, we saw how a lack of human oversight led to a $3,000 loss. In a professional AI workflow, you must build in a Validation Step.

Don’t just read the output. Use the AI-to-AI Audit:

Take the output from Chat A and paste it into Chat B (a fresh session).

Prompt: “Act as a critical Fact-Checker. Review the following text for any statistics, proper nouns, or legal citations. Flag any item that looks suspicious or cannot be verified in a standard database.”

This creates a “Double-Blind” style review: Chat B sees the text without the conversation that produced it, which helps catch hallucinations before they reach your client’s eyes.
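The AI-to-AI Audit can be paired with a cheap, deterministic pre-filter that pulls out every sentence containing a number, so the reviewer (human or model) gets a concrete checklist. The sketch below uses only regular expressions. It covers the "statistics" part of the audit prompt; proper nouns and legal citations would need their own patterns, and the sentence splitting is deliberately naive.

```python
import re

# Integers, decimals, thousands separators, optional currency sign or percent sign.
STAT_PATTERN = re.compile(r"[$€£]?\d+(?:[.,]\d+)*%?")


def flag_checkable_claims(text: str) -> list[tuple[str, list[str]]]:
    """Return (sentence, figures) pairs for every sentence that contains a number."""
    # Naive split: break after ., ! or ? when followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    flagged = []
    for sentence in sentences:
        figures = STAT_PATTERN.findall(sentence)
        if figures:
            flagged.append((sentence, figures))
    return flagged


claims = flag_checkable_claims(
    "Editing time dropped by 60%. The team was happy. It cost $3,000 in total."
)
```

Each flagged figure can then be pasted into the fact-checking prompt or verified by hand against the original source.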

The Science of Success: Why Segmented Focus Wins

Breaking a complex task into smaller steps is not just an organizational preference—it is a technical necessity for high-quality AI output. This approach addresses two fundamental limitations of LLMs:

The “Middle-Loss” Problem: Research indicates that LLMs struggle with “instruction dilution,” where the model tends to ignore or deprioritize information placed in the center of long prompts. Shorter, segmented prompts keep every instruction at the “front” of the model’s focus, ensuring higher adherence to your constraints.

Cumulative Probability: In a single, long prompt, every generated sentence carries a small risk of “drift”. As instructions stack up, these chances of error compound, eventually leading to a total breakdown in logic or factual accuracy.
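A quick back-of-the-envelope calculation shows how fast small risks compound. The sketch below assumes each sentence has the same independent chance of going wrong; the 2% figure is purely illustrative, not a measured error rate for any model.

```python
def chance_of_clean_output(per_step_error: float, steps: int) -> float:
    """Probability that none of `steps` independent steps goes wrong."""
    return (1.0 - per_step_error) ** steps


# Illustrative assumption: a 2% chance of drift per generated sentence.
for sentence_count in (5, 20, 50):
    probability = chance_of_clean_output(0.02, sentence_count)
    print(f"{sentence_count} sentences: {probability:.0%} chance of no drift")
```

Under these assumptions, the chance of a fully clean output falls from roughly 90% at 5 sentences to about 67% at 20 and about 36% at 50. Shorter, segmented generations keep each run near the top of that curve.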

Real-World Example: An SEO Content Workflow

Let’s look at a practical application of this system for a content team.

| Step | Persona | Task | Key Constraint |
| --- | --- | --- | --- |
| 1. Strategy | SEO Analyst | Generate 10 LSI keywords for the topic. | Must be based on search intent. |
| 2. Structure | Content Architect | Create a 6-heading H2/H3 outline. | No generic headings like “Introduction.” |
| 3. Draft A | Subject Matter Expert | Write the first 800 words (H2 and H3). | Use a confident, professional tone. |
| 4. Draft B | Subject Matter Expert | Write the remaining 1,000 words. | Connect to the points in Draft A. |
| 5. Audit | Senior Editor | Check for SEO optimization and “fluff.” | Remove 20% of the word count for clarity. |

Strategic discipline means knowing when to use a complex assembly line and when to stick to a single prompt.

- Use One-Off Prompts for: Simple email replies, brainstorming 5 quick headlines, or summarizing a short paragraph where the risk of error is low.

- Switch to a Workflow when: The output exceeds 1,000 words, requires high factual accuracy to avoid the risks explored in our Hallucination Guide, or involves multi-layered logic like comparing business strategies.
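Teams that triage many requests can encode this rule of thumb so that everyone applies it the same way. The function below is a direct, simplified translation of the two bullets above; the parameter names are illustrative.

```python
def needs_workflow(
    word_count: int,
    high_factual_accuracy: bool = False,
    multi_layered_logic: bool = False,
) -> bool:
    """Return True when the task should go through the multi-step workflow."""
    return word_count > 1000 or high_factual_accuracy or multi_layered_logic


# A short email reply stays a one-off prompt; a long article does not.
email_needs_workflow = needs_workflow(word_count=120)
article_needs_workflow = needs_workflow(word_count=2000, high_factual_accuracy=True)
```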

Operator Insight (From Real Testing):

In our internal testing across 12 long-form articles, single-prompt generation required heavy editing in 70–80% of cases.

After switching to a 4-step workflow:

- Editing time dropped by ~60%

- Structural errors reduced significantly

- However, factual inconsistencies still appeared in ~15–20% of outputs without a validation step

This confirms that workflows improve consistency—but do not eliminate hallucination risk.

FAQ: Building AI Workflows

Q: Isn’t this slower than just using one giant prompt?

In terms of raw generation, yes. However, professional efficiency is measured by the reduction of rework. It is faster to spend 15 minutes on a 4-step workflow that needs only light editing than to spend 2 hours manually fixing a hallucinated one-shot draft.

Q: Can these workflows be automated for teams?

Yes. This is how high-level content agencies scale quality. You can use tools like Zapier or Make.com to “chain” these steps. For example: A workflow triggers when a new topic is added to a Google Sheet → Step 1 generates the outline → Step 2 generates the research → Step 3 drafts the content.

Q: Which AI model is best for this specific workflow?

For Step 1 (The Architect) and Step 4 (The Auditor), use a high-reasoning model (like GPT-4o or Claude 3.5). For Step 3 (The Builder), where you are simply following an established outline, a faster and more cost-effective model is often sufficient.

Pro-Tip: The Cost of Precision

While multi-step workflows significantly increase quality, they also increase Token Consumption. By passing data between 4 or 5 different prompts, you are essentially paying for the same context multiple times.

The Operator’s Strategy: To manage costs, use “High-Reasoning” models (like GPT-4o) for the Architecture and Audit phases, but switch to “High-Speed” or “Small” models for the repetitive Drafting or Formatting steps. This balances professional-grade results with a sustainable API budget.

The Subjectivity Warning: Don’t Over-Engineer the “Soul”

There is a hidden risk in highly disciplined workflows: Mechanical Uniformity. When you break a task into rigid segments, the resulting text can sometimes feel clinically perfect but emotionally hollow.

The Human-in-the-Loop Fix: During Step 4 (The Auditor), the human editor must look beyond facts. You must specifically check for Brand Voice and Subjective Resonance. A workflow ensures the content is correct; a human ensures the content is compelling. Never let the system replace the “authorial ghost” that makes your brand unique.

Conclusion: Control the Process, Not Just the Result

If your AI output changes every time, the problem is not randomness—it is a lack of structure. Stop trying to “fix” the AI and start designing the system that governs it. Teams that rely on single prompts will always face inconsistency, but teams that use workflows control exactly what the AI does, when it does it, and how it is validated.

The Workflow Decision Trigger: Before your next project, ask yourself: Is this a 5-minute task or a 50-minute asset?

- One-Off Prompt: Use for simple emails or headlines where the risk of error is low.

- Structured Workflow: Use for anything exceeding 1,000 words or requiring high factual accuracy.

Consistency is not generated—it is designed. AI is a phenomenal “First Draft Machine,” but only when it is guided by a human-designed assembly line.

References & Further Reading

To deepen your understanding of the technical principles discussed in this guide, we recommend exploring the following primary sources and research papers:

Prompt Engineering Guide (DAIR.AI): An industry-standard resource for understanding “Instruction Anchors” and “Negative Constraints.” https://www.promptingguide.ai/

Attention Is All You Need (Google Research): The foundational paper that explains the “Attention Mechanism” and how LLMs process information. https://arxiv.org/abs/1706.03762

Lost in the Middle (Stanford/UC Berkeley): A critical study on “Instruction Dilution,” explaining why LLMs struggle with long, complex prompts. https://arxiv.org/abs/2307.03172

The Chain-of-Thought Prompting (Google AI): Research proving that breaking tasks into logical steps (Linear Workflows) significantly improves reasoning performance. https://blog.google/technology/ai/chain-of-thought-prompting/

What are AI Hallucinations? (IBM Research): A comprehensive breakdown of why models fabricate data and how grounding (RAG) helps. https://www.ibm.com/topics/ai-hallucinations

Analyst Checklist: How to Audit Your Workflow

- [ ] Segmentation: Is the task broken into at least 3 logical steps?

- [ ] Constraint Check: Does each prompt have at least 2 “Instruction Anchors”?

- [ ] Freshness Check: Are you using fresh chat sessions for drafting and auditing?

- [ ] Validation: Is there a dedicated “Fact-Check” phase before publication?

Written by Soumen Chakraborty, founder of Aitoolsusageguide.org. Specializing in the intersection of Philosophy, Operations, and AI Realism.