Introduction

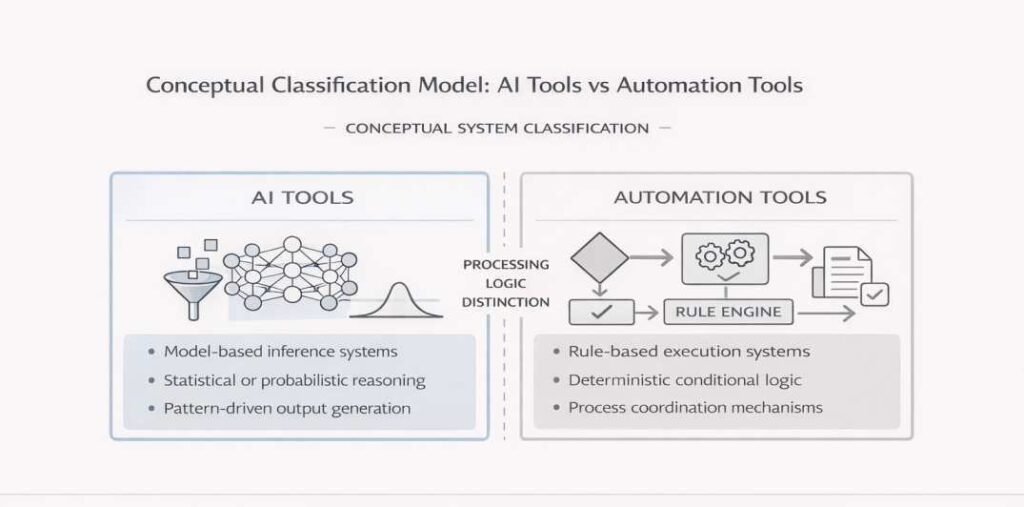

AI tools and automation tools are often discussed together because both are deployed within structured digital workflows. However, they represent different system categories and are based on different processing logics. In systems engineering and governance-oriented literature, a clear distinction is commonly made between inference-driven components and rule-based execution mechanisms when describing how complex digital systems are organized.

AI tools function as components that generate outputs through statistical or model-based inference processes. By contrast, automation systems operate through predefined procedures that coordinate workflow stages and enforce rule-based logic. Although both may operate within the same workflow, they are not functionally equivalent.

This article examines how AI tools and automation tools differ in workflow positioning, processing logic, and governance implications. The discussion remains descriptive and standards-oriented, focusing on structural interpretation rather than product categorization or usage evaluation.

A related foundational comparison is provided in AI tools vs traditional software systems, which explains how inference-based outputs differ from deterministic execution logic.

The distinction concerns system logic and architectural positioning rather than product branding.

Conceptual Definitions

AI Tools

Within institutional and technical literature, AI systems are characterized as components that generate outputs through model-based inference mechanisms. These systems typically rely on statistical learning methods, pattern-recognition models, or probabilistic reasoning mechanisms to analyze input data and produce results. Their behavior is influenced by training data characteristics, model architecture, and contextual deployment conditions rather than by fixed rule execution alone.

Within structured workflows, AI tools are positioned as inference layers that transform input data into classifications, predictions, rankings, or other model-generated outputs. Because their processing logic is derived from learned patterns rather than explicitly coded decision rules, AI outputs may vary across input conditions or inference configurations, particularly in deployments where probabilistic generation mechanisms are involved. Governance frameworks therefore often describe AI tools in relation to lifecycle management, model monitoring, and oversight structures that address variability and traceability.
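To make "inference configuration" concrete, here is a minimal sketch using a hypothetical three-label classifier whose raw scores are fixed for illustration. Under greedy (argmax) decoding the same scores always yield the same label. Under sampling from a temperature-scaled softmax, the label can differ from run to run unless the random seed is fixed. The label names, scores, and `temperature` parameter are assumptions, not any particular product's API.

```python
import math
import random

# Hypothetical raw scores (logits) a trained classifier might assign to each label.
LOGITS = {"invoice": 2.0, "receipt": 1.5, "other": 0.2}


def softmax(logits: dict[str, float], temperature: float = 1.0) -> dict[str, float]:
    """Turn raw scores into probabilities; a lower temperature sharpens the distribution."""
    scaled = {label: score / temperature for label, score in logits.items()}
    top = max(scaled.values())  # subtract the maximum for numerical stability
    exps = {label: math.exp(s - top) for label, s in scaled.items()}
    total = sum(exps.values())
    return {label: e / total for label, e in exps.items()}


def greedy_label(logits: dict[str, float]) -> str:
    """Greedy configuration: always return the highest-scoring label."""
    return max(logits, key=logits.get)


def sampled_label(logits: dict[str, float], temperature: float, rng: random.Random) -> str:
    """Sampling configuration: draw a label in proportion to its probability."""
    probs = softmax(logits, temperature)
    labels = list(probs)
    return rng.choices(labels, weights=[probs[label] for label in labels], k=1)[0]


if __name__ == "__main__":
    print(greedy_label(LOGITS))  # identical on every run
    rng = random.Random()  # unseeded, so the sampled labels may differ between runs
    print([sampled_label(LOGITS, 1.0, rng) for _ in range(5)])
```

Fixing the seed (for example `random.Random(7)`) makes sampled runs reproducible. This is one reason inference settings are worth recording alongside the model version.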

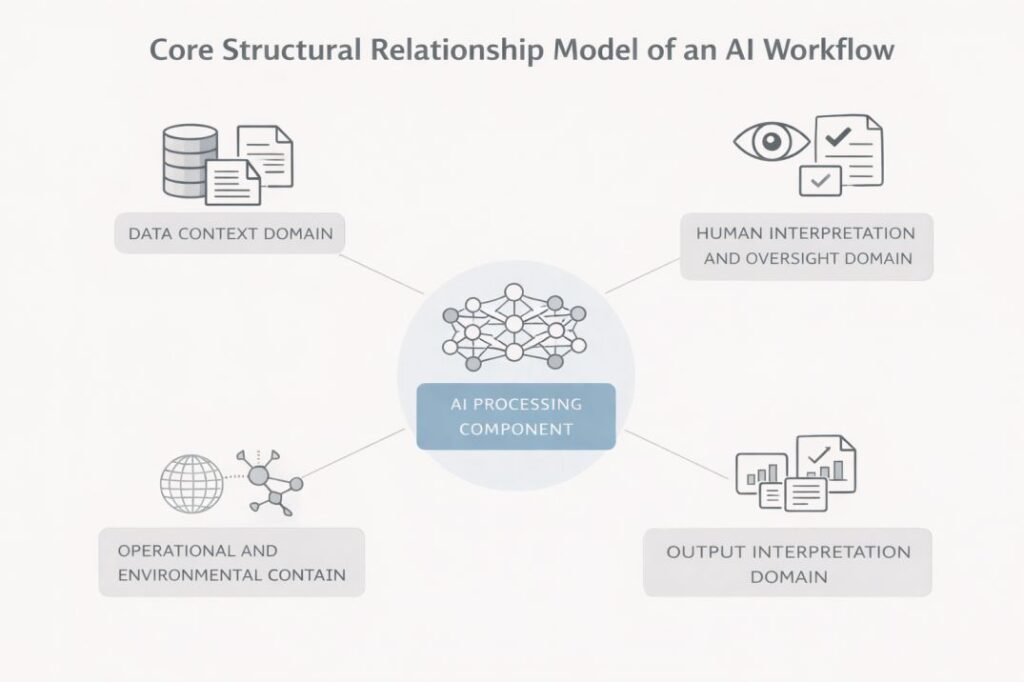

AI tools are not typically designed to coordinate entire workflows. Instead, they function as analytical components embedded within broader system environments that include data preprocessing stages, execution controls, and human review checkpoints.

The accompanying diagram illustrates the conceptual distinction between inference-based and rule-based systems.

Automation Tools

Systems engineering literature characterizes automation mechanisms as rule-governed structures that coordinate and execute predefined tasks. These systems commonly operate through deterministic rule sets, event-driven triggers, orchestration frameworks, or process-execution engines that manage task sequencing across digital environments.

Unlike AI tools, automation tools do not inherently generate outputs through statistical inference. Their behavior is generally defined by explicit configuration rules, conditional logic, or structured process models. When the same inputs are provided under identical conditions, automation systems are expected to produce consistent and repeatable outcomes.
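The following is a minimal sketch of rule-based execution, assuming a hypothetical invoice-routing workflow. Rules are explicit conditions checked in a fixed order, so identical inputs always follow the same path. The field names (`amount`, `vendor_approved`), rule names, and routes are invented for illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    name: str
    condition: Callable[[dict], bool]  # explicit, inspectable predicate
    action: str                        # route taken when the condition holds


# Hypothetical rule set, evaluated top to bottom; the first matching rule wins.
RULES = [
    Rule("reject_unapproved_vendor", lambda r: not r["vendor_approved"], "reject"),
    Rule("manager_approval", lambda r: r["amount"] > 10_000, "route_to_manager"),
    Rule("auto_approve", lambda r: True, "approve"),  # default rule closes the rule set
]


def execute_rules(record: dict) -> tuple[str, str]:
    """Return (rule name, action) for the first rule whose condition matches."""
    for rule in RULES:
        if rule.condition(record):
            return rule.name, rule.action
    raise LookupError("no rule matched")  # unreachable while the default rule exists


if __name__ == "__main__":
    invoice = {"amount": 12_500, "vendor_approved": True}
    print(execute_rules(invoice))  # ('manager_approval', 'route_to_manager')
```

Calling `execute_rules` repeatedly on the same record returns the same tuple, which is the repeatability property described above. Changing the behavior means editing `RULES`, not retraining anything.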

Within workflow architectures, automation tools are often positioned as execution or orchestration layers. They may trigger tasks, route information between systems, enforce validation checkpoints, or coordinate interactions between software components. In hybrid environments, automation tools can integrate AI inference modules into larger operational sequences, but their primary function remains process coordination rather than model-based analysis.

Institutional governance documentation typically associates automation tools with process auditing, execution logging, rule validation, and access control structures, reflecting their role in enforcing operational consistency across structured environments.

AI Tools vs Automation Tools (Comparison Table)

The following table summarizes structural and functional distinctions between AI tools and automation tools as described in institutional and systems-oriented documentation. The comparison focuses on processing logic, workflow placement, and governance orientation rather than product categories.

| Dimension | AI Tools | Automation Tools |
|---|---|---|
| Core Processing Logic | Model-based inference using statistical or probabilistic methods | Rule-based execution using predefined conditional logic |
| Output Behavior | May exhibit non-deterministic or distribution-sensitive outputs | Deterministic outputs when conditions and rules remain unchanged |
| Primary Function | Pattern recognition, classification, prediction, or inference | Task execution, sequencing, and workflow orchestration |
| Workflow Position | Analytical or inference layer embedded within broader systems | Execution or control layer coordinating workflow stages |
| Update Mechanism | Model retraining, parameter adjustment, or dataset modification | Rule updates, configuration changes, or process redesign |
| Dependency Type | Sensitive to training data quality and distribution shifts | Dependent on rule completeness and process logic integrity |
| Governance Focus | Model monitoring, bias assessment, drift detection, and lifecycle documentation | Rule validation, execution logging, exception handling, and access control |
| Control Authority | Generates outputs but typically does not determine process flow; outputs may require interpretation or validation | Determines task triggering, routing, and process continuation |
| Transparency | Internal decision mechanisms may be partially opaque due to model complexity | Rule logic is explicitly defined and typically inspectable |
| Error Characteristics | Errors may appear as inference instability, misclassification, or distribution sensitivity | Errors typically appear as rule misconfiguration, missing conditions, or execution failures |

This comparison illustrates that AI tools and automation tools are differentiated primarily by their underlying processing mechanisms and their structural roles within workflow architectures. While both may coexist within integrated systems, their functional logic and governance considerations are distinct.

Differences in Core Processing Logic

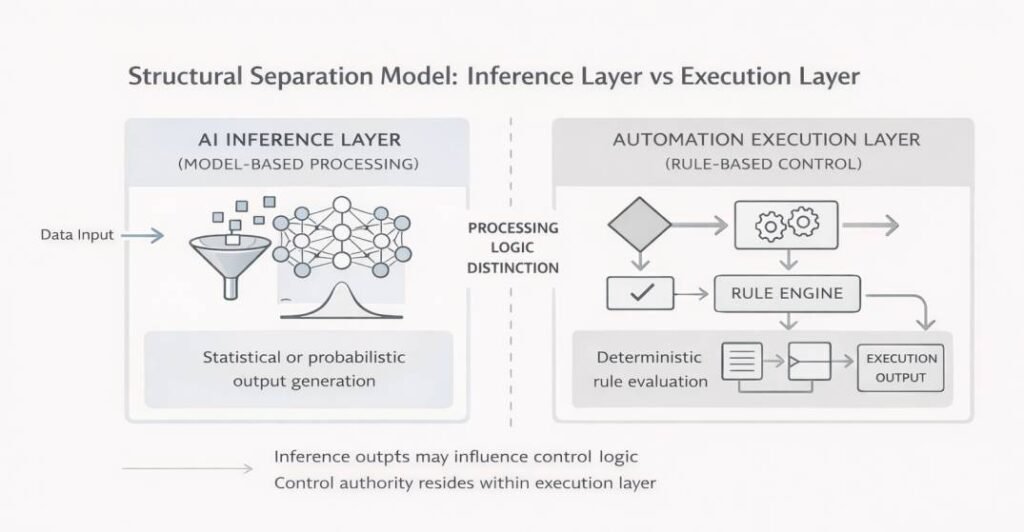

The primary distinction between AI tools and automation tools lies in the logic that governs how each system turns input into output. AI systems rely on model-based inference: they analyze patterns in data using statistical models, machine learning algorithms, or other probabilistic frameworks. Because this processing relies on learned representations rather than fixed instructions, outputs may vary when input conditions or data distributions change.

Automation tools, by contrast, operate through deterministic rule execution. Their behavior is defined by explicit instructions, conditional statements, event triggers, or structured workflow configurations. When identical inputs are processed under unchanged rule conditions, automation systems are expected to produce consistent and repeatable results. This determinism reflects their design as execution mechanisms rather than inference engines.

The difference between probabilistic inference and deterministic execution has architectural implications. AI systems interpret input data to estimate likely outputs, while automation systems evaluate predefined conditions to determine process flow. In institutional system documentation, this distinction is often described as the difference between model-driven reasoning and rule-driven execution logic. Both approaches may coexist within hybrid architectures, but their internal decision mechanisms are structurally different.

Because AI tools depend on statistical generalization, their performance can be influenced by factors such as training data composition, model configuration, and contextual deployment conditions. Automation tools, on the other hand, are influenced primarily by the completeness and accuracy of their rule sets and process definitions. These contrasting dependencies reflect fundamentally different computational paradigms within workflow systems.
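The contrast in dependencies can be shown with a deliberately tiny example. In the sketch below, a hypothetical fixed rule flags transactions above a hand-written limit. A one-parameter "model" instead learns its cut point from labeled examples by minimizing training errors. This is a decision stump, chosen here only for brevity and not as a representative AI technique. For the same input, the rule's decision changes only if someone edits it, whereas the learned decision depends on which training data the stump saw. All amounts and labels are invented.

```python
# Hypothetical labeled history: (transaction amount, was it later confirmed as fraud?)
DATA_A = [(100.0, False), (300.0, False), (450.0, False), (700.0, True), (900.0, True)]
DATA_B = [(100.0, False), (200.0, True), (300.0, True), (700.0, True), (900.0, True)]


def fixed_rule(amount: float) -> bool:
    """Rule-driven: the limit is written by hand and changes only when someone edits it."""
    return amount > 500.0


def learn_cut_point(examples: list[tuple[float, bool]]) -> float:
    """Model-driven: choose the observed amount that, used as a threshold, misclassifies
    the fewest training examples (a one-feature decision stump)."""
    if not examples:
        raise ValueError("need at least one training example")
    best_threshold, best_errors = examples[0][0], len(examples) + 1
    for candidate, _ in examples:
        errors = sum((amount > candidate) != label for amount, label in examples)
        if errors < best_errors:
            best_threshold, best_errors = candidate, errors
    return best_threshold


def learned_rule(amount: float, cut_point: float) -> bool:
    """Apply the learned threshold; its value came from data, not from an author."""
    return amount > cut_point


if __name__ == "__main__":
    for name, data in (("A", DATA_A), ("B", DATA_B)):
        cut = learn_cut_point(data)
        print(name, cut, learned_rule(350.0, cut))
    print("fixed", fixed_rule(350.0))
```

With these invented datasets, an amount of 350 is not flagged by the stump trained on `DATA_A`, but it is flagged by the stump trained on `DATA_B`. The fixed rule gives the same answer regardless of either dataset.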

An accompanying structural model visually separates inference layers from execution layers within workflow architectures.

Differences in System Architecture

AI tools and automation tools are also differentiated by the way they are embedded within system architecture. AI tools typically operate as model-dependent components that require supporting layers such as data preprocessing pipelines, inference execution environments, and model lifecycle management mechanisms. Their internal behavior is shaped by training data characteristics, parameter configurations, and inference conditions rather than by explicit step-by-step instructions.

Automation tools, by contrast, are commonly structured around rule engines, orchestration modules, and execution controllers. Their architectural logic is expressed through process definitions, conditional branching rules, and workflow routing mechanisms. Instead of depending on training datasets, automation systems depend on configuration integrity, rule completeness, and controlled exception handling.

From an architectural standpoint, AI inference components typically function as analytical subsystems embedded within broader environments that automation components coordinate. Institutional workflow diagrams often represent this distinction through separate layers, positioning inference modules as analytical components and automation modules as execution or orchestration components.
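Because automation depends on configuration integrity rather than training data, process definitions can be expressed as data and checked before execution. The sketch below assumes a hypothetical declarative format in which each step lists its possible next steps. A validator then reports transitions that point to undefined steps, as well as steps that can never be reached from the start. The step names and format are illustrative and do not come from any specific orchestration engine.

```python
from collections import deque

# Hypothetical process definition: each step lists the steps it may hand off to.
PROCESS = {
    "start": ["validate"],
    "validate": ["approve", "escalate"],
    "approve": ["archive"],
    "escalate": ["manual_review"],  # 'manual_review' is never defined
    "archive": [],
    "orphan_step": ["archive"],     # defined, but nothing routes to it
}


def validate_process(process: dict[str, list[str]], start: str = "start") -> dict[str, list[str]]:
    """Report integrity problems in a step -> next-steps mapping."""
    undefined = sorted(
        {target for targets in process.values() for target in targets if target not in process}
    )
    # Breadth-first search from the start step to find every reachable step.
    reachable, queue = {start}, deque([start])
    while queue:
        for nxt in process.get(queue.popleft(), []):
            if nxt in process and nxt not in reachable:
                reachable.add(nxt)
                queue.append(nxt)
    unreachable = sorted(set(process) - reachable)
    return {"undefined_targets": undefined, "unreachable_steps": unreachable}


if __name__ == "__main__":
    print(validate_process(PROCESS))
```

Running the validator on `PROCESS` reports `manual_review` as an undefined target and `orphan_step` as unreachable. Both are configuration defects rather than statistical errors.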

Differences in Workflow Placement

AI tools and automation tools are positioned differently within structured workflows, even when they coexist inside the same system architecture.

AI tools are commonly placed at analytical stages where structured input data is transformed into model-generated outputs such as classifications, probability scores, or predictions. Their placement is typically downstream of data preparation processes and upstream of interpretation, validation, or execution checkpoints.

Automation tools, by contrast, are positioned at control or orchestration stages of workflows. They determine when processes are initiated, how tasks are sequenced, how outputs are routed, and how conditional logic governs workflow continuation. Automation layers may appear before AI inference components (for data collection or preparation) or after them (for routing, escalation, or execution).

In workflow documentation, these placements are often represented through layered diagrams that separate analytical transformation components from execution control mechanisms. AI tools are typically shown as inference modules embedded within analytical segments, while automation tools are represented as execution or coordination modules responsible for process flow management. This distinction reflects functional positioning within workflows rather than internal computational structure.

Workflow structure is discussed separately in what is an AI workflow, which explains how system components are positioned across workflow stages.

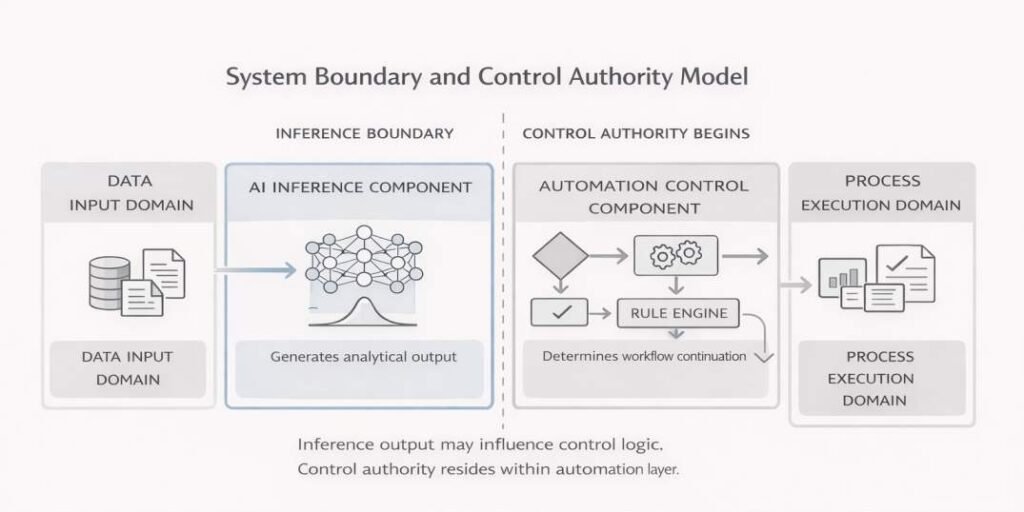

System Boundaries and Control Logic

System boundaries define where analytical processing ends and where execution authority or process control begins. In structured workflow architectures, AI tools and automation tools operate under different control paradigms, even when they are integrated within the same system environment.

An inference boundary generally defines the operational scope within which AI systems generate analytical outputs. They receive structured input data, apply model-based reasoning, and generate outputs derived from statistical patterns learned during training. The internal logic of these systems is shaped by model parameters, training data distributions, and configuration settings. However, AI tools typically do not determine how the broader workflow proceeds. They produce outputs, but the authority to act on those outputs often resides elsewhere in the system.

Control boundaries, by contrast, are commonly associated with automation layers that hold execution authority. Their primary role is to determine when tasks are triggered, how information is routed, and how conditional logic governs workflow continuation. Control logic in automation systems is expressed through explicit rules, event-driven conditions, or orchestration configurations. As a result, process authority (such as escalation, termination, or downstream execution) usually rests within the automation layer rather than within the AI inference component.

In hybrid architectures, AI-generated outputs may influence control decisions, but the decision logic itself is implemented within automation or governance structures. For example, an AI system may assign a probability score to an input, while an automation rule determines whether that score exceeds a threshold that triggers further action. This separation maintains a clear distinction between inference generation and process control authority.
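The score-and-threshold example can be sketched as two separate functions. This assumes a hypothetical `fraud_score` stand-in for a trained model, with invented weights, and a threshold owned by the automation layer. The inference component only returns a number. The decision to escalate, and the threshold value itself, live in rule logic that can be inspected, versioned, and changed without touching the model.

```python
import math

# --- Inference layer (hypothetical stand-in for a trained model) ---
WEIGHTS = {"link_count": 0.8, "sender_unknown": 1.5}  # assumed learned parameters
BIAS = -3.0


def fraud_score(features: dict[str, float]) -> float:
    """Return a probability-like score in (0, 1) via a logistic function."""
    z = BIAS + sum(WEIGHTS[name] * features.get(name, 0.0) for name in WEIGHTS)
    return 1.0 / (1.0 + math.exp(-z))


# --- Automation / control layer ---
ESCALATION_THRESHOLD = 0.7  # policy value owned by the workflow, not by the model


def route_decision(score: float, threshold: float = ESCALATION_THRESHOLD) -> str:
    """Explicit rule: escalate only when the score exceeds the threshold."""
    return "escalate_for_review" if score > threshold else "continue"


if __name__ == "__main__":
    score = fraud_score({"link_count": 4, "sender_unknown": 1})
    print(round(score, 3), route_decision(score))
```

Raising or lowering `ESCALATION_THRESHOLD` changes workflow behavior without retraining. Retraining the model, in turn, changes scores without altering the routing rule.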

Systems engineering literature commonly emphasizes the importance of clearly defined boundaries between inference modules and control modules. Such separation supports traceability, accountability mapping, and system transparency by clarifying which components generate analytical results and which components determine workflow progression.

Governance and Oversight Considerations

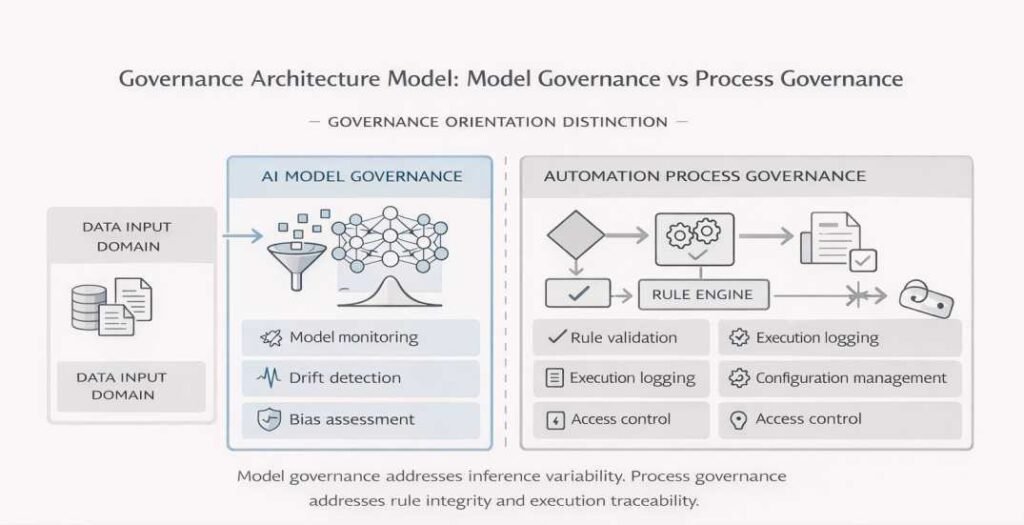

Governance structures for AI tools and automation tools differ according to the risks and control characteristics associated with each system type. Although both operate within structured workflows, the nature of their internal logic leads to distinct oversight priorities and documentation requirements.

Oversight structures for AI systems typically focus on model behavior, data dependency, and output variability. Oversight mechanisms may include performance monitoring, drift detection, dataset provenance documentation, bias assessment procedures, and periodic model evaluation. Because AI systems generate outputs through statistical inference, governance structures are often designed to track how model behavior changes over time and under different deployment conditions. Documentation typically focuses on traceability across the model lifecycle, including training, validation, deployment, and post-deployment monitoring stages.
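Drift detection is often implemented by comparing the distribution of recent model inputs or scores with a reference distribution. One widely used statistic is the population stability index (PSI), the sum over bins of (actual share minus expected share) multiplied by the natural log of their ratio. The sketch below computes PSI over binned proportions. The bin edges, the small `eps` floor that avoids log(0), and the 0.2 alert level are assumptions chosen for illustration; none of these values is prescribed by the frameworks cited in this article.

```python
import math
from bisect import bisect_right

ALERT_LEVEL = 0.2  # hypothetical alert threshold for this sketch


def bin_proportions(values: list[float], edges: list[float]) -> list[float]:
    """Share of values in each bin defined by ascending interior edges."""
    if not values:
        raise ValueError("need at least one value")
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    return [c / len(values) for c in counts]


def psi(expected: list[float], actual: list[float], eps: float = 1e-6) -> float:
    """Population stability index between two binned distributions."""
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # floor empty bins to avoid log(0)
        total += (a - e) * math.log(a / e)
    return total


if __name__ == "__main__":
    edges = [0.25, 0.5, 0.75]
    baseline = bin_proportions([0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9], edges)
    recent = bin_proportions([0.8, 0.85, 0.9, 0.95, 0.6, 0.7, 0.3, 0.1], edges)
    value = psi(baseline, recent)
    print(round(value, 4), "drift alert" if value > ALERT_LEVEL else "stable")
```

Identical distributions give a PSI of zero. In the invented example, scores shift toward the top bin, which raises the index above the hypothetical alert level.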

For internal system-layer breakdown, refer to core structural components of AI tools.

The accompanying diagram differentiates governance orientation across model and process domains.

Governance mechanisms surrounding automation environments concentrate on rule integrity, execution traceability, and configuration control. Oversight mechanisms may include rule validation procedures, configuration change management, access control policies, audit logging, and exception-handling documentation. Since automation tools operate through explicit instructions and deterministic logic, governance priorities center on ensuring that rules are correctly defined, consistently applied, and transparently documented.
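Configuration change management and audit logging can be illustrated together. If every execution log entry records a fingerprint of the exact rule configuration that produced it, reviewers can later tell which version of the rules was in force. The sketch below assumes rules are stored as JSON-serializable data. It uses a truncated SHA-256 hash of a canonical serialization as a hypothetical version identifier. The rule format and log fields are invented.

```python
import hashlib
import json
from datetime import datetime, timezone

# Hypothetical rule configuration stored as plain data.
RULE_CONFIG = {"rule_id": "manager_approval", "field": "amount", "operator": ">", "value": 10000}


def config_version(config: dict) -> str:
    """Stable fingerprint: sorted keys make the serialization independent of key order."""
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


def evaluate(config: dict, record: dict) -> bool:
    """Apply a single threshold rule (only '>' is supported in this sketch)."""
    if config["operator"] != ">":
        raise ValueError(f"unsupported operator: {config['operator']}")
    return record[config["field"]] > config["value"]


def log_execution(config: dict, record: dict, audit_log: list[dict]) -> bool:
    """Evaluate the rule and append an audit entry tied to the rule version."""
    outcome = evaluate(config, record)
    audit_log.append({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "rule_id": config["rule_id"],
        "rule_version": config_version(config),
        "input": record,
        "outcome": outcome,
    })
    return outcome
```

Editing any rule value changes `rule_version`, so log entries produced before and after a configuration change remain distinguishable.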

In hybrid systems, governance responsibilities may span both domains. An automation layer may orchestrate AI inference stages, while oversight checkpoints ensure that AI-generated outputs are reviewed or validated before further execution. In governance-oriented frameworks, clear separation between model governance and process governance is emphasized to reduce ambiguity in accountability structures.

These governance differences do not imply that one system type requires more oversight than the other. Instead, they reflect distinct structural characteristics: AI tools introduce statistical uncertainty and model lifecycle considerations, while automation tools introduce rule maintenance and process control dependencies. Effective workflow documentation clarifies these distinctions by mapping where governance mechanisms apply within the overall system architecture.

This separation between model governance and process governance is consistent with risk-oriented institutional frameworks such as the NIST AI Risk Management Framework (AI RMF 1.0) and ISO/IEC standards on AI terminology, lifecycle, and risk management (for example, ISO/IEC 22989 and ISO/IEC 23894). These frameworks are scoped around systems that generate outputs such as predictions, recommendations, or decisions through inference. That scope makes it natural to document such components separately from the workflow control enforced through explicit operational logic.

Common Areas of Overlap

Although AI tools and automation tools are structurally distinct, they frequently operate within the same workflow environments. In many institutional and enterprise architectures, automation systems coordinate process flow while AI systems contribute analytical inference. This interaction creates hybrid configurations in which the two system types are interdependent but retain separate functional roles.

One common area of overlap occurs when automation tools trigger AI inference processes based on predefined conditions. For example, an automation layer may initiate data collection or preprocessing tasks and then pass structured input to an AI component for pattern recognition or classification. After the AI system produces an output, the automation layer may determine how that output is routed—such as forwarding it to storage, escalation pathways, or review checkpoints. This interaction pattern is described in more detail in How AI Tools Are Positioned Within Workflows, which explains how inference outputs are integrated into structured process execution stages.
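The interaction pattern described here can be sketched as follows: automation prepares the input, calls an inference component, and then routes the result. The `classify` function is a hypothetical stub standing in for an AI component; its keyword check is only a placeholder for a trained model. The step and destination names are assumptions. The `orchestrate` function itself contains no learned logic, only sequencing and routing rules.

```python
from typing import Callable

Classifier = Callable[[str], tuple[str, float]]  # returns (label, confidence)


def preprocess(raw: str) -> str:
    """Automation step before inference: normalize whitespace and case."""
    return " ".join(raw.split()).lower()


def classify(text: str) -> tuple[str, float]:
    """Hypothetical AI component stub; a real deployment would call a trained model."""
    if "refund" in text:
        return "billing", 0.92
    return "general", 0.55


def orchestrate(raw: str, model: Classifier = classify, min_confidence: float = 0.8) -> dict:
    """Automation layer: sequence the steps and route the model output by explicit rules."""
    text = preprocess(raw)           # 1. preparation (automation)
    label, confidence = model(text)  # 2. inference (AI component)
    if confidence < min_confidence:  # 3. routing (automation)
        destination = "human_review"
    else:
        destination = f"{label}_queue"
    return {"input": text, "label": label, "confidence": confidence, "destination": destination}


if __name__ == "__main__":
    print(orchestrate("  Please process my REFUND  "))
```

Swapping `model` for a different classifier changes labels and confidences but leaves the sequencing and routing rules untouched. This mirrors the separation between inference and orchestration described in this section.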

A similar structural configuration appears in risk or fraud evaluation scenarios. An AI component may classify incoming emails according to predicted fraud probability, while an automation layer determines whether messages exceeding a predefined threshold are escalated for manual review. In this arrangement, inference generation and workflow routing remain structurally distinct even though they operate within the same environment.

Overlap also appears in monitoring and reporting structures. Automation systems may log execution events and manage workflow sequencing, while AI systems generate inference results that are recorded within the same operational environment. In such cases, both systems contribute to traceability through different mechanisms: automation through execution logs and AI through model output documentation.
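One way to align the two traceability mechanisms is to tag both the execution log entry and the model output record with a shared run identifier. The sketch below assumes hypothetical record layouts and uses `uuid.uuid4` to generate the identifier. The model version string is invented.

```python
import uuid
from dataclasses import dataclass, field


@dataclass
class ExecutionEvent:
    """Automation-side trace: what the workflow did."""
    run_id: str
    step: str
    status: str


@dataclass
class ModelOutputRecord:
    """AI-side trace: what the model produced and which model version produced it."""
    run_id: str
    model_version: str
    output: dict


@dataclass
class TraceStore:
    events: list[ExecutionEvent] = field(default_factory=list)
    outputs: list[ModelOutputRecord] = field(default_factory=list)

    def for_run(self, run_id: str) -> tuple[list[ExecutionEvent], list[ModelOutputRecord]]:
        """Join both trace types on the shared run identifier."""
        return (
            [e for e in self.events if e.run_id == run_id],
            [o for o in self.outputs if o.run_id == run_id],
        )


if __name__ == "__main__":
    store = TraceStore()
    run_id = str(uuid.uuid4())
    store.events.append(ExecutionEvent(run_id, "inference_requested", "ok"))
    store.outputs.append(ModelOutputRecord(run_id, "classifier-v3", {"label": "billing"}))
    store.events.append(ExecutionEvent(run_id, "routed", "billing_queue"))
    print(store.for_run(run_id))
```

Each mechanism keeps its own record type, consistent with the separation described above, while the shared `run_id` lets a reviewer reconstruct a single workflow run from both.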

Even in integrated architectures, the distinction between inference and orchestration remains structurally important. Interaction with inference modules does not transform automation tools into AI systems, nor does embedding AI components within automated pipelines transfer control authority to the inference layer. Workflow and governance documentation therefore commonly represent analytical processing layers separately from execution control layers, even when they coexist within unified system diagrams.

These overlapping configurations illustrate functional interdependence while preserving distinct inference and control roles within workflow architectures. Their interaction reflects coordinated system design rather than functional equivalence.

Limitations and Structural Constraints

Both AI tools and automation tools operate within structural and operational constraints that shape their behavior inside workflow environments. These constraints arise from technical design choices, data dependencies, rule configurations, and organizational governance frameworks rather than from isolated tool characteristics.

AI tools are constrained primarily by data quality, representativeness, and model generalization limits. Because they rely on statistical inference, their outputs may vary when input distributions shift or when deployment environments differ from training conditions. Structural limitations may also arise from model architecture, parameter configuration, and lifecycle management practices. Workflow documentation may support monitoring and traceability of such variability, but it does not eliminate the inherent uncertainty associated with model-based inference.

Constraints in automation systems typically arise from rule completeness, configuration integrity, and exception-handling logic. If rule sets fail to account for specific conditions, process interruptions or unintended routing behaviors may occur. Unlike AI systems, automation tools do not exhibit probabilistic variation; however, they remain dependent on accurate rule definition and maintenance. Structural limitations in automation systems are therefore associated with process design gaps rather than statistical uncertainty.
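Rule completeness gaps of this kind can sometimes be surfaced before deployment by enumerating expected input combinations and listing those that no rule handles. The sketch assumes a hypothetical routing table keyed on `region` and `priority` with no default rule. Unmatched inputs therefore raise an error instead of being routed silently.

```python
from itertools import product

# Hypothetical rules: (region, priority) -> destination. There is deliberately no default rule.
ROUTING_RULES = {
    ("EU", "high"): "eu_escalation",
    ("EU", "low"): "eu_queue",
    ("US", "high"): "us_escalation",
}


def route_ticket(region: str, priority: str) -> str:
    """Look up a destination; a missing combination raises instead of routing silently."""
    try:
        return ROUTING_RULES[(region, priority)]
    except KeyError:
        raise LookupError(f"no rule for region={region!r}, priority={priority!r}") from None


def coverage_gaps(regions: list[str], priorities: list[str]) -> list[tuple[str, str]]:
    """List every expected input combination that no rule handles."""
    return [combo for combo in product(regions, priorities) if combo not in ROUTING_RULES]


if __name__ == "__main__":
    print(coverage_gaps(["EU", "US"], ["high", "low"]))
```

Here the check reports `("US", "low")` as uncovered. This is the kind of process design gap, rather than statistical uncertainty, that the paragraph above describes.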

Both system types are also shaped by organizational policies, regulatory requirements, infrastructure limitations, and access control structures. These external factors influence how components are positioned within workflows and how responsibilities are distributed across system layers. In operational system documentation, these constraints are typically treated as inherent characteristics of structured digital environments rather than as indicators of system malfunction.

Recognizing these limitations supports clearer interpretation of workflow diagrams and governance boundaries. AI tools and automation tools operate within defined structural parameters, and effective documentation makes visible where those parameters influence system behavior.

Frequently Asked Questions

What is the primary difference between AI tools and automation tools?

The primary difference lies in processing logic. AI tools generate outputs through statistical or model-based inference, while automation tools execute predefined rules or orchestrate structured processes. AI systems transform input data into probabilistic results, whereas automation systems apply explicit conditions to determine workflow execution.

Can automation systems include AI components?

Yes. Automation systems may integrate AI inference modules within broader workflows. In such configurations, the automation layer typically manages task sequencing and routing, while the AI component performs analytical processing. The presence of AI within an automated workflow does not eliminate the distinction between inference logic and execution control.

Are AI tools always part of automated workflows?

AI tools may operate within automated environments, but they are not inherently synonymous with automation. An AI system can generate analytical outputs that require interpretation or validation before downstream execution occurs. Automation refers to process execution and orchestration, whereas AI refers to inference-based processing.

Do automation tools use machine learning?

Automation tools are generally defined by rule-based or event-driven logic rather than by machine learning models. While automation frameworks may trigger AI processes, the automation mechanism itself typically relies on deterministic configuration rules.

Do AI tools and automation tools require different governance structures?

Both AI tools and automation tools require governance, but oversight structures differ according to system characteristics. AI governance often focuses on model monitoring, data dependency, and inference variability, while automation governance focuses on rule validation, execution traceability, and configuration management. Governance requirements reflect structural properties rather than relative system importance.

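What is orchestration in automation tools?

In the sense used in this article, orchestration is the coordination of workflow stages by an automation layer. This covers deciding when tasks are triggered, in what order they run, how information is routed between systems, and how exceptions or escalations are handled. Orchestration may include calls to AI inference components, but the sequencing and routing logic itself is defined through explicit rules or process configurations.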

Can automation tools operate without AI inference components?

Yes. Many automation tools operate entirely through rule-based execution, predefined workflows, and deterministic process logic. Automation does not inherently require machine learning or inference modules, although automation systems may integrate AI components when analytical processing is required.

A classification-based overview of AI system categories is available in types of AI tools used.

Conclusion

AI tools and automation tools represent distinct but complementary components within structured workflow architectures. Their differences are rooted in processing logic, system placement, and governance orientation rather than in product category or technological branding. AI tools operate through model-based inference mechanisms that analyze data patterns and generate probabilistic outputs. Automation tools operate through rule-based execution and orchestration mechanisms that coordinate task sequencing and enforce process control.

Throughout this article, the distinction has been examined across conceptual definitions, core processing logic, workflow placement, system boundaries, governance structures, and areas of overlap. These perspectives clarify that AI tools function primarily as analytical or inference modules, while automation tools function as control and execution modules within broader digital environments.

In hybrid architectures, both system types may coexist within the same workflow. Automation layers may initiate or coordinate AI inference stages, while oversight checkpoints ensure traceability and accountability across system boundaries. Integration does not, however, remove their structural differences: each operates under a different control paradigm and introduces distinct dependencies.

From a systems perspective, AI tools and automation tools are best understood not as interchangeable technologies but as components with separate functional logics embedded within socio-technical processes. Clarifying these distinctions supports more precise interpretation of workflow diagrams, governance documentation, and architectural boundaries without implying superiority or prescribing implementation choices.

References

Dumas, M., La Rosa, M., Mendling, J., & Reijers, H. A. (2018). Fundamentals of business process management (2nd ed.). Springer.

IEEE. (2021). IEEE 7000-2021: Model process for addressing ethical concerns during system design. https://standards.ieee.org/standard/7000-2021.html

ISO/IEC. (2022). ISO/IEC 22989:2022: Artificial intelligence — Concepts and terminology. International Organization for Standardization. https://www.iso.org/standard/74296.html

ISO/IEC. (2022). ISO/IEC 23053:2022: Framework for artificial intelligence (AI) systems using machine learning (ML). International Organization for Standardization. https://www.iso.org/obp/ui/

ISO/IEC. (2023). ISO/IEC 23894:2023: Information technology — Artificial intelligence — Risk management. International Organization for Standardization. https://www.iso.org

National Institute of Standards and Technology. (2023). Artificial Intelligence Risk Management Framework (AI RMF 1.0). https://nvlpubs.nist.gov/nistpubs/ai/nist.ai.100-1.pdf

Russell, S., & Norvig, P. (2021). Artificial intelligence: A modern approach (4th ed.). Pearson.

Weske, M. (2019). Business process management: Concepts, languages, architectures (3rd ed.). Springer.