Definition — Partial Prompt Emphasis

AI tools may appear to ignore parts of a prompt when certain tokens receive stronger contextual weighting during response generation, causing other elements to be less represented in the output.

AI tools generate responses using statistical language models trained on large collections of text data. When a prompt is submitted, the system processes the input as a sequence of tokens and analyzes statistical relationships among those tokens.

Based on these relationships, the model estimates which output tokens are most probable at each step of response generation. Because response generation relies on statistical patterns rather than human-style reasoning, some instructions within a prompt may receive stronger contextual emphasis than others.

As a result, certain prompt elements may appear less represented in the final response. This phenomenon is often described as an AI tool ignoring parts of a prompt.

AI tools may emphasize only part of a prompt because language models interpret prompts as sequences of tokens and assign different levels of contextual influence to those tokens during response generation. When prompts contain multiple instructions, long contextual descriptions, or ambiguous phrasing, certain tokens may receive stronger attention weighting, causing the generated output to focus on specific instructions while other elements receive less emphasis.

This discussion focuses on how attention distribution and contextual competition influence the relative emphasis of instructions within a prompt, which differs from semantic misinterpretation or ambiguity-based interpretation processes.

How AI Tools Process a Prompt

Before generating a response, an AI language model converts the prompt into an internal representation that can be processed computationally.

This process typically includes several stages:

Tokenization

The prompt text is divided into tokens representing words, subwords, or symbols. These tokens form the fundamental units used by the model during processing.

Context Construction

The model evaluates how tokens relate to one another within the surrounding sequence to construct contextual meaning. These relationships help form a contextual representation of the prompt.

This process is further explained in: How AI Tools Interpret Prompts

Probability Estimation

Based on statistical patterns learned during training, the model predicts which tokens are most likely to follow during response generation.

Because interpretation depends on statistical relationships rather than explicit task planning, complex prompts may cause certain instructions to receive stronger contextual emphasis than others.
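The probability estimation step can be illustrated with a softmax over raw scores. This is a minimal sketch, not any particular model's implementation: the candidate tokens and their scores below are hypothetical values chosen for illustration.

```python
import math

def softmax(logits: list[float]) -> list[float]:
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical scores for three candidate next tokens.
candidates = ["examples", "summary", "table"]
logits = [2.0, 1.0, 0.1]

probs = softmax(logits)
for token, p in zip(candidates, probs):
    print(f"{token}: {p:.3f}")
```

The candidate with the highest score receives the largest share of probability, but the other candidates keep a nonzero chance of being selected.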

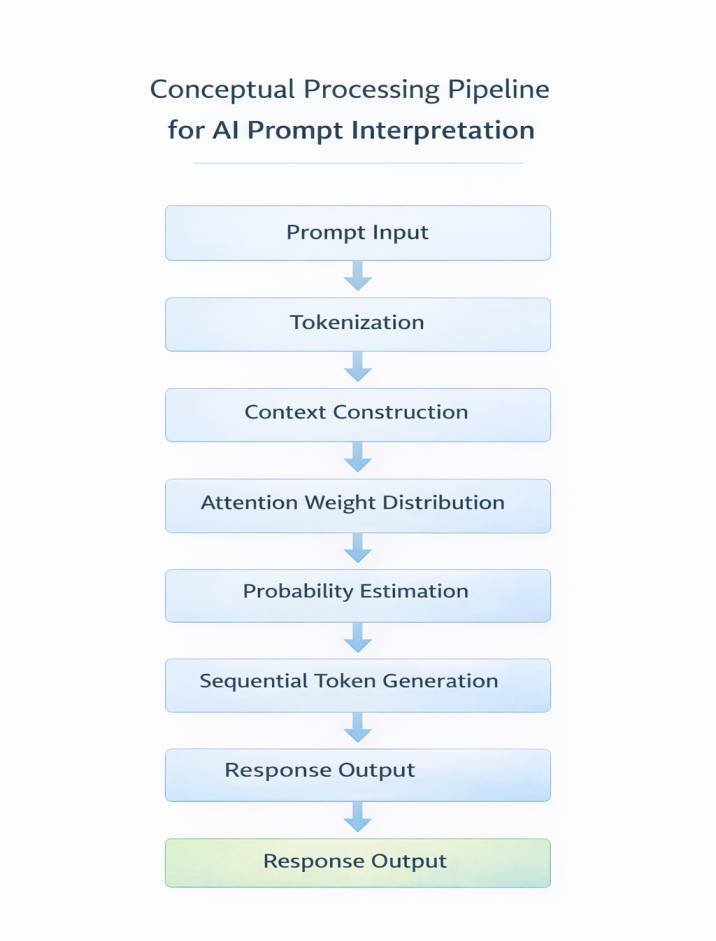

Conceptual Processing Pipeline for AI Prompt Interpretation

The interpretation of prompts in AI language models can be understood as a sequence of computational stages that transform user input into generated text. Although these stages occur within a unified model architecture, they represent distinct analytical processes. Each stage contributes to how the model interprets relationships among tokens before generating output.

A simplified conceptual model includes the following stages:

Prompt Input

↓

Tokenization

↓

Context Construction

↓

Attention Weight Distribution

↓

Probability Estimation

↓

Sequential Token Generation

↓

Response Formation

Within this pipeline, attention mechanisms determine how strongly different tokens influence prediction during response generation.

This pipeline provides a structural overview of how prompt interpretation occurs during response generation. The broader system context is covered in AI Tool Architecture Explained: Layers and Functional Dependencies.

Each stage in this pipeline contributes differently to how instructions are interpreted during response generation.

Tokenization and Instruction Representation

Tokenization is the first stage of prompt interpretation and forms part of the processing pipeline described in What Happens Inside an AI Tool After You Click “Generate”?

A prompt such as:

“Explain how AI tools interpret prompts and provide three examples of prompt failures”

may be converted into a dozen or more tokens, depending on the tokenization method used by the model. Each token becomes part of the sequence used by the model during interpretation.

Within this sequence:

- tokens representing different instructions coexist in the same context

- relationships between tokens influence interpretation patterns

- statistical importance varies depending on patterns seen during training

If one part of the prompt aligns strongly with learned patterns, the model may generate output that focuses primarily on that portion.
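To make the tokenization of the example prompt concrete, the sketch below splits it into word and punctuation tokens. The `toy_tokenize` function is a hypothetical stand-in: production models typically use learned subword vocabularies, so real token boundaries and counts will differ.

```python
import re

def toy_tokenize(text: str) -> list[str]:
    """Split text into lowercase word and punctuation tokens.

    Simplified illustration only; real tokenizers usually operate
    on subword units learned from data.
    """
    return re.findall(r"\w+|[^\w\s]", text.lower())

prompt = ("Explain how AI tools interpret prompts and provide "
          "three examples of prompt failures")
tokens = toy_tokenize(prompt)
print(len(tokens), tokens)
```

Note that the tokens for both instructions ("explain" and "provide ... examples") end up in one flat list, which is the sense in which instructions coexist in the same context.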

This stage is also related to how prompts are defined and structured: What is a Prompt in AI Tools

This mechanism operates in conjunction with contextual representation, attention distribution, and probabilistic evaluation, contributing to how different parts of a prompt are emphasized during response generation.

Instruction Competition in Multi-Instruction Prompts

Many prompts contain multiple instructions that the system must interpret simultaneously, a phenomenon related to Why AI Tools Misinterpret Prompts. These instructions may request different actions such as explanation, summarization, formatting, or example generation.

Because the language model interprets the entire prompt as a single contextual sequence, different instructions may exert varying levels of influence during response generation.

Examples of instructions that may appear together in a prompt include:

- explain a concept

- provide examples

- summarize key points

- format the response in a particular structure

When several instructions appear within the same prompt, the model must interpret them within a unified contextual representation. During this process, several interaction patterns may occur.

Instruction dominance

Some instructions may align more closely with patterns observed in the training data and therefore receive stronger contextual influence during response generation.

Instruction overlap

Instructions containing similar wording or closely related concepts may reinforce each other, increasing their contextual influence within the prompt.

Instruction conflict

Instructions that differ in scope, structure, or intent may produce competing contextual signals during interpretation.

Because the model generates responses sequentially, it may prioritize the instruction that appears most strongly within the statistical context.

Prompt Structure and Instruction Prioritization

Prompt structure can influence how instructions are interpreted by a language model.

When multiple instructions appear within a single prompt, the model processes them as part of one continuous token sequence. The arrangement of instructions, sentence boundaries, and formatting can affect how contextual relationships are constructed during interpretation.

Prompts that combine several tasks within a single sentence, such as a request for explanation, summarization, and formatting all at once, may create overlapping or competing contextual signals. In contrast, prompts that separate instructions into clearly defined segments can create more distinct contextual relationships.

Several structural characteristics may influence how instructions are represented within the prompt context.

Instruction ordering

Instructions appearing earlier in the prompt may sometimes receive stronger contextual influence within the token sequence. This effect can occur because earlier tokens participate in contextual relationships that shape attention distributions during response generation.

Instruction segmentation

Instructions separated using line breaks, numbered steps, or lists may create clearer contextual boundaries compared to instructions embedded within long sentences.

Instruction nesting

Complex sentences containing multiple nested instructions may produce overlapping contextual signals that compete during interpretation.

Formatting signals

Elements such as punctuation, bullet lists, and paragraph boundaries may influence how tokens are grouped during contextual processing.

Together, these structural characteristics contribute to how different instructions are represented within the prompt context during response generation.

This mechanism operates in conjunction with contextual representation and attention distribution, influencing how instruction prioritization emerges during response generation.
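As an illustration of instruction segmentation, the sketch below places each instruction on its own numbered line. The helper name `build_segmented_prompt` and the prompt wording are hypothetical, and segmenting a prompt this way may or may not change how a given model responds.

```python
def build_segmented_prompt(topic: str, instructions: list[str]) -> str:
    """Return a prompt with one numbered instruction per line."""
    lines = [f"Topic: {topic}", "", "Complete each step in order:"]
    lines += [f"{number}. {text}"
              for number, text in enumerate(instructions, start=1)]
    return "\n".join(lines)

segmented = build_segmented_prompt(
    "how AI tools interpret prompts",
    [
        "Explain the concept.",
        "Provide three examples of prompt failures.",
        "Summarize the key points.",
    ],
)
print(segmented)
```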

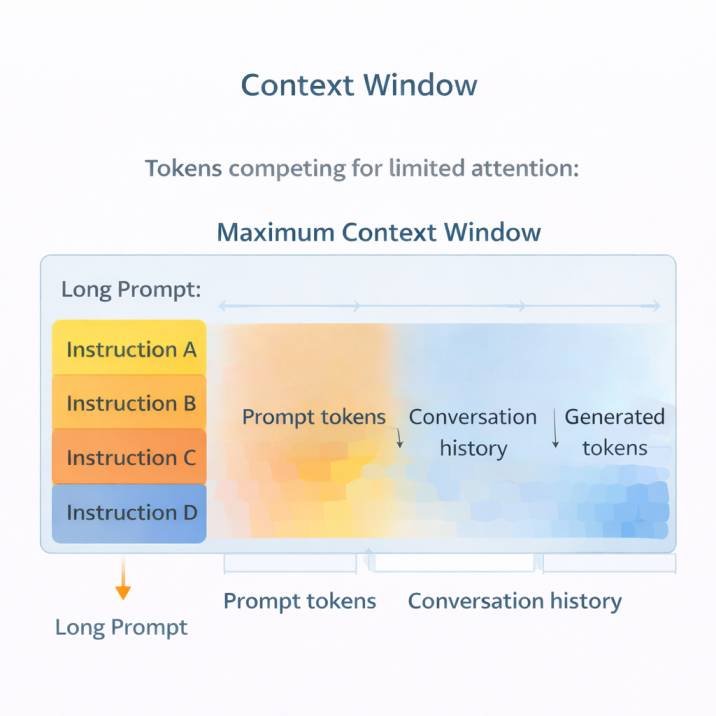

Context Window Constraints

Every language model operates within a finite context window during the inference process described in Inference in AI Tools: Meaning and Output Generation Process. This window includes:

• the prompt provided by the user

• earlier messages in a conversation

• tokens generated during the response

Within this limited space, tokens compete for contextual influence during prediction.

When prompts become long or contain multiple sections, the model may assign stronger contextual weighting to certain segments while others receive less influence. This dynamic can contribute to situations where responses appear to ignore later instructions or secondary questions.
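The finite window can be pictured as a token budget shared by conversation history, the prompt, and the response. The sketch below shows one hypothetical policy an application might apply before calling a model: keep the prompt whole, reserve room for output, and drop the oldest history tokens first. The function name, parameters, and policy are assumptions for illustration, not a description of any specific tool.

```python
def fit_to_window(history: list[str], prompt: list[str],
                  window_size: int, reserved_for_output: int) -> list[str]:
    """Keep the prompt whole and drop the oldest history tokens first."""
    budget = window_size - reserved_for_output
    if len(prompt) > budget:
        raise ValueError("prompt alone exceeds the available context budget")
    room_for_history = budget - len(prompt)
    # Guard against history[-0:], which would return the full list.
    kept_history = history[-room_for_history:] if room_for_history > 0 else []
    return kept_history + prompt

history = ["h1", "h2", "h3", "h4", "h5", "h6"]
prompt = ["p1", "p2", "p3"]
context = fit_to_window(history, prompt, window_size=8, reserved_for_output=2)
print(context)
```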

Transformer Architecture and Prompt Interpretation

Many modern AI language models are based on transformer architectures, a framework introduced in the research paper Attention Is All You Need by Vaswani et al. (2017). These architectures function as core components within Core Structural Components of AI Tools: A Conceptual Systems Overview and are further described in AI Tool Architecture Explained: Layers and Functional Dependencies.

Transformer models analyze relationships between tokens using attention-based computations rather than relying solely on sequential processing. Instead of evaluating words strictly in order, the architecture allows the model to compare tokens across the entire prompt context.

Within this framework, each token in the prompt can interact with other tokens through weighted connections that represent contextual relationships.

Because transformer models rely heavily on attention mechanisms, the distribution of attention across tokens plays a central role in determining how prompts are interpreted during response generation.
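For reference, the scaled dot-product attention defined in Attention Is All You Need (Vaswani et al., 2017) can be written as follows. Here Q, K, and V denote the query, key, and value matrices derived from the token representations, and d_k is the dimensionality of the keys. The softmax term yields the weights that this article refers to as the attention distribution.

```latex
\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\!\left(\frac{Q K^{\top}}{\sqrt{d_k}}\right) V
```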

Attention Mechanisms in Language Models

Modern language models rely on computational mechanisms known as attention mechanisms to determine how different parts of a prompt influence response generation.

Within the model architecture, attention mechanisms evaluate relationships between tokens across the prompt sequence and assign each token a relative influence. These weighted connections determine which parts of the prompt contribute most strongly to subsequent token predictions.

Several characteristics of attention mechanisms influence prompt interpretation:

Token weighting

Different tokens within the prompt may receive different levels of attention depending on their contextual relationships. Tokens that appear more strongly associated with learned patterns may exert greater influence on the generated output.

Instruction proximity

Instructions that appear close to one another in the token sequence may reinforce their contextual influence. In contrast, instructions separated by long segments of text may receive weaker relative attention.

Contextual reinforcement

If certain tokens repeatedly appear in related contexts during model training, the model may assign stronger attention weights to those tokens when similar patterns appear in prompts.

Because response generation relies on these attention-based relationships, the model may emphasize particular instructions or concepts more strongly than others. This mechanism contributes to situations in which responses appear to prioritize some elements of a prompt while other elements receive less emphasis.
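Token weighting can be sketched for a single query vector. The example below computes scaled dot-product scores and normalizes them with a softmax. The two-dimensional vectors are hypothetical values chosen for illustration; real models learn high-dimensional vectors during training and use many attention heads and layers.

```python
import math

def attention_weights(query: list[float],
                      keys: list[list[float]]) -> list[float]:
    """Scaled dot-product scores for one query, normalized with softmax."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical key vectors for three instruction tokens.
tokens = ["explain", "examples", "summarize"]
keys = [[1.0, 0.0], [0.6, 0.4], [0.0, 1.0]]
query = [2.0, 0.0]  # a query that happens to align with "explain"

weights = attention_weights(query, keys)
for token, weight in zip(tokens, weights):
    print(f"{token}: {weight:.3f}")
```

In this toy setup the token whose key aligns most closely with the query receives the largest weight, while the others still contribute in smaller proportions.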

Sequential Nature of Response Generation

AI text systems generate responses one token at a time as part of the probabilistic generation process described in Why AI Tools Give Different Answers to the Same Question: Understanding Probabilistic AI Generation. Each predicted token becomes part of the growing context used to determine the next token.

The process can be summarized as follows:

- The model analyzes the prompt context.

- It estimates a probability distribution over possible next tokens and selects one, often the most probable.

- The predicted token is appended to the output sequence.

- The updated sequence becomes the context for the next prediction.
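The steps above can be sketched as a simple loop. This is a toy illustration under strong assumptions: the `NEXT_TOKEN_PROBS` table is hypothetical, it conditions only on the most recent token (real models condition on the whole context), and it always picks the most probable token, whereas deployed systems often sample.

```python
# Hypothetical next-token probabilities, keyed by the most recent token.
NEXT_TOKEN_PROBS = {
    "<start>": {"models": 0.7, "prompts": 0.3},
    "models": {"predict": 0.8, "generate": 0.2},
    "predict": {"tokens": 0.9, "text": 0.1},
    "tokens": {"<end>": 1.0},
}

def generate(max_tokens: int = 10) -> list[str]:
    """Greedy generation: append the most probable token at each step."""
    context = ["<start>"]
    while len(context) <= max_tokens:
        distribution = NEXT_TOKEN_PROBS.get(context[-1])
        if distribution is None:
            break  # no known continuation for this token
        next_token = max(distribution, key=distribution.get)
        if next_token == "<end>":
            break
        context.append(next_token)  # the output becomes part of the context
    return context[1:]

output = generate()
print(output)
```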

Because each step depends on the previously generated tokens, the response may gradually move toward a particular topic or instruction even if the original prompt contained several tasks. Once a generation path begins, later tokens tend to reinforce the direction established by earlier predictions.

The generated output therefore interacts continuously with the original prompt during response generation. As new tokens are produced, they become part of the contextual sequence that influences subsequent predictions.

Several dynamics can emerge during this process:

Topic reinforcement

Once the model begins generating tokens associated with a particular concept, subsequent predictions are influenced by that emerging topic. This can gradually narrow the focus of the response toward one part of the prompt.

Instruction drift

If the early portion of the generated response emphasizes one instruction more strongly, later tokens may continue expanding that instruction while other prompt elements remain less represented.

Context accumulation

As the response grows longer, the generated tokens occupy part of the context window alongside the original prompt. This evolving context can shift the weighting of instructions that initially appeared in the prompt.

These dynamics illustrate how prompt interpretation does not occur only at the beginning of the response generation process. Instead, interpretation continues to evolve as the model produces new tokens and incorporates them into the contextual sequence.

Ambiguity in Natural Language Prompts

Human language often allows multiple interpretations of the same statement. AI systems interpret prompts through statistical relationships between tokens rather than human reasoning. As a result, prompts containing ambiguous phrasing may lead to responses that emphasize different parts of the instruction.

Ambiguity may appear in prompts that contain:

- vague instructions

- indirect phrasing

- nested conditions

- complex sentence structures

For example, a prompt such as:

“Describe AI prompt interpretation and also explain how prompt errors happen in detail”

contains two related but distinct instructions. Depending on the contextual relationships among tokens, the system may generate a response that emphasizes one instruction more strongly than the other.

This behavior reflects the model’s reliance on statistical language patterns rather than explicit task planning.

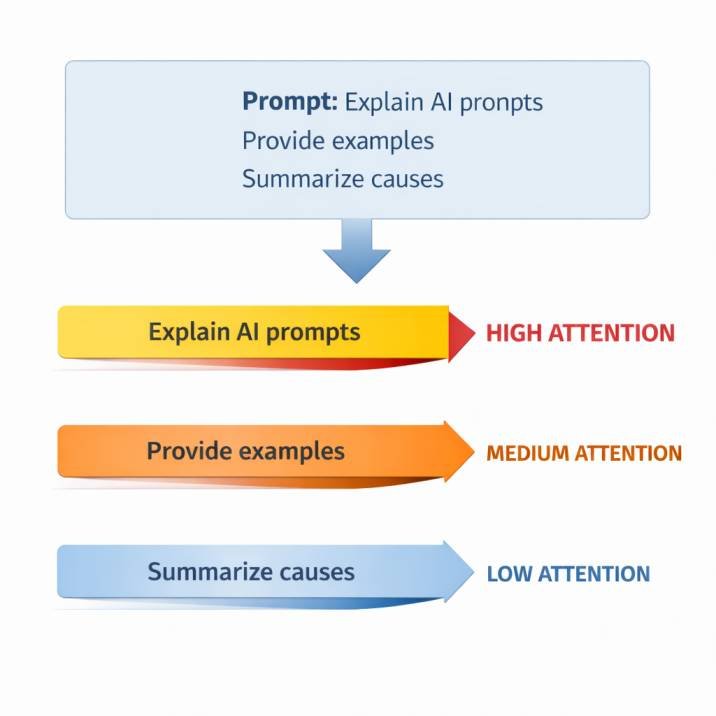

Example of Multi-Instruction Prompt Interpretation

A prompt may contain several requests within a single instruction sequence. For example:

“Explain how AI language models interpret prompts, provide two examples of prompt errors, and summarize the key causes.”

Within this prompt, the model must interpret multiple tasks:

• explain a concept

• provide examples

• summarize key points

Because the system evaluates all tokens within a single contextual representation, different instructions may receive different levels of contextual influence during response generation.

In some cases, the generated output may emphasize one instruction more strongly than the others.

Influence of Training Data Patterns

The interpretation of prompts is influenced by patterns present in the data used during model training, which differs from runtime prompt input as explained in Why AI Tools Don’t Learn From Your Prompts (Training vs Input Data).

During training, language models learn statistical relationships between words, phrases, and contextual structures that frequently appear together. These learned patterns influence how the model interprets prompts during later use.

If a particular prompt structure frequently appears in the training data with a specific response pattern, the model may prioritize that pattern when generating output.

As a result:

- certain instructions may receive stronger contextual weighting

- familiar prompt patterns may dominate interpretation

- uncommon or unusual prompt structures may produce less predictable responses

This behavior reflects how statistical models generalize patterns learned from large text datasets.
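A very rough way to see how frequent patterns can dominate is to count which word follows another in a small corpus. The sketch below is a toy illustration only: the corpus is invented, and language models learn far richer relationships than adjacent-word counts.

```python
from collections import Counter, defaultdict

def count_continuations(corpus: list[str]) -> dict[str, Counter]:
    """Count how often each word is followed by each other word."""
    table: dict[str, Counter] = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, following in zip(words, words[1:]):
            table[current][following] += 1
    return table

# Hypothetical training sentences.
toy_corpus = [
    "explain the concept",
    "explain the concept clearly",
    "explain the steps",
    "summarize the article",
]

table = count_continuations(toy_corpus)
most_common_word, count = table["the"].most_common(1)[0]
print(most_common_word, count)
```

Here the continuation seen most often after "the" would be favored by a purely frequency-based predictor, which mirrors how familiar patterns may outweigh less common ones.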

Interaction Between Prompt Length and Interpretation

Prompt length also influences interpretation. Longer prompts introduce more tokens into the contextual sequence, which can affect how attention is distributed across instructions.

Long prompts may include:

- background explanations

- multiple instructions

- formatting requirements

- examples or references

When many tokens appear in the prompt, the model must balance contextual relationships across the entire sequence.

In such cases, several patterns may emerge:

- early instructions sometimes exerting stronger contextual influence during interpretation

- middle sections reinforcing contextual themes

- later instructions receiving less contextual emphasis

The result can be a response that addresses some parts of the prompt while other elements appear less prominent in the output.
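One way to picture the effect of length is that softmax-normalized weights must sum to 1, so a token's share tends to shrink as more tokens compete with it. The sketch below assumes a single instruction token with a fixed score advantage over a growing number of other tokens. The scores are hypothetical, and real attention patterns are considerably more complex.

```python
import math

def instruction_share(n_other_tokens: int,
                      instruction_score: float = 2.0,
                      other_score: float = 0.0) -> float:
    """Softmax weight of one instruction token among n_other_tokens others."""
    top = math.exp(instruction_score)
    return top / (top + n_other_tokens * math.exp(other_score))

for n in (10, 100, 1000):
    print(n, round(instruction_share(n), 4))
```

Even though the instruction token keeps the same score, its normalized weight falls as the number of competing tokens grows.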

Variability Across AI Language Models

Prompt interpretation behavior is not identical across AI language models. Differences in architecture, training data, and context window size may contribute to this variation.

Although many modern systems rely on transformer-based architectures, implementation details such as training data composition, model scale, and context window size can influence prompt interpretation behavior.

Some factors that contribute to variability include:

Model scale

Larger models trained on broader datasets may develop stronger representations of certain language patterns. This can influence how prompts containing multiple instructions are interpreted.

Training data distribution

The frequency with which particular prompt structures appear in training datasets can affect how models respond to similar prompts during use.

Context window size

Models with larger context windows can analyze longer prompts without discarding earlier tokens. With smaller context windows, earlier content may be truncated or omitted, so only some segments of a long prompt remain available to influence the response.

Because of these architectural and training differences, prompt interpretation behavior may vary across AI tools even when the same prompt is provided.

Additional factors may also contribute to differences in prompt interpretation across AI systems. These can include variations in model alignment procedures, instruction-tuning datasets, and architectural modifications introduced during system development. Because these factors influence how models interpret contextual signals, responses to the same prompt may differ across AI tools even when the underlying architecture shares similar principles.

Observable Patterns in AI Tool Responses

These mechanisms lead to several observable response patterns when AI tools process complex prompts.

These include:

Partial task completion

The response addresses one instruction but not others.

Instruction prioritization

One instruction receives stronger contextual emphasis than other instructions.

Topic narrowing

The response gradually focuses on one aspect of the prompt during generation.

Format dominance

Formatting instructions may influence structure while content instructions receive less emphasis.

These observable behaviors can be summarized in the following response pattern categories.

| Response Pattern | Description |
|---|---|
| Partial task completion | The response addresses only one instruction within a multi-instruction prompt |
| Instruction prioritization | One instruction receives stronger contextual emphasis than others |
| Topic narrowing | The generated response gradually concentrates on a single topic |
| Format dominance | Formatting instructions influence response structure more strongly than content instructions |

Relationship to Other AI System Behaviors

The behavior described in this article is related to several broader characteristics of AI language models.

These include:

- variability in responses to similar prompts

- sensitivity to prompt structure and wording

- dependence on contextual representation within the model

Understanding these behaviors provides insight into how AI tools interpret prompts during response generation. The mechanisms discussed throughout this article illustrate how prompt interpretation emerges from several interacting components within modern language models.

Conclusion

AI tools generate responses through statistical language models that process prompts as sequences of tokens and estimate the probability of subsequent tokens during output generation. Within this process, prompts containing multiple instructions, ambiguous phrasing, or extended contextual information can introduce competing contextual signals.

Because response generation occurs incrementally within a limited context window, different parts of a prompt may receive varying levels of contextual influence during prediction.

As a result, the generated response may emphasize certain instructions or concepts while other elements of the prompt appear less represented. These dynamics illustrate how prompt interpretation in AI systems reflects statistical relationships within language models rather than explicit task planning.

Frequently Asked Questions (FAQs)

Why do AI tools sometimes emphasize only part of a prompt?

AI language models interpret prompts as sequences of tokens and assign different levels of contextual influence to those tokens through attention mechanisms. When prompts contain multiple instructions or long contextual descriptions, some tokens may exert stronger influence during prediction, causing the generated response to emphasize particular parts of the prompt.

What is instruction competition in AI prompts?

Instruction competition occurs when multiple instructions appear within the same prompt. Because the model processes the entire prompt as a single contextual sequence, different instructions may exert varying levels of influence during response generation depending on their contextual relationships within the token sequence.

How does the context window affect prompt interpretation?

Language models operate within a limited context window that includes prompt tokens, conversation history, and generated tokens. When prompts are long or contain multiple sections, tokens compete for contextual influence within this limited space, which may affect how different instructions are represented during response generation.

Related Concepts in AI Prompt Processing

Several mechanisms influence how AI systems interpret prompts during response generation. The following topics are closely related to the processes described in this article and explain additional components of AI language model behavior.

• Tokenization in language models

Describes how text prompts are divided into tokens that form the basic units used during AI processing.

• Context windows in AI systems

Explains how prompts, conversation history, and generated tokens occupy a limited contextual space during response generation.

• Attention mechanisms in transformer models

Examines how language models assign different levels of contextual influence to tokens when predicting output.

• Sequential token generation

Describes how AI systems generate responses one token at a time while continuously updating the contextual sequence.

References

Examples of research and technical frameworks relevant to AI language model interpretation include:

- Vaswani, A., Shazeer, N., Parmar, N., et al. (2017). Attention Is All You Need. https://arxiv.org/abs/1706.03762

- National Institute of Standards and Technology (NIST). Artificial Intelligence Risk Management Framework (AI RMF 1.0). https://www.nist.gov/itl/ai-risk-management-framework

- Stanford Institute for Human-Centered Artificial Intelligence. AI Index Report. https://aiindex.stanford.edu/report/

- Brown, T. et al. (2020). Language Models Are Few-Shot Learners. https://arxiv.org/abs/2005.14165

- Bender, E., Gebru, T., McMillan-Major, A., & Shmitchell, S. (2021). On the Dangers of Stochastic Parrots: Can Language Models Be Too Big? https://dl.acm.org/doi/10.1145/3442188.3445922

These materials provide conceptual foundations for understanding the mechanisms that influence prompt interpretation in modern AI systems.
