Content type: Educational Explainer

Introduction

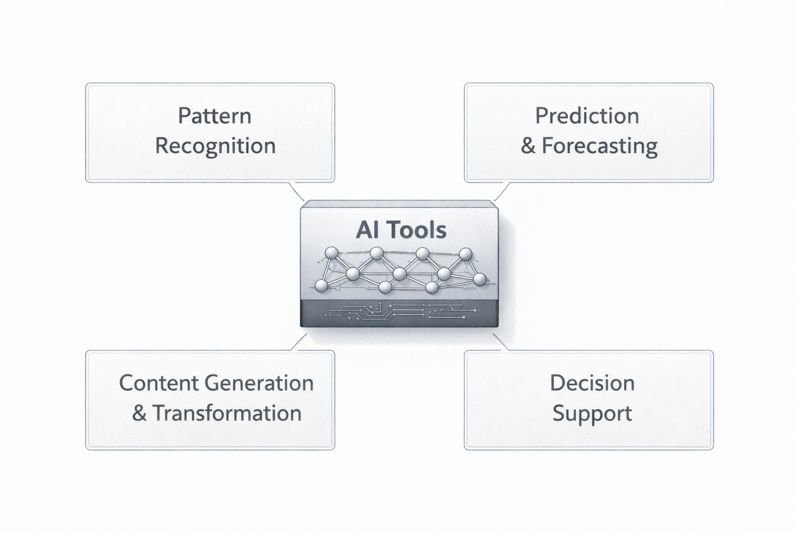

In institutional and academic literature, AI tools are described as technical components embedded within a wide range of digital and organizational systems. While the term “AI” is often used broadly, AI tools represent a specific and limited layer within larger socio-technical systems. They are not autonomous entities or complete systems on their own, but technical components designed to perform defined computational tasks.

Understanding how AI tools are categorized requires distinguishing between different types of AI tools and the system structures that organize their integration. Many public discussions conflate AI tools with broader concepts such as automation, intelligence, or decision-making authority.

The scope of this article is limited to describing types of AI tools and their typical functions within digital systems. It does not provide instructions, recommendations, performance comparisons, or tool endorsements. The focus is on conceptual clarity rather than practical guidance.

A broader conceptual and structural framing of AI tools is presented in AI Tools Explained - Conceptual Foundations of AI Tools in Institutional Systems.

AI Tools for Data Interpretation and Pattern Recognition

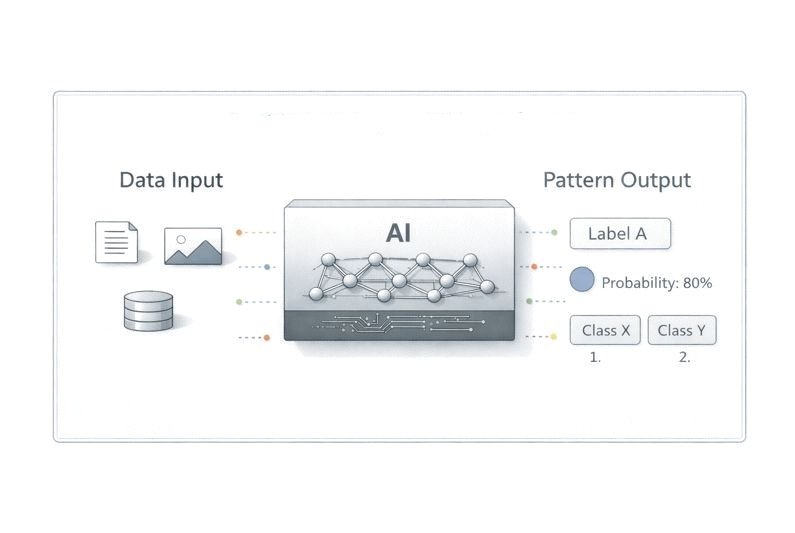

One common category of AI tools described within digital systems includes those designed for data interpretation and pattern recognition. Within institutional classifications, this category refers to systems that process structured or unstructured data to identify recurring statistical patterns.

Such tools are typically used for classification and pattern detection. They rely on statistical or machine-learning models trained on datasets that represent specific domains, and their outputs typically take the form of labels, probabilities, or ranked results rather than definitive conclusions.
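To make the idea of "labels, probabilities, or ranked results" concrete, the following is a minimal sketch of how raw model scores might be turned into a ranked list of label probabilities. The function name `rank_labels`, the example labels, and the scores are hypothetical and chosen only for illustration; the softmax conversion is one common choice, not a method prescribed by the literature discussed here.

```python
import math

def rank_labels(scores):
    """Convert raw model scores into probabilities (softmax) and rank them."""
    # Subtract the largest score before exponentiating for numerical stability.
    peak = max(scores.values())
    exps = {label: math.exp(score - peak) for label, score in scores.items()}
    total = sum(exps.values())
    probs = {label: value / total for label, value in exps.items()}
    # Highest probability first. The tool stops here: it does not decide
    # what should be done with the ranking.
    return sorted(probs.items(), key=lambda item: item[1], reverse=True)

# Hypothetical raw scores from some upstream classifier (an assumption).
ranked = rank_labels({"invoice": 2.0, "receipt": 1.0, "contract": 0.1})
for label, prob in ranked:
    print(f"{label}: {prob:.2f}")
```

The output is a ranked set of likelihoods, not a conclusion. Any threshold for acting on the top label would be set elsewhere in the workflow.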

These tools function within clearly defined boundaries. They do not understand context in a human sense, nor do they determine how their outputs should be used. Interpretation and decision-making occur outside the tool, within a broader workflow or human-led process. Standards-based frameworks emphasize evaluating these tools in terms of validity, reliability, and contextual appropriateness rather than assuming general intelligence.

In digital systems, pattern recognition tools are often embedded as modular components. This modularity allows them to be assessed, updated, or replaced without altering the overall system architecture. Their role is limited to transforming inputs into structured outputs based on learned patterns.

Boundary Clarifications and Common Misconceptions

Discussions of pattern recognition tools often blur the distinction between detecting patterns and understanding meaning. While such tools can identify statistical regularities in data, they do not interpret significance, intent, or consequence in a human sense. Treating pattern recognition outputs as explanations rather than correlations can lead to overinterpretation.

These tools are also sometimes described as broadly “intelligent” because they perform tasks that resemble human perception, such as recognizing images or speech. In practice, their operation is narrowly defined by training data and model structure. Recognizing these boundaries is essential for understanding both the strengths and the limits of pattern recognition within digital systems.

AI Tools for Prediction and Forecasting

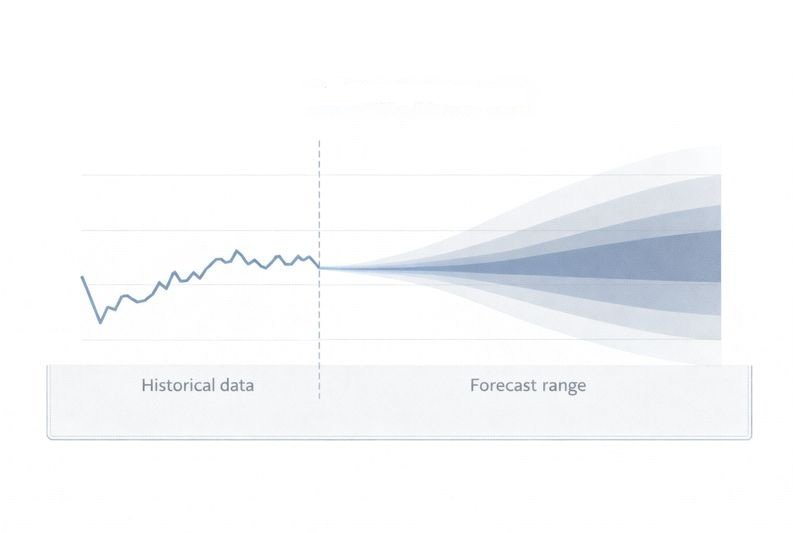

Another category of AI tools focuses on prediction and forecasting. In standards-based taxonomies, predictive AI tools are characterized as systems that produce probabilistic estimates derived from historical data distributions.

Prediction tools operate by identifying relationships between variables in past data and extrapolating those relationships under specified assumptions. Their outputs are inherently uncertain and typically expressed as ranges, confidence levels, or likelihood scores. Within institutional analysis, this uncertainty is framed as a structural characteristic of probabilistic systems rather than as a system fault.
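As an illustration of extrapolating past relationships into a range rather than a single value, the sketch below fits a straight-line trend to a short history and reports an estimate with a rough band around it. Everything here is an assumption made for the example: the function name `forecast_with_range`, the sample data, the linear-trend assumption, and the band of two standard deviations of past errors, which is a simple heuristic and not a formal prediction interval.

```python
import statistics

def forecast_with_range(history, steps_ahead=1, width=2.0):
    """Fit a straight-line trend and return (low, estimate, high)."""
    n = len(history)
    xs = list(range(n))
    x_mean = statistics.fmean(xs)
    y_mean = statistics.fmean(history)
    # Ordinary least-squares slope and intercept.
    sxx = sum((x - x_mean) ** 2 for x in xs)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, history))
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean
    # Spread of past errors around the fitted line.
    residuals = [y - (intercept + slope * x) for x, y in zip(xs, history)]
    spread = statistics.pstdev(residuals)
    # Extrapolate the trend, assuming the past relationship continues to hold.
    estimate = intercept + slope * (n - 1 + steps_ahead)
    return estimate - width * spread, estimate, estimate + width * spread

# Hypothetical historical observations (an assumption for illustration).
low, estimate, high = forecast_with_range([100, 104, 109, 113, 120])
print(f"estimate {estimate:.1f}, plausible range {low:.1f} to {high:.1f}")
```

The band widens when past data fit the trend poorly. If the linear assumption stops holding, both the estimate and the band lose their meaning, which is why the uncertainty is treated as structural.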

Within institutional system architectures, predictive AI tools are rarely treated as standalone components. They are integrated into workflows that include human review, policy constraints, and contextual interpretation. The tool itself does not determine whether or how a prediction should influence decisions.

Educational discussions emphasize that predictive tools reflect the data and assumptions used during their development. Changes in data quality, context, or external conditions can affect their reliability. As a result, governance frameworks often require that predictive outputs be monitored and periodically reassessed within the systems that use them.

Uncertainty as a Structural Feature of Predictive Tools

Uncertainty is not an incidental property of predictive AI tools but a structural one. Predictions are generated by modeling relationships observed in historical data under specific assumptions. As a result, predictive outputs reflect probabilities rather than determinate future outcomes.

Misunderstandings arise when predictive outputs are treated as statements of certainty rather than estimates of likelihood. Changes in context, data quality, or external conditions can significantly alter the relevance of past patterns. For this reason, predictive tools are typically embedded within systems that include review mechanisms, contextual checks, and revision processes.

Understanding prediction as probabilistic estimation rather than foresight helps clarify why human interpretation and oversight remain necessary components of predictive workflows.

AI Tools for Content Generation and Transformation

Some AI tools are designed to generate or transform content, such as text, images, audio, or structured summaries. These tools produce outputs by modeling patterns observed in training data rather than by reasoning about meaning or intent.

Content-generation tools are commonly described as systems that produce textual, visual, or structured artifacts based on learned data patterns. Their outputs are typically treated as intermediate artifacts rather than final, authoritative products. Human oversight remains central to reviewing, validating, and contextualizing generated content.

From a systems perspective, these tools are constrained by design choices and usage policies. They do not establish goals, evaluate consequences, or assume responsibility for outcomes. Their function is limited to producing candidate outputs that may be accepted, modified, or rejected by humans or downstream processes.
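The idea that generated content is a candidate to be accepted, modified, or rejected can be sketched as a small review record. The names `Candidate`, `Status`, and `review`, along with the sample text and reviewer identifier, are hypothetical and introduced only for this illustration. Real systems would define their own review states and policies.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional

class Status(Enum):
    PENDING = "pending"
    ACCEPTED = "accepted"
    MODIFIED = "modified"
    REJECTED = "rejected"

@dataclass
class Candidate:
    text: str                         # output produced by a generation tool
    status: Status = Status.PENDING   # provisional until a human reviews it
    reviewer: Optional[str] = None

def review(candidate, reviewer, approve, revised_text=None):
    """Record a human decision on a generated candidate."""
    candidate.reviewer = reviewer
    if not approve:
        candidate.status = Status.REJECTED
    elif revised_text is not None and revised_text != candidate.text:
        candidate.text = revised_text
        candidate.status = Status.MODIFIED
    else:
        candidate.status = Status.ACCEPTED
    return candidate

# Hypothetical draft and reviewer (assumptions for illustration).
draft = Candidate(text="Quarterly summary draft produced by a generation tool.")
review(draft, reviewer="editor-1", approve=True,
       revised_text="Quarterly summary, checked against source figures.")
print(draft.status.value, "by", draft.reviewer)
```

The generation step never changes the status itself. Only the review step does, which mirrors the point that responsibility sits with the people or processes downstream of the tool.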

Institutional guidance emphasizes transparency when such tools are used and cautions against assuming accuracy or neutrality. The educational value of understanding this category lies in recognizing both its capabilities and its limitations within structured workflows.

Interpretive Limits of Generated Content

AI tools that generate or transform content operate by reproducing patterns present in training data. Although their outputs may appear coherent or contextually appropriate, these tools do not evaluate truth, relevance, or appropriateness independently. Generated content reflects statistical similarity rather than understanding.

Institutional literature emphasizes that fluent output should be interpreted as a communication artifact rather than evidence of accuracy or authority. In practice, content-generation tools may produce outputs that appear coherent but lack completeness or contextual grounding, particularly when operating outside well-represented contexts. Recognizing this limitation is central to understanding why generated outputs are treated as provisional within most digital systems.

This interpretive gap explains why institutional frameworks emphasize transparency and human review when content-generation tools are used in structured environments.

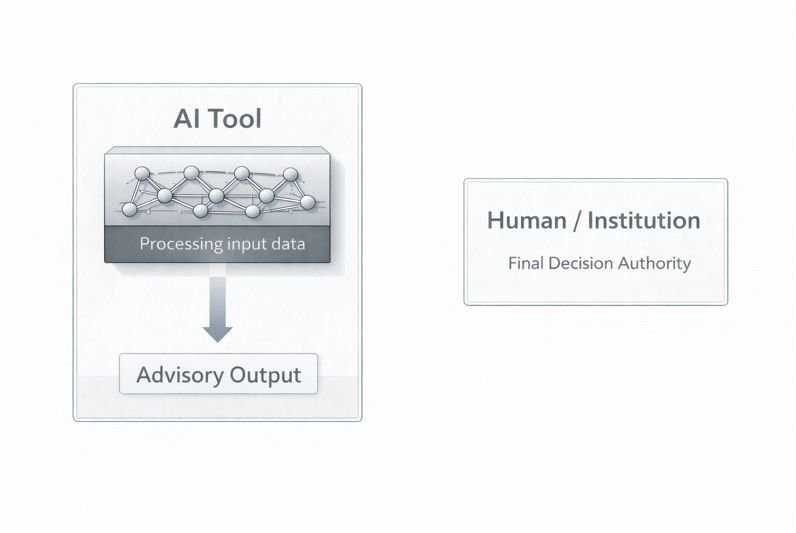

AI Tools for Decision Support and Recommendation

A further category includes AI tools that support decision-making by organizing information, ranking options, or highlighting relevant factors. These tools are often described as decision-support systems rather than decision-makers.

Decision-support AI tools may aggregate data from multiple sources, apply scoring models, or generate recommendations based on predefined criteria. Within governance-oriented documentation, these outputs are framed as advisory signals rather than determinants of final decisions. Responsibility for decisions remains with individuals or organizations using the system.

In digital systems, such tools are typically embedded within governance structures that define when recommendations can be acted upon and when additional review is required. This reflects an understanding that risks arise from how recommendations are used rather than from the tool itself.
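A governance rule of this kind can be sketched as a thin layer around a scoring model. In the example below, options are ranked by a weighted sum of criteria, and a narrow lead between the top two options routes the recommendation to additional review. The function names `rank_options` and `route`, the criteria, weights, option names, and the margin threshold are all hypothetical. The criteria values are assumed to be normalized so that higher is better.

```python
def rank_options(options, weights):
    """Score each option as a weighted sum of its criteria and rank them."""
    scored = []
    for name, criteria in options.items():
        score = sum(weights[key] * value for key, value in criteria.items())
        scored.append((name, score))
    return sorted(scored, key=lambda item: item[1], reverse=True)

def route(ranked, min_margin):
    """Apply a governance rule: a narrow lead triggers additional review."""
    (top, top_score), (_, runner_up_score) = ranked[0], ranked[1]
    margin = top_score - runner_up_score
    if margin >= min_margin:
        action = "present_recommendation"
    else:
        action = "escalate_for_review"
    # The result is advisory either way; no decision is taken here.
    return {"recommended": top, "margin": margin, "action": action}

# Hypothetical weights and options (assumptions for illustration).
weights = {"cost": 0.5, "risk": 0.5}
options = {
    "vendor_a": {"cost": 0.8, "risk": 0.6},
    "vendor_b": {"cost": 0.7, "risk": 0.6},
    "vendor_c": {"cost": 0.2, "risk": 0.4},
}
result = route(rank_options(options, weights), min_margin=0.1)
print(result)
```

The threshold `min_margin` is set by the organization, not by the scoring model. The same ranking could lead to a different action under a different governance rule.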

Educational frameworks stress that decision-support tools operate within institutional rules and accountability mechanisms. Their influence depends on workflow design, oversight practices, and the broader context in which they are deployed.

Decision Support Versus Decision Authority

Decision-support AI tools are designed to organize information, highlight patterns, or rank options, but they do not possess decision authority. Their role is limited to shaping how information is presented, not determining outcomes.

Confusion often arises when recommendations are mistaken for decisions. In practice, responsibility for decisions remains with the individuals or institutions that define decision criteria and act on recommendations. Governance structures exist to ensure that decision-support tools operate within defined boundaries and that accountability is preserved.

Understanding this distinction helps clarify why risks associated with decision-support systems are closely tied to workflow design rather than to the technical tool alone.

Overlap and Ambiguity Between AI Tool Categories

The functional categories described in this article are analytical constructs rather than rigid classifications. In practice, some AI tools may span multiple categories, such as systems that both generate content and rank options or tools that combine prediction with pattern recognition.

This overlap does not invalidate functional categorization but highlights its purpose. Categories are used to clarify dominant roles within systems, not to describe tools exhaustively. Recognizing ambiguity prevents oversimplification and supports more careful interpretation of AI claims and system descriptions.

By treating categories as interpretive tools rather than fixed labels, readers can better understand how complex AI systems are assembled and governed.

Functional Categories of AI Tools in Digital Systems

| Functional Category | Typical Output Form | Primary Limitation | Role Within Systems |
|---|---|---|---|
| Pattern Recognition | Labels, classifications | Lacks contextual understanding | Structures data for interpretation |
| Prediction & Forecasting | Probabilities, estimates | Inherent uncertainty | Informs planning under assumptions |
| Content Generation | Draft text or media | No independent validation | Produces provisional artifacts |
| Decision Support | Rankings or scores | No decision authority | Supports human judgment |

Conclusion

AI tools used in digital systems can be understood as bounded technical components designed for specific functions such as pattern recognition, prediction, content generation, or decision support. Each type operates within defined limits and depends on data, design choices, and contextual constraints.

This article has taken an educational approach to explaining types of AI tools without promoting specific technologies or outcomes. By distinguishing between functional categories, the article provides a conceptual framework for understanding how AI tools are positioned within broader digital systems and workflows.

These distinctions support descriptive discussion of AI capabilities and limitations. Rather than treating artificial intelligence as a single, monolithic concept, categorizing AI tools allows for more precise characterization consistent with institutional and standards-based documentation.

References

- World Economic Forum (WEF). Global AI Governance and Systems Reports. Discusses workflow design, organizational context, and risk-based approaches to AI deployment.
- National Institute of Standards and Technology (NIST). AI Risk Management Framework (AI RMF 1.0), 2023. Provides a process-level framework emphasizing governance, oversight, and risk management in AI systems.
- International Organization for Standardization (ISO). ISO/IEC 22989: Artificial Intelligence — Concepts and Terminology, 2022. Defines AI systems, components, and lifecycle concepts, distinguishing tools from system-level processes.
- Organisation for Economic Co-operation and Development (OECD). OECD AI Principles, 2019 (updated). Outlines human-centered values, accountability, and transparency as operationalized through governance structures.
- Institute of Electrical and Electronics Engineers (IEEE). Ethically Aligned Design: A Vision for Prioritizing Human Well-being with Autonomous and Intelligent Systems. Emphasizes socio-technical systems, human oversight, and responsibility beyond algorithmic performance.
- European Commission. Ethics Guidelines for Trustworthy AI. Explains human agency, oversight, and process accountability as core requirements for AI-enabled systems.