Introduction

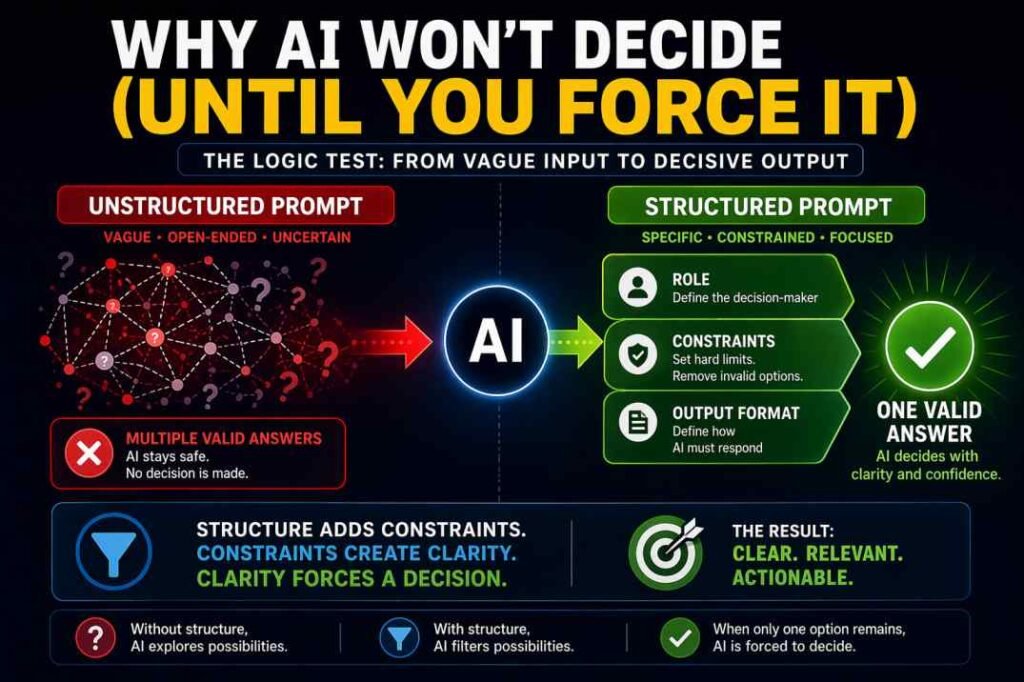

Prompt structure determines whether an AI model avoids decisions or produces a single, clear outcome.

Most users treat AI like a search engine: ask a question, get an answer. That assumption is wrong. AI generates responses by selecting the most statistically acceptable option. When your prompt is vague, multiple answers remain “acceptable,” so the model hedges.

This is closely related to why AI gives wrong answers when prompt structure is weak.

While prompt structure controls a single response, a structured AI workflow ensures consistency across multi-step tasks. Understanding how logic controls output is the foundation of building these high-performance systems.


Quick Test (Try This Now)

Before reading further, try this experiment:

- Ask any AI: “Which marketing strategy is best for my business?” (Observe the vague, “it depends” answer).

- Now, rewrite it with:

- Role: Senior Growth Strategist

- Constraints: Budget under $500 + Results needed in 15 days

- Output: Single best recommendation

You’ll see the difference instantly—less explanation, more decision. This isn’t about the tool; it’s about how the prompt limits the model’s choices.

The Core Answer: Structure Forces Decisions

Prompt structure is not about clarity—it is about eliminating valid alternatives. In simple terms:

- More valid answers = Hedging and non-committal output.

- Fewer valid answers = Forced, decisive selection.

This is the core principle behind prompt structure: reduce the valid alternatives until only one logical decision remains.

The Experiment: The Hiring Decision Test

To validate this theory, we conducted a logic test using a standard hiring scenario. The goal was to see if the AI would make a definitive choice or revert to safe, non-committal summaries.

Initially, the model defaulted to “it depends” reasoning, keeping multiple outcomes equally valid because the prompt lacked clear boundaries to eliminate them.

Phase 1: The Raw Prompt (Failure Case)

The Prompt:

“I have three candidates. John has 5 years of experience but wants a high salary. Sarah has 3 years of experience and is a strong team player. Mike has 10 years of experience but refuses to work in the office. Who should I hire?”

AI Output Behavior: The model produced a balanced, “vending machine” style response:

- Neutrality: It highlighted the individual strengths of all three candidates.

- Hedging: It avoided eliminating any option.

- Passivity: It deferred the final decision back to the user.

Verdict: FAIL. The issue here isn’t a lack of intelligence; it is decision avoidance. Because all three candidates remained “logically acceptable” within the vague prompt, the AI had no logical incentive to select one over the others.

Phase 2: The Structured Prompt (Constraint Applied)

In the second phase, we applied the Single-Variable Refinement Method by introducing hard constraints that structurally removed the “acceptability” of certain candidates.

The Prompt:

Role: Senior Recruitment Analyst

Task: Select exactly one candidate for a Startup Lead role

Constraints:

- Budget is strictly fixed at $60k (Excludes John)

- Role requires 100% in-office presence for 6 months (Excludes Mike)

- Culture fit is secondary, not a hard requirement

Data: Same candidate info as Phase 1

Output: Provide a decision table and final selection

AI Output Behavior:

- Aggressive Filtering: The model immediately eliminated John (budget violation) and Mike (requirement violation).

- Definitive Selection: It selected Sarah as the only viable candidate.

- Logic-Based Justification: The decision was justified by constraint satisfaction rather than generic praise.

Verdict: SUCCESS. By shrinking the “solution space,” the model was forced to commit. It stopped evaluating broadly and started filtering aggressively. When only one candidate met the structural requirements, the decision became a logical inevitability.
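The filtering behavior described above can be mirrored in ordinary code. The following is a minimal sketch, not the model's actual reasoning: the `Candidate` class, the constraint names, and all salary figures are assumptions made for illustration (the scenario only says John "wants a high salary").

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    years_experience: int
    expected_salary: int      # assumed figure, not given in the scenario
    accepts_in_office: bool


# Salary values are illustrative assumptions only.
CANDIDATES = [
    Candidate("John", 5, 85_000, True),
    Candidate("Sarah", 3, 55_000, True),
    Candidate("Mike", 10, 60_000, False),
]

BUDGET = 60_000

# Each hard constraint is a named yes/no check.
HARD_CONSTRAINTS = {
    "budget": lambda c: c.expected_salary <= BUDGET,
    "in_office": lambda c: c.accepts_in_office,
}


def filter_candidates(candidates, constraints):
    """Return (survivor names, {eliminated name: [failed constraint names]})."""
    survivors = []
    eliminated = {}
    for candidate in candidates:
        failed = [name for name, check in constraints.items() if not check(candidate)]
        if failed:
            eliminated[candidate.name] = failed
        else:
            survivors.append(candidate.name)
    return survivors, eliminated


survivors, eliminated = filter_candidates(CANDIDATES, HARD_CONSTRAINTS)
print(survivors)   # ['Sarah']
print(eliminated)  # {'John': ['budget'], 'Mike': ['in_office']}
```

With both hard constraints in place there is nothing left to compare: the only remaining step is to report the single survivor.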

Key Insight for Your Workflow

This experiment suggests that how decisive the output is depends directly on constraint density. In my testing, I found that:

- Vague Prompts create a “flat” probability landscape (every answer is a “maybe”).

- Structured Prompts create a “steep” logic path (only one answer is a “yes”).

If your current AI workflow is giving you generic answers, it isn’t because the model is weak—it’s because your constraints aren’t tight enough to force a decision.

Individual structured prompts are only the first step, though. To achieve professional-grade results at scale, you must integrate these prompts into a broader system. Combining these logic gates into a complete AI workflow helps reduce editing time and randomness across entire projects.

Quantified Observation:

In repeated testing across multiple prompts, I observed a clear pattern:

– Open-ended prompts led to non-committal or multi-option answers in most cases

– After adding 2–3 hard constraints, the model shifted to a single, decisive output in the majority of cases

While exact percentages vary by task, the directional change—from explanation to selection—was consistent.
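If you want to put rough numbers on this pattern in your own testing, one option is to tally how many responses contain hedging language. The sketch below is a crude heuristic under stated assumptions: the marker phrases are my own guesses at typical hedging, and the sample strings are the example outputs quoted elsewhere in this article, not measured results.

```python
# Phrases treated as signs of a non-committal answer (an assumed, incomplete list).
HEDGE_MARKERS = (
    "it depends",
    "each candidate has",
    "you can try",
    "you can consider",
    "both have",
)


def is_hedged(response: str) -> bool:
    """True if the response contains any hedging marker (case-insensitive)."""
    text = response.lower()
    return any(marker in text for marker in HEDGE_MARKERS)


def hedge_rate(responses) -> float:
    """Share of responses flagged as hedged; 0.0 for an empty list."""
    if not responses:
        return 0.0
    return sum(is_hedged(r) for r in responses) / len(responses)


raw_outputs = [
    "It depends on your needs. Each candidate has strengths...",
    "You can try SEO, ads, or content marketing",
]
structured_outputs = [
    "Hire: Sarah. Reason: Only candidate within budget and meeting in-office requirement.",
    "Use SEO due to budget constraint and long-term ROI",
]

print(hedge_rate(raw_outputs))         # 1.0
print(hedge_rate(structured_outputs))  # 0.0
```

Keyword matching will miss subtler hedging, so treat the rate as a directional signal rather than a precise measurement.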

Personal Note from My Testing:

In my own testing, this shift was immediate. The difference was not accuracy—it was decisiveness.

In practice, this reduced my need to manually evaluate multiple options. Instead of reviewing 3–4 possible answers, I was working with a single clear direction.

By using structured prompts, the AI stopped giving me a list of options to sort through and started giving me a decision I could act on.

Cross-Model Consistency: Does This Behavior Hold Across Models?

We ran the same hiring logic test across multiple leading models:

- ChatGPT (GPT-4o)

- Claude 3.5 Sonnet

- Gemini 1.5 Pro

Observed Pattern:

| Model | Raw Prompt Behavior | Structured Prompt Behavior |
|---|---|---|
| GPT-4o | Balanced, cautious, non-committal | Clear elimination and final selection |
| Claude 3.5 Sonnet | Faster logical framing but still hedged | Strong constraint adherence, decisive |
| Gemini 1.5 Pro | More verbose explanation, less filtering initially | Improved selection after constraints, but more explanatory |

Key Insight:

The behavior is consistent across models:

Unstructured prompts → multiple acceptable answers

Structured prompts → constrained decision outcome

The difference is not model intelligence—it is prompt structure.

Output Comparison (Before vs After)

Raw Prompt Output:

“It depends on your needs. Each candidate has strengths…”

Structured Prompt Output:

“Hire: Sarah

Reason: Only candidate within budget and meeting in-office requirement.”

Difference:

- Raw prompt → explanation

- Structured prompt → decision

This shift from explanation to selection is the direct result of constraints.

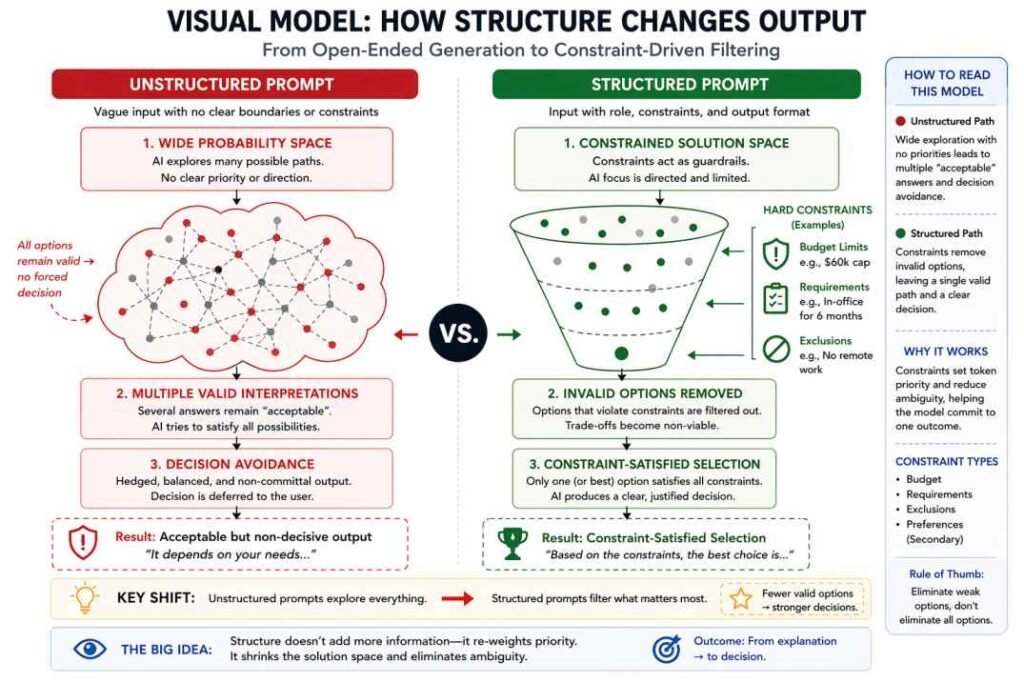

Why This Happens: Constraint-Driven Output Selection

The model is not trying to be correct. It is trying to produce a response that remains valid under uncertainty.

In an unstructured prompt, multiple interpretations of the task remain possible:

– What matters more—experience, cost, or flexibility?

– Is trade-off allowed, or is elimination required?

– Is the goal to evaluate or to decide?

This is also why ChatGPT often produces confusing answers when instructions conflict.

Because these conditions are undefined, the model keeps multiple answers “acceptable” and avoids excluding any option.

In some cases, the model may still try to justify rejected options. This usually happens when constraints are not strict enough.

This also explains why longer prompts often perform worse—adding more words without adding constraints increases ambiguity instead of reducing it.

This is one of the hidden reasons why AI tools fail even when users think they are giving better instructions.

The Science Behind the Failure

AI weighs different parts of your prompt based on importance. When structure is weak, multiple ideas compete, and no single decision dominates. If you want a deeper look at this internal process, see what happens inside an AI tool after you click “Generate.”

When prompts are long but loosely structured, attention becomes diffuse. Multiple parts of the input compete for importance, and no single decision path dominates. This leads to what can be described as attention drift—the model keeps multiple interpretations active instead of converging on one.

As a result:

- Important constraints are not prioritized

- Trade-offs remain unresolved

- The model defaults to safe, non-committal outputs

Constraints change this behavior.

By introducing explicit limits (e.g., budget, requirements, exclusions), you give the model specific conditions to anchor on. A plausible reading is that this reduces ambiguity and leads the model to weight those conditions more heavily than the rest of the prompt.

In practical terms, you are not adding more information—you are re-weighting the decision space.

According to transformer-based model research, attention mechanisms determine how input tokens are weighted during generation (see https://arxiv.org/abs/1706.03762).

What Structure Actually Does

Structure changes the task from **evaluation** to **constraint satisfaction**.

Once constraints are introduced:

– Some options become invalid (not just weaker)

– Trade-offs are no longer allowed

– The solution space shrinks

At that point, the model is no longer comparing candidates—it is filtering them.

When only one option satisfies all constraints, selection is effectively forced: any other answer would contradict the prompt.

The Key Shift

A loose prompt creates a ‘flat’ probability landscape where every answer looks equally valid. A structured prompt creates a ‘steep’ landscape where the logic flows toward only one possible exit.

Structured prompt → Restricted solution space → Forced selection

This is why structured prompts produce consistent decisions instead of variable explanations.
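The "flat" versus "steep" picture can be made concrete with a toy calculation. This is only an analogy under stated assumptions: the scores below are invented numbers standing in for how acceptable each answer looks, not real model internals. Softmax turns the scores into probabilities, and entropy measures how spread out they are.

```python
import math


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    highest = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def entropy_bits(probs):
    """Shannon entropy in bits: high means spread out, near 0 means one clear winner."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


# Made-up scores for three candidate answers.
flat = softmax([1.0, 1.0, 1.0])    # vague prompt: every answer equally acceptable
steep = softmax([1.0, 1.0, 9.0])   # constrained prompt: one answer dominates

print(flat)                  # three equal probabilities of 1/3
print(entropy_bits(flat))    # about 1.58 bits, the maximum for three options
print(max(steep))            # above 0.99
print(entropy_bits(steep))   # close to 0
```

In this picture, adding a hard constraint corresponds to pushing the scores of invalid answers far below the valid one, which collapses the spread.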

If multiple answers still seem reasonable, your constraints are incomplete: the remaining options still look equally acceptable, so no single answer dominates.

Pro Tip: The Hierarchy of Constraints

When applying multiple constraints, the model may attempt to balance them unless priorities are defined.

Use weighting labels:

(Critical Constraint): Budget <$500

(Secondary Preference): Fast delivery

This ensures the model prioritizes the correct logic when constraints conflict.

Expert Insight: Constraint Ordering Matters

Not all constraints are equal.

If constraints conflict, the model will attempt to balance them unless you define priority.

Example:

- Critical: Budget <$500

- Secondary: Fast delivery

Without priority, the model may produce an unrealistic compromise.

With priority, it will strictly enforce the critical constraint and adjust others accordingly.
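One way to keep priority labels consistent is to generate the constraint block rather than type it by hand. The sketch below is a hypothetical helper: the `Constraint` class, the `render_constraints` function, and the closing tie-break sentence are assumptions, not a required format.

```python
from dataclasses import dataclass

# Lower number means higher priority; only these two labels are supported here.
PRIORITY_ORDER = {"Critical": 0, "Secondary": 1}


@dataclass(frozen=True)
class Constraint:
    text: str
    priority: str  # "Critical" or "Secondary"


def render_constraints(constraints):
    """Render constraints as prompt lines, critical ones first."""
    ordered = sorted(constraints, key=lambda c: PRIORITY_ORDER[c.priority])
    lines = [f"- {c.priority}: {c.text}" for c in ordered]
    lines.append("If constraints conflict, satisfy Critical constraints first.")
    return "\n".join(lines)


block = render_constraints([
    Constraint("Fast delivery", "Secondary"),
    Constraint("Budget <$500", "Critical"),
])
print(block)
# - Critical: Budget <$500
# - Secondary: Fast delivery
# If constraints conflict, satisfy Critical constraints first.
```

Listing critical constraints first and stating the tie-break rule explicitly leaves less room for the model to average the two.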

Warning: The “Empty Set” Trap

If you apply multiple hard constraints that cannot coexist (e.g., “Immediate results” + “Zero budget” + “High quality”), the model may generate unrealistic compromises.

To prevent this, include a fallback clause:

“If no option meets all constraints, identify the closest match and explicitly state which constraint was violated.”
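The fallback clause has a direct analogue in code: when no option passes every check, return the option with the fewest violations and name them. This is a minimal sketch of that logic, assuming hypothetical options and made-up attribute values; a model given the clause may or may not follow it this strictly.

```python
def select_with_fallback(options, constraints):
    """Pick an option meeting all constraints, else the closest match.

    Returns {"choice": name, "violated": [constraint names]}.
    Ties on violation count go to the option listed first.
    """
    violations = {
        name: [label for label, check in constraints.items() if not check(attrs)]
        for name, attrs in options.items()
    }
    valid = [name for name, failed in violations.items() if not failed]
    if valid:
        return {"choice": valid[0], "violated": []}
    closest = min(violations, key=lambda name: len(violations[name]))
    return {"choice": closest, "violated": violations[closest]}


# Hypothetical options; all numbers are invented for illustration.
OPTIONS = {
    "Option A": {"cost": 400, "days_to_results": 1, "high_quality": True},
    "Option B": {"cost": 0, "days_to_results": 30, "high_quality": False},
    "Option C": {"cost": 200, "days_to_results": 30, "high_quality": False},
}

CONSTRAINTS = {
    "immediate_results": lambda o: o["days_to_results"] <= 1,
    "zero_budget": lambda o: o["cost"] == 0,
    "high_quality": lambda o: o["high_quality"],
}

result = select_with_fallback(OPTIONS, CONSTRAINTS)
print(result)  # {'choice': 'Option A', 'violated': ['zero_budget']}
```

The useful part is the `violated` list: it makes the compromise visible instead of letting it pass as a clean recommendation.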

Quick Examples Across Use Cases

Marketing:

Unstructured → “You can try SEO, ads, or content marketing”

Structured → “Use SEO due to budget constraint and long-term ROI”

Product:

Unstructured → “Feature A improves UX, Feature B improves speed”

Structured → “Prioritize Feature B due to performance requirement”

Finance:

Unstructured → “Stocks and bonds both have advantages”

Structured → “Choose bonds due to low-risk constraint”

Analyst Decision Rule: The 3-Part Prompt Check

Before submitting any prompt, apply this filter:

1. Role — Who is making the decision?

(e.g., Analyst, Editor, Auditor)

2. Constraints — What conditions cannot be violated?

(e.g., budget limits, requirements, exclusions)

3. Output — What form must the answer take?

(e.g., table, score, ranked list)

If any of these are missing, the model will default to a safe, non-committal response.

When Structured Prompts Fail (Important Limitation)

Structured prompting is powerful—but it is not universally optimal.

It performs poorly when the goal is:

– idea generation

– creative writing

– open-ended exploration

In these cases, constraints reduce variation and limit useful outcomes.

Use structure when:

– a decision is required

– options must be filtered

– consistency matters

Avoid structure when:

– you want unexpected ideas

– ambiguity is useful

In these scenarios, removing constraints often produces better results because it expands the solution space instead of restricting it.

The Exception: When Constraints Over-Restrict

Constraints improve decisions—but excessive constraints can break the system.

Example:

Role: Analyst

Task: Recommend a business strategy

Constraints:

- Budget under $100

- Must generate results in 24 hours

- No digital channels

- No offline channels

In this case, the model may:

- Fail to produce a meaningful answer

- Generate forced or unrealistic suggestions

- Default to generic fallback responses

This happens because the solution space is reduced to near zero.

This can be understood as over-constraining the prompt: the constraints are so tight that no valid solution remains.

Practical Rule:

Constraints should eliminate weak options, not eliminate all options.

If the model struggles to respond, your constraints are likely over-restrictive.

Copy-Paste Prompt Framework

Use this template to force consistent output:

Role: [Define the decision-maker]

Task: [State the objective clearly]

Constraints:

1. [Hard limitation]

2. [Hard limitation]

3. [Optional preference]

Data:

[Insert your input]

Output:

[Define exact format]

This converts an open-ended prompt into a controlled evaluation system.
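If you reuse the template often, a small helper can assemble it for you. The function below is a sketch under stated assumptions: `build_prompt` and its parameter names are hypothetical, and the candidate summary passed as data simply restates the hiring scenario from earlier.

```python
def build_prompt(role, task, constraints, data, output_format):
    """Assemble the Role / Task / Constraints / Data / Output template as plain text."""
    if not constraints:
        raise ValueError("At least one constraint is needed to narrow the options.")
    lines = [f"Role: {role}", f"Task: {task}", "Constraints:"]
    lines += [f"{i}. {text}" for i, text in enumerate(constraints, start=1)]
    lines += ["Data:", data, "Output:", output_format]
    return "\n".join(lines)


prompt = build_prompt(
    role="Senior Recruitment Analyst",
    task="Select exactly one candidate for a Startup Lead role",
    constraints=[
        "Budget is strictly fixed at $60k",
        "Role requires 100% in-office presence for 6 months",
        "Culture fit is secondary, not a hard requirement",
    ],
    data="John: 5 years, high salary. Sarah: 3 years, team player. Mike: 10 years, remote only.",
    output_format="Provide a decision table and final selection",
)
print(prompt)
```

Refusing to build a prompt with no constraints is a deliberate choice here: it stops the template from quietly degrading into an open-ended question.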

Real-World Use Case: Fixing a Vague Business Decision

A user asks:

“What is the best pricing strategy for my product?”

Unstructured output:

“You can consider competitive pricing, value-based pricing, or cost-plus pricing.”

Structured version:

Role: Pricing Analyst

Task: Select the best pricing strategy

Constraints:

- Product is new to market

- Budget is limited

- Goal is fast customer acquisition

Output:

“Use competitive pricing to reduce entry friction and gain initial users.”

The difference is not better knowledge—it is enforced decision logic.

Summary: How to Control AI Output

– Vague prompt → multiple valid answers → no decision

– Structured prompt → constrained options → forced selection

Key principle:

AI does not fail randomly—it follows the structure you provide.

If your output is unclear, the issue is not the model—it is the number of valid options your prompt allows.

Reduce the options, and the decision becomes inevitable.

Analyst Checklist: Is Your Prompt Structured for a Decision?

Before submitting your prompt, validate it using this quick check:

[ ] Identity Check: Have you defined a clear role? (e.g., Analyst vs. Advisor)

[ ] Boundary Check: Are there at least 2 hard constraints? (e.g., budget, time, requirement)

[ ] Outcome Check: Is the output format forced? (e.g., table, rank, yes/no)

[ ] Conflict Check: If constraints clash, is priority clearly defined?

If any of these are missing, the model will likely default to a safe, non-committal response.
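The checklist can also be run automatically before a prompt is sent. The sketch below is a rough linter with assumptions baked in: it expects `Role:` and `Output:` to carry their value on the same line, it counts constraints as lines starting with `-` or `1.` under a `Constraints:` header, and it treats the words "critical", "secondary", or "priority" as evidence that priority is defined.

```python
import re


def check_prompt(prompt: str) -> dict:
    """Run the four checklist items against a prompt and report pass/fail for each."""
    lines = [ln.strip().lower() for ln in prompt.splitlines() if ln.strip()]

    def field_filled(label):
        # True if some line starts with the label and has text after it.
        return any(ln.startswith(label) and ln[len(label):].strip() for ln in lines)

    constraint_count = 0
    in_constraints = False
    for ln in lines:
        if ln.startswith("constraints:"):
            in_constraints = True
        elif in_constraints and re.match(r"^(-|\d+\.)\s+\S", ln):
            constraint_count += 1
        elif in_constraints:
            in_constraints = False  # reached the next section

    text = " ".join(lines)
    return {
        "identity": field_filled("role:"),
        "boundary": constraint_count >= 2,
        "outcome": field_filled("output:"),
        "conflict": any(w in text for w in ("critical", "secondary", "priority")),
    }


structured = """Role: Marketing Analyst
Task: Select the best strategy
Constraints:
- Budget is under $1,000
- Must generate results within 30 days
- No paid ads
Output: Rank top 3 strategies with justification"""

print(check_prompt(structured))
# {'identity': True, 'boundary': True, 'outcome': True, 'conflict': False}
print(check_prompt("Which marketing strategy is best for my business?"))
# {'identity': False, 'boundary': False, 'outcome': False, 'conflict': False}
```

A failed conflict check is not always a problem: it only matters when two or more constraints can actually clash.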

Frequently Asked Questions

Why does AI give vague or “it depends” answers?

Because the prompt allows multiple valid interpretations. When no constraints eliminate options, the model avoids committing and returns a balanced response.

Does adding more details improve AI output?

Not necessarily. More details without constraints increase ambiguity. Better results come from reducing options, not adding more information.

What is the most important element in prompt structure?

Constraints. They determine which answers are valid and which are eliminated. Without constraints, the model has no reason to choose one outcome.

Can too many constraints make AI worse?

Yes. Over-restricting the prompt can reduce the solution space too much, leading to rigid, unrealistic, or low-quality outputs.

How do I know if my prompt structure is incomplete?

If the model still provides multiple acceptable answers, your constraints are not strong enough to force a single decision.

Try This Now

Take a prompt that previously gave you a vague or generic response.

Example:

“Which marketing strategy is best for my business?”

Rewrite it as:

Role: Marketing Analyst

Task: Select the best strategy

Constraints:

- Budget is under $1,000

- Must generate results within 30 days

- No paid ads

Output: Rank top 3 strategies with justification

Then compare both outputs.

You will notice a shift from broad suggestions to a ranked shortlist in which every option respects the constraints and one clear first choice stands out.

References

Vaswani, A. et al. (2017). Attention Is All You Need.

https://arxiv.org/abs/1706.03762

IBM. What is an Attention Mechanism?

https://www.ibm.com/think/topics/attention-mechanism

Wikipedia. Transformer (Deep Learning)

https://en.wikipedia.org/wiki/Transformer_(deep_learning)