Introduction

AI tools and AI models are frequently discussed together. Although closely related, they function at separate architectural layers. Interpreting a system clearly requires distinguishing the analytical component from the deployment environment, particularly when examining accountability allocation, lifecycle oversight, and risk boundaries.

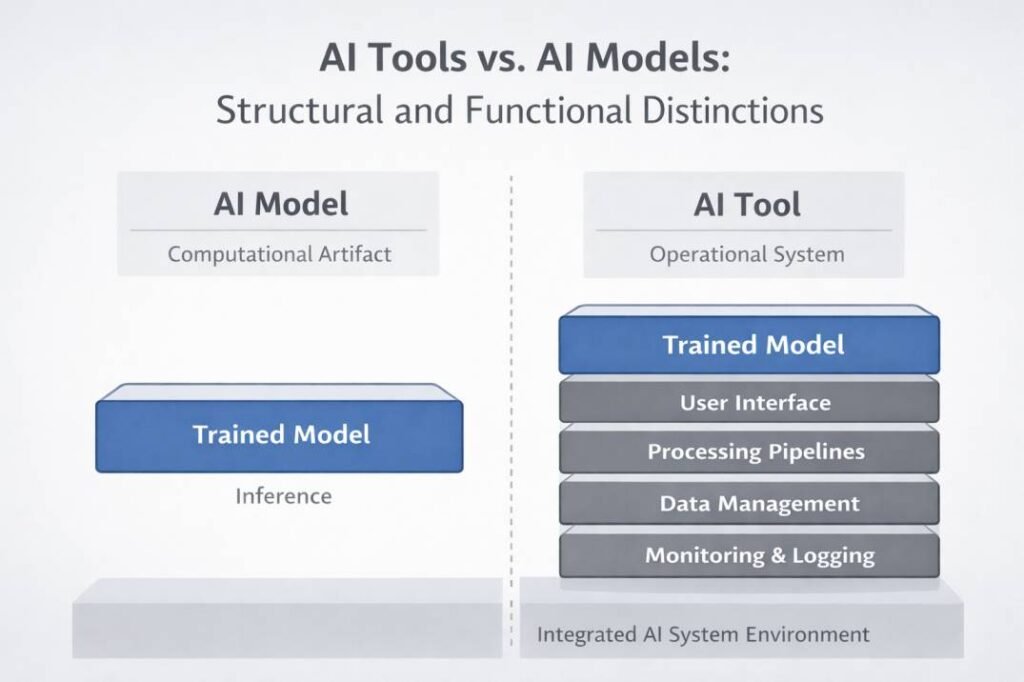

An AI model is a trained mathematical structure that produces outputs from learned data patterns. An AI tool, by contrast, represents a system-level implementation that embeds one or more models within data pipelines, execution environments, interfaces, monitoring layers, and governance controls.

Because deployed platforms are often presented as unified products, the analytical core and the operational wrapper can appear indistinguishable. The discussion below separates these layers across ontology, processing logic, architectural embedding, workflow positioning, control authority, governance structures, and constraint domains.

This layered interpretation also informs related comparisons, including AI tools versus automation systems and tools versus standalone machine learning models.

Why the Distinction Matters

Distinguishing models from tools clarifies system documentation, governance allocation, and architectural interpretation. When analytical and operational layers are conflated, responsibility mapping and risk attribution become difficult to trace within technical records. Keeping the layers separate makes design accountability explicit, strengthens compliance traceability, and improves workflow interpretation.

Conceptual Definitions

AI Models

An AI model is a computational structure trained to perform inference tasks such as classification, regression, prediction, or generation. Models are typically represented as parameterized mathematical functions derived from training data through optimization procedures. Their primary function is analytical: transforming input data into structured outputs according to learned relationships.

In isolation, a model operates independently of deployment context. It does not inherently include user interfaces, workflow controls, monitoring systems, or governance documentation. Instead, it represents the analytical core of an inference process.

AI Tools

At the system level, tools function as implementations that deploy one or more AI models within an operational environment. In addition to the embedded model, a tool typically includes data ingestion mechanisms, preprocessing pipelines, execution environments, user interfaces, integration connectors, and oversight controls.

Unlike standalone models, AI tools are designed to function within workflows. They coordinate how input data is received, how models are invoked, how outputs are delivered, and how system-level controls govern execution and traceability.

Ontological Status: Artifact vs System

Beyond functional differences, AI models and AI tools differ in ontological status within system design.

An AI model is a computational artifact: a trained mathematical object that can be stored, versioned, replicated, or transferred across infrastructures without altering its internal analytical structure.

An AI tool, by contrast, constitutes a socio-technical system. It includes computational components alongside configuration settings, access controls, interface layers, logging mechanisms, and human interaction points. Its behavior is shaped not only by embedded models but also by operational context and organizational policy.

The distinction positions the model as an analytical entity and the tool as the operational system that embeds and governs it. In practical documentation environments, models are often versioned as discrete artifacts in repositories, while deployment configurations are tracked separately in infrastructure management systems. This separation formalizes the difference between analytical artifacts and operational systems within documentation records.

When models and tools are documented at different abstraction levels, governance boundaries become easier to trace. Architectural diagrams can then distinguish computational logic from operational control without conflating responsibilities.

Abstraction Hierarchy in AI System Design

The distinction becomes more visible when examined through abstraction levels.

At the model layer, abstraction refers to a parameterized mathematical structure optimized over training data.

At the tool layer, abstraction concerns how computational components are organized, invoked, monitored, and governed within an operational system.

A trained model file may remain unchanged while the surrounding deployment configuration is modified to introduce new logging policies or access controls. In such cases, the model artifact remains stable, while the tool layer evolves to reflect operational requirements.
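The scenario above can be sketched in a few lines. This is a minimal illustration, assuming the model file can be stood in for by a serialized parameter dictionary and the deployment configuration by a plain dictionary. The names `fingerprint` and `deployment_record`, and the configuration keys, are hypothetical and not taken from any particular platform.

```python
import hashlib
import json


def fingerprint(payload: bytes) -> str:
    """Return a SHA-256 hex digest used to detect any change in the payload."""
    return hashlib.sha256(payload).hexdigest()


# Hypothetical serialized model parameters (a stand-in for a model file).
model_bytes = json.dumps(
    {"weights": [0.5, -0.25], "bias": 0.0}, sort_keys=True
).encode()

# Two hypothetical deployment configurations: the second adds stricter
# logging and a new access role, while the model is left untouched.
config_v1 = {"log_level": "info", "allowed_roles": ["analyst"]}
config_v2 = {"log_level": "debug", "allowed_roles": ["analyst", "auditor"]}


def deployment_record(model_payload: bytes, config: dict) -> dict:
    """Track the model artifact and the tool configuration separately."""
    return {
        "model_sha256": fingerprint(model_payload),
        "config_sha256": fingerprint(json.dumps(config, sort_keys=True).encode()),
    }


before = deployment_record(model_bytes, config_v1)
after = deployment_record(model_bytes, config_v2)

print(before["model_sha256"] == after["model_sha256"])    # True: model unchanged
print(before["config_sha256"] == after["config_sha256"])  # False: tool layer changed
```

A stable model fingerprint alongside a changed configuration fingerprint is the kind of evidence that allows a change to be attributed to the tool layer alone.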

Comparison Table

| Dimension | AI Models | AI Tools |
|---|---|---|
| Core Nature | Mathematical inference structure | Operational system implementation |
| Primary Function | Generate analytical outputs | Deploy and manage model execution |
| Architectural Scope | Computational artifact | Multi-layer system including infrastructure |
| Workflow Role | Analytical core | Embedded workflow component |
| Governance Focus | Validation, retraining, drift monitoring | Deployment oversight, configuration control |
| Dependency Type | Statistical representativeness | Infrastructure and integration reliability |
| Control Authority | Produces outputs | Manages invocation and routing |
| Failure Mode | Analytical error | Operational or execution error |

The table describes abstraction layers, not differences in capability or technological sophistication. Neither component subsumes the other; they operate at different abstraction levels.

Core Processing Logic

Processing logic introduces an additional structural axis of differentiation. An AI model performs inference by applying learned parameters to input data. Its internal logic is defined by mathematical transformations derived during training.

An AI tool does not introduce new analytical reasoning beyond the embedded model. Instead, it governs when and how inference occurs. Tool-level processing involves orchestration: triggering execution, validating inputs, formatting outputs, logging results, and integrating outputs into larger workflows.

Inference originates within the model, whereas execution management and orchestration reside within the tool.
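The division of labor described above can be illustrated with a short sketch. It assumes a toy logistic function with hand-picked weights in place of a trained model. The names `model_predict` and `InferenceTool` are hypothetical. The point is only that the tool adds validation, invocation, formatting, and logging without adding analytical logic of its own.

```python
import math


def model_predict(features, weights=(0.5, -0.25), bias=0.0):
    """Model layer: inference only (toy logistic function, assumed weights)."""
    z = sum(w * x for w, x in zip(weights, features)) + bias
    return 1.0 / (1.0 + math.exp(-z))


class InferenceTool:
    """Tool layer: orchestration around an unchanged model function."""

    def __init__(self, predict, n_features):
        self.predict = predict
        self.n_features = n_features
        self.audit_log = []

    def run(self, features):
        # 1. Validate the input before any inference happens.
        if len(features) != self.n_features:
            raise ValueError(f"expected {self.n_features} features")
        # 2. Trigger execution of the embedded model.
        score = self.predict(features)
        # 3. Format the raw output for downstream consumers.
        response = {"score": round(score, 3), "status": "ok"}
        # 4. Log the result for traceability.
        self.audit_log.append(response)
        return response


tool = InferenceTool(model_predict, n_features=2)
print(tool.run([2.0, 4.0]))  # {'score': 0.5, 'status': 'ok'}
```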

Differences in System Architecture

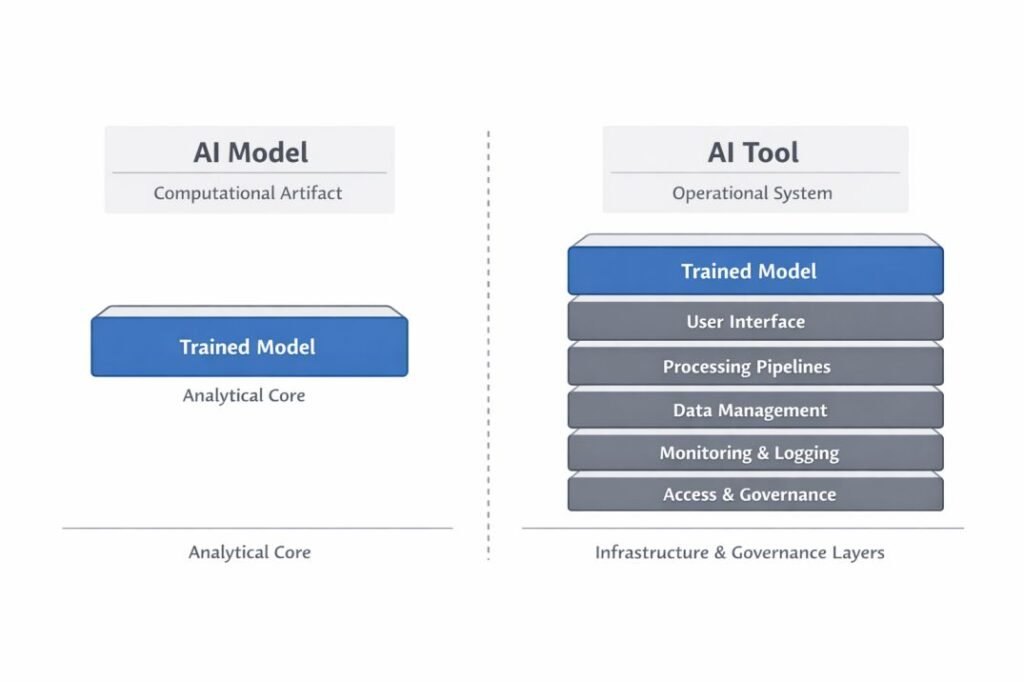

Architectural scope further differentiates the computational artifact from its deployment framework.

A model represents a self-contained computational artifact composed of learned parameters and inference logic. By itself, it does not define data sourcing, storage mechanisms, runtime environments, or execution governance.

At the architectural level, tools operate as multi-layer system implementations. They incorporate models alongside preprocessing layers, runtime engines, interface abstractions, monitoring subsystems, and integration connectors. These components enable models to function within operational infrastructures.

Architectural complexity is concentrated at the tool layer rather than within the model artifact itself.

Interface Contract and Encapsulation

AI tools typically encapsulate AI models behind defined interface contracts. These contracts specify input schema, output format, invocation conditions, and error handling protocols.

Encapsulation ensures that changes to model parameters or architecture do not necessarily disrupt external system components. The tool layer mediates interactions between inference logic and surrounding systems.

Models, in contrast, do not inherently define interface governance. They assume structured input and generate output but do not manage interoperability requirements.

The separation aligns with established principles of modular system design and encapsulation.
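An interface contract of this kind might look like the following sketch. It is illustrative only: the class names `ScoringEndpoint`, `ScoreResponse`, and `ContractError`, the `features` payload key, and the two stand-in models are assumptions made for the example rather than part of any standard or library.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


class ContractError(Exception):
    """Raised when a request or a model output violates the contract."""


@dataclass(frozen=True)
class ScoreResponse:
    """Output format promised to callers, whatever model sits behind it."""
    score: float
    model_version: str


class ScoringEndpoint:
    def __init__(self, model: Callable[[Sequence[float]], float],
                 version: str, n_features: int):
        self._model = model
        self._version = version
        self._n_features = n_features

    def handle(self, payload: dict) -> ScoreResponse:
        # Input schema: {"features": [number, ...]} with a fixed length.
        features = payload.get("features")
        if not isinstance(features, list) or len(features) != self._n_features:
            raise ContractError(
                f"'features' must be a list of {self._n_features} numbers")
        if any(isinstance(x, bool) or not isinstance(x, (int, float))
               for x in features):
            raise ContractError("'features' must contain only numbers")
        score = float(self._model(features))
        # Output guarantee: callers always receive a score in [0, 1].
        if not 0.0 <= score <= 1.0:
            raise ContractError("model output outside [0, 1]")
        return ScoreResponse(score=score, model_version=self._version)


# Two stand-in models behind the same contract; the request and response
# shapes seen by callers do not change when one replaces the other.
endpoint_v1 = ScoringEndpoint(lambda f: 0.2, version="v1", n_features=2)
endpoint_v2 = ScoringEndpoint(lambda f: min(1.0, sum(f) / 10),
                              version="v2", n_features=2)

print(endpoint_v1.handle({"features": [1, 2]}))
print(endpoint_v2.handle({"features": [1, 2]}))
```

Because validation and error handling live in the endpoint, swapping the model changes only the returned score and version label, not the shape of the exchange.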

Interface Abstraction Layer

AI tools frequently introduce abstraction layers that decouple user interaction from model complexity. These interfaces translate raw model outputs into structured system responses and integrate inference into workflow logic.

Models themselves do not define such abstraction. They generate outputs but do not prescribe how those outputs are presented, validated, or consumed within system environments.

Differences in Workflow Placement

Workflow analysis further clarifies how models and tools occupy different positions within structured processes.

AI tools span multiple workflow stages. They may handle data validation, inference invocation, output routing, logging, escalation, and integration with downstream systems.

The model contributes a discrete analytical function, whereas the tool governs how that function interacts with surrounding process stages.

For example, a natural language model may generate a sentiment score for a text input. The AI tool deploying it may define how that score is logged, displayed, or integrated into downstream analytics systems. The inference logic remains contained within the model, while execution management resides within the tool.

In a fraud detection system, a model may generate a probability score indicating transaction risk. The surrounding tool defines whether that score results in automatic account suspension, routing to human review, or passive logging for audit purposes. The model performs inference; the tool enforces operational policy within defined control thresholds.
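The fraud detection example can be made concrete with a small routing sketch. The threshold values (0.6 and 0.9), the `Action` names, and the `route` function are hypothetical choices for illustration. In practice such thresholds would be set by organizational policy.

```python
from enum import Enum


class Action(Enum):
    LOG_ONLY = "log_only"
    HUMAN_REVIEW = "human_review"
    SUSPEND_ACCOUNT = "suspend_account"


def route(score: float, review_at: float = 0.6, suspend_at: float = 0.9) -> Action:
    """Tool-layer policy: map a model score to an operational action."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be a probability in [0, 1]")
    if score >= suspend_at:
        return Action.SUSPEND_ACCOUNT
    if score >= review_at:
        return Action.HUMAN_REVIEW
    return Action.LOG_ONLY


score = 0.7  # stand-in for the fraud model's output

# Same model output, two different policies: only the tool layer changed.
print(route(score))                   # routed to human review
print(route(score, suspend_at=0.65))  # routed to account suspension
```

The model's score is identical in both calls. The different outcomes come entirely from the policy thresholds held in the tool layer.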

Data Flow and Control Flow Distinction

Within workflow computation, models operate primarily along data flow pathways. They transform structured input into analytical output.

AI tools operate across both data flow and control flow. In addition to managing data transformations, they define conditional logic that governs when inference occurs and how outputs influence subsequent workflow stages.

This distinction places computational transformation at the model layer and process coordination at the deployment layer.
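A brief sketch may help separate the two flows. It assumes a toy keyword counter in place of a real sentiment model. The record fields `text` and `lang`, the status labels, and the stage names are hypothetical. The model function only transforms data, while the surrounding function decides whether inference runs and what happens next.

```python
POSITIVE = {"good", "great", "excellent"}
NEGATIVE = {"bad", "poor", "terrible"}


def sentiment_model(text: str) -> int:
    """Data flow only: text in, score out (toy keyword counter)."""
    words = text.lower().split()
    return sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)


def process(record: dict) -> dict:
    """Tool layer: control flow decides whether inference runs at all."""
    text = record.get("text", "").strip()
    if not text:
        return {"status": "skipped_empty"}
    if record.get("lang") != "en":
        return {"status": "skipped_unsupported_language"}
    score = sentiment_model(text)
    # Control flow again: the output selects the next workflow stage.
    next_stage = "escalate" if score < 0 else "archive"
    return {"status": "scored", "score": score, "next_stage": next_stage}


print(process({"text": "great service but poor packaging", "lang": "en"}))
print(process({"text": "   ", "lang": "en"}))
print(process({"text": "sehr gut", "lang": "de"}))
```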

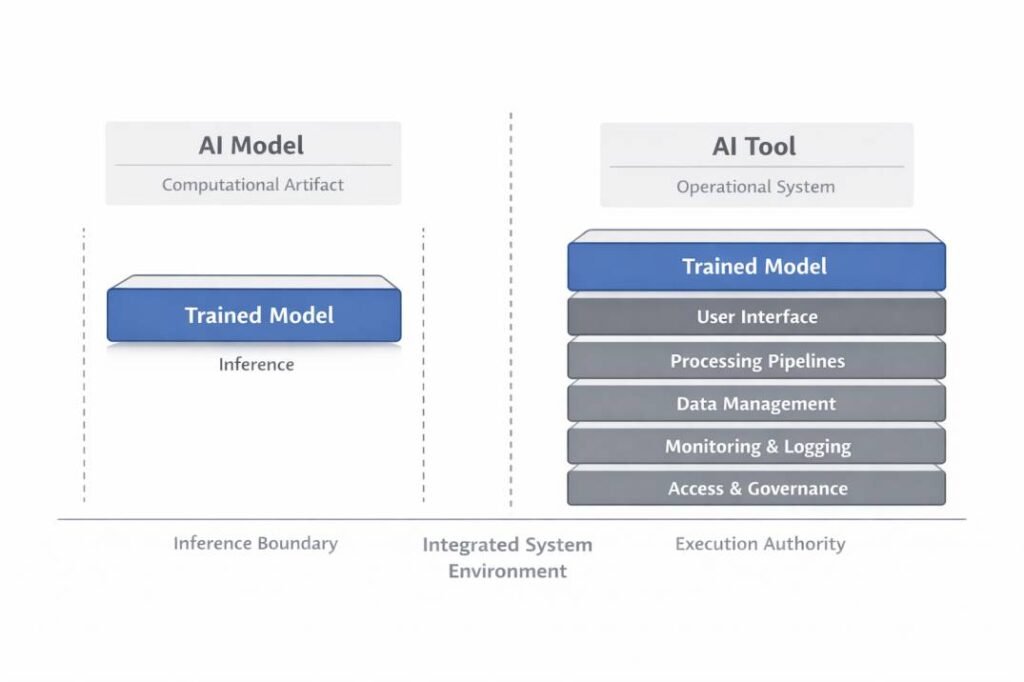

System Boundaries and Control Authority

Inference Boundary

The boundary of an AI model typically begins at structured input and ends at output generation. It does not inherently determine how outputs are acted upon.

Execution Authority

An AI tool extends beyond the inference boundary. It governs execution authority, including invocation conditions, threshold definitions, routing logic, and user notifications.

For example, a classification model may generate a probability score. The AI tool deploying it may define whether that score triggers escalation, storage, or manual review. Inference generation and operational control remain structurally distinct.

The separation between models and tools becomes particularly consequential when analyzing governance and lifecycle responsibilities. In integrated environments, undefined boundaries complicate traceability and responsibility assignment across governance records, and lifecycle analysis makes these boundary distinctions operationally visible.

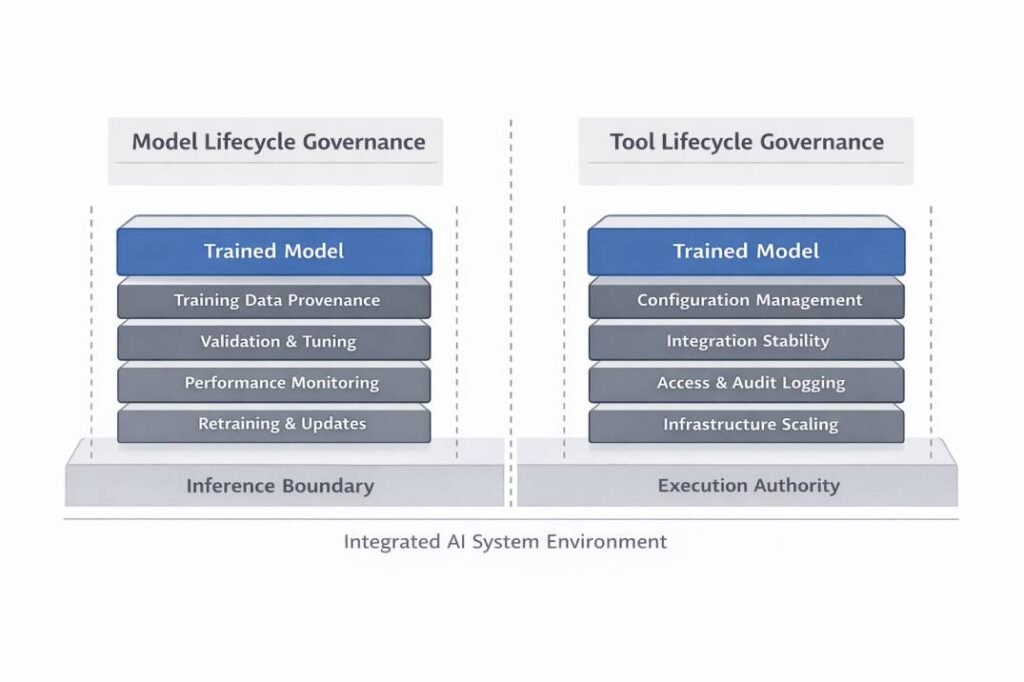

Governance and Lifecycle Considerations

Governance responsibilities differ according to operational role.

At the model layer, oversight centers on training data provenance, validation procedures, parameter tuning, performance monitoring, and drift detection. Typical activities include training, evaluation, controlled deployment, monitoring, and retraining cycles.

At the tool layer, governance focuses on configuration management, integration stability, access control, logging mechanisms, and infrastructure scaling. Oversight therefore extends beyond analytical performance to encompass system stability, traceability, and operational control.

Documenting these domains independently improves clarity in governance records and reduces ambiguity during compliance review.

Accountability Granularity

Responsibility allocation becomes clearer when analytical and deployment layers are documented separately.

At the model level, accountability concerns training data integrity, validation methodology, performance metrics, fairness evaluation, and drift monitoring.

At the tool level, accountability concerns configuration integrity, access governance, audit logging, deployment stability, and user interaction management.

This layered separation reflects distinctions commonly found in institutional governance frameworks that differentiate analytical oversight from system-level operational control. Regulatory or audit processes may therefore assess model validation independently from deployment monitoring procedures.

Evolution and Versioning Dynamics

AI models evolve through retraining, hyperparameter modification, architectural redesign, or dataset expansion. Version control at the model level typically focuses on parameter changes and evaluation metrics.

AI tools evolve through interface updates, integration adjustments, infrastructure migration, and configuration modification. Tool versioning reflects operational changes that may occur independently of model updates.

Without clear separation, change tracking becomes difficult in multi-layer deployments.

Dependency Typology

Model performance depends primarily on statistical representativeness, feature integrity, and parameter optimization. Sensitivity therefore arises from changes in data distribution.

At the deployment layer, stability depends on runtime infrastructure, API compatibility, and configuration coherence. Operational disruption may occur even when the model continues to perform as expected.

For example, performance degradation caused by distribution shift reflects a model-layer issue, even if the surrounding tool remains operational. Conversely, a configuration error in the deployment layer may interrupt execution despite stable analytical behavior. These cases show that statistical and infrastructural dependencies can fail independently of one another.
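The two cases can be checked separately, as in the sketch below. It uses a deliberately crude drift proxy (the shift of the live mean measured in training standard deviations) and a simple presence check on configuration keys. The function names, the sample values, the required keys, and the alert threshold are all assumptions made for illustration, and the drift check assumes the training sample is not constant.

```python
import statistics


def mean_shift(train, live):
    """Model-layer check: live mean shift in training standard deviations."""
    mu = statistics.mean(train)
    sigma = statistics.stdev(train)
    return abs(statistics.mean(live) - mu) / sigma


def missing_settings(config, required=("endpoint", "timeout_s")):
    """Tool-layer check: required deployment settings that are absent."""
    return [key for key in required if key not in config]


train_amounts = [10.0, 12.0, 11.0, 9.0, 13.0]
live_amounts = [19.0, 21.0, 20.0]
config = {"endpoint": "https://example.invalid/score", "timeout_s": 5}

DRIFT_LIMIT = 3.0  # hypothetical alert threshold

# Case 1: the tool is correctly configured, but the input distribution moved.
print(mean_shift(train_amounts, live_amounts) > DRIFT_LIMIT)  # True
print(missing_settings(config))                               # []

# Case 2: inputs resemble the training data, but the deployment is misconfigured.
print(mean_shift(train_amounts, [11.0, 10.0, 12.0]) > DRIFT_LIMIT)  # False
print(missing_settings({"endpoint": config["endpoint"]}))           # ['timeout_s']
```

Production drift monitoring would normally rely on more robust statistics. The sketch only shows that each check can raise an alert while the other stays silent.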

Failure Modes and Constraint Domains

Analytical failure typically manifests at the model layer as misclassification, prediction instability, or generalization error.

Operational disruption, by contrast, emerges at the tool layer through execution interruption, routing misconfiguration, integration breakdown, or logging failure.

Clear layer identification limits the risk of misattributing analytical instability to deployment malfunction. In audit or incident review contexts, separating model error from tool misconfiguration improves root-cause accuracy and strengthens traceable documentation.

Risk Surface Differentiation

The risk surface of an AI model is primarily analytical. Risks may include generalization error, bias amplification, statistical instability, or misclassification.

The risk surface of an AI tool extends into operational and socio-technical domains. Risks may include unauthorized access, logging failure, integration breakdown, misconfiguration, or incorrect routing of outputs.

Analytical risk and operational risk require different monitoring mechanisms and escalation pathways within system documentation.

Systems Design Implications

Distinguishing models from tools influences documentation practices, audit design, and change management.

Documentation at the model layer emphasizes dataset provenance, parameter revision history, and evaluation metrics. Documentation at the tool layer addresses configuration integrity, access governance, execution logging, and integration stability.

Treating these documentation domains separately reduces ambiguity during incident investigation and compliance review.

Common Areas of Overlap

Structural interdependence exists between models and tools, despite their layered distinction. A model requires deployment mechanisms to operate in real environments, while tools rely on models to generate analytical outputs.

Tools may coordinate multiple models within structured workflows. In such configurations, the tool orchestrates inference without altering internal model logic.
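As a hedged illustration of multi-model coordination, the sketch below registers two stand-in scoring functions and combines their outputs by taking the maximum. The model functions, the transaction fields `amount` and `tx_last_hour`, the scaling constants, and the combination rule are hypothetical. The tool calls each model as-is and does not modify its logic.

```python
from typing import Callable, Dict

Model = Callable[[dict], float]


def amount_model(tx: dict) -> float:
    """Stand-in model: risk grows with transaction amount."""
    return min(1.0, tx["amount"] / 10_000)


def velocity_model(tx: dict) -> float:
    """Stand-in model: risk grows with recent transaction count."""
    return min(1.0, tx["tx_last_hour"] / 20)


class MultiModelTool:
    """Invokes each registered model and combines the outputs."""

    def __init__(self, models: Dict[str, Model]):
        self._models = dict(models)

    def run(self, tx: dict) -> dict:
        # Each model is called unchanged; the tool only coordinates.
        scores = {name: model(tx) for name, model in self._models.items()}
        # Combination rule (an assumption): report the highest score.
        return {"scores": scores, "combined": max(scores.values())}


tool = MultiModelTool({"amount": amount_model, "velocity": velocity_model})
print(tool.run({"amount": 2500, "tx_last_hour": 10}))
# {'scores': {'amount': 0.25, 'velocity': 0.5}, 'combined': 0.5}
```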

Interdependence does not eliminate structural distinction.

Limitations and Structural Constraints

AI models are constrained primarily by training data representativeness, parameter configuration, and generalization limits. Their performance may vary when input distributions shift or when deployment contexts differ from training conditions.

AI tools are constrained by infrastructure design, integration architecture, configuration integrity, and organizational policy. Operational limitations may arise from system dependencies, interface stability, or access control mechanisms.

These constraint domains operate at different system layers and should not be conflated within system documentation or governance analysis.

Frequently Asked Questions

What is the primary difference between an AI model and an AI tool?

An AI model is a trained computational structure that performs inference. An AI tool is a system-level implementation that deploys and manages one or more models within operational workflows.

Can an AI model exist without being part of a tool?

Yes. A model can exist independently as a trained artifact. However, practical deployment typically requires integration into a tool or system environment.

Do AI tools always contain multiple models?

Not necessarily. A tool may deploy a single model or coordinate several models, depending on system design.

Which requires more governance: the model or the tool?

Both require governance, but oversight domains differ. Model governance focuses on analytical reliability, while tool governance focuses on deployment and operational stability.

Socio-Technical Embedding

AI tools operate within socio-technical environments that include human oversight, organizational policy frameworks, compliance requirements, and infrastructure governance. Their behavior is influenced not only by embedded computational components but also by procedural and regulatory contexts.

AI models, by contrast, remain computational artifacts whose behavior is shaped primarily by training data and parameterization. They do not independently engage with regulatory structures, user interaction contexts, or organizational workflows.

This embedding indicates that many governance obligations and responsibility assignments are exercised through operational systems rather than through analytical artifacts in isolation, even though model-level oversight remains necessary.

Structural Summary

| Layer | Function | Governance Focus |
|---|---|---|
| AI Model | Performs inference | Validation, retraining, drift |
| AI Tool | Manages deployment | Configuration, monitoring, access |

Conclusion

Within artificial intelligence system architecture, models and tools occupy distinct yet interdependent layers. The model functions as the analytical engine that transforms data into inference outputs, while the tool provides the operational and governance structure within which that engine executes.

Across abstraction level, architectural embedding, workflow placement, governance oversight, and constraint domains, this layered separation remains consistent. Models generate inference; tools manage execution authority within integrated socio-technical systems.

Preserving this perspective strengthens documentation clarity, supports accountability traceability, and reduces architectural ambiguity without implying hierarchical superiority.

References

ISO/IEC 22989:2022

Artificial intelligence — Concepts and terminology.

International Organization for Standardization (ISO).

Official page: https://www.iso.org/standard/74296.html

ISO/IEC 23053:2022

Framework for Artificial Intelligence (AI) Systems Using Machine Learning (ML).

International Organization for Standardization (ISO).

Official page: https://www.iso.org/standard/74438.html

ISO/IEC 23894:2023

Information technology — Artificial intelligence — Risk management.

International Organization for Standardization (ISO).

Official page: https://www.iso.org/standard/77304.html

NIST (2023).

Artificial Intelligence Risk Management Framework (AI RMF 1.0).

National Institute of Standards and Technology.

Full publication: https://nvlpubs.nist.gov/nistpubs/ai/nist.ai.100-1.pdf

Overview page: https://www.nist.gov/itl/ai-risk-management-framework

Dumas, M., La Rosa, M., Mendling, J., & Reijers, H. A. (2018).

Fundamentals of Business Process Management (2nd ed.). Springer.

Publisher page: https://link.springer.com/book/10.1007/978-3-662-56509-4

Weske, M. (2019).

Business Process Management: Concepts, Languages, Architectures (3rd ed.). Springer.

Publisher page: https://link.springer.com/book/10.1007/978-3-662-59432-2

These standards and reference texts are cited to support the terminology and lifecycle distinctions used in this analysis.