Introduction

AI tools are commonly understood as computational systems designed to process data, learn statistical patterns, and generate outputs through structured model architectures. At a structural level, these systems consist of interconnected technical components that collectively support data handling, model computation, inference processes, output generation, and oversight mechanisms. Examining these components supports a clearer understanding of AI system architecture, inter-layer information flow, and how observed behavior emerges from underlying technical design constraints.

This educational explainer focuses on the core structural components of AI tools, outlining the foundational layers that enable their operation within digital environments. The discussion emphasizes system architecture rather than practical usage, highlighting the roles of data pipelines, model frameworks, computational infrastructure, inference mechanisms, and governance layers. Attention is also given to how oversight, monitoring, and control structures are embedded into AI systems to support accountability, traceability, and bounded interpretation.

The sections that follow describe how these components influence system behavior, moving from foundational architecture to system governance. The objective is to support conceptual clarity regarding how AI tools are constructed, how their internal structures interact, and how their limitations and constraints arise from underlying design frameworks.

A broader conceptual framing of AI tools is presented in AI Tools Explained — Conceptual Foundations, System Logic, and Institutional Context.

Conceptual Foundations of AI Tool Architecture

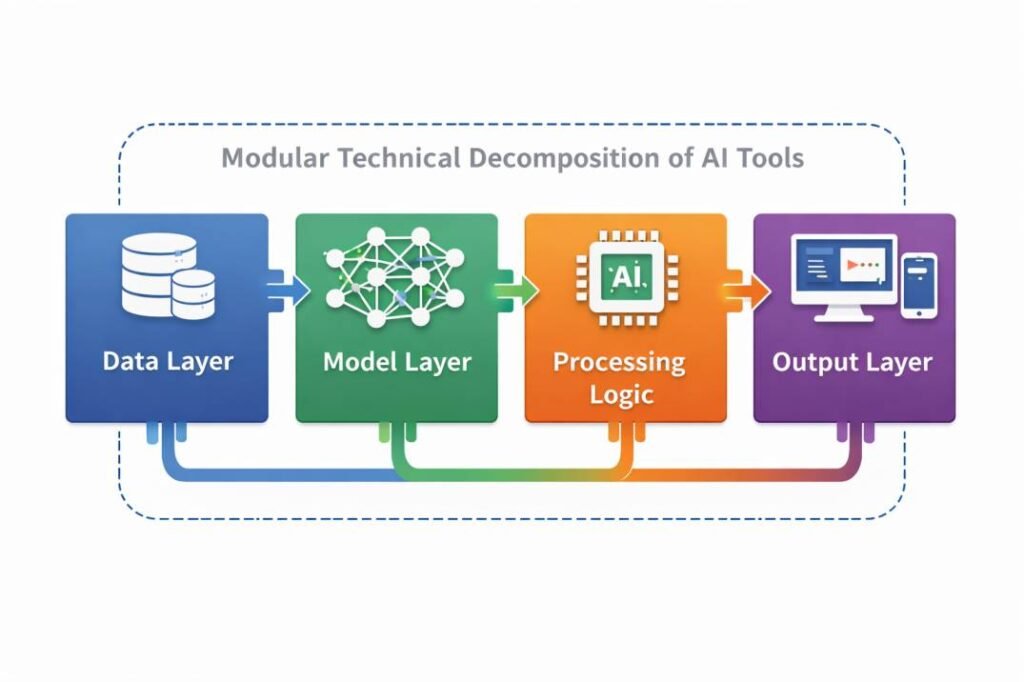

AI tools are commonly described as layered technical systems composed of interconnected components that transform input data into outputs through structured computational processes. Rather than functioning as monolithic entities, AI tools are organized into modular architectural layers that separate data handling, computation, inference, and output transformation functions.

Although specific implementations vary across domains and platforms, institutional standards bodies and academic research literature frequently identify recurring architectural patterns in AI system design. These patterns reflect shared architectural principles that shape how data is represented, how models learn statistical relationships, and how results are produced and interpreted within digital environments.

What Defines an AI Tool at the Structural Level

From a structural perspective, an AI tool can be understood as a bounded technical system designed to perform defined computational functions within constrained data, model, and operational environments. Unlike traditional rule-based software, which executes explicitly programmed instructions, AI tools typically rely on data-driven models that infer patterns from training datasets. This distinction influences how system behavior is generated, how outputs are derived, and how system performance is evaluated.

AI tools operate within defined technical and operational constraints that shape input scope, output range, and system reliability. Structural boundaries help differentiate AI tools from broader AI systems, which may encompass organizational workflows, human oversight structures, and governance mechanisms beyond the technical tool itself.

Core Structural Layers in AI Systems

AI systems are commonly organized into layers in which distinct components handle data input, computation, model inference, and output transformation within an integrated architectural framework.

This layered organization supports modularity, enabling individual components to be evaluated, updated, or replaced without restructuring the full system architecture. It also provides a conceptual framework for examining how errors, biases, or uncertainty may propagate across different system layers, contributing to a more structured understanding of AI tool behavior.

Structural components within AI tools operate as interdependent modules rather than isolated elements. Constraints at the data layer may influence model learning capacity, while computational limits can shape inference latency and output resolution. These cross-layer dependencies mean architectural decisions in one component can propagate system-wide effects, reinforcing the interpretation of AI behavior as an emergent property of interconnected technical structures.
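The layered organization described above can be illustrated with a minimal Python sketch. The three layer functions and the `run_pipeline` helper are hypothetical names chosen for illustration, and the "model" is a simple average standing in for a trained component. The sketch is only meant to show how separate layers can be chained and how a change at one layer (here, empty input at the data layer) carries through to the final output.

```python
from typing import Callable, List


def data_layer(raw: str) -> List[float]:
    """Data handling: parse comma-separated text into numbers."""
    return [float(tok) for tok in raw.split(",") if tok.strip()]


def model_layer(features: List[float]) -> float:
    """Computation: a stand-in 'model' that averages its inputs."""
    return sum(features) / len(features) if features else 0.0


def output_layer(score: float) -> str:
    """Output transformation: format the score for display."""
    return f"score={score:.2f}"


def run_pipeline(raw: str, layers: List[Callable]) -> object:
    """Pass a value through each layer in order."""
    value = raw
    for layer in layers:
        value = layer(value)
    return value


LAYERS = [data_layer, model_layer, output_layer]
result = run_pipeline("1, 2, 3", LAYERS)
empty_result = run_pipeline("", LAYERS)  # a data-layer gap changes the output
```

Because each layer only depends on the output of the previous one, any single function in `LAYERS` could be replaced without rewriting the others, which is the modularity property the text describes.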

Institutional Perspectives on AI System Architecture

AI system architecture is commonly discussed within institutional research and governance frameworks that emphasize modularity, accountability, and traceability across system components. For example, organizations such as the National Institute of Standards and Technology (NIST), a standards body, and the Organisation for Economic Co-operation and Development (OECD), an intergovernmental policy organization, describe AI systems as layered constructs in which data pipelines, model logic, and output interfaces must be evaluated as interconnected structures rather than isolated modules. Within these frameworks, architectural transparency is viewed as essential for system oversight, interpretability, and responsible deployment across organizational contexts.

Similar architectural framing appears in institutional AI governance literature, including NIST’s AI Risk Management Framework and OECD system classification guidance, which describe AI systems as layered technical and organizational structures requiring component-level transparency and accountability.

Institutional perspectives referenced in this section align with publicly available documentation from organizations such as NIST, OECD, and IEEE regarding AI system structure, accountability, and governance.

Structural Differences Between AI Tools and Traditional Software

Traditional software systems are commonly structured around deterministic, rule-based logic designed to produce predictable behavior within predefined constraints. AI tools, by contrast, generally operate through probabilistic inference, generating outputs based on learned statistical relationships rather than fixed decision pathways.

These structural differences affect how predictability, traceability, and accountability are interpreted. While traditional software behavior can often be traced directly to explicit code, AI tool behavior reflects interactions between model parameters, training data, and algorithmic design choices. Understanding these architectural distinctions is essential for interpreting how AI tools function within broader digital systems.
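The contrast between explicit rules and learned statistical relationships can be sketched in a few lines of Python. Both functions below are hypothetical, and the values of `WEIGHT` and `BIAS` are invented for illustration; in an actual AI tool such parameters would be produced by training rather than written by hand.

```python
import math


def rule_based_flag(amount: float) -> bool:
    """Traditional software: behavior follows an explicit, hand-written rule."""
    return amount > 1000.0


# Hypothetical learned parameters (assumed values, not from any real model).
WEIGHT, BIAS = 0.004, -4.0


def learned_flag_probability(amount: float) -> float:
    """AI-style component: a logistic function of learned parameters.

    The result is a probability estimate, not a yes/no decision.
    """
    return 1.0 / (1.0 + math.exp(-(WEIGHT * amount + BIAS)))


rule_result = rule_based_flag(1500.0)
probability = learned_flag_probability(1500.0)
```

The rule can be traced to a single line of code, whereas the probability depends on parameter values whose origin lies in training data, which is why traceability is interpreted differently for the two kinds of system.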

Related functional classifications are discussed in Types of AI Tools in Digital Systems — Conceptual Overview.

Data, Model, and Computation Components

AI tools rely on a set of foundational technical components that enable data handling, model execution, and computational reasoning. Among these, data systems, model architectures, and computational infrastructure form the structural core that determines how information is processed and how outputs are generated within AI-driven environments.

Rather than operating as isolated elements, these components function as interdependent layers whose design choices shape system behavior, representational capacity, and operational constraints.

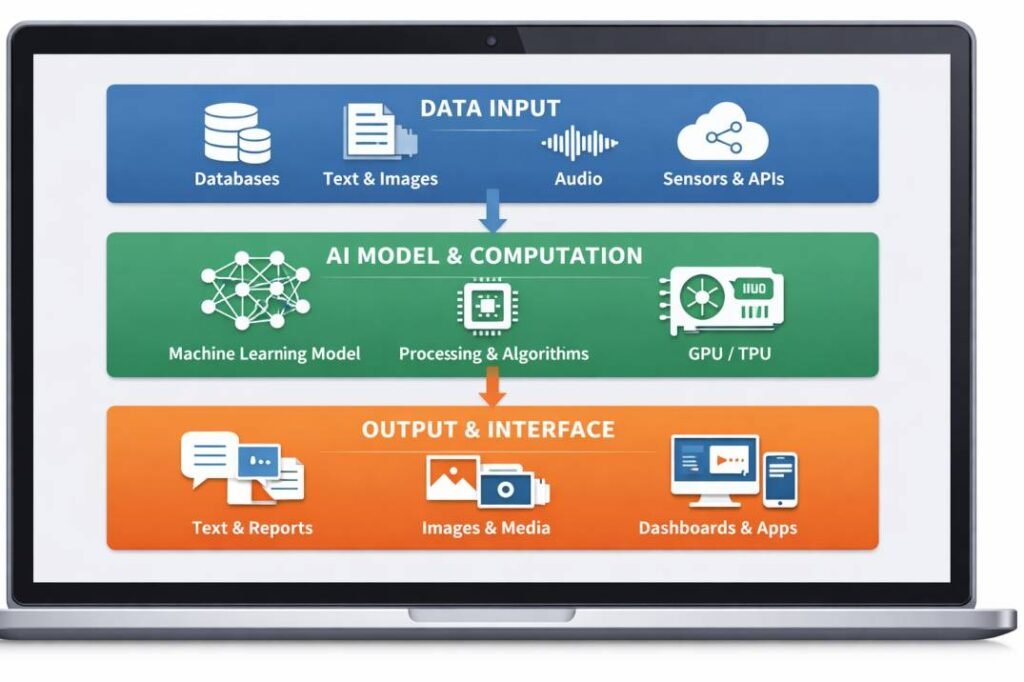

This diagram presents a conceptual decomposition of AI tools into layered technical components, including data input structures, computational and model-processing layers, and output interfaces. The visualization functions as an abstract system reference model rather than a real-world implementation, supporting conceptual analysis of how information flows through interconnected modules. It does not imply empirical performance characteristics or deployed system capabilities.

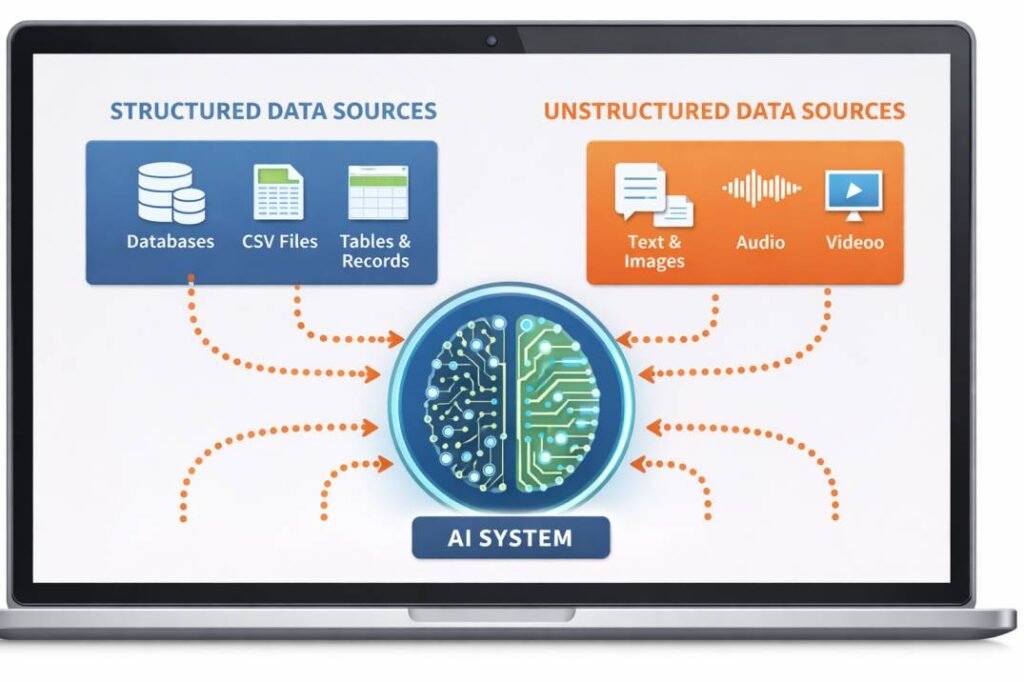

Data as a Foundational Structural Component

Data functions as a primary structural input within AI tools, serving both as a training resource during model development and as inference input during deployment. Training datasets influence how models encode patterns, while inference data determines how those learned patterns are applied in real-time system operation.

Data pipelines commonly incorporate structured processes for collection, filtering, normalization, formatting, and transformation. These processes ensure that incoming information conforms to expected technical specifications and reduce inconsistencies that could affect downstream model behavior. Decisions made at the data layer, such as dataset scope, labeling structure, and representational balance, can influence how models generalize, how outputs are shaped, and how interpretive limits emerge.
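As a minimal sketch of the filtering and normalization steps mentioned above, the following Python example drops records that cannot be parsed as numbers and rescales the rest to the range 0 to 1. The function names and the choice of min-max scaling are assumptions made for illustration; real pipelines vary widely in the steps they apply.

```python
from typing import Iterable, List, Optional


def parse(record: str) -> Optional[float]:
    """Filtering: return None for records that are not valid numbers."""
    try:
        return float(record)
    except ValueError:
        return None


def min_max_normalize(values: List[float]) -> List[float]:
    """Normalization: rescale values into the range [0, 1]."""
    lo, hi = min(values), max(values)
    if hi == lo:
        # All values identical: no spread to rescale.
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def prepare(records: Iterable[str]) -> List[float]:
    """Run filtering, then normalization, on raw text records."""
    cleaned = [v for v in (parse(r) for r in records) if v is not None]
    return min_max_normalize(cleaned) if cleaned else []


prepared = prepare(["10", "n/a", "20", "30"])
```

Note that the scaling depends on the minimum and maximum of whatever data is supplied, so a decision about dataset scope directly changes the values the model later receives.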

Model Architecture as a Core System Element

The model layer represents the computational engine of an AI tool. This layer includes algorithmic structures such as neural networks, probabilistic classifiers, or statistical learning frameworks that transform processed data into predictions, classifications, or generated outputs.

Model architectures define how information is encoded, how internal representations are structured, and how relationships within data are learned. Model parameters encode learned statistical patterns derived from training data, which are later applied during inference to produce probabilistic results. Structural aspects such as model depth, connectivity, and representational capacity shape both the expressive potential of the system and its inherent limitations.
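One way to make the link between structure and capacity concrete is to count parameters. The sketch below assumes a plain fully connected network, which is only one of many possible architectures, and uses a hypothetical helper named `dense_parameter_count`. Parameter count is a rough proxy for representational capacity, not a measure of output quality.

```python
from typing import List


def dense_parameter_count(layer_sizes: List[int]) -> int:
    """Count weights and biases in a fully connected network.

    layer_sizes lists the width of each layer, input first, output last.
    """
    total = 0
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        total += n_in * n_out + n_out  # weight matrix plus bias vector
    return total


# Two illustrative configurations with the same input and output sizes.
shallow = dense_parameter_count([784, 32, 10])
deeper = dense_parameter_count([784, 128, 64, 10])
```

Under these assumed layer sizes, the deeper and wider configuration holds roughly four times as many parameters as the shallow one, which also increases its storage and computation requirements.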

Computational Infrastructure Supporting AI Tools

AI tools are typically deployed within computational environments designed to support model execution and data processing at scale. This infrastructure may include specialized hardware—such as graphics processing units (GPUs) or tensor processing units (TPUs)—as well as software frameworks that manage model loading, inference execution, and system orchestration.

Runtime environments coordinate tasks including memory allocation, computation scheduling, and interface communication between model components and external systems. Infrastructure constraints—including processing capacity, latency thresholds, and energy requirements—shape model configuration, inference efficiency, and deployment feasibility.

System Constraints and Performance Boundaries

Infrastructure and architectural constraints jointly define the operational boundaries within which AI tools configure models, execute inference, and remain deployable. These constraints may involve limitations related to processing throughput, model size, storage capacity, or input complexity. As a result, system designers often balance trade-offs between computational efficiency, model expressiveness, and deployment feasibility.
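The trade-off between model size and deployment feasibility can be approximated with simple arithmetic. The sketch below is a deliberately rough estimate that counts only parameter storage and ignores activations, caches, and runtime overhead. The function names, the parameter count, and the memory budget are all hypothetical values chosen for illustration.

```python
def model_memory_bytes(num_parameters: int, bytes_per_parameter: int = 4) -> int:
    """Approximate memory needed to hold the parameters alone."""
    return num_parameters * bytes_per_parameter


def fits_in_memory(
    num_parameters: int,
    memory_budget_bytes: int,
    bytes_per_parameter: int = 4,
) -> bool:
    """Check whether parameter storage fits within a memory budget."""
    needed = model_memory_bytes(num_parameters, bytes_per_parameter)
    return needed <= memory_budget_bytes


PARAMS = 7_000_000_000        # assumed model size
BUDGET = 16 * 1024 ** 3       # assumed 16 GiB accelerator memory

fits_at_32_bit = fits_in_memory(PARAMS, BUDGET, bytes_per_parameter=4)
fits_at_16_bit = fits_in_memory(PARAMS, BUDGET, bytes_per_parameter=2)
```

In this example the same model exceeds the budget at 4 bytes per parameter but fits at 2 bytes per parameter, which illustrates why numerical precision is one of the settings designers adjust when balancing expressiveness against deployment limits.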

Recognizing these boundaries supports a more accurate conceptual understanding of AI tools as engineered technical artifacts whose finite capabilities are shaped by data, computation, and system architecture.

Inference, Output, and Interpretation Layers

Beyond data processing and model computation, AI tools include structural layers responsible for inference, output construction, and result interpretation. These components determine how internal model representations are transformed into externally visible signals and how system outputs are framed for downstream use within digital environments.

Inference layers translate learned statistical patterns into structured probabilistic outputs rather than direct factual assertions.

Inference Mechanisms Within AI Tools

Inference refers to the process through which trained models apply learned parameters to new input data. During inference, models evaluate patterns, assign probabilities, and generate classifications or predictions based on statistical relationships encoded during training.

Similar probabilistic inference structures are documented in academic literature on statistical learning theory, where model outputs are formally interpreted as conditional probability estimates rather than deterministic conclusions.

Inference mechanisms are not designed to establish definitive truth claims. Instead, they produce outputs that express likelihoods, rankings, confidence values, or probabilistic associations. As a result, inference outputs represent structured approximations derived from model constraints, training scope, and representational limits rather than objective determinations.

The nature of inference is shaped by model design choices, parameter calibration, and the statistical properties of both training and input data.
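The idea that inference yields likelihoods rather than truth claims can be shown with the softmax function, a standard way of turning raw model scores into a probability distribution. In the sketch below, the scores and labels are invented for illustration and the `infer` helper is a hypothetical name; a real model would compute the scores from its learned parameters.

```python
import math
from typing import Dict, List


def softmax(scores: List[float]) -> List[float]:
    """Convert raw scores into probabilities that sum to 1."""
    shift = max(scores)  # subtracting the max improves numerical stability
    exps = [math.exp(s - shift) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def infer(scores: List[float], labels: List[str]) -> Dict[str, float]:
    """Pair each label with its estimated probability."""
    return dict(zip(labels, softmax(scores)))


probs = infer([2.0, 1.0, 0.1], ["cat", "dog", "bird"])
top_label = max(probs, key=probs.get)
```

The highest-probability label is the model's best estimate under its training, and the remaining probability mass on the other labels is part of the output rather than noise to be discarded.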

Output Representation and System Interfaces

AI tools include output layers that format internal model results into interpretable external representations. These outputs may appear as numerical scores, probability distributions, categorical labels, generated text, or structured data objects intended for machine-to-machine exchange.

The design of output formats influences how system results are interpreted, validated, or integrated into broader workflows. Interface components act as boundary structures between internal model processes and external systems, managing data transmission, visualization, and integration with application platforms.

From a structural perspective, output layers function as translation mechanisms that convert abstract model states into communicable representations suitable for technical or institutional environments.
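The translation role of an output layer can be sketched as a function that converts an internal probability distribution into a structured record for machine-to-machine exchange. The field names, the `to_output_record` helper, and the `demo-0` version string below are hypothetical; actual interfaces define their own schemas.

```python
import json
from typing import Dict


def to_output_record(probabilities: Dict[str, float], model_version: str) -> str:
    """Format internal model results as a JSON string."""
    label = max(probabilities, key=probabilities.get)
    record = {
        "label": label,
        "confidence": round(probabilities[label], 4),
        "distribution": {k: round(v, 4) for k, v in probabilities.items()},
        "model_version": model_version,
    }
    return json.dumps(record, sort_keys=True)


payload = to_output_record({"cat": 0.7, "dog": 0.3}, "demo-0")
decoded = json.loads(payload)
```

Including the full distribution and a version identifier alongside the top label is a design choice that keeps the probabilistic nature of the result visible to downstream systems.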

Interpretation Limits and Structural Uncertainty

AI tools operate within bounded technical and data-defined domains, meaning their outputs represent probabilistic inferences constrained by training scope, system architecture, and available information.

To communicate these structural limits, systems may include confidence indicators, probability scores, or error estimates that express uncertainty associated with generated outputs. These signals provide contextual information about model reliability rather than guarantees of correctness.
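Two common uncertainty signals are the top probability and the Shannon entropy of the output distribution. The sketch below computes both; the `describe` helper and the example distributions are illustrative assumptions, and neither signal guarantees that a given output is correct.

```python
import math
from typing import Dict, List


def entropy_bits(probabilities: List[float]) -> float:
    """Shannon entropy in bits; higher values mean a more spread-out distribution."""
    return -sum(p * math.log2(p) for p in probabilities if p > 0)


def describe(probabilities: List[float]) -> Dict[str, float]:
    """Summarize a distribution with two simple uncertainty signals."""
    return {
        "confidence": max(probabilities),
        "entropy_bits": entropy_bits(probabilities),
    }


confident = describe([0.97, 0.02, 0.01])
uncertain = describe([0.34, 0.33, 0.33])
```

A distribution concentrated on one label has low entropy, while a nearly uniform distribution has entropy close to the maximum for its number of labels.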

As a result, AI tool outputs do not constitute independent cognition or authoritative knowledge claims. Instead, they represent statistically and technically mediated interpretations shaped by model design, data coverage, and inference logic within defined operational constraints.

Oversight, Governance, and Control Components

In addition to technical processing layers, AI tools may incorporate structural components associated with oversight, monitoring, and governance. These elements establish accountability pathways, traceability mechanisms, and institutional boundary conditions that shape how AI systems are documented, evaluated, constrained, and interpreted within organizational or regulatory contexts.

Rather than operating independently, these governance-related components function as meta-control layers that influence how system behavior is reviewed, recorded, and contextualized over time.

Human-in-the-Loop as a Structural Layer

Human oversight can be embedded into AI tool architectures as a formal structural layer. Review protocols, validation checkpoints, and intervention mechanisms serve as control nodes that shape how system outputs are assessed, contextualized, and applied.

These structures reflect the principle that interpretive authority and decision responsibility remain external to the AI tool itself, even when automated inference processes generate outputs. Human-in-the-loop elements therefore operate as governance interfaces between computational systems and institutional decision frameworks.
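A validation checkpoint of this kind can be sketched as a routing step that marks lower-confidence outputs for human review. The `Decision` type, the route names, and the threshold value of 0.8 are hypothetical choices for illustration; in practice such thresholds and review procedures are set by the deploying organization.

```python
from dataclasses import dataclass

REVIEW_THRESHOLD = 0.8  # assumed value, not a recommended setting


@dataclass
class Decision:
    label: str
    confidence: float
    route: str  # "proceed" or "human_review"


def route_output(
    label: str, confidence: float, threshold: float = REVIEW_THRESHOLD
) -> Decision:
    """Attach a review route to a model output based on its confidence."""
    route = "proceed" if confidence >= threshold else "human_review"
    return Decision(label=label, confidence=confidence, route=route)


high = route_output("approve", 0.95)
low = route_output("approve", 0.55)
```

The model output is the same in both cases; only the surrounding control structure differs, which is what makes the checkpoint a governance interface rather than part of the model.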

Monitoring, Logging, and Audit Systems

Monitoring systems track AI tool behavior over time, capturing metrics related to model performance, input distribution patterns, output variability, and system stability. Logging mechanisms record operational events, supporting traceability, retrospective analysis, and system documentation.

Audit structures provide systematic evaluation frameworks that enable institutional actors to examine how models perform under varying conditions, how outputs change across deployment environments, and how system assumptions evolve. Collectively, these components contribute to operational transparency by documenting how AI tools function and how their behavior is shaped across different contexts.
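A minimal sketch of logging and monitoring, assuming an in-memory event list, is shown below. The `InferenceLog` class and its field names are hypothetical; production systems typically write to durable storage and track many more metrics.

```python
import json
import time
from typing import Dict, List


class InferenceLog:
    """Record inference events and compute simple monitoring metrics."""

    def __init__(self) -> None:
        self.events: List[Dict] = []

    def record(self, input_id: str, label: str, confidence: float) -> None:
        """Logging: store one operational event with a timestamp."""
        self.events.append(
            {
                "timestamp": time.time(),
                "input_id": input_id,
                "label": label,
                "confidence": confidence,
            }
        )

    def summary(self) -> Dict[str, float]:
        """Monitoring: aggregate metrics over recorded events."""
        count = len(self.events)
        if count == 0:
            return {"count": 0, "mean_confidence": 0.0}
        mean = sum(e["confidence"] for e in self.events) / count
        return {"count": count, "mean_confidence": mean}

    def export(self) -> str:
        """Audit support: one JSON object per line for later review."""
        return "\n".join(json.dumps(e) for e in self.events)


log = InferenceLog()
log.record("req-1", "cat", 0.9)
log.record("req-2", "dog", 0.7)
stats = log.summary()
```

Keeping the raw events separate from the aggregated summary allows later audits to recompute metrics or examine individual outputs.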

Governance Frameworks Embedded in AI Tools

Governance-related structures function as embedded control and documentation layers that define how AI tools are developed, deployed, maintained, and interpreted within institutional environments. These components formalize procedural oversight, accountability boundaries, and documentation standards that shape system lifecycle management.

Documentation mechanisms record design assumptions, training data characteristics, model limitations, and operational constraints, supporting informed interpretation of system outputs. In this sense, governance layers contribute to the institutional framing of AI tools as regulated technical systems rather than autonomous agents.
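Documentation of this kind can be represented as a structured record. The sketch below uses a hypothetical `ModelRecord` type whose fields mirror the categories named above; it is an assumption for illustration and does not follow any specific published documentation template.

```python
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class ModelRecord:
    """A minimal documentation record for an AI tool."""

    name: str
    intended_use: str
    training_data_summary: str
    known_limitations: List[str] = field(default_factory=list)
    operational_constraints: List[str] = field(default_factory=list)

    def missing_fields(self) -> List[str]:
        """List fields left empty, as a simple completeness check."""
        return [key for key, value in asdict(self).items() if not value]


record = ModelRecord(
    name="demo-classifier",
    intended_use="Illustrative example only",
    training_data_summary="Synthetic records created for this sketch",
    operational_constraints=["Text inputs under 512 characters"],
)
gaps = record.missing_fields()
```

A completeness check like `missing_fields` shows how documentation standards can be enforced mechanically, for example by blocking deployment until every field is filled in.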

Governance concepts discussed here reflect frameworks published by UNESCO, OECD, and national technology standards bodies addressing AI oversight and system accountability.

Comparative distinctions between AI tools and traditional software systems are examined in How AI Tools Differ from Traditional Software Systems.

Structural Risk and System Reliability Boundaries

AI tools may exhibit structurally identifiable failure modes associated with distribution shifts, model misgeneralization, or deployment-context variation. Structural risk components define operational thresholds, reliability boundaries, and review conditions that shape how system behavior is monitored and evaluated.
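A simple review condition for distribution shift can be sketched by comparing the mean of live inputs against a reference sample. The scoring method, the function names, and the threshold of 2.0 below are illustrative assumptions; practical drift detection usually relies on more robust statistical tests. The reference sample needs at least two values for the standard deviation to be defined.

```python
from statistics import mean, stdev
from typing import List


def mean_shift_score(reference: List[float], live: List[float]) -> float:
    """Distance between live and reference means, in reference standard deviations."""
    spread = stdev(reference)
    if spread == 0:
        return 0.0 if mean(live) == mean(reference) else float("inf")
    return abs(mean(live) - mean(reference)) / spread


def needs_review(
    reference: List[float], live: List[float], threshold: float = 2.0
) -> bool:
    """Flag a batch of live inputs when the shift exceeds the threshold."""
    return mean_shift_score(reference, live) > threshold


REFERENCE = [1.0, 2.0, 3.0, 4.0, 5.0]
stable = needs_review(REFERENCE, [2.5, 3.0, 3.5])
shifted = needs_review(REFERENCE, [8.0, 9.0, 10.0])
```

The threshold acts as an operational boundary: it does not say the model is wrong on shifted inputs, only that its behavior on them should be examined.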

By incorporating governance and control layers, AI tools can be conceptually framed as managed socio-technical systems whose behavior emerges from interactions between computational architecture, data dependencies, and institutional oversight structures, rather than as autonomous or self-directing entities.

Why Structural Decomposition Is Used in AI System Analysis

Structural decomposition is commonly used in academic and institutional analysis to isolate technical dependencies, trace system behavior, and examine how performance, bias, or reliability concerns propagate across system layers. This approach supports clearer conceptual modeling of AI tools without relying on implementation-specific details.

Conclusion

AI tools can be understood as structured computational systems composed of interconnected technical and governance components. Their operation depends on layered architectures that encompass data pipelines, model frameworks, computational infrastructure, inference mechanisms, output interfaces, and embedded oversight structures. Each component shapes how information is processed, how patterns are represented, and how outputs are generated within defined technical, data, and design constraints.

Examining the core structural components of AI tools provides a clearer conceptual understanding of how these systems are internally organized. System behavior emerges from architectural decisions, dataset characteristics, and computational limitations. AI tools operate as bounded technical artifacts whose capabilities and constraints reflect underlying system design.

This analysis underscores that AI tool outputs represent statistically mediated inferences derived from probabilistic models and finite datasets, rather than definitive or authoritative knowledge claims.

Structural uncertainty, interpretive boundaries, and governance frameworks remain central to how these systems are assessed within institutional, academic, and regulatory contexts.

By emphasizing architecture, system logic, and embedded oversight layers, this explainer frames AI tools as engineered socio-technical systems whose behavior is best understood through their internal structures, component interdependencies, and operational boundaries, rather than through surface-level system behavior alone.

These interpretations are consistent with institutional research and policy literature on AI system design, risk management, and governance.

References

- NIST. Artificial Intelligence Risk Management Framework. https://www.nist.gov/ai

- OECD. AI System Classification Framework. https://oecd.ai

- Stanford HAI. AI Index Report. https://aiindex.stanford.edu

- IEEE. Standards for Artificial Intelligence Systems. https://standards.ieee.org

- UNESCO. Artificial Intelligence Ethics and Governance Reports. https://www.unesco.org/en/artificial-intelligence
