Introduction

As AI adoption expands across departments, many organizations are discovering that the real challenge is not gaining access to AI models, but maintaining consistent operational behavior across teams. Many enterprise AI initiatives do not fail because the model is “bad.” They fail because every employee interacts with the system differently.

When one employee generates a strong strategic response while another receives vague or unreliable output for the exact same task, the problem is no longer technical—it is operational. At that point, the organization does not have a dependable workflow. It has inconsistent prompting behavior producing inconsistent results.

This is why AI Prompt Engineering for Teams is becoming an important operational discipline. Without shared structures, review standards, and governance rules, AI-generated work quickly turns into repeated editing cycles, fragmented communication styles, and growing distrust in output quality.


Why Individual Prompting Fails Inside Teams

Most users interact with AI reactively, entering a prompt and expecting a reliable result without a defined workflow structure.

- Personal Use: You know your internal context. Even a fragmented prompt works because you can fill in the gaps mentally.

- Team Operations: Every team member has a different skill level. Without a structured framework, AI outputs become fragmented and inconsistent.

In one internal workflow review, we found that three employees using the “same” AI process produced outputs with completely different tone, formatting, and factual depth. The problem was not the model—it was the absence of shared operational instructions.

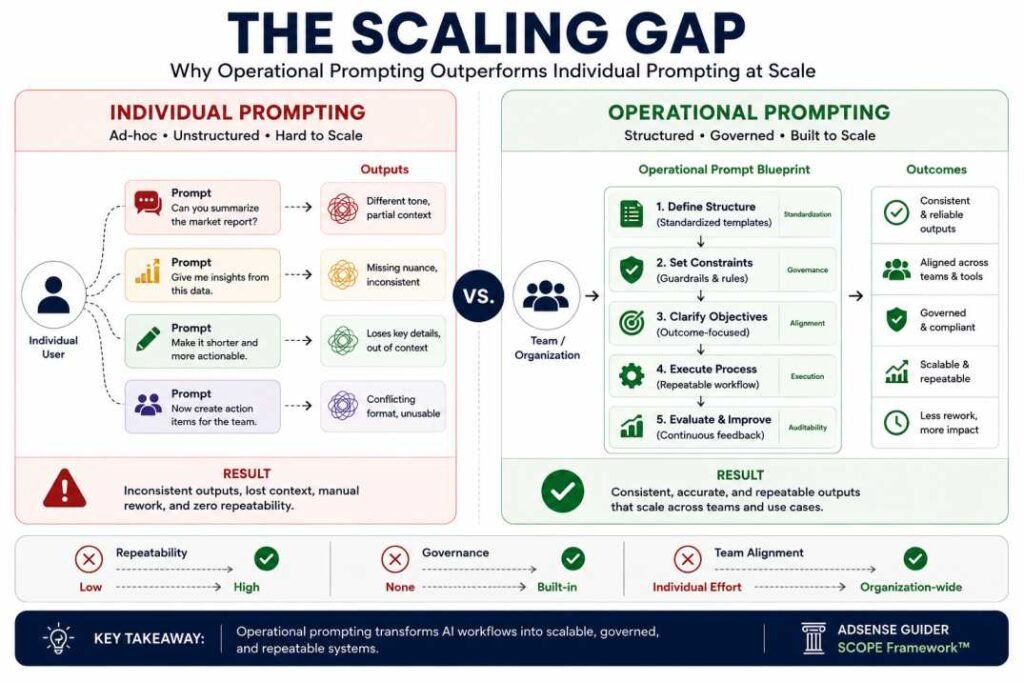

Comparison: The Scaling Gap

| Individual Prompting | Operational Prompting |
|---|---|
| Different prompt styles per employee | Shared prompt templates |
| Inconsistent outputs | Standardized outputs |
| High revision workload | Reduced editing overhead |
| Difficult to monitor | Easier governance and auditing |
| Dependent on individual skill | Scalable across teams |

The Decision Rule: If your team’s AI output requires more than two rounds of human revision, your prompt design is “Individual,” not “Operational.”

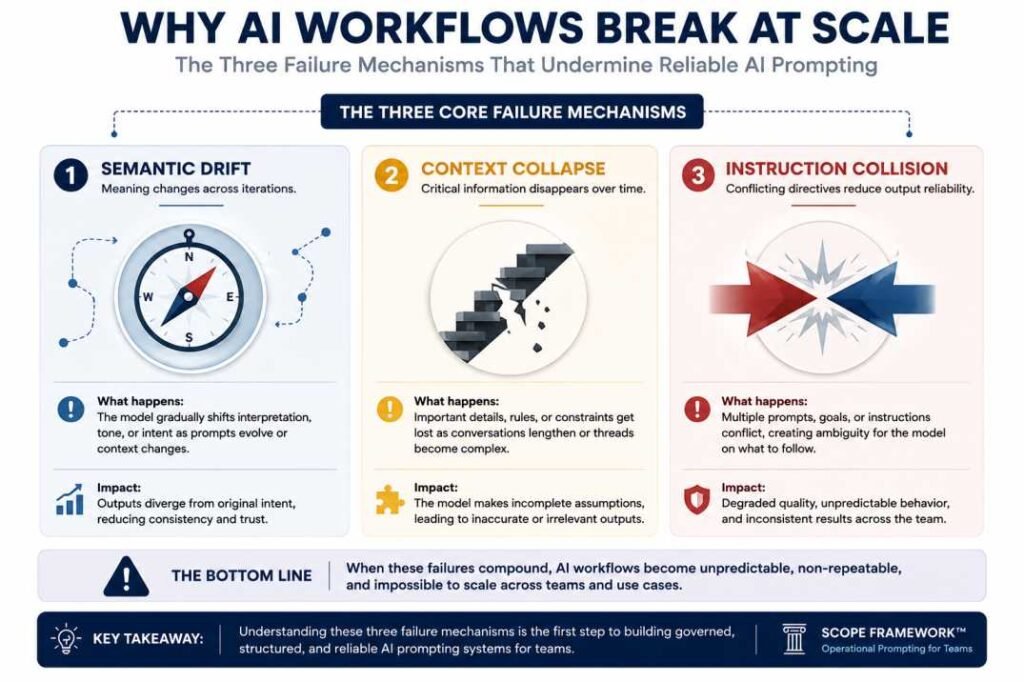

Deep Dive: Why AI Workflows Break at Scale

In practical workflow environments, three “invisible” failure mechanisms appear repeatedly. Understanding them is the difference between a beginner and a systems architect.

A) Semantic Drift and Terminology Variance

Semantic Drift occurs when the AI’s interpretation of a word slowly shifts away from the user’s intent due to lack of constraints. When you tell AI to “write in a professional tone,” the meaning of “professional” varies depending on departmental goals and communication context. To a Sales team, “professional” might mean persuasive and aggressive; to Customer Support, it means empathetic and formal. Without a defined Style Registry within the prompt, the AI drifts into the most “average” version of that word, resulting in bland, generic content.
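A Style Registry can be as simple as a lookup table that spells out what each department means by a tone word, so the definition travels with the prompt instead of living in each employee's head. The sketch below is a minimal illustration; the department names, definitions, and the `resolve_tone` / `add_style_block` helpers are all hypothetical.

```python
# Hypothetical Style Registry: each department's meaning of a tone word
# is written out explicitly instead of being left to the model's default.
STYLE_REGISTRY = {
    "sales": {
        "professional": "Confident and persuasive; lead with customer benefits; "
                        "end with one clear call to action.",
    },
    "support": {
        "professional": "Empathetic and formal; acknowledge the issue first; "
                        "no marketing language.",
    },
}


def resolve_tone(department: str, tone_word: str) -> str:
    """Return the explicit style definition for a department's tone word."""
    try:
        return STYLE_REGISTRY[department][tone_word]
    except KeyError:
        # Failing loudly is deliberate: an undefined tone is exactly the gap
        # where the model falls back to its most "average" interpretation.
        raise KeyError(f"No style definition for {tone_word!r} in {department!r}")


def add_style_block(prompt: str, department: str, tone_word: str) -> str:
    """Append the resolved style definition to a task prompt."""
    definition = resolve_tone(department, tone_word)
    return f"{prompt}\n\nStyle ({tone_word}, {department} team): {definition}"


print(add_style_block("Reply to the customer's refund request.", "support", "professional"))
```

Raising an error for an undefined tone, rather than silently sending the bare word, forces the team to add a definition before the prompt is used.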

B) Context Collapse in Multi-Step Logic

AI models have practical limits on how much context they can reliably track within a single prompt. When a prompt lacks a designated section for the data being processed, the model can lose track of complex instructions partway through generation. This is the “invisible ceiling” where reasoning breaks down: the model is trying to solve step five before it has fully “digested” step two. It is a common reason why multi-step prompts fail.

C) Instruction Collision and Priority Conflicts

In a long, unorganized prompt, instructions “fight” for priority. If you say “be detailed” in the second paragraph and “keep it brief” in the fifth, the AI experiences Instruction Collision. Models often give more weight to the instruction closest to the end of the prompt (Recency Bias), which is why this pattern is a root cause of conflicting instructions in prompts.
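One lightweight guard against Instruction Collision is to lint shared prompts for known contradictory phrasings before they enter the library. This is a minimal sketch, assuming a team-maintained list of conflicting phrase pairs (the pairs below are illustrative); plain substring matching only catches the listed wordings, not paraphrased conflicts.

```python
# Hypothetical pairs of instructions that pull in opposite directions.
# A real team would maintain its own list; these are illustrative only.
CONFLICTING_PAIRS = [
    ("be detailed", "keep it brief"),
    ("formal tone", "casual tone"),
    ("use bullet points", "write in paragraphs"),
]


def find_collisions(prompt: str) -> list[tuple[str, str]]:
    """Return every conflicting pair whose two phrases both appear in the prompt."""
    text = prompt.lower()
    return [(a, b) for a, b in CONFLICTING_PAIRS if a in text and b in text]


prompt = (
    "Be detailed about root causes.\n"
    "List an owner for each issue.\n"
    "Keep it brief for executives."
)
print(find_collisions(prompt))
```

Running this on the example prompt flags the `("be detailed", "keep it brief")` pair, which signals that the author should decide which instruction wins and state it once.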

In operational environments, the cost of an AI hallucination is not just a wrong answer—it is a breakdown in organizational trust that can disrupt entire workflows.

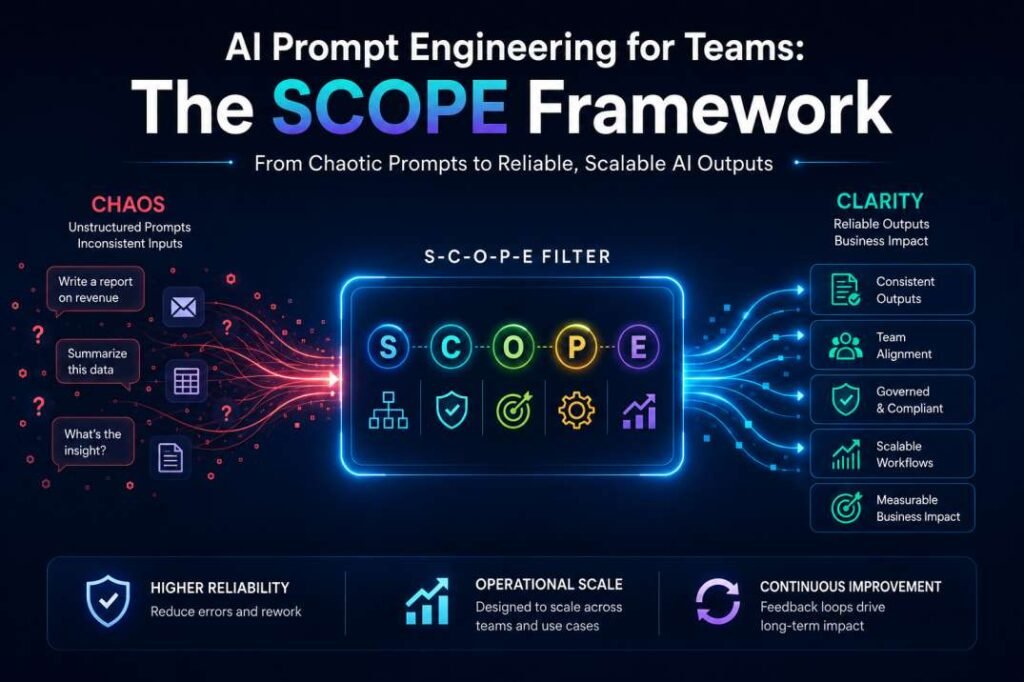

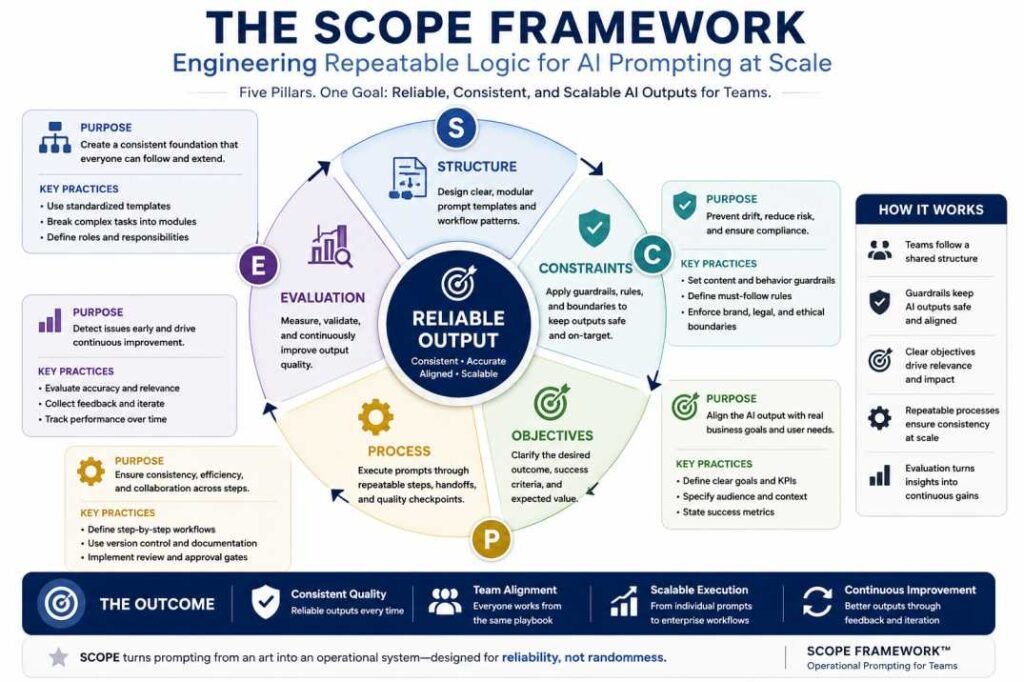

The SCOPE Framework: Engineering Repeatable Logic

To move beyond one-off prompts, teams must adopt a rigorous structural framework. The S-C-O-P-E framework is designed for operational reliability.

Weak Prompt

“Write a report about customer complaints.”

Operational Prompt

“You are a Senior Customer Operations Analyst. Review the complaint summaries, identify the three most common operational failures, and present the findings in bullet points for executive review. Keep the response under 250 words.”

Starter Operational Template:

Role: [Department Role]

Goal: [Business Objective]

Constraints: [Word limits, legal boundaries, style rules]

Process: [Step-by-step workflow]

Output Format: [Markdown, table, JSON]

Evaluation: [Accuracy, compliance, tone checks]
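If the starter template lives in a shared tool or script, it can be encoded once so every employee sends the same sections in the same order. The sketch below assumes a hypothetical `ScopePrompt` class whose fields mirror the template above; it only assembles text and does not call any model API.

```python
from dataclasses import dataclass, field


@dataclass
class ScopePrompt:
    """Hypothetical shared template; field names mirror the starter template."""
    system_role: str
    goal: str
    constraints: list[str] = field(default_factory=list)
    process: list[str] = field(default_factory=list)
    output_format: str = ""
    evaluation: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Assemble every section in a fixed order, even when a section is empty,
        so all prompts built from this template share one structure."""
        sections = [
            f"Role: {self.system_role}",
            f"Goal: {self.goal}",
            "Constraints:\n" + "\n".join(f"- {c}" for c in self.constraints),
            "Process:\n" + "\n".join(
                f"{i}. {step}" for i, step in enumerate(self.process, start=1)
            ),
            f"Output Format: {self.output_format}",
            "Evaluation:\n" + "\n".join(f"- {e}" for e in self.evaluation),
        ]
        return "\n\n".join(sections)


prompt = ScopePrompt(
    system_role="Senior Customer Operations Analyst",
    goal="Identify the three most common operational failures in the complaint summaries.",
    constraints=["Keep the response under 250 words."],
    process=["Review the complaint summaries.", "Group them by failure type."],
    output_format="Bullet points for executive review",
    evaluation=["Confirm that exactly three failures are listed."],
)
print(prompt.render())
```

Numbering the process steps in the rendered text is a design choice that makes it easier to check, later, whether the model followed them in order.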

S – System Role (The Persona Anchor)

The System Role should define the AI’s function, communication style, and operational boundaries. In team environments, this role acts as a consistency anchor across workflows.

Operational:

“You are a Senior Content Strategist for an ESG Compliance firm. Simplify environmental regulations for non-technical stakeholders while maintaining factual and regulatory accuracy.”

Weak:

“You are a writer.”

C – Constraints (The Negative Boundaries)

In a team setting, knowing what not to do often matters more for consistency than knowing what to do.

- Mandatory Constraints: “Do not use technical jargon. Do not exceed 300 words. Never refer to the user in the third person. Avoid mentions of competitor features.”
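Some of these constraints, such as word limits, banned terms, and competitor names, can be checked mechanically after generation; others, like avoiding third-person references to the user, still need a human or a model-based review. A minimal sketch, assuming a hypothetical jargon list and a placeholder competitor name:

```python
import re

# Hypothetical constraint set mirroring the "Mandatory Constraints" example.
MAX_WORDS = 300
BANNED_TERMS = ["synergy", "leverage", "paradigm"]  # stand-in jargon list
COMPETITOR_NAMES = ["AcmeCorp"]  # placeholder competitor name


def check_constraints(text: str) -> list[str]:
    """Return human-readable violations; an empty list means every check passed."""
    violations = []
    word_count = len(text.split())
    if word_count > MAX_WORDS:
        violations.append(f"Word limit exceeded: {word_count} > {MAX_WORDS}")
    lowered = text.lower()
    for term in BANNED_TERMS:
        # Whole-word match so that, e.g., "leveraged" is not flagged as "leverage".
        if re.search(rf"\b{re.escape(term)}\b", lowered):
            violations.append(f"Jargon used: {term!r}")
    for name in COMPETITOR_NAMES:
        if name.lower() in lowered:
            violations.append(f"Competitor mentioned: {name}")
    return violations


print(check_constraints("We will leverage the new dashboard."))
```

Returning a list of messages rather than a single pass/fail makes the result reusable as feedback for a rewrite request.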

O – Output Format (Structured Output Rules)

Dictate the exact Markdown structure, JSON schema, or table headers required. This ensures the output can be plugged directly into team tools like Notion, Slack, or Trello without manual reformatting.
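When the required format is JSON, a small validation step can reject malformed output before it reaches a team tool. This sketch assumes a hypothetical three-field contract (`title`, `findings`, `owner`); it uses only Python's standard `json` module and does not depend on any Notion, Slack, or Trello API.

```python
import json

# Hypothetical output contract a downstream script expects.
REQUIRED_FIELDS = {"title": str, "findings": list, "owner": str}


def parse_structured_output(raw: str) -> dict:
    """Parse model output as JSON and verify required fields and their types.

    Raises ValueError so malformed output is caught before it reaches a team tool.
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"Output is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("Top-level JSON value must be an object")
    for name, expected_type in REQUIRED_FIELDS.items():
        if name not in data:
            raise ValueError(f"Missing field: {name}")
        if not isinstance(data[name], expected_type):
            raise ValueError(f"Field {name!r} should be {expected_type.__name__}")
    return data


report = parse_structured_output(
    '{"title": "Q3 complaints", "findings": ["Late refunds"], "owner": "Support Ops"}'
)
print(report["findings"])
```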

P – Process Logic (Step-by-Step Instruction Flow)

Guide the AI through a structured reasoning sequence. Instruct the model to perform internal checks: “First, analyze the raw data for three key trends. Second, summarize those trends in a draft. Third, refine the draft for a C-suite audience.” This prevents the model from rushing to a low-quality conclusion.

E – Evaluation Criteria (The Self-Audit)

Include a self-correction loop: “Before finalizing the response, verify that all three trends were included and that the word count is under the limit. If a constraint is violated, rewrite the section.”
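The self-audit above lives inside the prompt. A complementary pattern, swapped in here for illustration and not part of the SCOPE text itself, is to run the audit outside the model and re-prompt with the specific violations. In this sketch `generate` stands for whatever model call a team uses (hypothetical; no real API is assumed), and `audit` is any function returning a list of violations.

```python
from typing import Callable


def generate_with_audit(
    generate: Callable[[str], str],
    prompt: str,
    audit: Callable[[str], list[str]],
    max_attempts: int = 3,
) -> tuple[str, list[str]]:
    """Generate a draft, audit it, and re-prompt with the violations until it passes.

    Returns the last draft and its remaining violations (empty if it passed).
    """
    draft = generate(prompt)
    problems = audit(draft)
    attempts = 1
    while problems and attempts < max_attempts:
        feedback = "\n".join(f"- {p}" for p in problems)
        draft = generate(
            f"{prompt}\n\nYour previous draft broke these rules:\n{feedback}\n"
            "Rewrite the response so that every rule is satisfied."
        )
        problems = audit(draft)
        attempts += 1
    return draft, problems
```

Capping the loop with `max_attempts` matters: a draft that still fails after a few rewrites should go to a human reviewer rather than loop indefinitely.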

Structured prompting also introduces tradeoffs. Highly constrained workflows improve consistency and governance, but they can reduce creativity and exploratory thinking in brainstorming-heavy environments. Teams should apply operational prompting selectively based on task criticality.

The Path to Standardization: Building a Prompt Library

Organizations building scalable AI systems should also understand how structured AI workflows operate across departments. Related reading: AI Workflows for Teams: Moving Beyond One-Off Prompts.

Teams cannot scale on “copy-paste” habits. They scale on Standardized Prompt Libraries.

Step 1: Centralization

Move all high-performing prompts out of individual chat histories and into a centralized repository (e.g., GitHub, Notion, or a dedicated Prompt Management tool). This creates a “Single Source of Truth.”

Step 2: Variable Injection

Transform static prompts into templates using variables.

- Static: “Write an email to John about a delay.”

- Template:

Draft a response for [Client_Name] regarding [Issue_Type]. Adhere to [Policy_Level_X] and include [Resolution_Link].
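Filling a bracketed template in code also gives a place to refuse incomplete prompts. A minimal sketch, assuming the `[Variable_Name]` placeholder syntax shown above; the `fill_template` helper and the sample values are hypothetical.

```python
import re

# Matches placeholders such as [Client_Name] or [Policy_Level_X].
PLACEHOLDER = re.compile(r"\[([A-Za-z][A-Za-z0-9_]*)\]")

TEMPLATE = (
    "Draft a response for [Client_Name] regarding [Issue_Type]. "
    "Adhere to [Policy_Level_X] and include [Resolution_Link]."
)


def fill_template(template: str, values: dict[str, str]) -> str:
    """Replace [Variable] placeholders; refuse prompts with gaps or misspelled variables."""
    required = set(PLACEHOLDER.findall(template))
    provided = set(values)
    missing = required - provided
    unknown = provided - required
    if missing:
        raise KeyError(f"Missing values for: {sorted(missing)}")
    if unknown:
        raise KeyError(f"Unknown variables: {sorted(unknown)}")
    # A function replacement avoids regex escape processing of the values.
    return PLACEHOLDER.sub(lambda m: values[m.group(1)], template)


print(fill_template(TEMPLATE, {
    "Client_Name": "Acme Ltd",
    "Issue_Type": "a billing delay",
    "Policy_Level_X": "Refund Policy Level 2",
    "Resolution_Link": "https://example.com/help/123",
}))
```

Rejecting unknown variable names, not just missing ones, catches typos such as `Client_name` that would otherwise leave a raw placeholder in the prompt.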

Step 3: Version Control

As AI models update (e.g., moving from GPT-4 to GPT-4o), prompts may behave differently. Organizations must version their prompts just like software code. “Marketing_Newsletter_v1.2” should be tested against “v1.3” whenever the underlying model or brand guidelines change.
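Testing “v1.2” against “v1.3” can be as simple as running both versions over a fixed set of inputs with the same checks and comparing pass rates. The sketch below is hypothetical throughout: the version store, the regression topics, and the `generate` and `check` callables stand in for a team's real model call and acceptance criteria.

```python
from typing import Callable

# Hypothetical versioned store; real teams would keep this in Git or a
# prompt-management tool, keyed the same way.
PROMPT_VERSIONS = {
    "Marketing_Newsletter_v1.2": "Write the weekly newsletter about {topic}. Keep it under 200 words.",
    "Marketing_Newsletter_v1.3": (
        "Write the weekly newsletter about {topic}. Keep it under 150 words "
        "and end with one call to action."
    ),
}

# A small, fixed set of inputs reused for every comparison.
REGRESSION_TOPICS = ["spring product launch", "customer webinar recap", "pricing update"]


def pass_rate(
    version: str, generate: Callable[[str], str], check: Callable[[str], bool]
) -> float:
    """Fraction of regression topics whose output passes `check` for one prompt version."""
    template = PROMPT_VERSIONS[version]
    passed = sum(check(generate(template.format(topic=t))) for t in REGRESSION_TOPICS)
    return passed / len(REGRESSION_TOPICS)


def is_regression(
    old: str, new: str, generate: Callable[[str], str], check: Callable[[str], bool]
) -> bool:
    """True if the new version passes fewer regression cases than the old one."""
    return pass_rate(new, generate, check) < pass_rate(old, generate, check)
```

Because model outputs vary between runs, a team would likely repeat each comparison several times or use more inputs before trusting a small difference in pass rate.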

Governance and Human-in-the-Loop (HITL)

A common misconception is that a “perfect” prompt removes the need for oversight. In reality, even highly structured workflows can still produce hallucinations, reasoning failures, or inconsistent outputs.

The 10% Audit Rule

Teams should manually review at least 10% of AI-generated outputs to detect Prompt Regression—situations where output quality gradually declines due to weak constraints, changing context, or workflow drift.
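Drawing the 10% sample at random, instead of reviewing whatever is convenient, keeps the audit from consistently missing one kind of task. A minimal sketch, assuming outputs are identified by hypothetical string IDs; the count is rounded up so that small batches still get at least one review.

```python
import random
from typing import Optional


def audit_sample(output_ids: list[str], percent: int = 10, seed: Optional[int] = None) -> list[str]:
    """Randomly pick at least `percent`% of outputs (rounded up) for manual review."""
    if not 0 < percent <= 100:
        raise ValueError("percent must be between 1 and 100")
    if not output_ids:
        return []
    # Integer ceiling division avoids float rounding surprises (e.g. 30 * 0.1).
    k = -(-len(output_ids) * percent // 100)
    rng = random.Random(seed)  # a fixed seed makes the selection reproducible
    return rng.sample(output_ids, k)


week = [f"newsletter-{i}" for i in range(30)]
print(audit_sample(week, seed=7))  # 3 of the 30 outputs
```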

Try This Now: The 5-Minute Governance Audit

Use this rapid checklist to evaluate workflow reliability:

- Constraint Check: Did the AI follow all mandatory rules, such as word limits or tone restrictions?

- Drift Detection: Does the output still match the intended Persona Anchor and communication style?

- Logic Verification: Were the required process steps followed in the correct order?

- Factual Accuracy: Are names, dates, links, and variables correctly integrated?

- Bias Review: Does the output remain neutral, inclusive, and policy-aligned?
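The checklist itself is a human judgment, but recording each review as pass/fail per item makes Prompt Regression visible as a rising failure rate over time. A minimal sketch; the short check keys and the `summarize_audits` helper are hypothetical labels for the five checklist items above.

```python
from collections import Counter

# Hypothetical keys for the five checklist items above.
CHECKS = ["constraint", "drift", "logic", "factual", "bias"]


def summarize_audits(reviews: list[dict[str, bool]]) -> dict[str, float]:
    """Return the failure rate per checklist item across reviewed outputs.

    Each review maps every check name to True (passed) or False (failed).
    """
    failures = Counter()
    for review in reviews:
        missing = [c for c in CHECKS if c not in review]
        if missing:
            raise ValueError(f"Review is missing checks: {missing}")
        for check in CHECKS:
            if not review[check]:
                failures[check] += 1
    if not reviews:
        return {}
    return {check: failures[check] / len(reviews) for check in CHECKS}
```

Comparing these rates week over week, for example after a model update, shows which part of the workflow is slipping rather than only that quality has dropped.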

Figure 4. A lightweight AI governance audit helps teams evaluate workflow reliability, operational consistency, and scalable prompting readiness.

Managing Systemic Bias

In operational environments, prompt bias scales quickly. A flawed instruction written by one employee can influence outputs across an entire department. This is why governance reviews should evaluate prompts for neutrality, compliance, and factual consistency before they enter a shared prompt library.

Even well-structured prompting systems still depend on reliable source data. Prompt engineering improves consistency, but it cannot replace domain expertise, human review, or factual verification. This broader pattern is part of a larger category of operational AI failures discussed in Why AI Tools Fail (Hidden Errors Most Users Miss).

Operational Case Study: From Chaos to Consistency

A mid-sized marketing team began noticing a recurring issue in its AI-assisted newsletter workflow. Some editions sounded overly formal, while others became unusually casual, despite targeting the same audience and using similar source material.

After reviewing the process, the problem became clear: employees were modifying the core prompt slightly every week. Small wording changes gradually weakened formatting consistency and diluted the company’s brand voice.

To stabilize the workflow, the team standardized three components:

- Persona Anchor: A fixed 200-word brand profile was added to every prompt.

- Format Locking: Outputs were restricted to a predefined Markdown structure.

- Variable Standardization: Employees could only change the “Weekly Topic” field while keeping the core template unchanged.
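The three fixes can be combined into a locked template in which the only argument is the weekly topic, plus a check that the output kept the predefined Markdown structure. This is an illustrative sketch, not the team's actual setup: the headings, the placeholder brand profile, and the helper names are assumptions.

```python
import re

# Placeholder for the fixed 200-word brand profile (hypothetical).
BRAND_PROFILE = "<fixed brand profile text>"

# Hypothetical locked Markdown structure for every newsletter.
REQUIRED_HEADINGS = ["## This Week", "## Why It Matters", "## What To Do Next"]

LOCKED_TEMPLATE = (
    "{brand_profile}\n\n"
    "Write this week's newsletter about: {weekly_topic}\n"
    "Use exactly these Markdown headings, in this order:\n{headings}"
)


def build_newsletter_prompt(weekly_topic: str) -> str:
    """The weekly topic is the only input employees can change."""
    return LOCKED_TEMPLATE.format(
        brand_profile=BRAND_PROFILE,
        weekly_topic=weekly_topic.strip(),
        headings="\n".join(REQUIRED_HEADINGS),
    )


def format_is_locked(markdown: str) -> bool:
    """Check that the output's level-2 headings match the locked structure exactly."""
    found = re.findall(r"^## .+$", markdown, flags=re.MULTILINE)
    return [h.rstrip() for h in found] == REQUIRED_HEADINGS
```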

The impact was immediate. Average revision time dropped from 45 minutes to 10 minutes per newsletter, and most cases of “brand drift” disappeared within the first month.

The transition, however, was not frictionless. Several employees initially resisted the structured templates because they felt less flexible than their personal prompting habits. Over time, consistency and reduced editing overhead outweighed those concerns.

Strategic Implementation: How to Start Tomorrow

If you are a manager looking to implement these core structural components, follow this three-day plan:

Day 1: The Audit. Ask every team member to share their three most-used prompts. Identify the inconsistencies.

Day 2: The Template. Pick the most common task. Rebuild it using the SCOPE framework.

Day 3: The Pilot. Have the entire team use the same new template for that task. Compare the outputs. You will likely find that 80% of the previous “quality issues” were actually “instruction issues.”

Final Thoughts: Reliability as a Competitive Advantage

Over the next decade, most organizations will have access to similar AI models. The real differentiator will be operational consistency: which teams can generate reliable outputs without constant human correction, workflow drift, or governance failures.

In practice, most AI failures inside organizations are not model failures—they are instruction failures. Teams that standardize prompting systems, audit outputs regularly, and reduce workflow ambiguity will scale faster than teams relying on individual experimentation.

The long-term advantage is not simply using AI. It is building operational workflows where quality remains stable regardless of which employee initiates the task. If you are new to workflow design concepts, start with What Is an AI Workflow? (Simple Explanation + Real Example).

Frequently Asked Questions (FAQ)

Why do teams get inconsistent AI results?

Teams often receive inconsistent AI outputs because employees use different prompting styles, instructions, and formatting rules. Without standardized templates and governance, the AI interprets tasks differently for each user, leading to variations in tone, accuracy, and structure.

How can businesses standardize AI prompts?

Most organizations standardize prompting by building shared templates, defining approval rules, and storing high-performing prompts in a centralized library accessible across departments.

What is the difference between personal prompting and team prompting?

Personal prompting is usually informal and depends heavily on individual experience. Team prompting requires structured instructions, standardized workflows, governance rules, and repeatable formatting so multiple employees can generate consistent outputs.

Can structured prompts reduce AI hallucinations?

Structured prompts can reduce hallucinations by improving clarity, limiting ambiguity, and guiding the AI through step-by-step reasoning processes. However, structured prompting cannot completely eliminate factual errors or reasoning failures, especially in high-stakes workflows.

What is the SCOPE framework in AI prompting?

The SCOPE framework is a structured methodology for operational AI prompting. It stands for:

- System Role

- Constraints

- Output Format

- Process Logic

- Evaluation Criteria

The framework helps teams create reliable, repeatable, and scalable AI workflows.

Resources & Further Reading

To deepen your understanding of operational prompt design and AI governance, we recommend exploring the following industry-standard documentation:

- OpenAI Prompt Engineering Best Practices: A comprehensive guide on strategies to get better results from large language models.

- Anthropic Prompt Engineering Documentation: Detailed insights into building reliable workflows specifically with Claude and other advanced models.

- NIST AI Risk Management Framework: The gold standard for managing risks and ensuring the reliability of artificial intelligence systems in enterprise environments.

- Microsoft Prompt Engineering Guidance: Technical documentation on maximizing the performance and safety of AI deployments at scale.