Introduction

AI tool architecture refers to the structured arrangement of technical components that collectively enable artificial intelligence systems to process data, perform computational inference, and generate outputs within defined operational boundaries. Rather than functioning as monolithic systems, AI tools are typically organized into layered modules that separate data handling, model computation, inference processes, output formatting, and oversight mechanisms. A broader definitional framing of AI tools appears in AI Tools Explained — Conceptual Foundations, System Logic, and Institutional Context.

Understanding AI tool architecture requires examining how these layers interact, how dependencies propagate across components, and how structural constraints influence system behavior. This explainer presents a conceptual analysis of architectural layers and functional dependencies without addressing implementation practices or deployment recommendations.

Conceptual Overview of AI Tool Architecture

AI tools are commonly described as layered computational systems. Each layer performs a distinct function within a coordinated structural arrangement. Architectural design determines how information flows from input to output and how system limitations emerge from structural decisions.

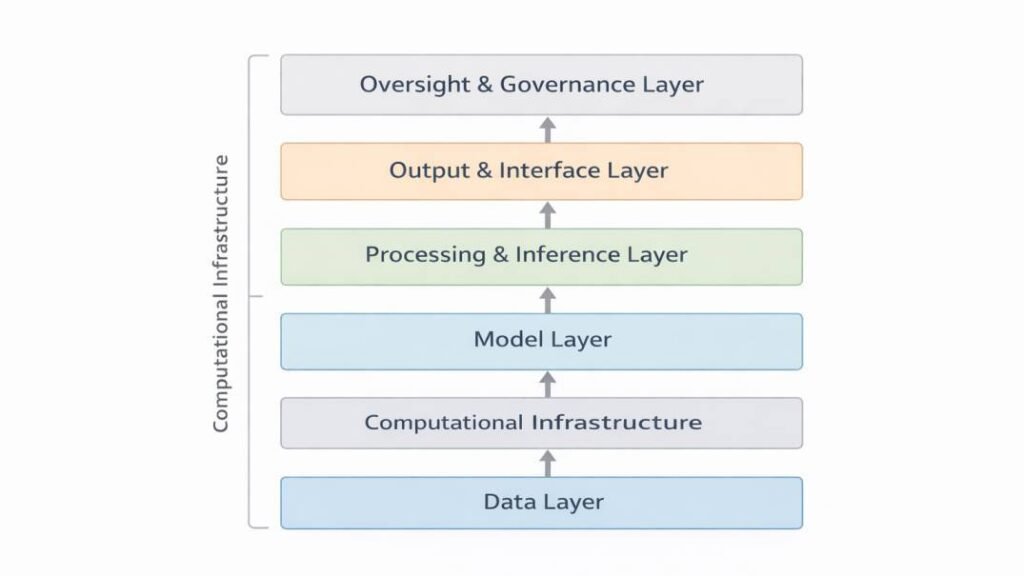

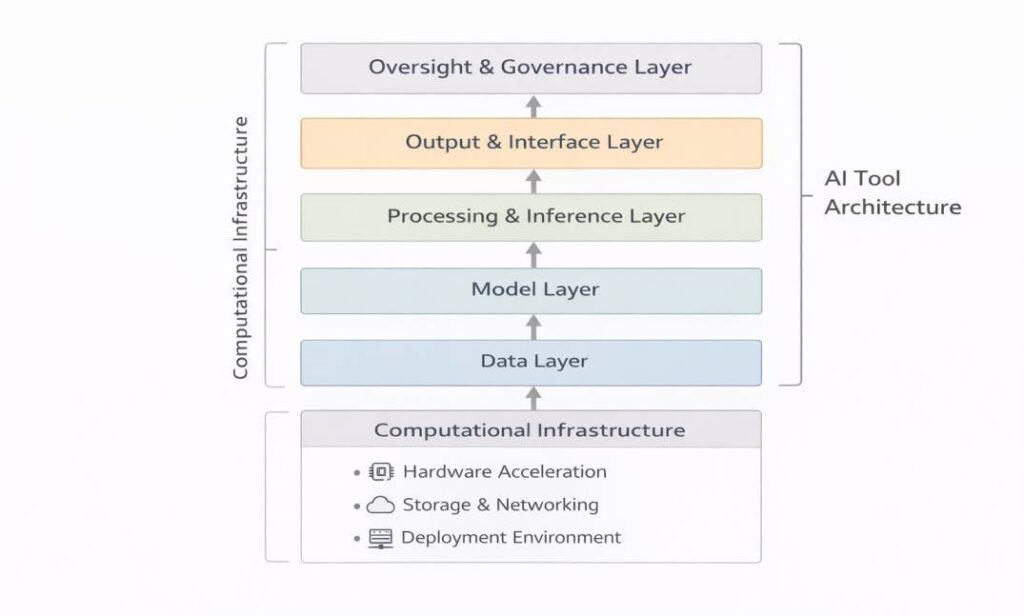

At a high level, AI tool architecture may be decomposed into the following core layers:

- Data Layer

- Model Layer

- Processing and Inference Layer

- Output and Interface Layer

- Oversight and Governance Layer

These layers operate within computational infrastructure that supports execution and coordination. A component-focused breakdown of these structural layers is examined in Core Structural Components of AI Tools.
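The layers listed above can be sketched as a chain of functions, each consuming the previous layer's output. This is a minimal illustration that assumes a toy one-feature binary classifier. Every function name (`data_layer`, `model_layer`, and so on), the fixed weights, the normalization statistics, and the record format are hypothetical and are not drawn from any particular system.

```python
import math
from dataclasses import dataclass, field

# Hypothetical five-layer pipeline for a toy binary classifier.
# All names, weights, and statistics below are illustrative assumptions.

@dataclass
class AuditLog:
    events: list = field(default_factory=list)

def data_layer(raw: dict, mean: float, std: float) -> float:
    """Validate and normalize one input record (single numeric feature 'x')."""
    if "x" not in raw:
        raise ValueError("record is missing required field 'x'")
    return (float(raw["x"]) - mean) / std

def model_layer(feature: float, weight: float = 2.0, bias: float = -0.5) -> float:
    """Parameterized model: a linear score using assumed learned parameters."""
    return weight * feature + bias

def inference_layer(score: float) -> float:
    """Convert the score into a probability with the logistic function."""
    return 1.0 / (1.0 + math.exp(-score))

def output_layer(prob: float, threshold: float = 0.5) -> dict:
    """Render the probability as a label plus a confidence score."""
    label = "positive" if prob >= threshold else "negative"
    confidence = prob if label == "positive" else 1.0 - prob
    return {"label": label, "confidence": round(confidence, 3)}

def governance_layer(raw: dict, result: dict, log: AuditLog) -> dict:
    """Record the input-output pair so behavior can be audited later."""
    log.events.append({"input": raw, "output": result})
    return result

def run_pipeline(raw: dict, log: AuditLog) -> dict:
    feature = data_layer(raw, mean=10.0, std=2.0)  # assumed training statistics
    prob = inference_layer(model_layer(feature))
    return governance_layer(raw, output_layer(prob), log)

log = AuditLog()
print(run_pipeline({"x": 12.0}, log))  # → {'label': 'positive', 'confidence': 0.818}
print(len(log.events))                 # → 1
```

Keeping each layer behind its own function boundary mirrors the conceptual separation: a change in normalization statistics, for example, alters the output without any edit to the model or output code, which is the kind of cross-layer dependency discussed later in this explainer.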

1. Data Layer: Foundational Input Structures

The data layer forms the structural foundation of AI tools. It governs how information is collected, formatted, stored, and prepared for model processing.

Core Functions of the Data Layer

- Data ingestion from structured or unstructured sources

- Preprocessing and normalization

- Feature extraction and transformation

- Dataset partitioning for training and evaluation

- Validation of input consistency
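Three of the data-layer functions listed above (validation, normalization, and dataset partitioning) can be sketched in a few lines. This sketch assumes numeric records stored as dictionaries and z-score normalization fitted on the training split only. The field names, the 75/25 split ratio, and the fixed random seed are illustrative assumptions.

```python
import random
import statistics

def validate(records, required=("age", "income")):
    """Keep only records whose required fields are present and numeric."""
    return [r for r in records
            if all(isinstance(r.get(k), (int, float)) for k in required)]

def partition(records, train_fraction=0.75, seed=0):
    """Shuffle with a fixed seed, then split into training and evaluation sets."""
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * train_fraction)
    return shuffled[:cut], shuffled[cut:]

def fit_normalizer(train, field):
    """Estimate mean and standard deviation from the training split only."""
    values = [r[field] for r in train]
    return statistics.mean(values), statistics.pstdev(values)

def normalize(records, field, mean, std):
    """Apply training-split statistics (z-score) to any split."""
    scale = std if std > 0 else 1.0  # guard against a constant feature
    return [{**r, field: (r[field] - mean) / scale} for r in records]

# Hypothetical records: 20 complete ones plus one with a missing value.
raw = [{"age": a, "income": 1000 * a} for a in range(20, 40)]
raw.append({"age": None, "income": 5000})

clean = validate(raw)
train, evaluation = partition(clean)
mean, std = fit_normalizer(train, "age")
train_n = normalize(train, "age", mean, std)
eval_n = normalize(evaluation, "age", mean, std)
print(len(clean), len(train), len(evaluation))  # → 20 15 5
```

Fitting the normalization statistics on the training split and reusing them for evaluation keeps information from the evaluation set out of the preparation step, so evaluation results reflect how the model would treat unseen data.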

Data decisions influence model behavior because model parameters encode statistical relationships derived from the available training distributions. Constraints at this layer may limit what the model can learn to represent, propagate bias into learned parameters, and narrow the range of inputs on which inference remains reliable.

The data layer is not limited to initial model training. During deployment, real-time input data passes through structured pipelines to ensure conformity with system specifications. Inconsistent formatting, incomplete records, or distribution shifts at this stage may affect downstream inference behavior.
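At deployment time, the same layer can check each incoming record against the specification established during training. The sketch below assumes a hypothetical schema listing expected fields with the value ranges observed in training data. Incomplete records are rejected, and values outside the training range are flagged rather than passed downstream silently.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class FieldSpec:
    name: str
    low: float   # minimum value observed in training data (assumed)
    high: float  # maximum value observed in training data (assumed)

# Hypothetical specification; a real system would derive it from training data.
SPEC = (FieldSpec("age", 18.0, 90.0), FieldSpec("income", 0.0, 250_000.0))

def check_record(record: dict, spec=SPEC) -> dict:
    """Return a status for one live record: 'rejected', 'flagged', or 'ok'."""
    missing = [f.name for f in spec if record.get(f.name) is None]
    if missing:
        return {"status": "rejected", "missing": missing}
    out_of_range = [
        f.name for f in spec if not (f.low <= float(record[f.name]) <= f.high)
    ]
    if out_of_range:
        # Values outside the training range may indicate a distribution shift.
        return {"status": "flagged", "out_of_range": out_of_range}
    return {"status": "ok"}

print(check_record({"age": 35, "income": 52_000}))   # → {'status': 'ok'}
print(check_record({"age": 35}))                     # → {'status': 'rejected', 'missing': ['income']}
print(check_record({"age": 104, "income": 52_000}))  # → {'status': 'flagged', 'out_of_range': ['age']}
```

A range check of this kind only catches the most visible shifts; subtler changes in how values are distributed within the valid range would need statistical monitoring of the sort described in the governance layer.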

2. Model Layer: Representational and Computational Core

The model layer serves as the computational engine of the AI tool. It contains algorithmic structures that transform processed data into internal representations and probabilistic outputs. The distinction between model artifacts and system-level implementations is examined in AI Tools vs Machine Learning Models.

Structural Characteristics

- Parameterized architectures (e.g., neural networks, probabilistic models)

- Encoded statistical relationships derived from training data

- Representational capacity shaped by depth, connectivity, and parameter scale

- Model constraints determined by architectural design choices

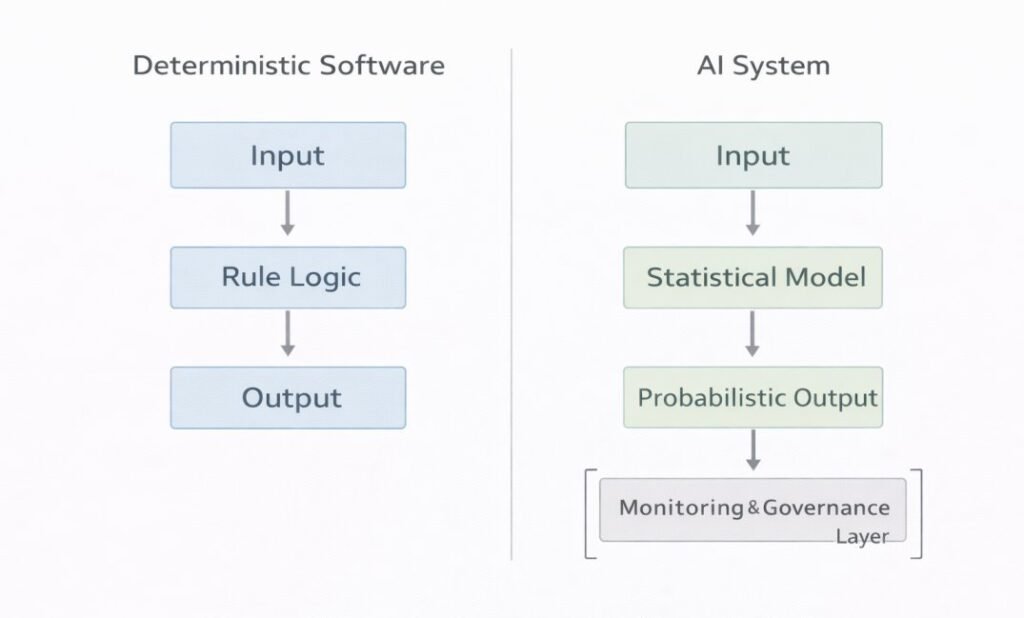

Models do not store explicit rule sets in the traditional sense. Instead, they encode statistical approximations that reflect learned correlations within data distributions. A structural distinction between statistical learning systems and rule-based software is examined in How AI Tools Differ from Traditional Software Systems.
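The contrast between explicit rules and learned statistical approximations can be made concrete with a very small model. The sketch below fits a one-feature logistic regression by batch gradient descent on a hypothetical toy dataset. The data, learning rate, and epoch count are illustrative assumptions. No decision rule is written anywhere in the code; the model's behavior lives entirely in the two learned parameters `w` and `b`.

```python
import math

def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))

def fit_logistic(xs, ys, lr=0.5, epochs=5000):
    """Fit weight and bias by batch gradient descent on the log-loss."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in zip(xs, ys):
            err = sigmoid(w * x + b) - y  # derivative of log-loss w.r.t. the score
            grad_w += err * x
            grad_b += err
        w -= lr * grad_w / n
        b -= lr * grad_b / n
    return w, b

# Toy data (assumed): larger x tends to mean label 1, but the classes overlap.
xs = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5, 4.0]
ys = [0,   0,   0,   1,   0,   1,   1,   1]

w, b = fit_logistic(xs, ys)
print(f"w={w:.2f}, b={b:.2f}, decision boundary at x={-b / w:.2f}")
print(f"P(y=1 | x=3.8) = {sigmoid(w * 3.8 + b):.2f}")
```

Because the two classes overlap around x = 2 to 2.5, the fitted model does not reproduce any clean rule; it encodes a graded probability that reflects the correlations present in these particular examples.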

The structure of the model layer determines:

- Expressive capacity

- Generalization boundaries

- Sensitivity to data variation

- Interpretability characteristics

Architectural complexity may influence how easily the model's internal computations can be examined or traced.

3. Processing and Inference Layer: Functional Transformation

The processing and inference layer governs how trained models apply learned parameters to new inputs.

Inference as Probabilistic Computation

Inference involves:

- Evaluating incoming data against encoded patterns

- Assigning likelihoods or probability distributions

- Producing classifications, rankings, predictions, or generated content

Outputs represent probabilistic approximations rather than deterministic conclusions. This probabilistic foundation distinguishes AI tools from rule-based systems that execute predefined decision trees. A structural comparison of inference-based and rule-based systems appears in AI Tools vs Automation Tools.
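The inference steps listed above can be illustrated with a softmax over class scores: the model's raw scores for a new input are converted into a probability distribution, from which both a classification (the most probable label) and a ranking (labels ordered by probability) are derived. The class names and raw scores are hypothetical placeholders for what a trained model might produce.

```python
import math

def softmax(scores: dict) -> dict:
    """Convert raw class scores into a probability distribution."""
    m = max(scores.values())  # subtract the max for numerical stability
    exps = {k: math.exp(v - m) for k, v in scores.items()}
    total = sum(exps.values())
    return {k: v / total for k, v in exps.items()}

# Hypothetical raw scores a trained model might assign to one input.
scores = {"invoice": 2.1, "receipt": 1.3, "contract": -0.4}
probs = softmax(scores)

ranking = sorted(probs, key=probs.get, reverse=True)  # ranking output
label = ranking[0]                                     # classification output
for name in ranking:
    print(f"{name}: {probs[name]:.3f}")
print("predicted:", label)
```

Here the model assigns only about 65% probability to its top label, so the classification is best read as the most likely outcome under the learned parameters rather than a deterministic conclusion.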

Inference behavior depends on:

- Model calibration

- Input distribution alignment

- Parameter stability

- Computational resource availability

Latency constraints, memory allocation, and parallelization structures may influence real-time inference performance.
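Model calibration, listed among the dependencies above, asks whether predicted probabilities match observed frequencies. One way to measure it, shown here as an assumption rather than a prescribed method, is a binned calibration error similar in spirit to expected calibration error (ECE): binary predictions are grouped into probability bins, and the gap between average predicted probability and observed positive rate in each bin is averaged, weighted by bin size. The predictions and outcomes below are hypothetical.

```python
def binned_calibration_error(probs, outcomes, n_bins=5):
    """Weighted mean of |avg predicted probability - observed positive rate| per bin."""
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(probs, outcomes):
        idx = min(int(p * n_bins), n_bins - 1)  # p == 1.0 goes in the last bin
        bins[idx].append((p, y))
    total = len(probs)
    error = 0.0
    for b in bins:
        if not b:
            continue
        avg_p = sum(p for p, _ in b) / len(b)
        rate = sum(y for _, y in b) / len(b)
        error += (len(b) / total) * abs(avg_p - rate)
    return error

# Same predicted probabilities, two hypothetical sets of observed outcomes.
predicted = [0.9] * 10 + [0.1] * 10
calibrated = binned_calibration_error(predicted, [1] * 9 + [0] + [1] + [0] * 9)
overconfident = binned_calibration_error(predicted, [1] * 6 + [0] * 4 + [1] * 4 + [0] * 6)
print(f"{calibrated:.2f} {overconfident:.2f}")  # → 0.00 0.30
```

The model's outputs are identical in both cases; only their agreement with observed outcomes differs. Calibration therefore cannot be read off the probabilities alone and has to be checked against data, which is one reason it depends on input distribution alignment.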

4. Output and Interface Layer: External Representation

The output layer translates internal computational states into externally interpretable formats.

Output Structures May Include:

- Probability scores

- Categorical labels

- Generated text

- Numerical forecasts

- Structured data objects

Interface components act as boundary mechanisms between internal computation and external systems. They manage:

- Data transmission

- Visualization

- API-based integration

- Logging of output events

The format of outputs influences how results are interpreted within organizational workflows. For example, confidence scores may accompany predictions to indicate the model's estimated uncertainty. Workflow positioning is examined conceptually in What Is an AI Workflow?

This layer does not generate knowledge independently; rather, it renders statistically derived results into communicable forms.
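The output and interface functions described above can be sketched as a small structured record that carries a label, its confidence score, and the metadata needed for logging and API transmission. The field names, the `model_version` string, and the use of JSON serialization are illustrative assumptions rather than a standard format.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone

@dataclass
class PredictionRecord:
    label: str
    confidence: float   # model-estimated probability of the label
    model_version: str  # hypothetical identifier supporting traceability
    timestamp: str

def render_output(probs: dict, model_version: str = "demo-0.1") -> PredictionRecord:
    """Turn a probability distribution into an externally interpretable record."""
    label = max(probs, key=probs.get)
    return PredictionRecord(
        label=label,
        confidence=round(probs[label], 3),
        model_version=model_version,
        timestamp=datetime.now(timezone.utc).isoformat(),
    )

record = render_output({"approve": 0.72, "review": 0.28})
payload = json.dumps(asdict(record))  # e.g. for an API response or an output log
print(payload)
```

The record adds no new information to the model's result; it packages the same probability in a form that downstream systems and reviewers can consume and trace.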

5. Oversight and Governance Layer: Control and Accountability Structures

Beyond technical processing layers, AI tool architecture frequently incorporates embedded oversight mechanisms. These mechanisms operate as structural controls that monitor system behavior, evaluate performance boundaries, and support institutional accountability.

Governance-Related Structures

- Human-in-the-loop validation checkpoints

- Monitoring systems tracking model performance over time

- Logging mechanisms documenting input–output interactions

- Audit frameworks assessing behavior across varying operational contexts

- Documentation of model assumptions, limitations, and training scope

Rather than functioning as external add-ons, these elements are commonly integrated within the architecture itself. Governance structures may interact with data validation pipelines, inference monitoring systems, and output review processes to maintain traceability across layers.
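One way governance structures can interact with output review is a human-in-the-loop checkpoint that routes low-confidence outputs to a reviewer while logging every decision. The sketch assumes a fixed confidence threshold of 0.8 and in-memory lists for the review queue and audit log; both are hypothetical choices made for illustration.

```python
from dataclasses import dataclass, field

@dataclass
class ReviewCheckpoint:
    threshold: float = 0.8  # assumed minimum confidence for automatic release
    review_queue: list = field(default_factory=list)
    audit_log: list = field(default_factory=list)

    def route(self, item_id: str, label: str, confidence: float) -> str:
        """Release confident outputs; hold the rest for human review."""
        decision = "released" if confidence >= self.threshold else "held_for_review"
        if decision == "held_for_review":
            self.review_queue.append((item_id, label, confidence))
        # Every decision is logged, keeping input-output interactions traceable.
        self.audit_log.append({"id": item_id, "label": label,
                               "confidence": confidence, "decision": decision})
        return decision

checkpoint = ReviewCheckpoint()
print(checkpoint.route("a1", "approve", 0.93))                  # → released
print(checkpoint.route("a2", "approve", 0.61))                  # → held_for_review
print(len(checkpoint.review_queue), len(checkpoint.audit_log))  # → 1 2
```

Because the checkpoint sits between the output layer and downstream use, it illustrates how oversight can be built into the architecture itself rather than applied afterward.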

In probabilistic systems, outputs are conditioned on statistical patterns rather than deterministic rule execution. As a result, performance may vary with data distribution changes, contextual shifts, or evolving operational conditions. Ongoing monitoring therefore becomes an architectural requirement rather than a discretionary feature.
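Ongoing monitoring of this kind can be sketched as a rolling comparison between recent accuracy and a baseline measured at deployment. The window size, baseline, and tolerance below are assumptions chosen for illustration, and the sketch assumes ground-truth outcomes eventually become available; a fuller monitor would likely track input statistics as well, not accuracy alone.

```python
from collections import deque

class AccuracyDriftMonitor:
    """Flag drift when rolling accuracy falls below baseline minus a tolerance."""

    def __init__(self, baseline: float, tolerance: float = 0.05, window: int = 50):
        self.baseline = baseline
        self.tolerance = tolerance
        self.results = deque(maxlen=window)  # keeps only the most recent outcomes

    def record(self, prediction, actual) -> None:
        self.results.append(prediction == actual)

    def rolling_accuracy(self) -> float:
        return sum(self.results) / len(self.results) if self.results else float("nan")

    def drift_detected(self) -> bool:
        # Require a full window before judging, to avoid noisy early alarms.
        if len(self.results) < self.results.maxlen:
            return False
        return self.rolling_accuracy() < self.baseline - self.tolerance

monitor = AccuracyDriftMonitor(baseline=0.90, window=50)
for i in range(50):  # stable period: 46 of 50 correct (92%)
    monitor.record(1, 0 if i < 4 else 1)
print(monitor.drift_detected())  # → False
for i in range(50):  # shifted period: 40 of 50 correct (80%)
    monitor.record(1, 0 if i < 10 else 1)
print(round(monitor.rolling_accuracy(), 2), monitor.drift_detected())  # → 0.8 True
```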

Institutional risk management frameworks, including the NIST Artificial Intelligence Risk Management Framework (AI RMF 1.0), conceptualize oversight as a continuous process embedded throughout system lifecycles (NIST, 2023). Within layered AI architectures, governance mechanisms support detection of performance drift, documentation of uncertainty, and evaluation of model behavior under shifting inputs. Governance boundary distinctions between system-level tools and analytical artifacts are examined in AI Tools vs AI Models.

Unlike traditional deterministic software, where behavior can often be traced directly through predefined logic, AI tools may require iterative assessment because of their data-dependent and probabilistic foundations.

Cross-Layer Functional Dependencies

AI tool architecture is defined not only by discrete functional layers but also by the structural dependencies that connect them. System behavior emerges from conditional relationships between components rather than from isolated modules.

Examples of Structural Dependencies

- Data imbalance may influence model generalization boundaries.

- Model complexity may increase computational demand and resource allocation requirements.

- Infrastructure limits may constrain model size, memory usage, or inference latency.

- Output design may affect interpretability within organizational workflows.

- Monitoring systems may detect performance drift originating from shifts in input distributions.

These examples illustrate that architectural layers operate within conditional dependencies, where decisions in one layer influence performance and constraint boundaries in adjacent layers. Process-level placement of these dependencies is discussed in How AI Tools Are Positioned Within Workflows.

Architectural trade-offs are therefore not confined to single components; they propagate through the system as linked dependencies.

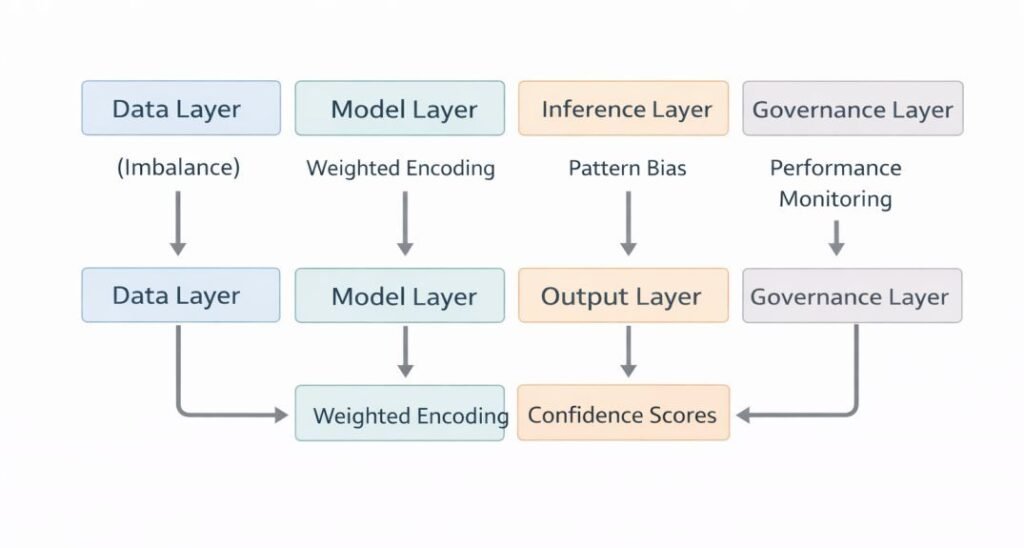

Illustrative Dependency Flow

Consider a scenario in which training data contains a disproportionate representation of a particular category.

At the data layer, this imbalance shapes feature distributions and available statistical signals.

At the model layer, learned parameters encode these asymmetries as weighted relationships within internal representations.

During inference, predictions may reflect those encoded weightings, producing outputs that systematically favor dominant patterns.

At the output layer, confidence scores may appear numerically stable, even when representational skew influences outcome distributions.

Within the governance layer, monitoring systems may identify differential performance across subgroups, triggering evaluation of upstream data composition.

This sequence demonstrates that layers are analytically separable but operationally dependent during deployment. Performance characteristics and governance responses are shaped by distributed structural conditions rather than by any single component.
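The governance step in this sequence, identifying differential performance across subgroups, can be sketched as a per-group accuracy breakdown. The evaluation records are hypothetical and constructed so that an overrepresented group A dominates the aggregate figure; the 0.1 disparity threshold is likewise an assumption.

```python
from collections import defaultdict

def accuracy_by_group(records):
    """records: iterable of (group, prediction, actual) tuples."""
    correct, total = defaultdict(int), defaultdict(int)
    for group, pred, actual in records:
        total[group] += 1
        correct[group] += int(pred == actual)
    return {g: correct[g] / total[g] for g in total}

# Hypothetical evaluation set in which group A is heavily overrepresented.
records = ([("A", 1, 1)] * 85 + [("A", 1, 0)] * 5 +  # A: 85 of 90 correct
           [("B", 1, 1)] * 6 + [("B", 1, 0)] * 4)    # B: 6 of 10 correct

overall = sum(p == a for _, p, a in records) / len(records)
per_group = accuracy_by_group(records)
print(f"overall: {overall:.2f}")                           # → overall: 0.91
print({g: round(acc, 2) for g, acc in per_group.items()})  # → {'A': 0.94, 'B': 0.6}

gap = max(per_group.values()) - min(per_group.values())
if gap > 0.1:  # assumed disparity threshold
    print("disparity detected: review upstream data composition")
```

The aggregate accuracy looks strong because group A supplies 90% of the records; only the per-group breakdown exposes the weaker performance on group B that traces back to the data layer.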

Infrastructure as Enabling Environment

AI tools operate within computational infrastructure that supports:

- Model loading and execution

- Memory management

- Hardware acceleration (e.g., GPUs or specialized processors)

- Task scheduling

- Scalable deployment environments

Infrastructure limitations define operational boundaries, including throughput capacity, latency thresholds, and energy consumption constraints.

These factors influence architectural trade-offs between model size, speed, and deployment feasibility.
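The trade-off between model size and deployment feasibility can be illustrated with simple arithmetic: storing a model's weights takes roughly the number of parameters multiplied by the bytes used per parameter. The sketch ignores activations, caches, and runtime overhead, so actual memory needs are higher; the parameter counts are illustrative and do not describe any specific model.

```python
BYTES_PER_PARAM = {"float32": 4, "float16": 2, "int8": 1}

def weight_memory_gb(n_params: int, dtype: str = "float32") -> float:
    """Approximate memory for model weights alone, in gigabytes (10**9 bytes)."""
    return n_params * BYTES_PER_PARAM[dtype] / 1e9

for n_params in (350_000_000, 7_000_000_000):
    sizes = ", ".join(f"{d}: {weight_memory_gb(n_params, d):.2f} GB" for d in BYTES_PER_PARAM)
    print(f"{n_params:,} params -> {sizes}")
```

For a 7-billion-parameter model this gives 28 GB at 32-bit precision and 14 GB at 16-bit precision for the weights alone, which shows how numeric precision and parameter count jointly determine whether a model fits a given hardware budget.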

Structural Boundaries and System Constraints

AI tool architecture establishes structural boundary conditions:

- Bounded training scope

- Finite representational capacity

- Sensitivity to distribution shifts

- Dependency on computational resources

Outputs remain conditioned by training data characteristics and architectural constraints. Structural uncertainty arises from the probabilistic nature of model inference and the incompleteness of real-world data coverage.

Understanding these boundaries supports clearer interpretation of system behavior without attributing autonomous reasoning capabilities to the tool itself.

Architectural Comparison with Deterministic Systems

Traditional software architecture is commonly structured around explicit procedural logic, where behavior is traceable through predefined rules. AI tool architecture differs structurally because its internal decision processes depend on learned statistical parameters rather than fully transparent decision pathways. This distinction aligns with terminology and system framing described in ISO/IEC 22989:2022, which differentiates statistical learning systems from rule-based software structures (ISO, 2022).

In deterministic systems:

- Inputs map to predefined outputs through coded logic.

In AI systems:

- Inputs are evaluated through statistical pattern recognition mechanisms.

This distinction affects explainability, traceability, and governance requirements across digital environments.
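The contrast can be sketched side by side. The rule-based function maps inputs to outputs through a threshold written by a developer, so its behavior is traceable line by line. The learned version derives its threshold from labeled examples, so its behavior depends on the data it was given. Both functions and the toy data are hypothetical, and the threshold search that minimizes training errors is a simple stand-in for statistical training in general.

```python
def rule_based(temperature: float) -> str:
    # Explicit, developer-written logic: fully traceable.
    return "alert" if temperature > 30.0 else "normal"

def learn_threshold(examples):
    """Pick the candidate threshold with the fewest errors on labeled examples."""
    candidates = sorted(t for t, _ in examples)

    def errors(th):
        return sum((t > th) != (label == "alert") for t, label in examples)

    return min(candidates, key=errors)

# Hypothetical labeled examples.
examples = [(22.0, "normal"), (25.0, "normal"), (27.0, "alert"),
            (29.0, "alert"), (31.0, "alert")]
threshold = learn_threshold(examples)


def learned(temperature: float) -> str:
    # Behavior depends on the threshold inferred from the examples above.
    return "alert" if temperature > threshold else "normal"


print(threshold)                        # → 25.0
print(rule_based(28.0), learned(28.0))  # → normal alert
```

The same input produces different outputs: the rule-based answer can be explained by reading the code, while the learned answer can only be explained by referring back to the training examples that set the threshold.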

Why Architectural Decomposition Matters

Architectural decomposition is commonly used in academic and institutional analysis to:

- Isolate technical dependencies

- Examine error propagation

- Assess interpretability limits

- Evaluate governance needs

- Clarify accountability distribution

By separating layers conceptually, analysts can examine how reliability, bias, and uncertainty originate within specific structural components.

Table: Layered Architecture Summary

| Layer | Core Function | Key Constraints | Dependency Impact |
|---|---|---|---|
| Data Layer | Ingestion, preprocessing, validation | Data quality, imbalance, distribution shifts | Shapes model learning boundaries |
| Model Layer | Statistical pattern encoding | Finite representational capacity | Influences downstream inference behavior |
| Inference Layer | Probabilistic evaluation of new inputs | Calibration, latency, resource limits | Affects output stability |
| Output Layer | External representation of results | Interpretability limits | Shapes workflow integration |
| Governance Layer | Monitoring, audit, accountability | Oversight requirements, compliance structures | Detects and manages drift |

Conclusion

AI tool architecture is structured as a layered system composed of data pipelines, model frameworks, inference processes, output interfaces, computational infrastructure, and embedded governance mechanisms. Each layer performs a distinct function within a bounded yet coordinated architectural system.

System behavior emerges from the interaction of architectural design choices, dataset characteristics, computational resource constraints, and oversight structures. Outputs do not constitute autonomous reasoning; rather, they represent probabilistic inferences generated within bounded technical and statistical conditions.

Viewing AI tools through layered architectural decomposition clarifies how information moves across components, how constraints propagate through the system, and how governance mechanisms support accountability in probabilistic environments. This perspective situates AI tools within engineered socio-technical systems shaped by design parameters, data distributions, and institutional oversight frameworks.

References

ISO/IEC 22989:2022

Artificial Intelligence — Concepts and Terminology.

International Organization for Standardization (ISO).

https://www.iso.org/standard/74296.html

ISO/IEC 23053:2022

Framework for Artificial Intelligence (AI) Systems Using Machine Learning (ML).

International Organization for Standardization (ISO).

https://www.iso.org/standard/74438.html

ISO/IEC 23894:2023

Information Technology — Artificial Intelligence — Risk Management.

International Organization for Standardization (ISO).

https://www.iso.org/standard/77304.html

ISO/IEC 5338:2023

Artificial Intelligence — AI System Life Cycle Processes.

International Organization for Standardization (ISO).

https://www.iso.org/standard/81183.html

National Institute of Standards and Technology (NIST). (2023).

Artificial Intelligence Risk Management Framework (AI RMF 1.0).

https://www.nist.gov/itl/ai-risk-management-framework

Organisation for Economic Co-operation and Development (OECD). (2019, updated 2022).

OECD Principles on Artificial Intelligence.

https://oecd.ai/en/ai-principles

Russell, S., & Norvig, P. (2021).

Artificial Intelligence: A Modern Approach (4th ed.). Pearson.

https://www.pearson.com/en-us/subject-catalog/p/artificial-intelligence-a-modern-approach/P200000003482