Introduction

Inference in AI tools refers to the computational process through which a trained model evaluates new input data and generates outputs based on previously learned statistical patterns. Unlike rule-based software systems that execute predefined instructions, AI systems apply encoded parameters to incoming data in order to produce probabilistic results.

Inference does not involve retraining or modification of model parameters. Instead, it represents the operational phase in which stored representations are applied to new inputs within defined architectural and computational constraints.

Understanding inference clarifies how AI-generated outputs emerge and how statistical uncertainty is expressed within model computations.

This article presents a structural and conceptual explanation of inference as it occurs within AI tools. It does not cover production implementation or optimization techniques.

Inference as a Conditional Mapping Function

From a computational perspective, inference may be understood as a conditional mapping process.

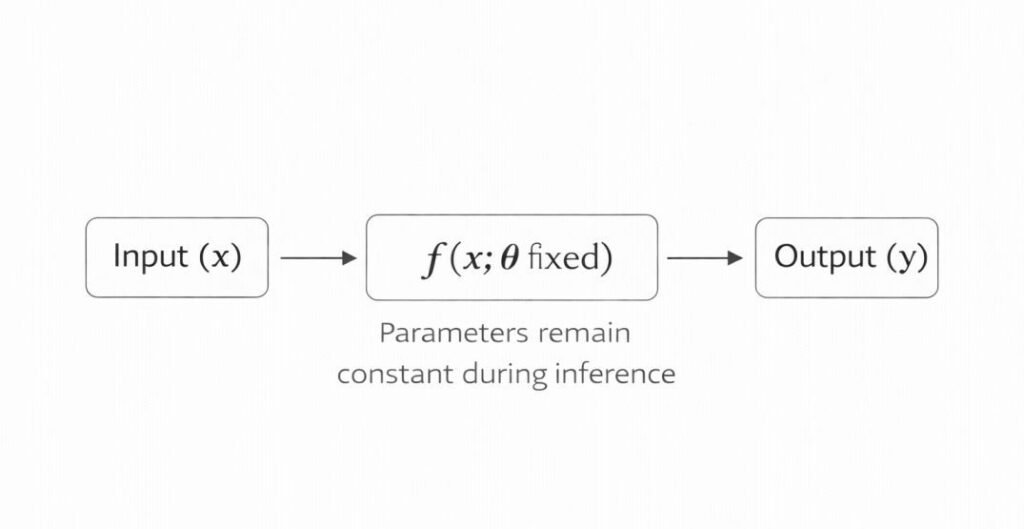

A trained model represents a function:

f(x; θ)

Where:

- x represents input data

- θ (theta) represents fixed model parameters

- f represents the transformation defined by the architecture

During inference:

- The input x is evaluated through f

- Parameters θ remain constant

- The output y = f(x; θ) is computed

This formulation emphasizes that inference is not adaptive. It does not revise θ. It computes outputs by applying a fixed mapping function established during training.
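The fixed-mapping view can be illustrated with a minimal sketch. The parameter values and the linear form of `f` below are assumptions chosen for illustration; the read-only mapping stands in for the idea that θ is not revised during inference.

```python
from types import MappingProxyType

# Hypothetical fixed parameters (theta), as if produced by an earlier training run.
# MappingProxyType gives a read-only view, mirroring "parameters remain constant".
THETA = MappingProxyType({"weights": (0.5, -0.25), "bias": 0.1})


def f(x, theta=THETA):
    """Apply the fixed mapping y = f(x; theta): a weighted sum plus a bias."""
    return sum(w * xi for w, xi in zip(theta["weights"], x)) + theta["bias"]


# 0.5 * 2.0 + (-0.25) * 4.0 + 0.1
y = f((2.0, 4.0))
print(y)
```

Calling `f` repeatedly with the same input returns the same value, and any attempt to assign into `THETA` raises a `TypeError`.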

The distinction between parameter learning and parameter application is foundational to understanding AI system behavior.

Inference Within AI Tool Architecture

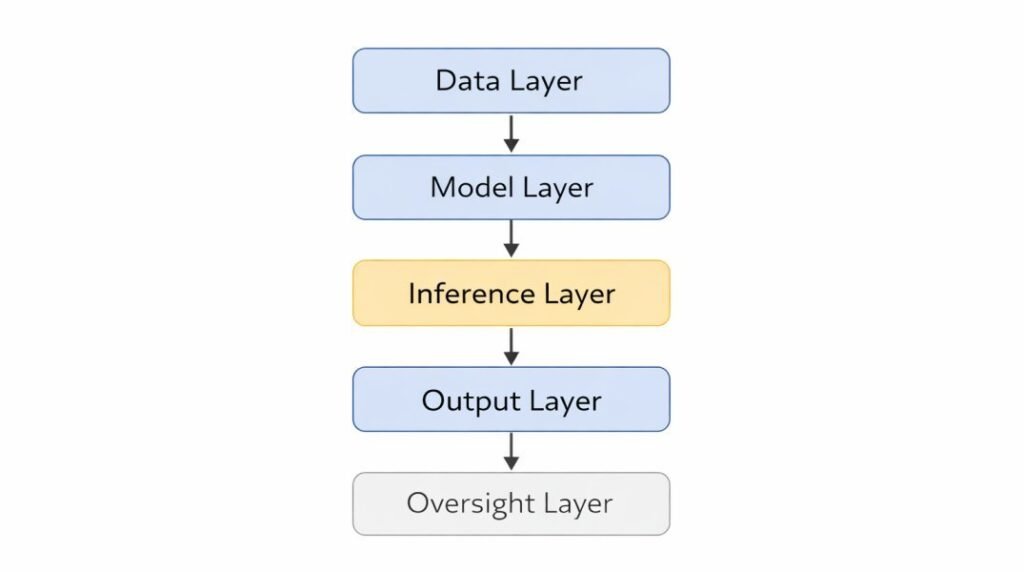

Within layered AI system design, inference occupies a distinct operational layer situated between the model layer and the output interface layer.

As discussed in AI Tool Architecture Explained, AI tools are structured into coordinated layers that include:

- Data layer

- Model layer

- Processing and inference layer

- Output and interface layer

- Oversight and governance layer

Inference occurs after:

- Input data is validated and formatted within the data layer.

- A trained model containing learned statistical parameters is loaded into computational infrastructure.

The inference layer governs how the model applies its encoded parameters to new inputs.

Inference therefore represents the functional transformation stage within AI tool architecture. It connects learned statistical representations to externally visible outputs.

What Inference Means in AI Systems

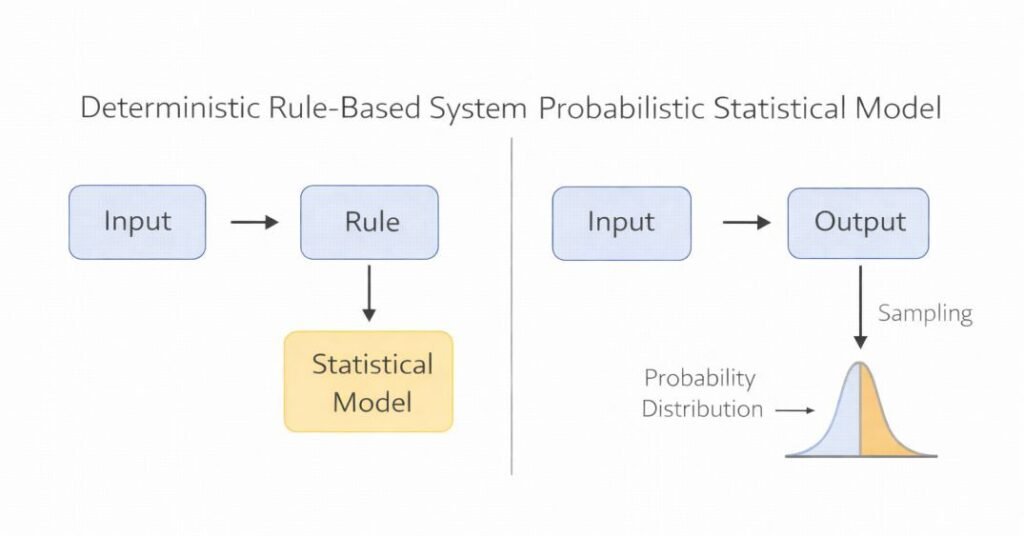

In deterministic software systems, outputs are produced through explicit rule execution. The same input consistently results in the same predefined outcome.

In AI tools, inference operates differently. Instead of executing fixed rule trees, the model evaluates input patterns against statistical relationships encoded during training. Outputs are derived from computed scores or probability distributions rather than from explicitly authored rules.

Inference may involve:

- Pattern recognition

- Probability estimation

- Classification scoring

- Ranking operations

- Sequence generation

- Numerical prediction

The output represents a likelihood-weighted approximation conditioned on training data distributions and model architecture.

This distinction reflects broader differences between statistical computation and rule-based execution models. In statistical systems, probabilistic evaluation replaces predefined decision trees as the primary mechanism of output generation.

Step-by-Step Conceptual Flow of Inference

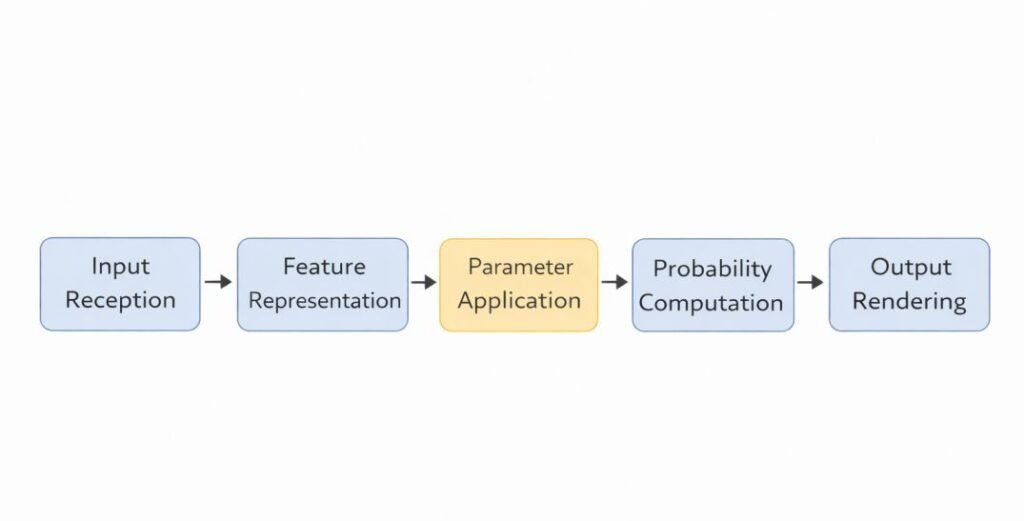

Although implementation details vary across systems, inference in AI tools commonly follows a structured computational sequence:

1. Input Reception and Formatting

New input data enters the system through defined interfaces.

The data layer ensures formatting consistency, normalization, and structural validation.

This stage does not modify model parameters. It prepares input for computational evaluation.

2. Feature Representation

Input data is transformed into internal numerical representations compatible with the trained model architecture.

For example:

- Text may be tokenized and mapped to vector representations.

- Images may be converted into pixel matrices or embeddings.

- Structured data may be transformed into standardized feature arrays.

This transformation allows the model to process inputs within its encoded representational space.
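As a rough sketch of this stage, the following example maps text to integer token ids and rescales structured features. The vocabulary, the feature statistics, and the whitespace tokenizer are all hypothetical simplifications; real systems typically use subword tokenizers and learned embeddings.

```python
# Hypothetical vocabulary and feature statistics, assumed fixed at training time.
VOCAB = {"<unk>": 0, "the": 1, "model": 2, "runs": 3}
FEATURE_MEAN = (10.0, 200.0)
FEATURE_STD = (2.0, 50.0)


def tokenize(text):
    """Map whitespace-separated words to integer ids; unknown words map to <unk>."""
    return [VOCAB.get(word, VOCAB["<unk>"]) for word in text.lower().split()]


def standardize(row):
    """Rescale each raw feature using the stored mean and standard deviation."""
    return [(v - m) / s for v, m, s in zip(row, FEATURE_MEAN, FEATURE_STD)]


token_ids = tokenize("The model runs quickly")   # "quickly" is not in the vocabulary
features = standardize((12.0, 150.0))
print(token_ids, features)
```

Both functions read stored statistics but never modify them, which is consistent with the preparation stages described above.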

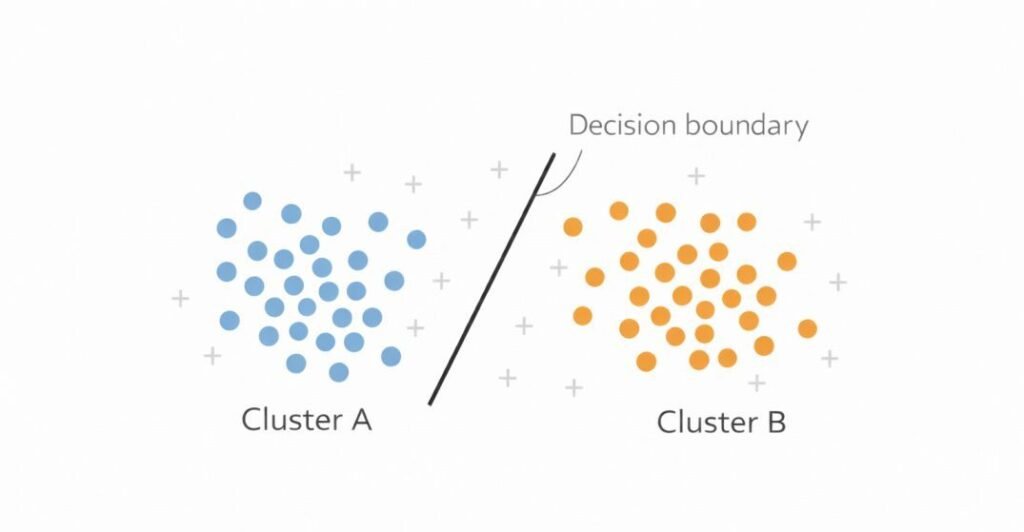

Latent Representational Space

During inference, inputs are often transformed into latent representations.

A latent space is a structured numerical environment in which:

- Similar inputs occupy nearby positions.

- Statistical relationships are encoded geometrically.

- Output boundaries are defined by parameterized decision surfaces.

Inference occurs within this space.

For example:

- A text input becomes a vector in high-dimensional space.

- A classification model determines which region of that space the vector occupies.

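- Output probabilities are a function of the vector's position relative to learned decision boundaries; points farther from a boundary typically receive more extreme probabilities.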

This geometric interpretation clarifies that inference operates through numerical transformation rather than symbolic reasoning. The term “space” refers to a mathematical vector space rather than a physical or symbolic environment.
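The geometric reading can be sketched for the simplest case, a linear boundary in a two-dimensional space. The boundary coefficients `W` and `B` are hypothetical, and the logistic link is one common choice rather than the only one.

```python
import math

# Hypothetical learned decision boundary in a 2-D latent space: w . z + b = 0.
W = (3.0, 4.0)
B = -5.0


def score(z):
    """Raw score of point z; zero exactly on the decision boundary."""
    return sum(wi * zi for wi, zi in zip(W, z)) + B


def signed_distance(z):
    """Signed Euclidean distance from z to the boundary (positive on one side)."""
    return score(z) / math.hypot(*W)


def probability(z):
    """Logistic probability of the positive class, increasing with the score."""
    return 1.0 / (1.0 + math.exp(-score(z)))


for point in [(1.0, 0.5), (3.0, 4.0), (0.0, 0.0)]:
    print(point, signed_distance(point), probability(point))
```

A point on the boundary receives a probability of 0.5, and the probability moves toward 0 or 1 as the point moves away from the boundary on either side.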

3. Parameter Application

The trained model applies learned weights and structural relationships to the input representation.

At this stage:

- Encoded statistical patterns are activated.

- Internal layers compute weighted transformations.

- Representational states propagate forward through the architecture.

Parameter values remain unchanged during inference; the model applies stored weights without adjustment.
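A forward pass through a very small network illustrates this stage. The weights below are hypothetical and the two-layer shape is an assumption made for brevity; tuples are used so that the stored values cannot be modified in place.

```python
# Hypothetical stored weights for a tiny network: 2 inputs, 2 hidden units, 1 output.
W1 = ((1.0, -1.0), (0.5, 0.5))   # one row per hidden unit
B1 = (0.0, -1.0)
W2 = ((2.0, -3.0),)              # one row per output unit
B2 = (0.5,)


def dense(x, weights, biases):
    """Weighted transformation: one dot product plus bias per output unit."""
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, biases)]


def relu(values):
    """Elementwise nonlinearity applied between layers."""
    return [max(0.0, v) for v in values]


def forward(x):
    """Propagate the input forward through both layers; no weight is updated."""
    hidden = relu(dense(x, W1, B1))
    return dense(hidden, W2, B2)


output = forward((3.0, 1.0))
print(output)
```

For the input `(3.0, 1.0)` the hidden activations are `[2.0, 1.0]` and the final output is `[1.5]`.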

4. Probabilistic Evaluation

The model computes probability distributions or score values associated with potential outputs.

Depending on system type, inference may generate:

- Classification probabilities

- Confidence scores

- Ranked outputs

- Predicted values

- Generated text sequences

These outputs are conditioned on model calibration, training scope, and input alignment.
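One common way to turn raw scores into a probability distribution is the softmax function, sketched below. The example scores are hypothetical, and not every model type uses softmax.

```python
import math


def softmax(scores):
    """Convert raw scores into probabilities; subtracting the max avoids overflow."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


logits = [2.0, 1.0, 0.0]          # hypothetical raw scores for three candidates
probs = softmax(logits)

# Ranked output: candidate indices ordered from most to least probable.
ranking = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
print(probs, ranking)
```

The resulting probabilities sum to one, and the same list supports classification probabilities, confidence scores, and ranked outputs.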

5. Output Rendering

The output layer converts internal computational states into externally interpretable forms.

This may include:

- Labels

- Text responses

- Numeric predictions

- Structured data objects

- Confidence metrics

The output layer does not generate knowledge independently. It translates statistically derived internal states into communicable formats.
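The translation step can be sketched as a function that turns a probability list into a labeled, serializable object. The label set, the field names, and the rounding are illustrative assumptions.

```python
import json

LABELS = ("cat", "dog", "bird")   # hypothetical label set


def render(probs, labels=LABELS):
    """Translate internal probabilities into a structured, readable result."""
    best = max(range(len(probs)), key=lambda i: probs[i])
    return {"label": labels[best], "confidence": round(probs[best], 3)}


result = render([0.7, 0.2, 0.1])
payload = json.dumps(result)      # structured data object for an external interface
print(payload)
```

The function adds no information beyond what the probabilities already contain; it only selects and formats.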

Why Inference Is Probabilistic

Inference in AI tools is probabilistic because models encode statistical relationships rather than explicit rule sets.

Deterministic software systems follow the structure:

Input → Rule → Output

AI inference follows a structure closer to:

Input → Statistical Model → Probability Distribution → Output

The output reflects:

- Learned correlations within training data

- Representational capacity of the model

- Calibration and architecture constraints

- Input distribution alignment

This probabilistic foundation explains why:

- Similar inputs may produce slightly different outputs

- Outputs may include uncertainty or variability

- Confidence measures accompany predictions

Variations in Inference Across Model Architectures

Inference behavior differs depending on the underlying model class.

1. Deterministic Statistical Models

In models such as linear regression or logistic regression:

- Inference computes a weighted sum of input features; logistic regression additionally passes that sum through a sigmoid function.

- The output is a numerical prediction or probability.

- Computation is mathematically transparent and directly traceable.
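The traceability of these models can be shown by listing each feature's contribution to the final score. The coefficient values and feature names below are hypothetical.

```python
import math

COEFFICIENTS = {"x1": 2.0, "x2": -1.0}   # hypothetical fitted coefficients
INTERCEPT = 0.5


def contributions(features):
    """Per-feature contribution to the linear score (coefficient * value)."""
    return {name: COEFFICIENTS[name] * value for name, value in features.items()}


def linear_score(features):
    """Linear-regression style prediction: intercept plus summed contributions."""
    return INTERCEPT + sum(contributions(features).values())


def predict_probability(features):
    """Logistic-regression style prediction: sigmoid of the linear score."""
    return 1.0 / (1.0 + math.exp(-linear_score(features)))


example = {"x1": 1.0, "x2": 2.5}
print(contributions(example), linear_score(example), predict_probability(example))
```

Because every term is visible, the score can be audited by hand: here the contributions are 2.0 and -2.5, which together with the intercept give a score of 0.0 and a probability of 0.5.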

2. Neural Network Models

In neural architectures:

- Input representations propagate through multiple nonlinear layers.

- Each layer transforms intermediate representations.

- Final outputs emerge through accumulated transformations.

Because of layered nonlinearity, internal activations are distributed rather than rule-based.

3. Generative Models

In generative systems:

- Inference may involve sequential token prediction.

- Each output element conditions the next.

- The system samples from probability distributions over possible outputs.

Here, inference is iterative rather than single-step.
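The iterative structure can be sketched with a toy next-token table. The table is a hypothetical stand-in for a trained model, and conditioning only on the previous token is a deliberate simplification.

```python
import random

# Hypothetical next-token distributions, standing in for a trained model's output.
NEXT_TOKEN = {
    "<s>": {"the": 1.0},
    "the": {"model": 0.6, "input": 0.4},
    "model": {"runs": 0.7, "</s>": 0.3},
    "input": {"runs": 0.5, "</s>": 0.5},
    "runs": {"</s>": 1.0},
}


def generate(rng, max_steps=10):
    """Sample one token at a time; each sampled token conditions the next step."""
    tokens = ["<s>"]
    for _ in range(max_steps):
        dist = NEXT_TOKEN[tokens[-1]]
        choice = rng.choices(list(dist), weights=list(dist.values()))[0]
        if choice == "</s>":          # end-of-sequence marker stops generation
            break
        tokens.append(choice)
    return tokens[1:]                 # drop the start marker


sequence = generate(random.Random(0))
print(sequence)
```

Passing a seeded `random.Random` makes a run repeatable, while different seeds may yield different sequences from the same fixed table.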

These variations illustrate that inference is a general computational category, not a single mechanism. They also reflect broader distinctions between AI tools and underlying model types, as discussed in machine learning model distinctions.

Deterministic Computation vs Probabilistic Modeling

Although AI inference produces probabilistic outputs, the computation itself is deterministic.

Given:

- Identical input

- Identical parameters

- Identical runtime conditions

The internal computation produces the same probability distribution. The distribution itself is stable under identical computational conditions.

Variability arises not from randomness in the model's forward computation, but from:

- Sampling strategies

- Floating-point precision differences across hardware or execution order

- Stochastic generation techniques (in generative models)

Thus, probabilistic modeling does not imply arbitrary computation. It reflects distributional evaluation within deterministic mathematical operations.

This clarification prevents conceptual confusion between randomness and statistical inference.
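The separation between a stable distribution and a variable selection step can be sketched as follows. The scores are hypothetical, and the softmax-with-temperature form is one common convention rather than a universal one.

```python
import math
import random


def distribution(logits, temperature=1.0):
    """Deterministic step: the same scores always yield the same distribution."""
    scaled = [value / temperature for value in logits]
    peak = max(scaled)
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample(probs, seed, k=5):
    """Stochastic step: draws depend on the random seed, not on the model."""
    return random.Random(seed).choices(range(len(probs)), weights=probs, k=k)


logits = [1.5, 1.0, 0.2]                      # hypothetical raw scores
first = distribution(logits)
second = distribution(logits)                 # identical input, identical result
greedy = max(range(len(first)), key=lambda i: first[i])   # deterministic selection

print(first == second, greedy, sample(first, seed=1), sample(first, seed=2))
```

Greedy selection always returns the same index, whereas sampled draws can differ between seeds even though the underlying distribution is unchanged.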

Inference and Computational Infrastructure

Inference does not occur in abstraction. It operates within computational infrastructure that defines operational limits.

Infrastructure constraints may influence:

- Latency

- Throughput capacity

- Memory allocation

- Hardware acceleration usage

- Parallel processing capability

These constraints shape deployment feasibility and real-time performance boundaries.

Infrastructure does not alter model knowledge but may affect how quickly or efficiently inference computations are executed.
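Latency and throughput can be observed with a simple timing loop. The `model` function below is a hypothetical stand-in for a forward pass, and the measured numbers depend entirely on the machine running the sketch.

```python
import time


def model(x):
    """Stand-in for a forward pass; a real model would be far more expensive."""
    return sum(v * v for v in x)


def measure(batch, repeats=100):
    """Time repeated calls and report average latency and overall throughput."""
    start = time.perf_counter()
    for _ in range(repeats):
        for x in batch:
            model(x)
    elapsed = time.perf_counter() - start
    calls = repeats * len(batch)
    return {"latency_s": elapsed / calls, "throughput_per_s": calls / elapsed}


batch = [tuple(float(i) for i in range(1000))] * 4   # hypothetical inputs
stats = measure(batch)
print(stats)
```

Running the same measurement on different hardware changes the timings but not the value returned by `model`, which matches the point that infrastructure affects speed rather than model knowledge.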

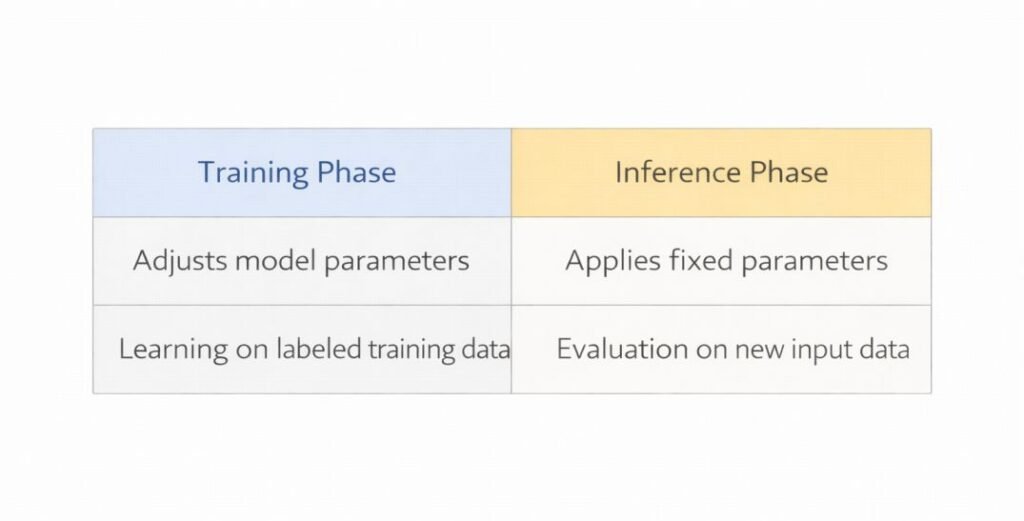

Inference vs Training: Conceptual Separation

It is important to distinguish inference from model training.

| Training Phase | Inference Phase |
|---|---|
| Model parameters are adjusted | Model parameters remain fixed |
| Data distributions shape weight updates | Stored weights evaluate new input |
| Learning occurs | Application of learned representation occurs |
| Often computationally intensive | Typically optimized for operational efficiency |
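The contrast can be made concrete with a one-parameter-pair model. The squared-error loss, the learning rate, and the starting values are assumptions chosen to keep the arithmetic easy to follow.

```python
def predict(w, b, x):
    """Inference: apply the stored parameters; nothing is updated."""
    return w * x + b


def train_step(w, b, x, target, lr=0.1):
    """Training: one gradient step on 0.5 * (prediction - target) ** 2."""
    error = predict(w, b, x) - target
    return w - lr * error * x, b - lr * error


w, b = 0.0, 0.0
before = predict(w, b, 2.0)                   # inference leaves w and b untouched
w_new, b_new = train_step(w, b, x=2.0, target=4.0)
after = predict(w_new, b_new, 2.0)
print((w, b), before, (w_new, b_new), after)
```

Only `train_step` returns new parameter values; `predict` can be called any number of times without changing `w` or `b`.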

Inference and Output Reliability

Output reliability reflects distributional likelihood rather than binary rule conformity.

In deterministic software:

- Outputs either conform to specification or they do not.

In AI systems:

- Outputs reflect likelihood-weighted approximations.

- Accuracy depends on data alignment and representational capacity.

- Performance may vary when input distributions differ from training distributions.

Because inference relies on statistical approximation, output stability depends on model calibration, representational scope, and input alignment. Variability reflects the probabilistic structure of the model rather than procedural inconsistency.

Statistical Approximation and Error Characteristics

Inference outputs represent approximations rather than certainties.

Error may arise from:

- Incomplete training distributions

- Representational compression

- Overfitting to prior data

- Underfitting of complex patterns

Uncertainty can be expressed through:

- Probability scores

- Confidence intervals

- Entropy measures

These measures quantify distributional likelihood, not factual correctness.

Inference therefore reflects bounded approximation conditioned on prior data exposure.
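Entropy is one of the measures listed above, and it can be computed directly from an output distribution. The two example distributions are hypothetical.

```python
import math


def entropy(probs):
    """Shannon entropy in bits; higher values mean a more spread-out distribution."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


confident = [0.97, 0.02, 0.01]     # mass concentrated on one outcome
uncertain = [1 / 3, 1 / 3, 1 / 3]  # mass spread evenly

print(entropy(confident), entropy(uncertain))
```

A low entropy value indicates that the model concentrates probability on one outcome. It does not indicate that the outcome is factually correct.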

Distribution Shifts and Inference Behavior

Inference performance depends on alignment between training data distributions and real-world inputs.

When input distributions shift:

- Model calibration may degrade

- Confidence scores may not reflect true reliability

- Prediction patterns may change

Changes in input distribution may alter how stored parameters are activated during computation, affecting output probability patterns.

Inference is therefore not an isolated computational event.

It is embedded within layered architectural dependencies.
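The effect of a shifted input can be sketched with a one-feature logistic model. The training statistics, the model parameters, and the z-score check are all hypothetical; the check is a simple heuristic, not a complete shift detector.

```python
import math

TRAIN_MEAN, TRAIN_STD = 50.0, 10.0   # hypothetical training-set statistics
W, B = 0.2, -10.0                    # hypothetical logistic-model parameters


def confidence(x):
    """Probability assigned to the predicted class for input x."""
    p = 1.0 / (1.0 + math.exp(-(W * x + B)))
    return max(p, 1.0 - p)


def shift_score(x):
    """Distance of x from the training mean, in training standard deviations."""
    return abs(x - TRAIN_MEAN) / TRAIN_STD


in_range, far_out = 55.0, 500.0
for x in (in_range, far_out):
    print(x, round(confidence(x), 4), shift_score(x))
```

The input far outside the training range receives a higher confidence score than the in-range input, which illustrates why confidence alone may not reflect reliability under distribution shift.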

Inference and Interpretability

Inference processes in complex neural architectures may not always be fully traceable at the level of individual internal activations.

This limited traceability does not mean the mechanism is unstructured. Rather, it reflects:

- High-dimensional parameter spaces

- Distributed representations

- Nonlinear transformations

Interpretability techniques aim to approximate or analyze inference pathways, but the underlying mechanism remains statistical rather than rule-executable.

This distinction influences how inference pathways are analyzed within technical evaluation frameworks.

Structural Boundaries of Inference

Inference in AI tools operates within defined structural limits:

- Bounded training scope

- Finite representational capacity

- Sensitivity to distribution changes

- Dependency on computational resources

Outputs do not constitute autonomous reasoning.

They represent probabilistic transformations of input data within engineered architectural constraints.

Recognizing these boundaries supports clearer interpretation of AI-generated results.

Scope and Boundary Constraints

Inference cannot:

- Access information beyond what is encoded in its parameters or supplied in the input

- Revise model architecture

- Modify parameter weights

- Acquire new conceptual categories

It operates strictly within:

- Encoded statistical relationships

- Representational limits

- Computational constraints

Outputs remain transformations of input data under fixed parameterization.

Inference does not introduce new knowledge structures during execution.

Conclusion

Inference in AI tools refers to the operational process by which trained statistical models evaluate new inputs and generate outputs through probabilistic computation.

Within layered AI architecture, inference functions as the transformation stage that connects stored model parameters to externally visible results. Unlike deterministic rule-based software, AI inference applies encoded statistical relationships rather than predefined logic chains.

Outputs produced during inference are conditioned by:

- Training data distributions

- Model architecture

- Calibration parameters

- Computational infrastructure

Understanding inference clarifies how trained statistical models transform new inputs into probabilistically structured outputs within bounded computational systems.

Inference is not a learning event, nor an autonomous reasoning process.

It is a structured probabilistic computation occurring within bounded technical conditions.
