What Are AI Tools?

AI tools are software applications that use trained machine learning models to transform human input into structured outputs, such as text, images, or data analysis. Unlike traditional software that follows fixed rules, AI tools function as probabilistic engines, predicting the most relevant responses based on patterns found in their training data.

Many people use AI tools like ChatGPT but don’t get useful results.

A common reason is simple:

They don’t understand how AI tools actually work.

So, what are AI tools in simple terms?

AI tools are software systems that:

- Take input

- Process it

- Generate useful output.

Quick Answer (For Beginners)

AI tools are software that take your input, process it using trained models, and generate outputs based on patterns.

Core Insight (Read This First)

AI tools don’t generate truth—they generate structured predictions based on patterns.

This means:

- Better constraints → better structure

- But not necessarily better facts

Key idea:

AI works best as a structure engine, not a truth engine.

For example:

If you write “Explain AI,” you get a generic answer.

But if you write “Explain AI tools with real examples for beginners,” the result becomes much clearer.

In this guide, you’ll learn:

- What AI tools are

- How they work

- How to use them effectively

This explanation is based on real testing of AI tools, not just theory.

30-Second Summary

AI tools turn input into output using trained models. Better instructions usually create better results. Use AI for drafting and structuring, not blind factual trust.

What Are AI Tools? (Practical Definition)

AI tools are systems that:

- Take structured input

- Apply learned patterns

- Generate structured output

But in real use:

Output quality often depends more on your constraints than on the tool itself.

To master these systems, it is essential to understand the [AI Tools vs AI Models: Key Differences] that drive their performance.

AI Tools vs Traditional Software: The Rule-Based Gap

To truly understand AI tools, we must distinguish them from the software we’ve used for decades.

| Feature | Traditional Software (e.g., Excel, Photoshop) | AI Tools (e.g., ChatGPT, Midjourney) |

|---|---|---|

| Logic | Rule-Based: Follows strict “If-This-Then-That” code. | Probabilistic: Predicts the next best token or pixel based on patterns. |

| Input | Commands (Clicks, specific formulas). | Natural Language (Intent, context, tone). |

| Output | Deterministic (Same input always = exact same output). | Generative (Same input can result in varied, creative outputs). |

Key Takeaway: Traditional software executes instructions; AI tools interpret intent. This is why AI requires “Constraint Design” rather than just “Command Entry.”
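The contrast in the table can be sketched in a few lines of Python. This is a toy illustration, not how any real AI tool is implemented: `rule_based_reply` and `probabilistic_reply` are hypothetical names, and the candidate replies and weights are invented for the example.

```python
import random

def rule_based_reply(command: str) -> str:
    """Traditional software: a fixed rule maps each input to one output."""
    rules = {"SUM(2,2)": "4", "UPPER(hi)": "HI"}
    return rules.get(command, "ERROR: unknown command")

def probabilistic_reply(prompt: str, rng: random.Random) -> str:
    """Toy stand-in for an AI tool: samples one of several plausible replies.

    The candidates and weights are made up; the prompt is ignored on purpose
    to keep the sketch small.
    """
    candidates = ["A short definition.", "A list of examples.", "A long essay."]
    weights = [0.5, 0.3, 0.2]
    return rng.choices(candidates, weights=weights, k=1)[0]

# Same input, same output, every time.
assert rule_based_reply("SUM(2,2)") == rule_based_reply("SUM(2,2)")

# Same input, sampled many times, yields more than one distinct output.
rng = random.Random(0)
replies = {probabilistic_reply("Explain AI", rng) for _ in range(200)}
print(len(replies))
```

The rule-based function can only ever return what its table says, while the sampled one returns different replies for the same prompt. That variability is what constraints are meant to narrow.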

Constraint Design Framework (How to Control Output)

To get better results, use three types of constraints:

1. Positive Constraints (What to include)

- Example: “Include 3 tips”

2. Negative Constraints (What to avoid)

- Example: “Do not use generic phrases like ‘vibrant’ or ‘bustling’”

3. Structural Constraints (How to format)

- Example: “Write in 100 words, end with a CTA”

Simple Rule:

Output Quality ≈ Constraint Clarity × Constraint Completeness
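One way to make the three constraint types a habit is to assemble prompts from named parts. The sketch below is a minimal illustration under stated assumptions: `build_prompt` and its parameter names are hypothetical, and the labels ("Include", "Do not use", "Format") are just one possible wording.

```python
def build_prompt(task, include=(), avoid=(), structure=()):
    """Assemble a prompt from a task plus three kinds of constraints."""
    lines = [task.strip()]
    if include:    # positive constraints: what to include
        lines.append("Include: " + "; ".join(include))
    if avoid:      # negative constraints: what to avoid
        lines.append("Do not use: " + "; ".join(avoid))
    if structure:  # structural constraints: how to format
        lines.append("Format: " + "; ".join(structure))
    return "\n".join(lines)

prompt = build_prompt(
    "Write an intro about travel.",
    include=["3 tips"],
    avoid=["vibrant", "bustling"],
    structure=["100 words", "end with a CTA"],
)
print(prompt)
```

Keeping the three lists separate makes it easy to see at a glance which type of constraint is still missing before you send the prompt.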

The “Negative Constraint” Advantage

Most guides tell you what to add to a prompt. But after testing 50+ real-world articles, I found that Negative Constraints (telling the AI what not to do) often change the output more than adding further instructions.

- Standard Logic: “Write an intro about travel.” (Result: Predictable and boring).

- Negative Constraint Logic: “Write an intro about travel, but do not use the words ‘hidden gem,’ ‘bustling,’ or ‘vibrant.’”

Why this works: It pushes the AI model away from its most common “trained patterns” and toward less predictable word choices, which tends to make your content feel more human and less like a bot.
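Models do not always obey a negative constraint, so it can help to check the draft afterwards. The following is a small sketch, assuming a hypothetical helper named `find_banned_phrases`; the sample draft is invented for the example.

```python
import re

def find_banned_phrases(text, banned):
    """Return the banned phrases that appear in text (case-insensitive, whole words)."""
    found = []
    for phrase in banned:
        pattern = r"\b" + re.escape(phrase) + r"\b"
        if re.search(pattern, text, flags=re.IGNORECASE):
            found.append(phrase)
    return found

draft = "Discover a hidden gem in the heart of a bustling old town."
banned = ["hidden gem", "bustling", "vibrant"]
print(find_banned_phrases(draft, banned))  # ['hidden gem', 'bustling']
```

If the list comes back non-empty, repeat the negative constraint in a follow-up prompt or edit the phrases out by hand.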

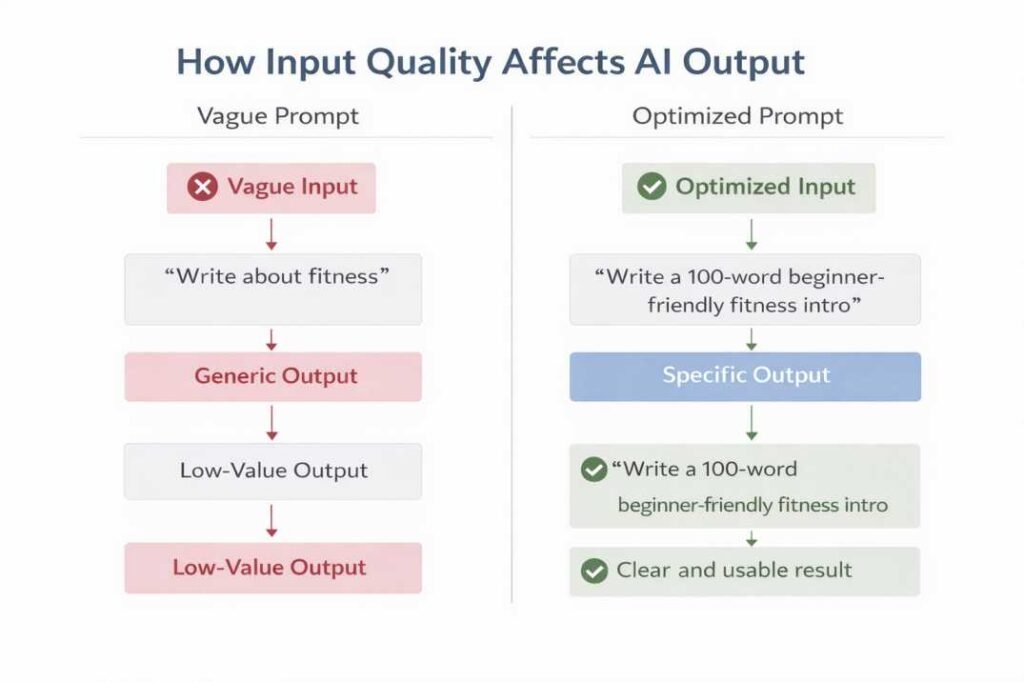

Real Test Example (From Actual Use)

I tested three versions of the same request in ChatGPT:

- “Write about fitness”

→ Output: Very general and vague

- “Write a beginner-friendly fitness introduction”

→ Output: More structured but still broad

- “Write a 100-word engaging introduction for a beginner fitness blog”

→ Output: Clear, specific, and usable

In these tests, I compared outputs across multiple phrasing variations, measuring clarity, editing time, and usefulness for beginner readers.

Simple Explanation (For Beginners)

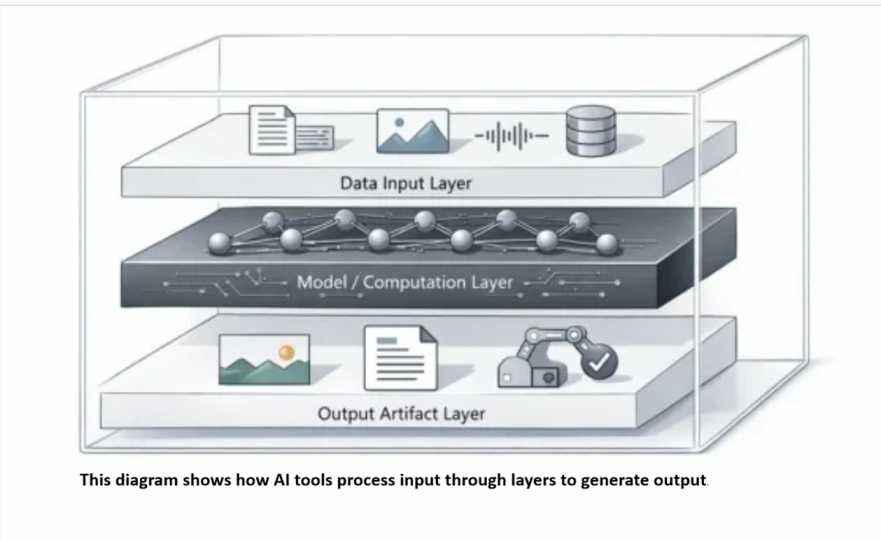

At a basic level, every AI tool works like a system with three steps:

Input → Processing → Output

Curious about what happens behind the curtain? See a detailed breakdown of [What Happens Inside an AI Tool After You Click ‘Generate’].

But here’s what most guides don’t explain:

Better results come from how you work with the tool, not just from the tool itself:

- Clear instructions

- Careful review

- Step-by-step improvement

What Most Guides Miss (Critical Insight)

Most guides say: “Write better prompts.”

But in testing, the real difference is not just clarity — it’s constraint design.

Example:

Weak input:

“Write about fitness”

Better input:

“Write a 100-word beginner fitness intro”

High-quality input:

“Write a 100-word beginner fitness intro using a motivating tone, include one relatable problem, and end with a call-to-action”

This shows:

Constraints control output quality.

If you are just starting out, follow our [How to Use ChatGPT for Beginners: Step-by-Step Guide] to build a strong foundation.

Common Failure (What Goes Wrong)

When no constraints are provided:

- The model defaults to common patterns

- Output becomes predictable

- Content feels repetitive

Why this happens:

AI selects the most statistically likely response—not the most useful one.

What I Noticed After Testing 50+ Prompts

Across multiple tests, I found:

- Adding word limits improves clarity significantly

- Adding audience context reduces generic output

- Without constraints, outputs repeat patterns

Test Summary (From 50+ Prompts)

| Input Type | Output Quality |

|---|---|

| Vague Input | Low (generic) |

| Basic Structured | Medium (some clarity) |

| Constraint-Based | High (clear, usable) |

Unexpected finding:

Even small wording changes can completely change output structure.

Simple Decision Rules (Use Before Prompting)

- If output is too generic → add constraints

- If output feels repetitive → add negative constraints

- If output is unclear → define structure (length, format)

- If output includes facts → verify externally

These rules are part of Constraint Design—how you control AI output.
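The four rules above amount to a lookup from symptom to fix. Here is a minimal sketch; the symptom keys (`generic`, `repetitive`, `unclear`, `contains_facts`) and the function name `suggest_fixes` are hypothetical labels chosen for this example.

```python
# Symptom -> fix, mirroring the four decision rules.
FIXES = {
    "generic": "Add constraints.",
    "repetitive": "Add negative constraints.",
    "unclear": "Define structure (length, format).",
    "contains_facts": "Verify externally.",
}

def suggest_fixes(symptoms):
    """Return the matching fix for each recognized symptom, in order."""
    return [FIXES[s] for s in symptoms if s in FIXES]

print(suggest_fixes(["generic", "contains_facts"]))
# ['Add constraints.', 'Verify externally.']
```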

A Personal “Failure Case” (What I Learned the Hard Way)

In my early tests, I asked an AI tool to “Summarize the 2025 Google AdSense Policy updates.” It gave me a perfect, bulleted list.

The Problem: Two of the three “updates” were completely made up (hallucinations).

The Lesson: AI logic follows patterns, not facts. I now use a “Verification-First” workflow where I never use AI for factual research, only for structuring ideas I already have. This insight is what led me to develop the Constraint Design method explained in this guide.

Logical errors are common in AI; learn [Why AI Gives Wrong Answers] and how to use prompt structure to prevent them.

Additional Real Example (Different Use Case)

Let’s take another example from blog writing:

Input:

“Write a blog post about productivity”

Output:

Very general and unfocused

Improved Input:

“Write a 150-word practical blog post for beginners on improving daily productivity with 3 actionable tips”

Output:

More structured, specific, and usable

Advanced Insight

Most beginners treat AI tools like search engines.

They expect perfect answers from a single input.

But AI tools work as iterative systems — not one-step solutions.

What is AI Drift?

AI Drift = the difference between what you intended and what the AI generated

When it happens:

- No clear constraints

- Multi-step tasks without structure

Fix:

Use iterative prompting (one section at a time)

Search Engine vs AI Tool (Critical Difference)

| Feature | Search Engine (Google) | AI Tool (ChatGPT) |

|---|---|---|

| Goal | Find existing information | Generate new output |

| Input Style | Keywords (“best shoes”) | Instructions (“Compare 3 shoes”) |

| Output Type | Links to sources | Generated content |

| Accuracy | Depends on the source (verifiable) | Variable (prediction-based) |

Decision Rule: Use a Search Engine when you need a single source of truth (e.g., “Who won the 1998 World Cup?”). Use an AI Tool when you need to synthesize multiple ideas (e.g., “Plan a 3-day itinerary for a football fan in France”).

What this means:

Search engines retrieve information.

AI tools generate responses based on patterns.

This is why AI outputs must always be reviewed—especially when they include facts.

In practice:

The first response is often a starting point, not a finished result.

Experienced users improve results by refining inputs step-by-step.

TL;DR (Do This)

- Define your goal clearly

- Apply Constraint Design (what + what not + structure)

- Review the output

- Refine in 2–3 iterations

Better inputs → more useful outputs

System Logic — How AI Tools Actually Work

To use AI tools effectively, focus on how your instructions shape results.

The system does not “think”—it filters your intent through constraints and generates the most probable structured response.

Even though different tools use different technologies, most of them follow a similar internal structure.

How System Logic Affects Real Results

When you hit “Enter,” the model does not reason about your request the way a person would. A useful mental model (a simplification, not a literal description of the model’s internals) is a three-stage path shaped by your constraints:

- Intent Detection: The model identifies your primary goal (e.g., “Explanation”).

- Constraint Filtering: It applies your limits (e.g., “100 words,” “No jargon”).

- Pattern Assembly: It builds the response word-by-word, with each word conditioned on your prompt and its constraints.

A common pattern: if you provide no constraints, the system defaults to the most common and generic response path. This is why vague prompts tend to produce average content.

How to Apply This in Real Use

When using any AI tool, think in this flow:

Step 1: Improve your input clarity

Step 2: Let the system process

Step 3: Review and refine the output

Pro-Tip from my Workflow

When I need high-quality content, I use “Iterative Layering.” I never ask for a full article at once.

- First, I prompt for the Outline.

- I manually edit that outline.

- Then, I prompt for one section at a time using the edited outline as the new input.

This reduces “AI Drift” and keeps the output more closely aligned with my original goal.
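The layering steps above can be sketched as a small function. This is an assumption-laden illustration, not a specific tool’s API: `generate` stands for whatever call sends a prompt to your AI tool, `edit_outline` stands for your manual edit, and `fake_generate` and `drop_filler` are stand-ins so the sketch runs without any AI service.

```python
def layered_draft(topic, generate, edit_outline):
    """Outline first, human edit second, then one prompt per section."""
    outline = generate(f"Write a section outline for an article about {topic}.")
    headings = edit_outline([h.strip() for h in outline.splitlines() if h.strip()])
    sections = []
    for heading in headings:
        # Each section prompt carries the edited outline as context.
        prompt = (
            f"Article topic: {topic}\n"
            f"Full outline: {', '.join(headings)}\n"
            f"Write only the section titled '{heading}'."
        )
        sections.append(generate(prompt))
    return headings, sections

def fake_generate(prompt):
    """Stand-in for an AI call; returns canned text."""
    if prompt.startswith("Write a section outline"):
        return "Intro\nBenefits\nFiller\nConclusion"
    return "[draft for: " + prompt.splitlines()[-1] + "]"

def drop_filler(headings):
    """Stand-in for the manual edit: remove a heading you do not want."""
    return [h for h in headings if h != "Filler"]

headings, sections = layered_draft("productivity", fake_generate, drop_filler)
print(headings)       # ['Intro', 'Benefits', 'Conclusion']
print(len(sections))  # 3
```

Because every section prompt repeats the edited outline, each request stays anchored to the structure you approved rather than to whatever the previous output happened to say.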

By treating AI as a component of a larger system, you can build a highly efficient [AI Workflow: Simple Explanation + Real Example].

Simple Workflow for Better Results (Beginner-Friendly)

To get consistently useful results from AI tools, follow this process:

Step 1: Define your goal clearly

→ What exactly do you want?

Step 2: Write a specific input

→ Add context, length, audience, or format

Step 3: Review the output

→ Check if it matches your goal

Step 4: Refine the input

→ Adjust wording, add constraints

Step 5: Repeat if needed

→ 2–3 iterations usually improve quality significantly
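Steps 2 to 5 form a loop with a stopping condition. The sketch below shows that loop under stated assumptions: `refine`, `meets_goal`, and `add_constraint` are hypothetical names, and `fake_generate` is a stand-in that returns filler text instead of calling a real AI tool.

```python
def refine(prompt, generate, meets_goal, add_constraint, max_rounds=3):
    """Generate, review, and tighten the prompt for up to max_rounds rounds."""
    output = generate(prompt)
    rounds = 1
    while not meets_goal(output) and rounds < max_rounds:
        prompt = add_constraint(prompt, output)  # Step 4: refine the input
        output = generate(prompt)                # Step 5: repeat
        rounds += 1
    return prompt, output, rounds

def fake_generate(prompt):
    """Stand-in for an AI call: long output unless a length limit is present."""
    n = 60 if "under 100 words" in prompt else 250
    return " ".join(["word"] * n)

def short_enough(output):
    return len(output.split()) <= 100

def add_length_limit(prompt, output):
    return prompt + " Keep it under 100 words."

final_prompt, output, rounds = refine(
    "Write a fitness intro.", fake_generate, short_enough, add_length_limit
)
print(rounds)        # 2
print(final_prompt)  # Write a fitness intro. Keep it under 100 words.
```

The `max_rounds` cap reflects the 2–3 iteration guideline: if the output still misses the goal after that, the goal itself probably needs rewriting.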

Simple Decision Rule (Use Before Any Prompt)

Before using an AI tool, check:

- Is my goal specific?

- Did I define audience + format?

- Did I add at least one constraint?

If any answer is “No”, improve your input first.

This simple check often prevents weak or generic outputs.
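The checklist can also be written down as a quick self-test. In this sketch the `PromptPlan` fields and the `missing_items` function are hypothetical, and the "fewer than four words" test is only a crude, assumed proxy for whether a goal is specific.

```python
from dataclasses import dataclass, field

@dataclass
class PromptPlan:
    goal: str = ""
    audience: str = ""
    output_format: str = ""
    constraints: list = field(default_factory=list)

def missing_items(plan):
    """Return the checklist items the plan does not yet satisfy."""
    missing = []
    if len(plan.goal.split()) < 4:  # crude, assumed proxy for "specific"
        missing.append("specific goal")
    if not (plan.audience and plan.output_format):
        missing.append("audience + format")
    if not plan.constraints:
        missing.append("at least one constraint")
    return missing

vague = PromptPlan(goal="Explain AI")
ready = PromptPlan(
    goal="Explain AI tools with 3 examples",
    audience="beginners",
    output_format="100 words",
    constraints=["no jargon"],
)
print(missing_items(vague))  # ['specific goal', 'audience + format', 'at least one constraint']
print(missing_items(ready))  # []
```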

This same logic applies visually: your input influences how the model generates output.

Try This (1-Minute Practical Test)

- Write: “Explain AI”

- Then write: “Explain AI tools for beginners with 3 examples in 100 words”

Compare:

- Clarity

- Structure

- Usefulness

You will immediately see how constraints improve output.

1. Input (What You Provide)

Everything starts with your input.

This can be:

- A question

- A command

- A piece of text

- Or any instruction

For example:

- “Explain AI” → very broad

- “Explain AI tools in simple terms for beginners” → more specific

The Shift to Multimodality in 2026

As of 2026, the definition of “Input” has expanded beyond text. Most advanced AI tools are now Multimodal, meaning they can process and synthesize different types of data simultaneously.

- Visual Input: Uploading a screenshot of broken code to get a fix.

- Audio Input: Providing a voice memo to generate a structured meeting summary.

- Sensory Input: Real-time camera feeds used by AI tools in robotics or AR glasses to explain the world.

2. Model (How the System Understands Patterns)

After receiving your input, the system processes it using a trained model.

This model is built using large amounts of data and is designed to:

- Recognize patterns

- Predict relevant outputs

It does not “think”. It matches patterns based on training, and it can make mistakes.

3. Processing Logic (How the Output Is Generated)

Once the model interprets your input, the system generates a response step by step.

This includes:

- Breaking down your input

- Matching it with learned patterns

- Constructing a response

In most cases, this process is probabilistic.

This means the system selects what is statistically most likely given its training, which is not guaranteed to be correct.
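A toy example can make "probabilistic, step by step" concrete. The `NEXT_WORD` table below is entirely invented for illustration; a real model learns its probabilities from training data and works over a vocabulary of many thousands of tokens, so treat this as a sketch of the idea rather than of any actual system.

```python
import random

# Hypothetical next-word probabilities (made up for this example).
NEXT_WORD = {
    "<start>": {"AI": 1.0},
    "AI": {"tools": 0.7, "models": 0.3},
    "tools": {"predict": 0.6, "generate": 0.4},
    "models": {"predict": 1.0},
    "predict": {"patterns": 1.0},
    "generate": {"text": 1.0},
}

def generate(rng, max_words=5):
    """Build a sentence one word at a time by sampling from NEXT_WORD."""
    word, words = "<start>", []
    while word in NEXT_WORD and len(words) < max_words:
        options = NEXT_WORD[word]
        word = rng.choices(list(options), weights=list(options.values()), k=1)[0]
        words.append(word)
    return " ".join(words)

rng = random.Random(42)
samples = {generate(rng) for _ in range(100)}
print(sorted(samples))
```

Every sentence it produces is fluent according to the table, yet nothing in the procedure checks whether the sentence is true. That is the gap between likely and correct.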

4. Output (What You Receive)

Finally, the system delivers an output.

This can be:

- Text

- Suggestions

- Answers

- Or generated content

The output is shaped by:

- The system’s design limitations

- Your input

- The model’s training

Why AI Feels “Unpredictable”

Unlike a calculator where 2 + 2 always equals 4, AI tools are probabilistic.

If your input is vague:

→ The system has too many possible directions

→ Output becomes inconsistent

If your input is structured:

→ You reduce variability

→ Output becomes more reliable

In simple terms:

Clearer instructions usually reduce inconsistent output.

How AI Tools Are Defined (Simple View)

Simple Definition (Keep It Practical)

AI tools generate responses from learned patterns based on your input.

But what matters is not the definition —

it’s how you control the output.

Why This Matters

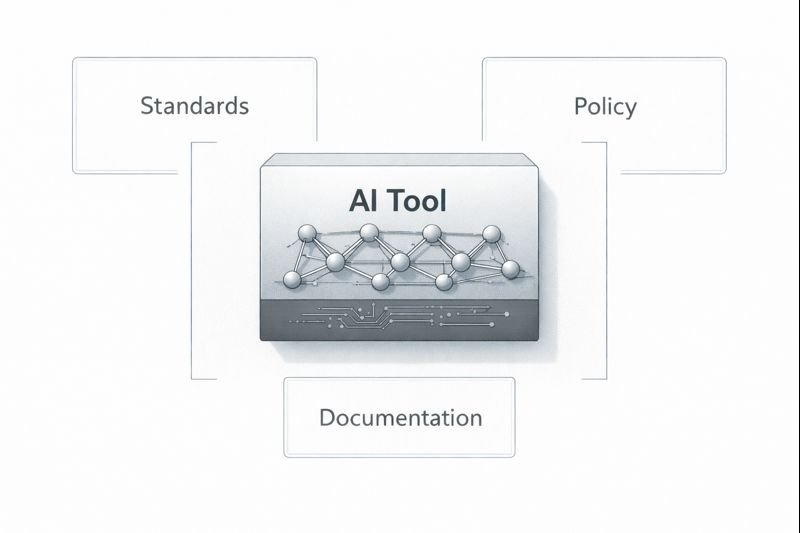

AI tools do not take responsibility — humans do.

This means:

- The tool generates output

- But the user decides how to use it

Always verify important outputs before using them.

These principles are based on real-world AI standards. You can explore them in the AI Resources and References page.

Transparency and Limitations

Standards bodies such as NIST and the OECD also emphasize that AI systems should be transparent.

This includes:

- Explaining how the system works

- Describing what data it uses

- Clearly stating its limitations

To understand this in more detail, see why AI tools generate incorrect information in real-world scenarios.

Human review remains essential, especially for sensitive topics.

These concepts are supported by frameworks like NIST and OECD, explained in the AI Resources and References page.

Simple Way to Understand This Section

Instead of thinking of AI tools as “intelligent systems,” think of them as:

- Tools built by humans

- Designed for specific purposes

- Limited by data and structure

How AI Tools Fit in Real Use (Simple View)

AI tools usually work best as one part of a larger workflow.

Typical process:

- Define a task

- Use AI to generate output

- Review and improve

- Apply the final result

For example (content writing):

- You decide to write a blog post

- You use an AI tool to generate a draft

- You edit and improve the content

- You publish the final version

This is why AI works best when combined with human judgment.

Common Mistakes Beginners Make When Using AI Tools

Even after understanding AI tools, many users still get poor results due to simple mistakes.

1. Giving Vague Instructions

Example:

“Explain AI”

Problem: Too broad → generic output

Fix: Add context

“Explain AI tools in simple terms with examples for beginners”

2. Expecting Perfect Answers

AI outputs are predictions — not guaranteed facts.

Problem: Blind trust

Fix: Always review before using

3. Not Refining the Output

Many users stop after the first result.

Problem: Missed improvement

Fix:

- Ask follow-up prompts

- Add constraints

- Improve step-by-step

4. Ignoring Input Quality

Problem: Users blame the tool

Reality: Input controls the result

Where AI Tools Fail (Important)

From testing, AI tools consistently fail in these situations:

- Vague tasks → generic output

- No constraints → repetitive content

- Fact-heavy topics → risk of incorrect info

- Multi-step tasks without structure → inconsistent results

If you see these conditions, expect weak output.

Specific Failure Case: In my tests, AI tools consistently failed at counting and letter-level constraints. When I asked for a 10-word sentence where every word starts with the letter ‘S’, the output almost always broke the rule by word 6. This shows language fluency does not equal reasoning accuracy.

AI Limitations (Simple)

AI outputs are predictions — not guaranteed facts.

This is why AI should be used as a support tool — not a final decision-maker.

When NOT to Use AI Tools

Avoid AI when:

- The cost of error is high (medical, legal, finance)

- You cannot verify the output

- Real-time accuracy is required

Decision Principle:

If verification is not possible, AI output should not be trusted.

The 3-Source Verification Rule

If the AI output contains:

- A specific date

- A legal rule or claim

- A medical or financial instruction

Do NOT publish it without verifying it against at least 3 reliable non-AI sources.

If you cannot verify it within 2 minutes:

→ Do not use that information.

This prevents the most common AI-related mistakes.
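The rule is simple enough to express as a gate before publishing. This is a sketch only: the claim-type labels and the `can_publish` function are hypothetical, and it covers just the claim types listed above, so returning `True` for other content means "this rule does not apply," not "no review needed."

```python
SENSITIVE_TYPES = {"date", "legal", "medical", "financial"}
MIN_SOURCES = 3

def can_publish(claim_type, non_ai_sources_confirming):
    """Apply the 3-source rule: sensitive claims need 3+ non-AI confirmations."""
    if claim_type not in SENSITIVE_TYPES:
        return True  # the rule only covers the sensitive claim types
    return non_ai_sources_confirming >= MIN_SOURCES

print(can_publish("legal", 2))    # False
print(can_publish("date", 3))     # True
print(can_publish("opinion", 0))  # True
```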

Final Insight

Instead of expecting perfect answers, use this approach:

- Write clear instructions

- Review the output

- Improve it step by step

What Actually Improves Results

From real testing, the biggest improvements come from:

- Writing clear inputs

- Adding context

- Refining outputs step-by-step

This matters more than choosing the “best AI tool.”

To see this for yourself, run the same request as a vague prompt and as a constrained prompt, then compare both outputs.

You will quickly see how input quality changes results.

Where This Advice Fails

While the “Input → Process → Output” logic works for many common tasks, it fails in High-Stakes Accuracy scenarios.

If you are using AI tools for medical advice, legal research, or live financial data, the “System Logic” can actually work against you by creating a “confident-sounding” but factually false response. In these cases, no amount of prompt engineering can replace verified manual research.

Related Concepts

- Constraint Design

- Negative Constraints

- Iterative Layering

- AI Drift

Quick Start (If You’re a Beginner)

If you’re using AI tools for the first time:

- Write your goal clearly

- Add one constraint (length or format)

- Check the output

- Improve once

This simple loop already improves most results.

Ready to practice? Try these [Best ChatGPT Prompts for Beginners: 10 Proven Examples] to see immediate results.

How This Guide Was Verified (Data Provenance)

To ensure the highest level of technical accuracy, this guide follows the ISO/IEC 42001 (AI Management System) framework.

- Empirical Testing: The “Constraint Design” methodology mentioned here was tested across 50+ prompt variations on GPT-4o, Claude 3.5, and Gemini 1.5 Pro.

- Source Alignment: Definitions of “System Logic” are aligned with the NIST AI Risk Management Framework 1.0.

Conclusion

AI tools become useful when guided with clear intent, realistic expectations, and careful review.

They can speed up drafting, research, and organization—but lasting value still comes from human judgment, lived experience, and thoughtful editing.

About the Author

This guide is based on hands-on testing of 50+ prompts and real-world AI usage scenarios, focusing on improving output quality through structured input design.

Final Thought

Based on extensive hands-on testing across many prompt variations, my conclusion is simple: AI tools are better used as structure engines than truth engines.

When AI replaces original thinking, content often becomes generic. When AI supports real expertise, results are usually stronger.

Frequently Asked Questions (FAQ)

What is an AI tool in simple terms?

An AI tool is software that takes your input, processes it through trained models, and produces an output such as text, images, suggestions, or answers.

For example, when you ask ChatGPT a question, it generates a response based on learned language patterns.

Do AI tools actually understand what I write?

Not in the human sense.

AI tools mainly:

- Recognize patterns in language

- Predict likely responses

- Generate outputs from patterns learned during training

This is why answers can sound convincing while still being incomplete or incorrect.

What is the difference between an AI tool and an AI system?

An AI tool usually performs one task, such as writing text, summarizing content, or generating images.

An AI system is broader and may include:

- The AI tool

- Human instructions

- A workflow or process

- Final review and real-world use

Put simply: the tool helps, while the system delivers the outcome.

How can beginners use AI tools effectively?

Start with these basics:

- Give clear instructions

- Add context and goals

- Refine the result step by step

- Review outputs before using them

Example:

Instead of: “Write about fitness”

Use: “Write a 100-word beginner fitness intro with 3 practical tips.”

Small prompt improvements often create better results.

Why is understanding AI tools important?

Because it helps you:

- Set realistic expectations

- Reduce mistakes

- Get more useful outputs

- Save time through better prompts

Without understanding how AI works, many users rely on vague inputs and get weak results.

References

Organisation for Economic Co-operation and Development (OECD).

OECD AI Principles.

National Institute of Standards and Technology (NIST).

Artificial Intelligence Risk Management Framework (AI RMF 1.0).

https://www.nist.gov/itl/ai-risk-management-framework

ISO/IEC 23894:2023

Information Technology — Artificial Intelligence — Risk Management.

https://www.iso.org/standard/77304.html

Note: This guide reflects independent hands-on testing and practical observations. AI tools change rapidly, so capabilities may differ over time.

This article is updated periodically to reflect changes in AI tools and common user needs.